Editor’s note: This post is part of our weekly In the NVIDIA Studio series, which celebrates featured artists, offers creative tips and tricks, and demonstrates how NVIDIA Studio technology improves creative workflows. We’re also deep diving on new GeForce RTX 40 Series GPU features, technologies and resources, and how they dramatically accelerate content creation.

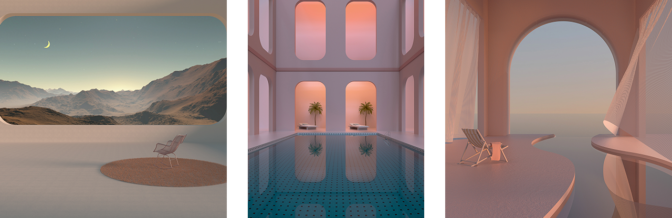

Jacob Norris is a 3D artist and the president, co-founder and creative director of Sierra Division Studios — an outsource studio specializing in digital 3D content creation. The studio was founded with a single goal in mind: to make groundbreaking artwork at the highest level.

His team is entirely remote, which gives employees the flexibility to work from anywhere in the world and widens the pool of prospective artists, with their varied experiences and skill sets, that the studio can draw from.

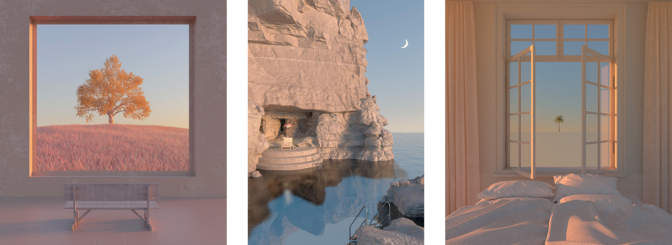

Norris envisions a future where incredible 3D content can be made regardless of location, time or even language, he said. It’s a future in which NVIDIA Omniverse, a platform for connecting and building custom 3D tools and metaverse applications, will play a critical role.

Omniverse is also a powerful tool for making SimReady assets — 3D objects with accurate physical properties. Combined with synthetic data, these assets can help solve real-world problems in simulation, including for AI-powered 3D artists. Learn more about AI and access creative resources to level up your passion projects on the NVIDIA Studio creative side hustle page.

Plus, check out the new community challenge, #StartToFinish. Use the hashtag to submit a screenshot of a favorite project featuring both its beginning and ending stages for a chance to be showcased on the @NVIDIAStudio and @NVIDIAOmniverse social channels.

Welcome to our new #StartToFinish challenge.

Join by showing us a photo/video of how one of your art projects started and then one of the final result + tag #StartToFinish.

Check out these great examples from @rafianimates made with #OpenUSD in @NVIDIAOmniverse.

pic.twitter.com/z9v656oQ2Q

— NVIDIA Studio (@NVIDIAStudio) July 10, 2023

Tapping Omniverse for Omnipresent Work

“Omniverse is an incredibly powerful tool for our team in the collaboration process,” said Norris. He noted that the Universal Scene Description format, aka OpenUSD, is key to achieving efficient content creation.

“We used OpenUSD to build a massive library of all the assets from our team,” Norris said. “We accomplished this by adding every mesh and element of a single model into a large, easily viewable overview scene for kitbashing, which is the process of combining elements from several assets into an entirely new model.”

“Since everything is shared in OpenUSD, our asset library is easily accessible and reduces the time needed to access materials and make edits on the fly,” Norris added. “This helps spur inspirational and imaginational forces.”
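As a rough illustration of the overview-scene idea Norris describes, the sketch below writes a small text-based USD layer (.usda) that references each asset file and spaces the references out on a grid so they can be browsed side by side. This is not Sierra Division's actual pipeline: the function names, file paths, grid size and spacing are all hypothetical, and it assumes each referenced asset file declares a default prim so that a bare file reference resolves.

```python
import re


def prim_name(asset_path: str, index: int) -> str:
    """Derive a valid USD prim name (letters, digits, underscores) from a file path."""
    stem = asset_path.replace("\\", "/").rsplit("/", 1)[-1].rsplit(".", 1)[0]
    name = re.sub(r"[^A-Za-z0-9_]", "_", stem)
    if not name or name[0].isdigit():
        name = "_" + name
    # Appending the index keeps names unique even if two files share a stem.
    return f"{name}_{index}"


def overview_layer(asset_paths, columns=4, spacing=200.0):
    """Return .usda text that references every asset and lays them out on a grid.

    Hypothetical helper: assumes each asset file has a defaultPrim.
    """
    lines = [
        "#usda 1.0",
        "(",
        '    defaultPrim = "Library"',
        ")",
        "",
        'def Xform "Library"',
        "{",
    ]
    for index, asset in enumerate(asset_paths):
        row, col = divmod(index, columns)
        x, z = col * spacing, row * spacing
        lines += [
            f'    def Xform "{prim_name(asset, index)}" (',
            f"        prepend references = @{asset}@",
            "    )",
            "    {",
            f"        double3 xformOp:translate = ({x}, 0, {z})",
            '        uniform token[] xformOpOrder = ["xformOp:translate"]',
            "    }",
        ]
    lines.append("}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical asset paths, relative to wherever the overview layer is saved.
    assets = ["./assets/pipe.usd", "./assets/rig-ladder.usd", "./assets/valve.usd"]
    print(overview_layer(assets, columns=2, spacing=100.0))
```

Because the overview layer only references the asset files rather than copying their geometry, edits saved to an individual asset show up the next time the overview scene is opened.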

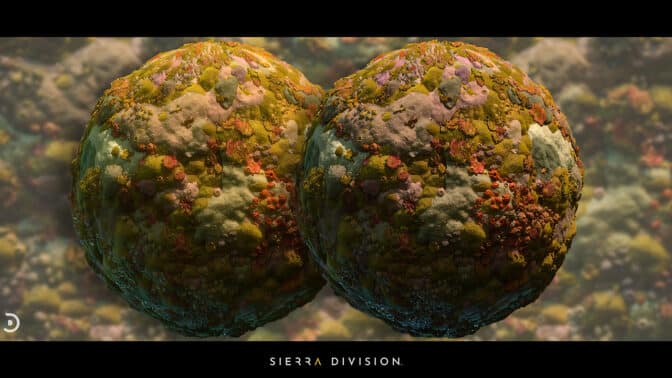

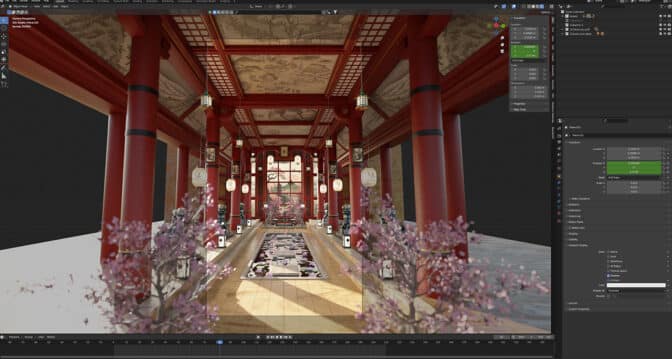

During the review phase, the team can compare photorealistic models with incredible visual fidelity side by side in a shared space, ensuring the models are “created to the highest set of standards,” said Norris.

The Last Oil Rig on Earth

Sierra Division’s The Oil Rig video is set on Earth’s last operational fossil fuel rig, which is visited by a playful drone named Quark. The piece’s storytelling takes the audience through an impeccably detailed environment.

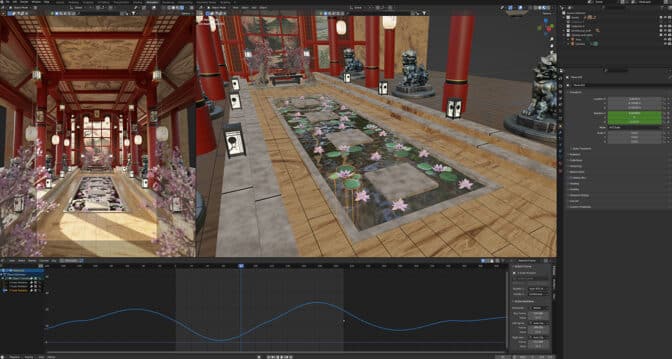

A scene as complex as the one above required blockouts in Unreal Engine. The team snapped models together from a set of greybox modular pieces, ensuring the environment bits were easy to work with. Once satisfied with the environment concept and layout, the team added further detail to the models.

Norris’ Lenovo ThinkPad P73 NVIDIA Studio laptop with NVIDIA RTX A5000 graphics powered NVIDIA DLSS technology, which increases the interactivity of the viewport by using AI to upscale frames rendered at lower resolution while retaining high-fidelity detail.

Sierra Division then created tiling textures, trim sheets and materials to apply to near-finalized models. The studio used Adobe Substance 3D Painter to design custom textures with edge wear and grunge, taking advantage of RTX-accelerated light and ambient occlusion for baking and optimizing assets in seconds.

Next, lighting scenarios were tested in the Omniverse USD Composer app with the Unreal Engine Connector, which eliminates the need to upload, download and refile formats, thanks to OpenUSD.

“With OpenUSD, it’s very easy to open the same file you’re viewing in the engine and quickly make edits without having to re-import,” said Norris.

Sierra Division analyzed daytime, nighttime, rainy and cloudy scenarios to see how the scene resonated emotionally, helping to decide the mood of the story they wanted to tell with their in-progress assets. They settled on a cloudy environment with well-placed lights to evoke feelings of mystery and intrigue.

“From story-building to asset and scene creation to final renders with RTX, AI and GPU-accelerated features helped us every step of the way.” — Jacob Norris

From here, the team added cameras to the scene to determine compositions for final renders.

“If we were to try to compose the entire environment without cameras or direction, it would take much longer, and we wouldn’t have perfectly laid-out camera shots nor specifically lit renders,” said Norris. “It’s just much easier and more fun to do it this way and to pick camera shots earlier on.”

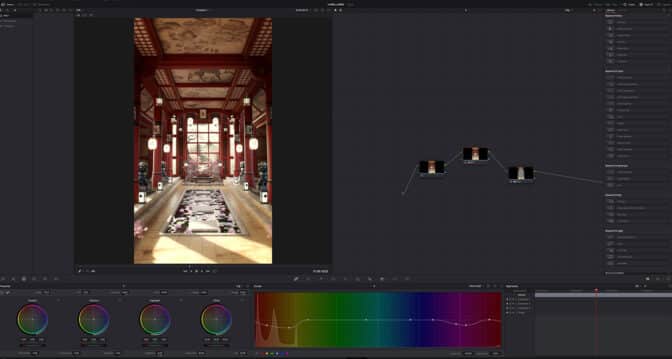

Final renders were exported lightning fast with Norris’ RTX A5000 GPU into Adobe Photoshop, where more than 30 GPU-accelerated features gave him plenty of options to play with colors and contrast and to make final image adjustments smoothly and quickly.

The Oil Rig modular set is available for purchase on Epic Games Unreal Marketplace. Sierra Division donates a portion of every sale to Ocean Conservancy — a nonprofit working to reduce trash, create sustainable fisheries and preserve wildlife.

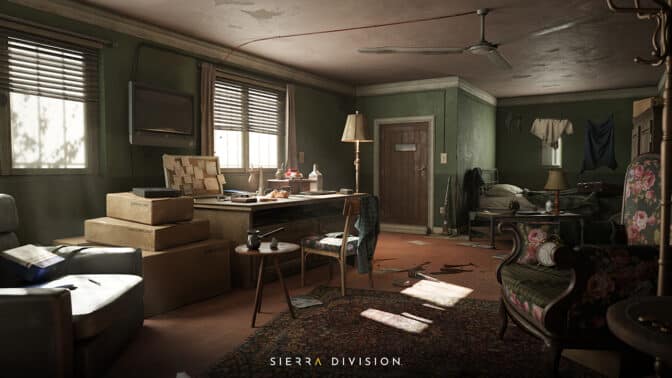

The Explorer’s Room

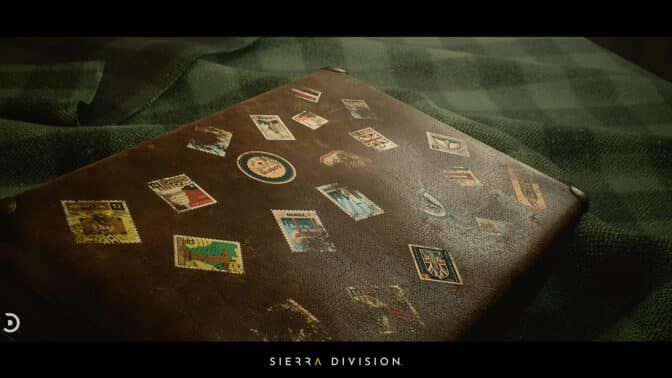

For another video, called “The Explorer’s Room,” Sierra Division collaborated with 3D artist Mostafa Sohbi. An environment originally created by Sohbi was a great starting point to expand on the idea of an “explorer” who collects artifacts, gets into precarious situations and uses tools to help him out.

Norris and Sierra Division’s creative workflow for this piece closely mirrored the team’s work on The Oil Rig.

“We all know Nathan Drake, Lara Croft, Indiana Jones and other adventurous characters,” said Norris. “They were big inspirations for us to create a living environment that tells our version of the story, while also allowing others to take the same assets and work on their own versions of the story, adding or changing elements in it.”

Norris stressed the importance of GPU technology in his creative workflow. “Having the fastest-performing GPU allowed us to focus more on the creative process and telling our story, instead of trying to work around slow technology or accommodating for poor performance with lower-quality artwork,” he said.

“We simply made what we thought was awesome, looked awesome and felt great to share with others,” Norris said. “So it was a no-brainer for us to use NVIDIA RTX GPUs.”

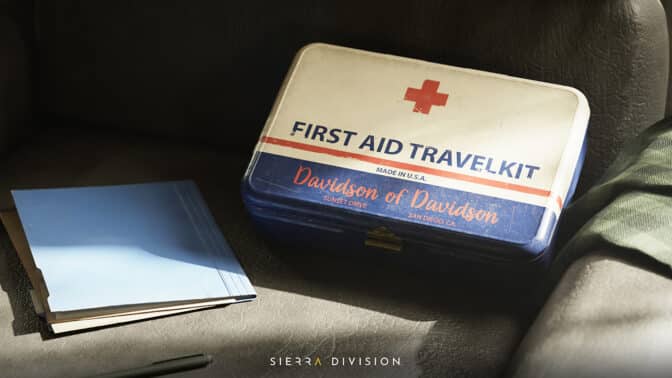

Money Heist

Norris said much of the content Sierra Division creates offers the opportunity for others to use the studio’s assets to tell their own stories. “We don’t always want to over impose our own ideas into a scene or an environment, but we do want to show what is possible,” he added.

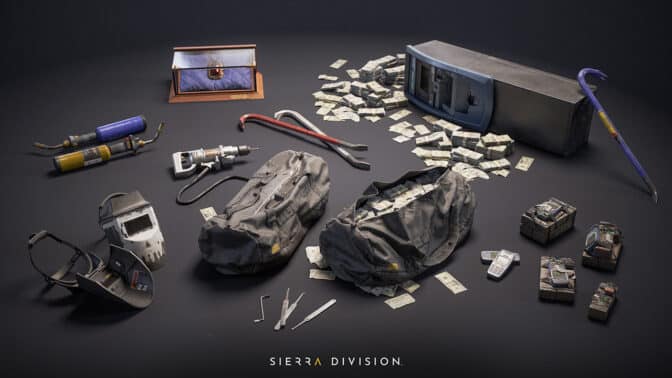

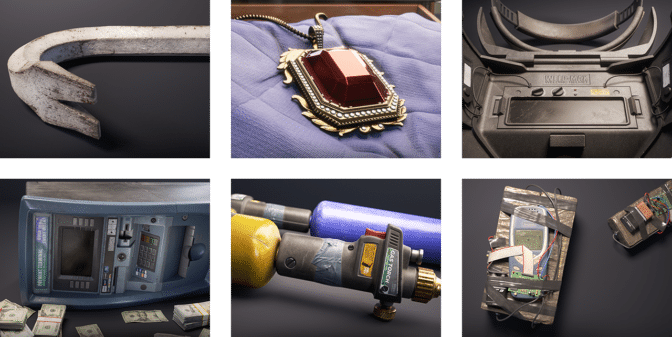

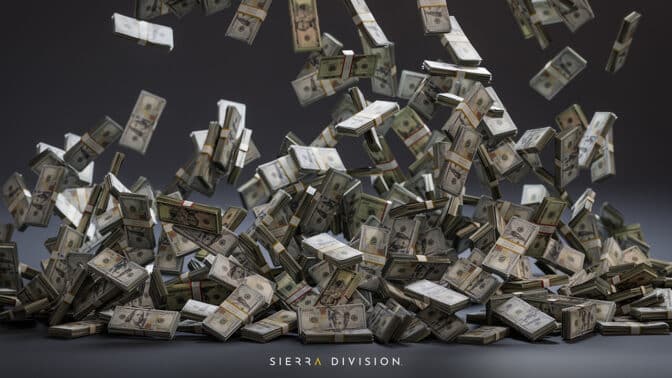

Sierra Division created the Heist Essentials and Tools Collection set of props to share with game developers, content creators and virtual production teams.

“It’s always a thrill to recreate props inspired by movies like Ocean’s Eleven and Mission: Impossible, and create assets someone might use during these types of missions and sequences,” said Norris.

Try to spot all of the hidden treasures.

Check out Sierra Division on the studio’s website, ArtStation, Twitter and Instagram.

Follow NVIDIA Studio on Instagram, Twitter and Facebook. Access tutorials on the Studio YouTube channel and get updates directly in your inbox by subscribing to the Studio newsletter.

Get started with NVIDIA Omniverse by downloading the standard license free, or learn how Omniverse Enterprise can connect your team. Developers can get started with Omniverse resources. Stay up to date on the platform by subscribing to the newsletter, and follow NVIDIA Omniverse on Instagram, Medium and Twitter. For more, join the Omniverse community and check out the Omniverse forums, Discord server, Twitch and YouTube channels.
