Cloud computing is powering a new age of data and AI by democratizing access to scalable compute, storage, and networking infrastructure and services. Thanks to the cloud, organizations can now collect data at an unprecedented scale and use it to train complex models and generate insights.

While this increasing demand for data has unlocked new possibilities, it also raises concerns about privacy and security, especially in regulated industries such as government, finance, and healthcare. One area where data privacy is crucial is patient records, which are used to train models to aid clinicians in diagnosis. Another example is in banking, where models that evaluate borrower creditworthiness are built from increasingly rich datasets, such as bank statements, tax returns, and even social media profiles. This data contains very personal information, and to ensure that it’s kept private, governments and regulatory bodies are implementing strong privacy laws and regulations to govern the use and sharing of data for AI, such as the General Data Protection Regulation (GDPR) and the proposed EU AI Act. You can learn more about some of the industries where it’s imperative to protect sensitive data in this Microsoft Azure Blog post.

Commitment to a confidential cloud

Microsoft recognizes that trustworthy AI requires a trustworthy cloud—one in which security, privacy, and transparency are built into its core. A key component of this vision is confidential computing—a set of hardware and software capabilities that give data owners technical and verifiable control over how their data is shared and used. Confidential computing relies on a new hardware abstraction called trusted execution environments (TEEs). In TEEs, data remains encrypted not just at rest or during transit, but also during use. TEEs also support remote attestation, which enables data owners to remotely verify the configuration of the hardware and firmware supporting a TEE and grant specific algorithms access to their data.

At Microsoft, we are committed to providing a confidential cloud, where confidential computing is the default for all cloud services. Today, Azure offers a rich confidential computing platform comprising different kinds of confidential computing hardware (Intel SGX, AMD SEV-SNP), core confidential computing services like Azure Attestation and Azure Key Vault managed HSM, and application-level services such as Azure SQL Always Encrypted, Azure confidential ledger, and confidential containers on Azure. However, these offerings are limited to using CPUs. This poses a challenge for AI workloads, which rely heavily on AI accelerators like GPUs to provide the performance needed to process large amounts of data and train complex models.

The Confidential Computing group at Microsoft Research identified this problem and defined a vision for confidential AI powered by confidential GPUs, proposed in two papers, “Oblivious Multi-Party Machine Learning on Trusted Processors” and “Graviton: Trusted Execution Environments on GPUs.” In this post, we share this vision. We also take a deep dive into the NVIDIA GPU technology that’s helping us realize this vision, and we discuss the collaboration among NVIDIA, Microsoft Research, and Azure that enabled NVIDIA GPUs to become a part of the Azure confidential computing ecosystem.

Vision for confidential GPUs

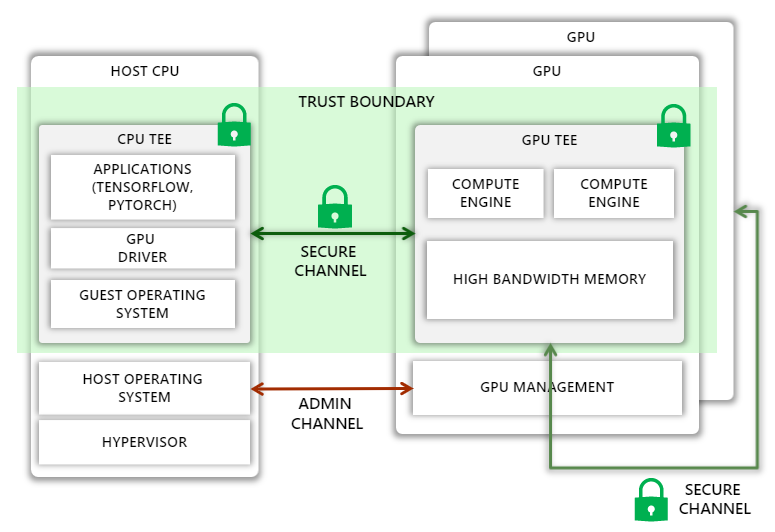

Today, CPUs from companies like Intel and AMD allow the creation of TEEs, which can isolate a process or an entire guest virtual machine (VM), effectively eliminating the host operating system and the hypervisor from the trust boundary. Our vision is to extend this trust boundary to GPUs, allowing code running in the CPU TEE to securely offload computation and data to GPUs.

Unfortunately, extending the trust boundary is not straightforward. On the one hand, we must protect against a variety of attacks. These include man-in-the-middle attacks, where the attacker can observe or tamper with traffic on the PCIe bus or on an NVIDIA NVLink connecting multiple GPUs, and impersonation attacks, where the host assigns to the guest VM an incorrectly configured GPU, a GPU running outdated or malicious firmware, or a GPU without confidential computing support. At the same time, we must ensure that the Azure host operating system has enough control over the GPU to perform administrative tasks. Furthermore, the added protection must not introduce large performance overheads, increase thermal design power, or require significant changes to the GPU microarchitecture.

Our research shows that this vision can be realized by extending the GPU with the following capabilities:

- A new mode where all sensitive state on the GPU, including GPU memory, is isolated from the host

- A hardware root-of-trust on the GPU chip that can generate verifiable attestations capturing all security sensitive state of the GPU, including all firmware and microcode

- Extensions to the GPU driver to verify GPU attestations, set up a secure communication channel with the GPU, and transparently encrypt all communications between the CPU and GPU

- Hardware support to transparently encrypt all GPU-GPU communications over NVLink

- Support in the guest operating system and hypervisor to securely attach GPUs to a CPU TEE, even if the contents of the CPU TEE are encrypted

Confidential computing with NVIDIA A100 Tensor Core GPUs

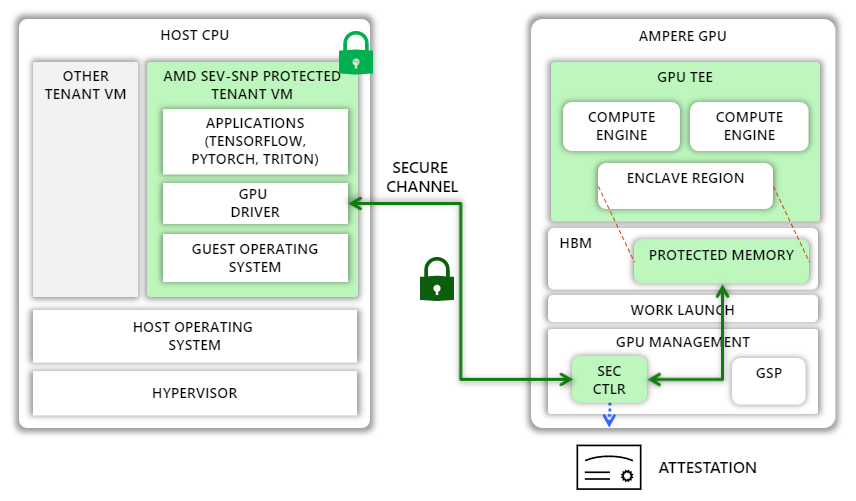

NVIDIA and Azure have taken a significant step toward realizing this vision with a new feature called Ampere Protected Memory (APM) in the NVIDIA A100 Tensor Core GPUs. In this section, we describe how APM supports confidential computing within the A100 GPU to achieve end-to-end data confidentiality.

APM introduces a new confidential mode of execution in the A100 GPU. When the GPU is initialized in this mode, the GPU designates a region in high-bandwidth memory (HBM) as protected and helps prevent leaks through memory-mapped I/O (MMIO) access into this region from the host and peer GPUs. Only authenticated and encrypted traffic is permitted to and from the region.

In confidential mode, the GPU can be paired with any external entity, such as a TEE on the host CPU. To enable this pairing, the GPU includes a hardware root-of-trust (HRoT). NVIDIA provisions the HRoT with a unique identity and a corresponding certificate created during manufacturing. The HRoT also implements authenticated and measured boot by measuring the firmware of the GPU as well as that of other microcontrollers on the GPU, including a security microcontroller called SEC2. SEC2, in turn, can generate attestation reports that include these measurements and that are signed by a fresh attestation key, which is endorsed by the unique device key. Any external entity can use these reports to verify that the GPU is in confidential mode and running known good firmware.
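
The measure-then-verify idea can be sketched in a few lines of Python. This is a minimal illustration, not a description of the A100 implementation: the hash-extend scheme is one common way to build a measured-boot chain, and the firmware names, the `report` dictionary layout, and the helper functions are all hypothetical. The signature check on the report (attestation key endorsed by the device key) is deliberately left out, because it needs an asymmetric signature library.

```python
import hashlib


def extend(register: bytes, image: bytes) -> bytes:
    """Fold one firmware image into the running measurement (hash-extend)."""
    return hashlib.sha256(register + hashlib.sha256(image).digest()).digest()


def measure_boot(images: list[bytes]) -> bytes:
    """Measure firmware images in boot order, starting from an all-zero register."""
    register = bytes(32)
    for image in images:
        register = extend(register, image)
    return register


def verify_report(report: dict, known_good: set[bytes]) -> bool:
    """Verifier side: accept only confidential mode plus a known good measurement.

    A real verifier would first check the report signature and the
    certificate chain back to the device identity; that step is omitted here.
    """
    return bool(report["confidential_mode"]) and report["measurement"] in known_good


# Hypothetical firmware images standing in for GPU and SEC2 firmware.
reference = measure_boot([b"gpu-firmware-v2", b"sec2-firmware-v2"])
report = {"confidential_mode": True, "measurement": reference}
accepted = verify_report(report, {reference})
```

Because each step hashes the previous register value, changing any image or the order in which images are measured produces a different final measurement, so a verifier holding only the reference value can detect the change.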

When the NVIDIA GPU driver in the CPU TEE loads, it checks whether the GPU is in confidential mode. If so, the driver requests an attestation report and checks that the GPU is a genuine NVIDIA GPU running known good firmware. Once this is confirmed, the driver establishes a secure channel with the SEC2 microcontroller on the GPU. The channel is set up with a Diffie-Hellman-based key exchange protocol backed by the Security Protocol and Data Model (SPDM), which produces a fresh session key. When that exchange completes, both the GPU driver and SEC2 hold the same symmetric session key.
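
To show why both sides end up with the same key, here is a toy Diffie-Hellman exchange in Python. It is a sketch under loud assumptions: the group parameters `P` and `G` are illustrative and far too small for real use, the key derivation is a bare SHA-256 rather than the key schedule SPDM defines, and the authentication of each side's public value that a real protocol requires is omitted. The function names are hypothetical.

```python
import hashlib
import secrets

# Toy group for illustration only: 2**127 - 1 is prime, but a 127-bit
# modulus offers no real security. Real deployments use standardized groups
# or elliptic curves.
P = 2**127 - 1
G = 3


def keypair() -> tuple[int, int]:
    """Return a fresh (private, public) pair; fresh pairs give fresh session keys."""
    private = secrets.randbelow(P - 3) + 2  # uniform in [2, P - 2]
    return private, pow(G, private, P)


def session_key(private: int, peer_public: int) -> bytes:
    """Derive a 32-byte symmetric key from the shared Diffie-Hellman secret."""
    shared = pow(peer_public, private, P)
    return hashlib.sha256(shared.to_bytes(16, "big")).digest()


# The driver (in the CPU TEE) and SEC2 (on the GPU) exchange public values only.
driver_private, driver_public = keypair()
sec2_private, sec2_public = keypair()

driver_key = session_key(driver_private, sec2_public)
sec2_key = session_key(sec2_private, driver_public)
```

Only the public values cross the untrusted PCIe bus. Each side combines its own private value with the other's public value, and both computations reduce to the same number, so `driver_key` and `sec2_key` match without the key itself ever being transmitted.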

The GPU driver uses the shared session key to encrypt all subsequent data transfers to and from the GPU. Because pages allocated to the CPU TEE are encrypted in memory and not readable by the GPU DMA engines, the GPU driver allocates pages outside the CPU TEE and writes encrypted data to those pages. On the GPU side, the SEC2 microcontroller is responsible for decrypting the encrypted data transferred from the CPU and copying it to the protected region. Once the data is in cleartext in the protected region of HBM, the GPU kernels can freely use it for computation.
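
The staging pattern described above (encrypt inside the TEE, write ciphertext to shared pages, decrypt on the GPU side) can be sketched as follows. This is an illustration only. The post does not name the cipher, so the code swaps in a toy encrypt-then-MAC construction built from SHA-256 and HMAC, because the Python standard library has no block cipher; it is a stand-in for a real authenticated cipher and must not be used in production. The `bounce_buffer` and all function names are hypothetical.

```python
import hashlib
import hmac
import secrets


def _subkey(key: bytes, label: bytes) -> bytes:
    """Derive separate encryption and MAC keys from the session key."""
    return hashlib.sha256(key + label).digest()


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy counter-mode keystream from SHA-256 (stand-in for a real cipher)."""
    blocks = [
        hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        for counter in range((length + 31) // 32)
    ]
    return b"".join(blocks)[:length]


def seal(key: bytes, plaintext: bytes) -> bytes:
    """Encrypt, then authenticate: returns nonce || ciphertext || tag."""
    nonce = secrets.token_bytes(12)
    stream = _keystream(_subkey(key, b"enc"), nonce, len(plaintext))
    ciphertext = bytes(a ^ b for a, b in zip(plaintext, stream))
    tag = hmac.new(_subkey(key, b"mac"), nonce + ciphertext, hashlib.sha256).digest()
    return nonce + ciphertext + tag


def open_sealed(key: bytes, blob: bytes) -> bytes:
    """Check the tag first, then decrypt; raises ValueError on tampering."""
    nonce, ciphertext, tag = blob[:12], blob[12:-32], blob[-32:]
    expected = hmac.new(_subkey(key, b"mac"), nonce + ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("authentication failed")
    stream = _keystream(_subkey(key, b"enc"), nonce, len(ciphertext))
    return bytes(a ^ b for a, b in zip(ciphertext, stream))


def transfer_to_gpu(key: bytes, data: bytes, bounce_buffer: bytearray) -> int:
    """Driver side: only ciphertext is written to pages outside the CPU TEE."""
    blob = seal(key, data)
    bounce_buffer[: len(blob)] = blob
    return len(blob)


def copy_into_protected_region(key: bytes, bounce_buffer: bytearray, length: int) -> bytes:
    """GPU side (the role SEC2 plays): authenticate, decrypt, return cleartext."""
    return open_sealed(key, bytes(bounce_buffer[:length]))
```

In this sketch the host can read or modify `bounce_buffer` at will, but it sees only ciphertext, and any modification causes `copy_into_protected_region` to raise instead of handing corrupted data to the GPU.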

Accelerating innovation with confidential AI

The implementation of APM is an important milestone toward achieving broader adoption of confidential AI in the cloud and beyond. APM is the foundational building block of Azure Confidential GPU VMs, now in private preview. These VMs, designed in collaboration with NVIDIA, Azure, and Microsoft Research, feature up to four A100 GPUs with 80 GB of HBM and APM technology and enable users to host AI workloads on Azure with a new level of security.

But this is just the beginning. We look forward to taking our collaboration with NVIDIA to the next level with NVIDIA’s Hopper architecture, which will enable customers to protect both the confidentiality and integrity of data and AI models in use. We believe that confidential GPUs can enable a confidential AI platform where multiple organizations can collaborate to train and deploy AI models by pooling together sensitive datasets while remaining in full control of their data and models. Such a platform can unlock the value of large amounts of data while preserving data privacy, giving organizations the opportunity to drive innovation.

A real-world example involves Bosch Research, the research and advanced engineering division of Bosch, which is developing an AI pipeline to train models for autonomous driving. Much of the data it uses includes personally identifiable information (PII), such as license plate numbers and people’s faces. At the same time, it must comply with GDPR, which requires a legal basis for processing PII, namely, consent from data subjects or legitimate interest. The former is challenging because it is practically impossible to get consent from pedestrians and drivers recorded by test cars. Relying on legitimate interest is challenging too because, among other things, it requires showing that there is no less privacy-intrusive way of achieving the same result. This is where confidential AI shines: Using confidential computing can help reduce risks for data subjects and data controllers by limiting exposure of data (for example, to specific algorithms), while enabling organizations to train more accurate models.

At Microsoft Research, we are committed to working with the confidential computing ecosystem, including collaborators like NVIDIA and Bosch Research, to further strengthen security, enable seamless training and deployment of confidential AI models, and help power the next generation of technology.

About confidential computing at Microsoft Research

The Confidential Computing team at Microsoft Research Cambridge conducts pioneering research in system design that aims to guarantee strong security and privacy properties to cloud users. We tackle problems around secure hardware design, cryptographic and security protocols, side channel resilience, and memory safety. We are also interested in new technologies and applications that security and privacy can uncover, such as blockchains and multiparty machine learning. Please visit our careers page to learn about opportunities for both researchers and engineers. We’re hiring.

The post Powering the next generation of trustworthy AI in a confidential cloud using NVIDIA GPUs appeared first on Microsoft Research.