Posted by Daniel J. Mankowitz, Research Scientist, DeepMind and Gabriel Dulac-Arnold, Research Scientist, Google Research

Reinforcement Learning (RL) has proven to be effective in solving numerous complex problems ranging from Go, StarCraft and Minecraft to robot locomotion and chip design. In each of these cases, a simulator is available or the real environment is quick and inexpensive to access. Yet, there are still considerable challenges to deploying RL to real-world products and systems. For example, in physical control systems, such as robotics and autonomous driving, RL controllers are trained to solve tasks like grasping objects or driving on a highway. These controllers are susceptible to effects such as sensor noise, system delays, or normal wear-and-tear that can reduce the quality of input to the controller, leading to incorrect decision-making and potentially catastrophic failures.

|

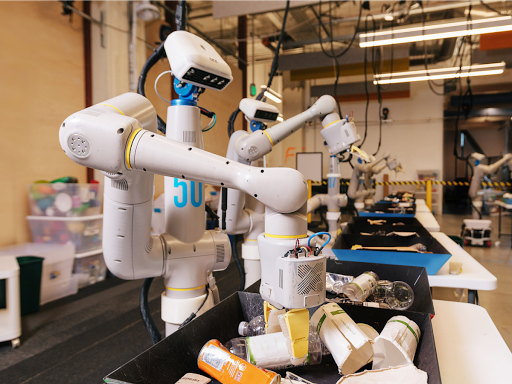

| A physical control system: Robots learning how to grasp and sort objects using RL at the Everyday Robot Project at X. These types of systems are subject to many of the real-world challenges detailed here. |

In “Challenges of Real-World Reinforcement Learning”, we identify and discuss nine different challenges that hinder the application of current RL algorithms to applied systems. We then follow up this work with an empirical investigation in which we simulate versions of these challenges on state-of-the-art RL algorithms and benchmark the effects of each. We have open-sourced these simulated challenges in the Real-World RL (RWRL) task suite to help draw attention to these important issues, as well as accelerate research toward solving them.

The RWRL Suite

The RWRL suite is a set of simulated tasks inspired by applied reinforcement learning challenges, the goal of which is to enable fast algorithmic iterations for both researchers and practitioners, without having to run slow, expensive experiments on real systems. While there will be additional challenges transitioning from RL algorithms that were trained in simulation to real-world applications, this suite aims to close some of the more fundamental, algorithmic gaps. At present, RWRL supports a subset of the DeepMind Control Suite domains, but the goal is to broaden the suite to support an even more diverse domain set.

Easy-to-Use & Flexible

We designed the suite with two main goals in mind. (1) It should be easy to use — a user should be able to start running experiments within minutes of downloading the suite, simply by changing a few lines of code. (2) It should be flexible — a user should be able to incorporate any combination of challenges into the environment with very little effort.

A Delayed Action Example

To illustrate the ease of use of the RWRL suite, imagine a researcher or practitioner wants to implement action delays (i.e., temporal delays on actions being sent to the environment). To use the RWRL suite, simply import the rwrl module. Next, load an environment (e.g., cartpole) with the delay_spec argument. This optional argument is a dictionary that specifies which elements are delayed (actions, observations, or rewards) and the number of timesteps by which each is delayed (e.g., 20 timesteps). Once the environment is loaded, the effects of actions are automatically delayed without any other changes to the experiment. This makes it easy to test an RL algorithm with action delays in a range of different environments supported by the RWRL suite.
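The mechanism behind an action delay can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the RWRL implementation: `ToyEnv`, `ActionDelayWrapper`, and the `noop_action` parameter are hypothetical names introduced here, and the toy environment simply echoes back the action it receives so the delay is easy to see.

```python
from collections import deque


class ToyEnv:
    """Hypothetical stand-in environment: its state is the last action applied."""

    def __init__(self):
        self.state = 0.0

    def step(self, action):
        self.state = action
        return self.state


class ActionDelayWrapper:
    """Hypothetical wrapper that delays every action by `delay` timesteps."""

    def __init__(self, env, delay, noop_action=0.0):
        self.env = env
        # Pre-fill the FIFO buffer with no-op actions so that the first
        # `delay` steps have something to send to the environment.
        self.buffer = deque([noop_action] * delay)

    def step(self, action):
        # Queue the new action and apply the one issued `delay` steps ago.
        self.buffer.append(action)
        delayed_action = self.buffer.popleft()
        return self.env.step(delayed_action)


env = ActionDelayWrapper(ToyEnv(), delay=2)
observations = [env.step(a) for a in [1.0, 2.0, 3.0, 4.0]]
print(observations)  # the agent's actions only take effect two steps later
```

The agent code calling `step` does not change at all; only the environment construction does, which is the property the suite's `delay_spec` argument is described as providing.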

A high-level overview of the RWRL suite. Add a challenge (e.g., action delays) into the environment with a few lines of code, run a hyperparameter sweep and produce a graph shown on the right.

A user can combine different challenges or choose from a set of predefined benchmark challenges by simply adding additional arguments to the load function, all of which are specified in the open-source RWRL suite codebase.

Supported Challenges

The RWRL suite provides functionality to support experiments related to eight of the nine different challenges that make applying current RL algorithms on applied systems difficult: sample efficiency; system delays; high-dimensional state and action spaces; constraints; partial observability, stochasticity and non-stationarity; multiple objectives; real-time inference; and training from offline logs. RWRL excludes the explainability challenge, which is abstract and non-trivial to define. The supported experiments are non-exhaustive and provide researchers and practitioners with the ability to analyze the capabilities of their agent with respect to each challenge dimension. Examples of the supported challenges include:

- System Delays

Most real systems have delays in either sensing, actuation or reward feedback, all of which can be configured and applied to any task within the RWRL suite. The graphs below show the performance of a D4PG agent as actions (left), observations (middle) and rewards (right) are increasingly delayed.

The effect of increasing the action (left), observation (middle) and reward (right) delays respectively on a state-of-the-art RL agent in four MuJoCo domains. As can be seen in the graphs, a researcher or practitioner can quickly gain insights as to which type of delay affects their agent’s performance. These delays can also be combined to observe their joint effect.

- Constraints

Almost all applied systems have some form of constraints embedded into the overall objective, which is not common in most RL environments. The RWRL suite implements a series of constraints for each task, with varying difficulties, to facilitate research in constrained RL. An example of a complex local angular velocity constraint being violated is visualized in the video below.
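A constraint of this kind can be thought of as a boolean check evaluated at every timestep. The sketch below is only an illustration of that idea, not the suite's constraint code: the function names, the velocity values, and the limit of 1.0 are all assumptions made for the example.

```python
def angular_velocity_ok(angular_velocity, limit):
    """Returns True when the (hypothetical) angular velocity constraint holds."""
    return abs(angular_velocity) <= limit


def count_violations(trajectory, limit):
    """Counts the timesteps in a trajectory at which the constraint is violated."""
    return sum(not angular_velocity_ok(w, limit) for w in trajectory)


# Made-up angular velocities for one short episode.
velocities = [0.2, -0.5, 1.4, -2.0, 0.9]
violations = count_violations(velocities, limit=1.0)
print(violations)
```

Tracking the violation count alongside the return lets an agent be evaluated on both task performance and constraint satisfaction.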

An example of constraint violations for cartpole. The red screen indicates that a violation has occurred on localized angular velocity.

- Non-Stationarity

The user can introduce non-stationarity by perturbing environment parameters. These perturbations are in contrast to the pixel-level adversarial perturbations that have recently gained popularity in research on supervised deep learning. For example, in the human walker domain, the size of the head and friction of the ground can be modified throughout training to simulate changing conditions. A variety of schedulers are available in the RWRL suite (see our codebase for more details), along with multiple default parameter perturbations, which were carefully defined to handicap the learning capabilities of state-of-the-art learning algorithms.
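To make the idea of a perturbation scheduler concrete, here is a minimal sketch of one possible schedule: a parameter that drifts by a fixed step each episode and is clipped to a valid range. The name `drift_scheduler` and all numeric values are assumptions for illustration; the schedulers actually shipped with the suite are documented in its codebase and may behave differently.

```python
from itertools import islice


def drift_scheduler(start, step, low, high):
    """Yields one parameter value per episode, drifting by `step` within [low, high]."""
    value = start
    while True:
        yield value
        # Move the parameter and clip it so it stays physically plausible.
        value = min(high, max(low, value + step))


# Hypothetical example: ground friction decreases over the first few episodes.
friction_schedule = drift_scheduler(start=1.0, step=-0.25, low=0.5, high=1.0)
frictions = list(islice(friction_schedule, 5))
print(frictions)
```

At the start of each episode, the next value from the schedule would be written into the simulator before the agent acts, so the dynamics the agent faces change over the course of training.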

Non-stationary perturbations. The suite supports perturbing environment parameters across episodes such as changing head size (center) and contact friction (right).

- Training from Offline Log Data

In most applied systems, it is both slow and expensive to run experiments. There are often logs of data available from previous experiments that can be utilized to train a policy. However, it is often difficult to outperform the previous model in production due to the data being limited, of low variance, or of poor quality. To address this, we have generated offline datasets of the combined RWRL benchmark challenges, which we made available as part of a wider offline dataset release. More information can be found in this notebook.

Conclusion

Real systems rarely manifest only a single challenge, and we are excited to see how algorithms can deal with an environment in which there are multiple challenges combined with increasing levels of difficulty (‘Easy’, ‘Medium’ and ‘Hard’). We highly encourage the research community to try to solve these challenges, as we believe that solving them will facilitate more widespread applications of RL to products and real-world systems.

While the initial set of RWRL suite features and experiments provide a starting point for closing the gap between the current state of RL and the challenges of applied systems, there is still much work to do. The supported experiments are not exhaustive and we welcome new ideas from the wider community to better evaluate the capabilities of our RL agents. Our main goal with this suite is to highlight and encourage research on the core problems that limit the effectiveness of RL algorithms in applied products and systems and to accelerate progress towards enabling future RL applications.

Acknowledgements

We would like to thank our core contributor and co-author Nir Levine for his invaluable help. We would also like to thank our co-authors Jerry Li, Sven Gowal, Todd Hester and Cosmin Paduraru as well as Robert Dadashi, the ACME team, Dan A. Calian, Juliet Rothenberg and Timothy Mann for their contributions.