Amazon SageMaker Studio is the first fully integrated development environment (IDE) for machine learning (ML). SageMaker Studio lets data scientists spin up Studio notebooks to explore data, build models, launch Amazon SageMaker training jobs, and deploy hosted endpoints. Studio notebooks come with a set of pre-built images, which consist of the Amazon SageMaker Python SDK and the latest version of the IPython runtime or kernel. With this new feature, you can bring your own custom images to Amazon SageMaker notebooks. These images are then available to all users authenticated into the domain. In this post, we share how to bring a custom container image to SageMaker Studio notebooks.

Developers and data scientists may require custom images for several different use cases:

- Access to specific or latest versions of popular ML frameworks such as TensorFlow, MXNet, PyTorch, or others.

- Bring custom code or algorithms developed locally to Studio notebooks for rapid iteration and model training.

- Access data lakes or on-premises data stores via APIs, which requires admins to include the corresponding drivers within the image.

- Access to a backend runtime, also called kernel, other than IPython such as R, Julia, or others. You can also use the approach outlined in this post to install a custom kernel.

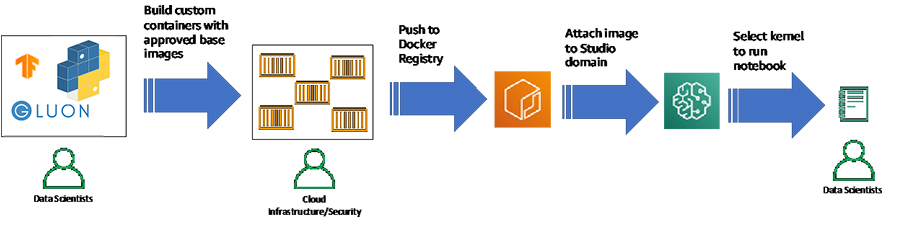

In large enterprises, ML platform administrators often need to ensure that any third-party packages and code are pre-approved by security teams for use, and not downloaded directly from the internet. A common workflow might be that the ML platform team approves a set of packages and frameworks for use, builds a custom container using these packages, tests the container for vulnerabilities, and pushes the approved image to a private container registry such as Amazon Elastic Container Registry (Amazon ECR). Now, ML platform teams can directly attach approved images to the Studio domain (see the following workflow diagram). Studio users can then select the approved custom image of their choice and work with it locally in their Studio notebook. With this release, a single Studio domain can contain up to 30 custom images, with the option to add a new version or delete images as needed.

We now walk through how you can bring a custom container image to SageMaker Studio notebooks using this feature. Although we demonstrate the default approach over the internet, we include details on how you can modify this to work in a private Amazon Virtual Private Cloud (Amazon VPC).

Prerequisites

Before getting started, you need to make sure you meet the following prerequisites:

- Have an AWS account.

- Ensure that the execution role you use to access Amazon SageMaker has the following AWS Identity and Access Management (IAM) permissions, which allow SageMaker Studio to create a repository in Amazon ECR with the prefix smstudio, and grant permissions to push and pull images from this repo. To use an existing repository, replace the Resource with the ARN of your repository. To build the container image, you can either use a local Docker client or create the image from SageMaker Studio directly, which we demonstrate here. To create a repository in Amazon ECR, SageMaker Studio uses AWS CodeBuild, and you also need to include the CodeBuild permissions shown below.

      {
          "Effect": "Allow",
          "Action": [
              "ecr:CreateRepository",
              "ecr:BatchGetImage",
              "ecr:CompleteLayerUpload",
              "ecr:DescribeImages",
              "ecr:DescribeRepositories",
              "ecr:UploadLayerPart",
              "ecr:ListImages",
              "ecr:InitiateLayerUpload",
              "ecr:BatchCheckLayerAvailability",
              "ecr:GetDownloadUrlForLayer",
              "ecr:PutImage"
          ],
          "Resource": "arn:aws:ecr:*:*:repository/smstudio*"
      },
      {
          "Effect": "Allow",
          "Action": "ecr:GetAuthorizationToken",
          "Resource": "*"
      },
      {
          "Effect": "Allow",
          "Action": [
              "codebuild:DeleteProject",
              "codebuild:CreateProject",
              "codebuild:BatchGetBuilds",
              "codebuild:StartBuild"
          ],
          "Resource": "arn:aws:codebuild:*:*:project/sagemaker-studio*"
      }

- Your SageMaker role should also have a trust policy with AWS CodeBuild as shown below. For more information, see Using the Amazon SageMaker Studio Image Build CLI to build container images from your Studio notebooks.

      {
          "Version": "2012-10-17",
          "Statement": [
              {
                  "Effect": "Allow",
                  "Principal": {
                      "Service": [
                          "codebuild.amazonaws.com"
                      ]
                  },
                  "Action": "sts:AssumeRole"
              }
          ]
      }

- Install the AWS Command Line Interface (AWS CLI) on your local machine. For instructions, see Installing the AWS.

- Have a SageMaker Studio domain. To create a domain, use the CreateDomain API or the create-domain CLI command.
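If you manage several repositories or want to keep the permissions under version control, it can help to generate the policy document from code. The following is a minimal sketch that reproduces the three statements listed in the prerequisites. The function name build_image_build_policy and its two prefix parameters are our own illustrative choices, not part of any AWS SDK.

```python
import json

# Actions copied from the permissions listed in the prerequisites.
ECR_ACTIONS = [
    "ecr:CreateRepository",
    "ecr:BatchGetImage",
    "ecr:CompleteLayerUpload",
    "ecr:DescribeImages",
    "ecr:DescribeRepositories",
    "ecr:UploadLayerPart",
    "ecr:ListImages",
    "ecr:InitiateLayerUpload",
    "ecr:BatchCheckLayerAvailability",
    "ecr:GetDownloadUrlForLayer",
    "ecr:PutImage",
]
CODEBUILD_ACTIONS = [
    "codebuild:DeleteProject",
    "codebuild:CreateProject",
    "codebuild:BatchGetBuilds",
    "codebuild:StartBuild",
]


def build_image_build_policy(repo_prefix="smstudio", project_prefix="sagemaker-studio"):
    """Return an IAM policy document (as a dict) with the statements from this post.

    repo_prefix and project_prefix are hypothetical parameters that let you
    narrow or change the resource ARNs; the defaults match the post.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ECR_ACTIONS,
                "Resource": f"arn:aws:ecr:*:*:repository/{repo_prefix}*",
            },
            {
                "Effect": "Allow",
                "Action": "ecr:GetAuthorizationToken",
                "Resource": "*",
            },
            {
                "Effect": "Allow",
                "Action": CODEBUILD_ACTIONS,
                "Resource": f"arn:aws:codebuild:*:*:project/{project_prefix}*",
            },
        ],
    }


if __name__ == "__main__":
    # Print the policy so it can be pasted into the IAM console or saved to a file.
    print(json.dumps(build_image_build_policy(), indent=2))
```

You can then attach the printed JSON to the execution role as an inline policy using whichever tool you normally use to manage IAM.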

If you wish to use your private VPC to securely bring your custom container, you also need the following:

- A VPC with a private subnet

- VPC endpoints for the following services:

- Amazon Simple Storage Service (Amazon S3)

- Amazon SageMaker

- Amazon ECR

- AWS Security Token Service (AWS STS)

- CodeBuild for building Docker containers

To set up these resources, see Securing Amazon SageMaker Studio connectivity using a private VPC and the associated GitHub repo.
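As a rough aid when scripting the VPC setup, the following sketch lists the interface and gateway endpoint service names that we would expect to need for the services above. The names follow the usual com.amazonaws.<region>.<service> convention, but the exact list (in particular the split of Amazon SageMaker and Amazon ECR into two endpoints each) is an assumption on our part; confirm the names available in your Region before creating anything.

```python
# Service suffixes are an assumption based on the common endpoint naming
# convention; verify them for your Region before use.
ENDPOINT_SUFFIXES = [
    "s3",                 # Amazon S3
    "sagemaker.api",      # Amazon SageMaker API
    "sagemaker.runtime",  # Amazon SageMaker runtime
    "ecr.api",            # Amazon ECR API
    "ecr.dkr",            # Amazon ECR Docker registry
    "sts",                # AWS STS
    "codebuild",          # AWS CodeBuild
]


def endpoint_service_names(region):
    """Return the expected VPC endpoint service names for a Region."""
    return [f"com.amazonaws.{region}.{suffix}" for suffix in ENDPOINT_SUFFIXES]


if __name__ == "__main__":
    for name in endpoint_service_names("us-east-2"):
        print(name)
```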

Creating your Dockerfile

To demonstrate the common need from data scientists to experiment with the newest frameworks, we use the following Dockerfile, which uses the latest TensorFlow 2.3 version as the base image. You can replace this Dockerfile with a Dockerfile of your choice. Currently, SageMaker Studio supports a number of base images, such as Ubuntu, Amazon Linux 2, and others. The Dockerfile installs the IPython runtime required to run Jupyter notebooks, and installs the Amazon SageMaker Python SDK and boto3.

In addition to notebooks, data scientists and ML engineers often iterate and experiment on their local laptops using various popular IDEs such as Visual Studio Code or PyCharm. You may wish to bring these scripts to the cloud for scalable training or data processing. You can include these scripts as part of your Docker container so they’re visible in your local storage in SageMaker Studio. In the following code, we copy the train.py script, which is a base script for training a simple deep learning model on the MNIST dataset. You may replace this script with your own scripts or packages containing your code.

    FROM tensorflow/tensorflow:2.3.0

    RUN apt-get update
    RUN apt-get install -y git

    RUN pip install --upgrade pip
    RUN pip install ipykernel && \
        python -m ipykernel install --sys-prefix && \
        pip install --quiet --no-cache-dir \
        'boto3>1.0,<2.0' \
        'sagemaker>2.0,<3.0'

    # Replace with your own custom scripts or packages
    COPY train.py /root/train.py

The train.py script contains the following code:

    import tensorflow as tf
    import os

    # Load MNIST and scale pixel values to the [0, 1] range
    mnist = tf.keras.datasets.mnist
    (x_train, y_train), (x_test, y_test) = mnist.load_data()
    x_train, x_test = x_train / 255.0, x_test / 255.0

    # A small fully connected network for 10-class digit classification
    model = tf.keras.models.Sequential([
        tf.keras.layers.Flatten(input_shape=(28, 28)),
        tf.keras.layers.Dense(128, activation='relu'),
        tf.keras.layers.Dropout(0.2),
        tf.keras.layers.Dense(10, activation='softmax')
    ])

    model.compile(optimizer='adam',
                  loss='sparse_categorical_crossentropy',
                  metrics=['accuracy'])

    # Train for one epoch, then evaluate on the held-out test set
    model.fit(x_train, y_train, epochs=1)
    model.evaluate(x_test, y_test)

Instead of a custom script, you can also include other files, such as Python files that access client secrets and environment variables via AWS Secrets Manager or AWS Systems Manager Parameter Store, config files to enable connections with private PyPI repositories, or other package management tools. Although you can copy the script using the custom image, any ENTRYPOINT or CMD commands in your Dockerfile don’t run.
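To make the Secrets Manager option concrete, here is a minimal sketch of a helper file you might copy into the image alongside train.py. It uses the boto3 Secrets Manager call get_secret_value. The helper name load_secret, the secret ID in the usage comment, and the assumption that the secret is stored as a JSON string are all ours, for illustration only.

```python
import json


def load_secret(secret_id, client=None):
    """Fetch a secret from AWS Secrets Manager and parse it as JSON.

    Assumes the secret value is a JSON string. Pass your own client
    (for example, one bound to a specific Region) or let the helper
    create a default one.
    """
    if client is None:
        # Imported lazily so the module can be loaded without boto3 installed.
        import boto3
        client = boto3.client("secretsmanager")
    response = client.get_secret_value(SecretId=secret_id)
    return json.loads(response["SecretString"])


# Example usage inside a Studio notebook (the secret ID is hypothetical):
# credentials = load_secret("my-team/database-credentials")
```

Keeping the lookup in a helper like this means the credentials themselves never need to be baked into the image.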

Setting up your installation folder

You need to create a folder on your local machine, and add the following files in that folder:

- The Dockerfile that you created in the previous step

- A file named app-image-config-input.json with the following content:

      {
          "AppImageConfigName": "custom-tf2",
          "KernelGatewayImageConfig": {
              "KernelSpecs": [
                  {
                      "Name": "python3",
                      "DisplayName": "Python 3"
                  }
              ],
              "FileSystemConfig": {
                  "MountPath": "/root/data",
                  "DefaultUid": 0,
                  "DefaultGid": 0
              }
          }
      }

We set the backend kernel for this Dockerfile as an IPython kernel, and provide a mount path to the Amazon Elastic File System (Amazon EFS). Amazon SageMaker recognizes kernels as defined by Jupyter. For example, for an R kernel, set Name in the preceding code to ir.

- A file named default-user-settings.json with the following content. If you’re adding multiple custom images, just add to the list of CustomImages.

      {
          "DefaultUserSettings": {
              "KernelGatewayAppSettings": {
                  "CustomImages": [
                      {
                          "ImageName": "tf2kernel",
                          "AppImageConfigName": "custom-tf2"
                      }
                  ]
              }
          }
      }
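The two JSON files must agree on the AppImageConfigName, which is easy to get wrong when editing them by hand. The following sketch generates both files from a single set of inputs. The helper names build_configs and write_configs are our own, and the default values simply mirror the ones used in this post.

```python
import json
from pathlib import Path


def build_configs(image_name, app_image_config_name, kernel_name="python3",
                  kernel_display_name="Python 3", mount_path="/root/data"):
    """Build the contents of both config files so the names always match."""
    app_image_config = {
        "AppImageConfigName": app_image_config_name,
        "KernelGatewayImageConfig": {
            "KernelSpecs": [
                {"Name": kernel_name, "DisplayName": kernel_display_name}
            ],
            "FileSystemConfig": {
                "MountPath": mount_path,
                "DefaultUid": 0,
                "DefaultGid": 0,
            },
        },
    }
    default_user_settings = {
        "DefaultUserSettings": {
            "KernelGatewayAppSettings": {
                "CustomImages": [
                    {
                        "ImageName": image_name,
                        "AppImageConfigName": app_image_config_name,
                    }
                ]
            }
        }
    }
    return app_image_config, default_user_settings


def write_configs(folder, app_image_config, default_user_settings):
    """Write the two files into the installation folder."""
    folder = Path(folder)
    folder.mkdir(parents=True, exist_ok=True)
    (folder / "app-image-config-input.json").write_text(
        json.dumps(app_image_config, indent=2))
    (folder / "default-user-settings.json").write_text(
        json.dumps(default_user_settings, indent=2))


if __name__ == "__main__":
    app_cfg, user_cfg = build_configs("tf2kernel", "custom-tf2")
    write_configs(".", app_cfg, user_cfg)
```

For an R kernel, you would pass kernel_name="ir" and a matching display name.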

Creating and attaching the image to your Studio domain

If you have an existing domain, you simply need to update the domain with the new image. In this section, we demonstrate how existing Studio users can attach images. For instructions on onboarding a new user, see Onboard to Amazon SageMaker Studio Using IAM.

First, we use the SageMaker Studio Docker build CLI to build the image from the Dockerfile and push it to Amazon ECR. You can also use other methods to push containers to Amazon ECR, such as your local Docker client and the AWS CLI.

- Log in to Studio using your user profile.

- Upload your Dockerfile and any other code or dependencies you wish to copy into your container to your Studio domain.

- Navigate to the folder containing the Dockerfile.

- In a terminal window or in a notebook, install the build CLI:

      !pip install sagemaker-studio-image-build

- Export a variable called IMAGE_NAME, and set it to the value you specified in the default-user-settings.json. Then build the image:

      sm-docker build . --repository smstudio-custom:IMAGE_NAME

- If you wish to use a different repository, replace smstudio-custom in the preceding code with your repo name.

SageMaker Studio builds the Docker image for you and pushes the image to Amazon ECR in a repository named smstudio-custom, tagged with the appropriate image name. To customize this further, such as providing a detailed file path or other options, see Using the Amazon SageMaker Studio Image Build CLI to build container images from your Studio notebooks. For the pip command above to work in a private VPC environment, you need a route to the internet or access to this package in your private repository.
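If you drive the build from a Python notebook cell rather than typing the command, you can assemble the sm-docker arguments programmatically. The sketch below only builds the command shown in this post; the helper name sm_docker_build_command is hypothetical, and the commented-out subprocess call assumes the sagemaker-studio-image-build package is already installed.

```python
import shlex


def sm_docker_build_command(image_name, repository="smstudio-custom", context="."):
    """Return the sm-docker build command as an argument list."""
    return ["sm-docker", "build", context, "--repository", f"{repository}:{image_name}"]


command = sm_docker_build_command("tf2kernel")
print(shlex.join(command))
# prints: sm-docker build . --repository smstudio-custom:tf2kernel

# To run the build from Python instead of the terminal:
# import subprocess
# subprocess.run(command, check=True)
```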

- In the installation folder from earlier, create a new file called create-and-update-image.sh:

      ACCOUNT_ID='AWS ACCT ID' # Replace with your AWS account ID
      REGION=us-east-2 # Replace with your Region
      DOMAINID=d-####### # Replace with your SageMaker Studio domain ID
      IMAGE_NAME=tf2kernel # Replace with your image name

      # Using with SageMaker Studio
      ## Create SageMaker Image with the image in ECR (modify image name as required)
      ROLE_ARN='The Execution Role ARN for the execution role you want to use'

      aws --region ${REGION} sagemaker create-image \
          --image-name ${IMAGE_NAME} \
          --role-arn ${ROLE_ARN}

      aws --region ${REGION} sagemaker create-image-version \
          --image-name ${IMAGE_NAME} \
          --base-image "${ACCOUNT_ID}.dkr.ecr.${REGION}.amazonaws.com/smstudio-custom:${IMAGE_NAME}"

      ## Create AppImageConfig for this image (modify AppImageConfigName and KernelSpecs in app-image-config-input.json as needed)
      aws --region ${REGION} sagemaker create-app-image-config \
          --cli-input-json file://app-image-config-input.json

      ## Update the Domain, providing the Image and AppImageConfig
      aws --region ${REGION} sagemaker update-domain \
          --domain-id ${DOMAINID} \
          --cli-input-json file://default-user-settings.json

Refer to the AWS CLI to read more about the arguments you can pass to the create-image API. To check the status, navigate to your Amazon SageMaker console and choose Amazon SageMaker Studio from the navigation pane.
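If you prefer Python to a shell script, the same four calls are available on the boto3 SageMaker client. The following is a minimal sketch under that assumption, not a replacement for the script. The function name register_custom_image is ours, and it expects the contents of the two JSON files from the installation folder to be passed in as dictionaries.

```python
def register_custom_image(sm, *, account_id, region, domain_id, image_name,
                          role_arn, app_image_config, default_user_settings,
                          repository="smstudio-custom"):
    """Create the image, its first version, the AppImageConfig, and update the domain.

    sm is a boto3 SageMaker client, for example boto3.client("sagemaker").
    app_image_config and default_user_settings are the parsed contents of
    app-image-config-input.json and default-user-settings.json.
    """
    base_image = f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{image_name}"

    # Same order as the shell script: image, image version, config, domain.
    sm.create_image(ImageName=image_name, RoleArn=role_arn)
    sm.create_image_version(ImageName=image_name, BaseImage=base_image)
    sm.create_app_image_config(**app_image_config)
    sm.update_domain(DomainId=domain_id, **default_user_settings)
    return base_image


# Example usage (all values are placeholders):
# import boto3, json
# sm = boto3.client("sagemaker", region_name="us-east-2")
# with open("app-image-config-input.json") as f:
#     app_cfg = json.load(f)
# with open("default-user-settings.json") as f:
#     user_cfg = json.load(f)
# register_custom_image(sm, account_id="<account ID>", region="us-east-2",
#                       domain_id="<domain ID>", image_name="tf2kernel",
#                       role_arn="<execution role ARN>",
#                       app_image_config=app_cfg, default_user_settings=user_cfg)
```

Passing the client in as an argument keeps the function easy to test with a stub and lets you choose the Region and credentials in one place.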

Attaching images using the Studio UI

You can perform the final step of attaching the image to the Studio domain via the UI. In this case, the UI will handle the setting up of the

- On the Amazon SageMaker console, choose Amazon SageMaker Studio.

On the Control Panel page, you can see that the Studio domain was provisioned, along with any user profiles that you created.

- Choose Attach image.

- Select whether you wish to attach a new or pre-existing image.

- If you select Existing image, choose an image from the Amazon SageMaker image store.

- If you select New image, provide the Amazon ECR registry path for your Docker image. The path needs to be in the same Region as the Studio domain. The Amazon ECR repo also needs to be in the same account as your Studio domain, or cross-account permissions for Studio need to be enabled.

- Choose Next.

- For Image name, enter a name.

- For Image display name, enter a descriptive name.

- For Description, enter a label definition.

- For IAM role, choose the IAM role required by Amazon SageMaker to attach Amazon ECR images to Amazon SageMaker images on your behalf.

- Additionally, you can tag your image.

- Choose Next.

- For Kernel name, enter Python 3.

- Choose Submit.

The green check box indicates that the image has been successfully attached to the domain.

The Amazon SageMaker image store automatically versions your images. You can select a pre-attached image and choose Detach to detach the image and all versions, or choose Attach image to attach a new version. There is no limit to the number of versions per image or the ability to detach images.
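To inspect the versions that the image store has created, you can also query the API. The sketch below uses the boto3 SageMaker call list_image_versions and returns each version number with its status. The helper name summarize_image_versions is our own, and for brevity it reads only the first page of results.

```python
def summarize_image_versions(sm, image_name):
    """Return (version, status) pairs for a SageMaker image.

    sm is a boto3 SageMaker client. Only the first page of results is
    read; follow NextToken if the image has many versions.
    """
    response = sm.list_image_versions(ImageName=image_name)
    return [
        (version["Version"], version["ImageVersionStatus"])
        for version in response["ImageVersions"]
    ]


# Example usage:
# import boto3
# sm = boto3.client("sagemaker")
# for number, status in summarize_image_versions(sm, "tf2kernel"):
#     print(number, status)
```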

User experience with a custom image

Let’s now jump into the user experience for a Studio user.

- Log in to Studio using your user profile.

- To launch a new activity, choose Launcher.

- For Select a SageMaker image to launch your activity, choose tf2kernel.

- Choose the Notebook icon to open a new notebook with the custom kernel.

The notebook kernel takes a couple of minutes to spin up, and then you’re ready to go!

Testing your custom container in the notebook

When the kernel is up and running, you can run code in the notebook. First, let’s test that the correct version of TensorFlow that was specified in the Dockerfile is available for use. In the following screenshot, we can see that the notebook is using the tf2kernel we just launched.
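A quick way to run this check yourself is a cell like the following sketch. The version_matches helper is our own small utility; it compares dotted version components so that, for example, 2.30 is not mistaken for 2.3.

```python
def version_matches(installed, expected):
    """Return True when installed starts with the dotted components of expected."""
    expected_parts = expected.split(".")
    return installed.split(".")[:len(expected_parts)] == expected_parts


if __name__ == "__main__":
    try:
        import tensorflow as tf
    except ImportError:
        print("TensorFlow is not installed in this environment")
    else:
        # In the custom image built from the Dockerfile, we expect a 2.3.x version.
        print(tf.__version__, version_matches(tf.__version__, "2.3"))
```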

Amazon SageMaker notebooks also display the local CPU and memory usage.

Next, let’s try out the custom training script directly in the notebook. Copy the training script into a notebook cell and run it. The script downloads the MNIST dataset using the tf.keras.datasets utility, which returns the data already split into training and test sets. It then defines a small deep neural network, trains it on the training data, and evaluates it on the test dataset.

To experiment with the TensorFlow 2.3 framework, you may wish to test out newly released APIs, such as the newer feature preprocessing utilities in Keras. In the following screenshot, we import the keras.layers.experimental library released with TensorFlow 2.3, which contains newer APIs for data preprocessing. We load one of these APIs and re-run the script in the notebook.

Amazon SageMaker also dynamically modifies the CPU and memory usage as the code runs. By bringing your custom container and training scripts, this feature allows you to experiment with custom training scripts and algorithms directly in the Amazon SageMaker notebook. When you’re satisfied with the experimentation in the Studio notebook, you can start a training job.

What about the Python files or custom files you included with the Dockerfile using the COPY command? SageMaker Studio mounts the elastic file system in the file path provided in the app-image-config-input.json, which we set to /root/data. To prevent Studio from overwriting any custom files you want to include, the COPY command places the train.py file in the path /root, outside the mount path. To access this file, open a terminal or notebook and run the code:

    ! cat /root/train.py

You should see an output as shown in the screenshot below.

The train.py file is in the specified location.
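The reason for copying to /root rather than /root/data is that anything placed under the mount path is hidden once the file system is mounted there. If you copy many files into your image, a small check like the following sketch can flag destinations that would be hidden. The helper name is_hidden_by_mount is hypothetical, and it only compares paths; it does not inspect the running container.

```python
from pathlib import PurePosixPath


def is_hidden_by_mount(file_path, mount_path):
    """Return True if file_path is the mount path itself or lies under it."""
    file_p = PurePosixPath(file_path)
    mount_p = PurePosixPath(mount_path)
    return file_p == mount_p or mount_p in file_p.parents


# The destination used in this post sits outside the mount path.
print(is_hidden_by_mount("/root/train.py", "/root/data"))       # False
# A file copied under the mount path would be hidden by the mount.
print(is_hidden_by_mount("/root/data/train.py", "/root/data"))  # True
```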

Logging in to CloudWatch

SageMaker Studio also publishes kernel metrics to Amazon CloudWatch, which you can use for troubleshooting. The metrics are captured under the /aws/sagemaker/studio namespace.

To access the logs, on the CloudWatch console, choose CloudWatch Logs. On the Log groups page, enter the namespace to see logs associated with the Jupyter server and the kernel gateway.
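You can also read these logs programmatically. The following sketch uses the boto3 CloudWatch Logs calls describe_log_streams and get_log_events to collect recent messages from the most recently active streams. The function name recent_log_messages and the limits are our own choices, and we assume the logs are stored in a log group named /aws/sagemaker/studio, matching the namespace above.

```python
LOG_GROUP = "/aws/sagemaker/studio"  # assumed log group name, from the namespace above


def recent_log_messages(logs, stream_limit=5, event_limit=20):
    """Return {stream name: [messages]} for the most recently active streams.

    logs is a boto3 CloudWatch Logs client, for example boto3.client("logs").
    """
    streams = logs.describe_log_streams(
        logGroupName=LOG_GROUP,
        orderBy="LastEventTime",
        descending=True,
        limit=stream_limit,
    )["logStreams"]

    messages = {}
    for stream in streams:
        name = stream["logStreamName"]
        events = logs.get_log_events(
            logGroupName=LOG_GROUP,
            logStreamName=name,
            limit=event_limit,
        )["events"]
        messages[name] = [event["message"] for event in events]
    return messages


# Example usage:
# import boto3
# for stream, lines in recent_log_messages(boto3.client("logs")).items():
#     print(stream, len(lines))
```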

Detaching an image or version

You can detach an image or an image version from the domain if it’s no longer supported.

To detach an image and all versions, select the image from the Custom images attached to domain table and choose Detach.

You have the option to also delete the image and all versions, which doesn’t affect the image in Amazon ECR.

To detach an image version, choose the image. On the Image details page, select the image version (or multiple versions) from the Image versions attached to domain table and choose Detach. You see a similar warning and options as in the preceding flow.
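If you attached the image with update-domain rather than through the UI, one way to detach it is to update the domain again with the image removed from the CustomImages list. The sketch below illustrates that idea using the boto3 calls describe_domain and update_domain. The helper names are ours, and we are assuming that submitting the shortened list is sufficient to detach the image; test this on a non-production domain first.

```python
def without_image(custom_images, image_name):
    """Return the CustomImages list with the named image removed."""
    return [image for image in custom_images if image["ImageName"] != image_name]


def detach_image(sm, domain_id, image_name):
    """Update the domain so that image_name is no longer in CustomImages.

    sm is a boto3 SageMaker client. This does not delete the SageMaker
    image or the container image in Amazon ECR.
    """
    domain = sm.describe_domain(DomainId=domain_id)
    current = (
        domain["DefaultUserSettings"]
        .get("KernelGatewayAppSettings", {})
        .get("CustomImages", [])
    )
    remaining = without_image(current, image_name)
    sm.update_domain(
        DomainId=domain_id,
        DefaultUserSettings={"KernelGatewayAppSettings": {"CustomImages": remaining}},
    )
    return remaining
```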

Conclusion

SageMaker Studio enables you to collaborate, experiment, train, and deploy ML models in a streamlined manner. To do so, data scientists often require access to the newest ML frameworks, custom scripts, and packages from public and private code repositories and package management tools. You can now create custom images containing all the relevant code, and launch these using Studio notebooks. These images will be available to all users in the Studio domain. You can also use this feature to experiment with other popular languages and runtimes besides Python, such as R, Julia, and Scala. The sample files are available on the GitHub repo. For more information about this feature, see Bring your own SageMaker image.

About the Authors

Stefan Natu is a Sr. Machine Learning Specialist at AWS. He is focused on helping financial services customers build end-to-end machine learning solutions on AWS. In his spare time, he enjoys reading machine learning blogs, playing the guitar, and exploring the food scene in New York City.


Jaipreet Singh is a Senior Software Engineer on the Amazon SageMaker Studio team. He has been working on Amazon SageMaker since its inception in 2017 and has contributed to various Project Jupyter open-source projects. In his spare time, he enjoys hiking and skiing in the Pacific Northwest.


Huong Nguyen is a Sr. Product Manager at AWS. She is leading the user experience for SageMaker Studio. She has 13 years’ experience creating customer-obsessed and data-driven products for both enterprise and consumer spaces. In her spare time, she enjoys reading, being in nature, and spending time with her family.
