Machine learning (ML) offers tremendous potential, from diagnosing cancer to engineering safe self-driving cars to amplifying human productivity. To realize this potential, however, organizations need ML solutions that are reliable and ML solution development that is predictable and tractable. The key to both is a deeper understanding of ML data: how to engineer training datasets that produce high quality models and test datasets that deliver accurate indicators of how close we are to solving the target problem.

The process of creating high quality datasets is complicated and error-prone, from the initial selection and cleaning of raw data, to labeling the data and splitting it into training and test sets. Some experts believe that the majority of the effort in designing an ML system is actually the sourcing and preparing of data. Each step can introduce issues and biases. Even many of the standard datasets we use today have been shown to have mislabeled data that can destabilize established ML benchmarks. Despite the fundamental importance of data to ML, it’s only now beginning to receive the same level of attention that models and learning algorithms have been enjoying for the past decade.

Towards this goal, we are introducing DataPerf, a set of new data-centric ML challenges to advance the state of the art in data selection, preparation, and acquisition technologies, designed and built through a broad collaboration across industry and academia. The initial version of DataPerf consists of four challenges focused on three common data-centric tasks across three application domains: vision, speech, and natural language processing (NLP). In this blog post, we outline dataset development bottlenecks confronting researchers and discuss the role of benchmarks and leaderboards in incentivizing researchers to address these challenges. We invite innovators in academia and industry who seek to measure and validate breakthroughs in data-centric ML to demonstrate the power of their algorithms and techniques to create and improve datasets through these benchmarks.

Data is the new bottleneck for ML

Data is the new code: it is the training data that determines the maximum possible quality of an ML solution. The model only determines the degree to which that maximum quality is realized; in a sense the model is a lossy compiler for the data. Though high-quality training datasets are vital to continued advancement in the field of ML, much of the data on which the field relies today is nearly a decade old (e.g., ImageNet or LibriSpeech) or scraped from the web with very limited filtering of content (e.g., LAION or The Pile).

Despite the importance of data, ML research to date has been dominated by a focus on models. Before modern deep neural networks (DNNs), there were no ML models sufficient to match human behavior for many simple tasks. This starting condition led to a model-centric paradigm in which (1) the training dataset and test dataset were “frozen” artifacts and the goal was to develop a better model, and (2) the test dataset was selected randomly from the same pool of data as the training set for statistical reasons. Unfortunately, freezing the datasets ignored the ability to improve training accuracy and efficiency with better data, and using test sets drawn from the same pool as training data conflated fitting that data well with actually solving the underlying problem.

Because we are now developing and deploying ML solutions for increasingly sophisticated tasks, we need to engineer test sets that fully capture real-world problems and training sets that, in combination with advanced models, deliver effective solutions. We need to shift from today’s model-centric paradigm to a data-centric paradigm in which we recognize that, for the majority of ML developers, creating high quality training and test data will be a bottleneck.

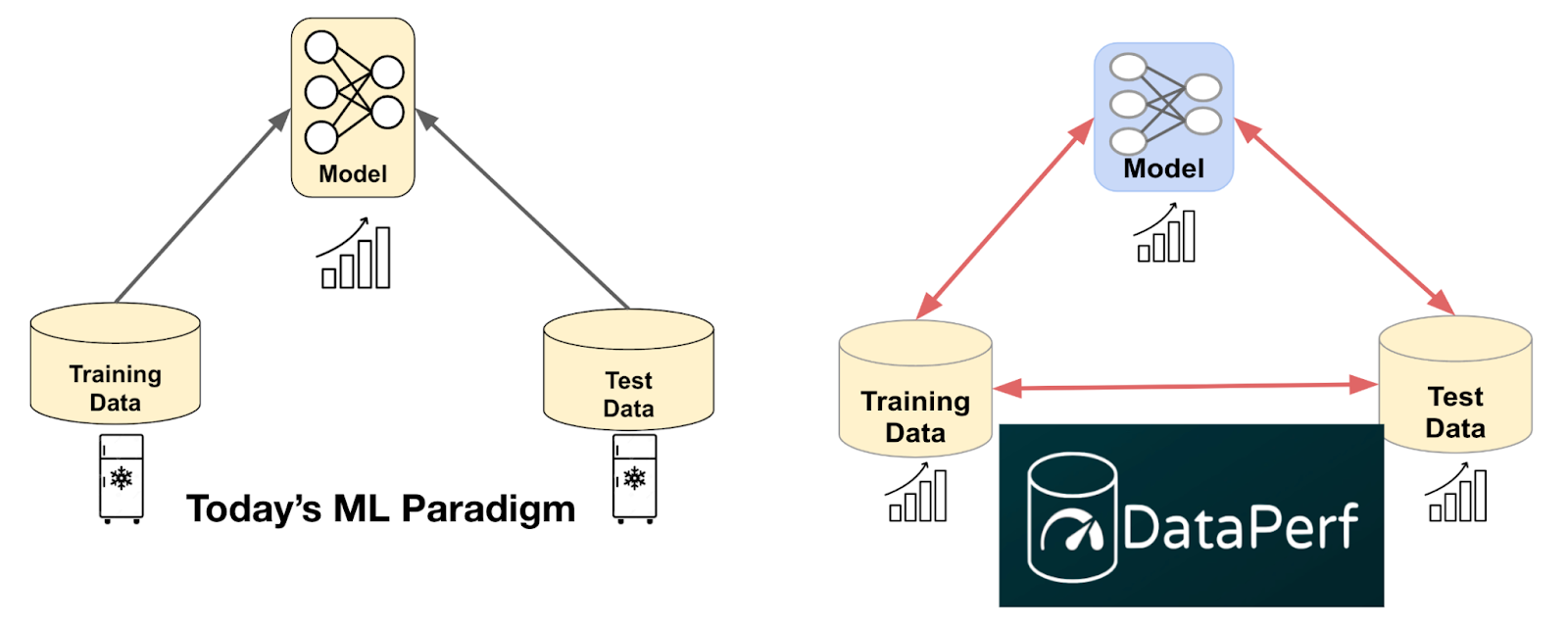

Shifting from today’s model-centric paradigm to a data-centric paradigm enabled by quality datasets and data-centric algorithms like those measured in DataPerf.

Enabling ML developers to create better training and test datasets will require a deeper understanding of ML data quality and the development of algorithms, tools, and methodologies for optimizing it. We can begin by recognizing common challenges in dataset creation and developing performance metrics for algorithms that address those challenges. For instance:

- Data selection: Often, we have a larger pool of available data than we can label or train on effectively. How do we choose the most important data for training our models?

- Data cleaning: Human labelers sometimes make mistakes. ML developers can’t afford to have experts check and correct all labels. How can we select the most likely-to-be-mislabeled data for correction?
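To make the data cleaning question concrete, here is a minimal sketch of one common heuristic: rank examples by how little probability a trained model assigns to the label the annotator gave, then send the lowest-ranked ones for review. This is an illustration under stated assumptions, not a method prescribed by DataPerf. The function name `rank_suspect_labels`, the `budget` parameter, and the toy probabilities are all hypothetical, and the predicted probabilities are assumed to come from a model that did not train on the examples being scored (for example, via cross-validation).

```python
def rank_suspect_labels(pred_probs, labels, budget):
    """Return indices of the `budget` examples whose given label the model trusts least.

    pred_probs: list of per-class probability lists, one per example
                (assumed to be out-of-sample predictions).
    labels:     list of integer class labels as provided by annotators.
    budget:     how many examples we can afford to send for relabeling.
    """
    # Self-confidence: the probability the model assigns to the annotator's label.
    self_confidence = [probs[label] for probs, label in zip(pred_probs, labels)]
    # Lowest self-confidence first: these are the most suspicious labels.
    order = sorted(range(len(labels)), key=lambda i: self_confidence[i])
    return order[:budget]


# Toy data (hypothetical): four examples, two classes.
pred_probs = [
    [0.95, 0.05],  # model agrees with label 0
    [0.10, 0.90],  # model strongly disagrees with label 0
    [0.20, 0.80],  # model agrees with label 1
    [0.55, 0.45],  # model mildly disagrees with label 1
]
labels = [0, 0, 1, 1]

suspects = rank_suspect_labels(pred_probs, labels, budget=2)
print(suspects)
```

The same ranking idea can be pointed at data selection instead: swap the scoring function for one that estimates how useful an example would be for training, and keep the top of the list rather than sending it for correction.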

We can also create incentives that reward good dataset engineering. We anticipate that high quality training data, which has been carefully selected and labeled, will become a valuable product in many industries, but we presently lack a way to assess the relative value of different datasets without actually training on the datasets in question. How do we solve this problem and enable quality-driven “data acquisition”?

DataPerf: The first leaderboard for data

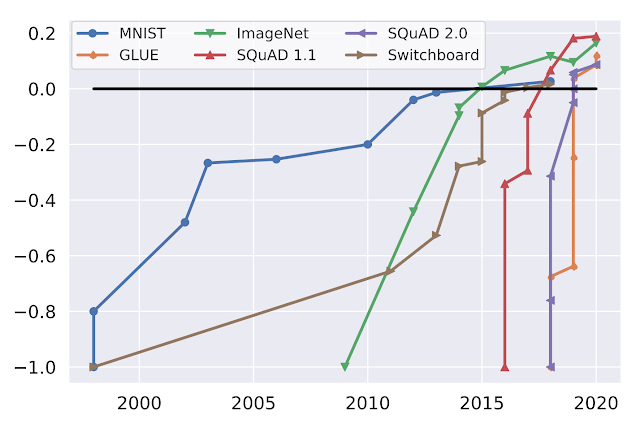

We believe good benchmarks and leaderboards can drive rapid progress in data-centric technology. ML benchmarks in academia have been essential to stimulating progress in the field. Consider the following graph which shows progress on popular ML benchmarks (MNIST, ImageNet, SQuAD, GLUE, Switchboard) over time:

|

| Performance over time for popular benchmarks, normalized with initial performance at minus one and human performance at zero. (Source: Douwe, et al. 2021; used with permission.) |
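The normalization described in the caption can be read as a simple linear rescaling. The sketch below assumes that reading; the function name `normalize_score` and the example numbers are hypothetical and are not taken from the cited figure.

```python
def normalize_score(score, initial, human):
    """Linearly rescale a benchmark score so that `initial` maps to -1 and `human` maps to 0.

    Works for metrics where higher is better (accuracy) and where lower is
    better (error rate), because the sign of (human - initial) flips with
    the direction of the metric.
    """
    if human == initial:
        raise ValueError("initial and human performance must differ")
    return (score - human) / (human - initial)


# Hypothetical accuracy-style benchmark: first result 60, human level 90.
halfway = normalize_score(75.0, initial=60.0, human=90.0)
print(halfway)

# Hypothetical error-rate benchmark: first result 20, human level 5.
surpassed = normalize_score(4.0, initial=20.0, human=5.0)
print(surpassed > 0)
```

On this scale, a value above zero means a model has passed the human reference point on that benchmark, regardless of whether the underlying metric counts up or down.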

Online leaderboards provide official validation of benchmark results and catalyze communities intent on optimizing those benchmarks. For instance, Kaggle has over 10 million registered users. The official MLPerf benchmark results have helped drive a more than 16x improvement in training performance on key benchmarks.

DataPerf is the first community and platform to build leaderboards for data benchmarks, and we hope to have an analogous impact on research and development for data-centric ML. The initial version of DataPerf consists of leaderboards for four challenges focused on three data-centric tasks (data selection, cleaning, and acquisition) across three application domains (vision, speech and NLP):

- Training data selection (Vision): Design a data selection strategy that chooses the best training set from a large candidate pool of weakly labeled training images.

- Training data selection (Speech): Design a data selection strategy that chooses the best training set from a large candidate pool of automatically extracted clips of spoken words.

- Training data cleaning (Vision): Design a data cleaning strategy that chooses samples to relabel from a “noisy” training set where some of the labels are incorrect.

- Training dataset evaluation (NLP): Quality datasets can be expensive to construct, and are becoming valuable commodities. Design a data acquisition strategy that chooses which training dataset to “buy” based on limited information about the data.
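As an illustration of what a submission to the selection challenges might look like in spirit, the sketch below implements greedy k-center selection, a generic coverage-based strategy that picks examples spread across feature space. It is not the DataPerf baseline or an official API. The function name `k_center_greedy`, the `first` parameter, and the toy feature vectors are hypothetical, and the features are assumed to be embeddings computed beforehand by some model.

```python
import math


def k_center_greedy(features, budget, first=0):
    """Greedily pick up to `budget` indices so every point lies near a selected one.

    features: list of equal-length numeric tuples (assumed precomputed embeddings).
    budget:   maximum number of examples to select.
    first:    index of the example used to seed the selection.
    """
    if budget <= 0 or not features:
        return []
    selected = [first]
    # Distance from each point to its nearest selected point so far.
    nearest = [math.dist(f, features[first]) for f in features]
    while len(selected) < min(budget, len(features)):
        # The point farthest from the current selection is the least covered.
        idx = max(range(len(features)), key=lambda i: nearest[i])
        selected.append(idx)
        # Update coverage distances with the newly selected point.
        nearest = [
            min(d, math.dist(f, features[idx])) for d, f in zip(nearest, features)
        ]
    return selected


# Toy candidate pool (hypothetical): two tight clusters and one outlier.
pool = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0), (5.1, 5.0), (0.0, 9.0)]
chosen = k_center_greedy(pool, budget=3)
print(chosen)
```

With a budget of three, the selection takes one point from each region of the pool rather than spending two picks on near-duplicates, which is the behavior a coverage-based strategy is meant to provide. Whether coverage is the right objective depends on the task; noisy or weakly labeled pools may favor strategies that also account for label quality.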

For each challenge, the DataPerf website provides design documents that define the problem, test model(s), quality target, rules, and guidelines on how to run the code and submit. The live leaderboards are hosted on the Dynabench platform, which also provides an online evaluation framework and submission tracker. Dynabench is an open-source project, hosted by the MLCommons Association, focused on enabling data-centric leaderboards for both training and test data as well as for data-centric algorithms.

How to get involved

We are part of a community of ML researchers, data scientists and engineers who strive to improve data quality. We invite innovators in academia and industry to measure and validate data-centric algorithms and techniques to create and improve datasets through the DataPerf benchmarks. The deadline for the first round of challenges is May 26th, 2023.

Acknowledgements

The DataPerf benchmarks were created over the last year by engineers and scientists from Coactive.ai, Eidgenössische Technische Hochschule (ETH) Zurich, Google, Harvard University, Meta, MLCommons, and Stanford University. In addition, this would not have been possible without the support of DataPerf working group members from Carnegie Mellon University, Digital Prism Advisors, Factored, Hugging Face, Institute for Human and Machine Cognition, Landing.ai, San Diego Supercomputer Center, Thomson Reuters Lab, and TU Eindhoven.