This is a guest post by Mario Namtao Shianti Larcher, Head of Computer Vision at Enel.

Enel, which started as Italy’s national entity for electricity, is today a multinational company present in 32 countries and the first private network operator in the world with 74 million users. It is also recognized as the first renewables player with 55.4 GW of installed capacity. In recent years, the company has invested heavily in the machine learning (ML) sector, developing strong in-house know-how that has enabled it to realize very ambitious projects such as automatic monitoring of its 2.3 million kilometers of distribution network.

Every year, Enel inspects its electricity distribution network with helicopters, cars, or other means; takes millions of photographs; and builds a 3D point cloud reconstruction of the network using LiDAR technology.

Examination of this data is critical for monitoring the state of the power grid, identifying infrastructure anomalies, and updating databases of installed assets, and it allows granular control of the infrastructure down to the material and status of the smallest insulator installed on a given pole. Given the amount of data (more than 40 million images each year just in Italy), the number of items to be identified, and their specificity, a completely manual analysis is very costly, both in terms of time and money, and error prone. Fortunately, thanks to enormous advances in the world of computer vision and deep learning and the maturity and democratization of these technologies, it’s possible to automate this expensive process partially or even completely.

Of course, the task remains very challenging, and, like all modern AI applications, it requires computing power and the ability to handle large volumes of data efficiently.

Enel built its own ML platform (internally called the ML factory) based on Amazon SageMaker. The platform is established as the standard solution to build and train models at Enel for different use cases across different digital hubs (business units), with tens of ML projects being developed on Amazon SageMaker Training, Amazon SageMaker Processing, and other AWS services like AWS Step Functions.

Enel collects imagery and data from two different sources:

- Aerial network inspections:

  - LiDAR point clouds – They have the advantage of being an extremely accurate and geo-localized 3D reconstruction of the infrastructure, and therefore are very useful for calculating distances or taking measurements with an accuracy not obtainable from 2D image analysis.

  - High-resolution images – These images of the infrastructure are taken within seconds of each other. This makes it possible to detect elements and anomalies that are too small to be identified in the point cloud.

- Satellite images – Although these can be more affordable than a power line inspection (some are available for free, others for a fee), their resolution and quality are often not on par with images taken directly by Enel. The characteristics of these images make them useful for certain tasks like evaluating forest density and macro-category or finding buildings.

In this post, we discuss the details of how Enel uses these data sources, and share how Enel automates its large-scale power grid asset management and anomaly detection process using SageMaker.

Analyzing high-resolution photographs to identify assets and anomalies

As with other unstructured data collected during inspections, the photographs are stored in Amazon Simple Storage Service (Amazon S3). Some of them are manually labeled to train different deep learning models for different computer vision tasks.

Conceptually, the processing and inference pipeline follows a hierarchical approach with multiple steps: first, the regions of interest in the image are identified and cropped; then assets are identified within each crop; and finally the assets are classified according to their material or the presence of anomalies. Because the same pole often appears in more than one image, it’s also necessary to group its images to avoid duplicates, an operation called reidentification.
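The hierarchy described above can be sketched in a few lines of Python. This is only an illustration of the control flow, not Enel's implementation: the three callables (`detect_rois`, `detect_elements`, `classify`) are hypothetical stand-ins for trained models, and the image is a toy nested list rather than a real tensor.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom) in pixels
Image = List[List[int]]          # toy stand-in for an image: rows of pixel values


@dataclass
class Element:
    box: Box    # element position inside the cropped region of interest
    label: str  # output of the final classifier (material or anomaly)


@dataclass
class RegionOfInterest:
    box: Box  # region position inside the full image
    elements: List[Element] = field(default_factory=list)


def crop(image: Image, box: Box) -> Image:
    """Return the sub-image covered by box."""
    left, top, right, bottom = box
    return [row[left:right] for row in image[top:bottom]]


def run_hierarchy(
    image: Image,
    detect_rois: Callable[[Image], List[Box]],
    detect_elements: Callable[[Image], List[Box]],
    classify: Callable[[Image], str],
) -> List[RegionOfInterest]:
    """Detect regions, crop them, detect elements in each crop, classify each element."""
    results = []
    for roi_box in detect_rois(image):
        roi_image = crop(image, roi_box)
        roi = RegionOfInterest(box=roi_box)
        for element_box in detect_elements(roi_image):
            element_image = crop(roi_image, element_box)
            roi.elements.append(Element(element_box, classify(element_image)))
        results.append(roi)
    return results


# Usage with stub "models" that return fixed answers (hypothetical values).
toy_image = [[0] * 8 for _ in range(8)]
rois = run_hierarchy(
    toy_image,
    detect_rois=lambda img: [(2, 0, 6, 8)],
    detect_elements=lambda roi_img: [(0, 0, 2, 2), (2, 4, 4, 6)],
    classify=lambda element_img: "insulator/glass",
)
print(rois)
```

Keeping each stage behind a plain callable means a detector or classifier can be swapped without touching the loop. Reidentification would run afterwards, over the regions collected from all images.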

For all these tasks, Enel uses the PyTorch framework and the latest architectures for image classification and object detection, such as EfficientNet and EfficientDet, as well as other architectures for the semantic segmentation of certain anomalies, such as oil leaks on transformers. For the reidentification task, when it can’t be done geometrically because camera parameters are missing, SimCLR-based self-supervised methods or Transformer-based architectures are used. It would be impossible to train all these models without access to a large number of instances equipped with high-performance GPUs, so all the models were trained in parallel using Amazon SageMaker Training jobs with GPU-accelerated ML instances. Inference has the same structure and is orchestrated by a Step Functions state machine that governs several SageMaker Processing and Training jobs, which, despite their names, are as usable for inference as for training.
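To make the orchestration idea concrete, here is a rough sketch of how a chain of SageMaker Processing jobs could be described in Amazon States Language, built as a Python dictionary. It is not Enel's actual state machine. The state names, job prefixes, role ARN, container image URI, instance type, and volume size are all placeholders chosen for illustration, and a real definition would also need inputs, outputs, and error handling.

```python
import json

# Placeholders for illustration only; replace with real resources.
ROLE_ARN = "arn:aws:iam::123456789012:role/ExampleSageMakerRole"
IMAGE_URI = "123456789012.dkr.ecr.eu-west-1.amazonaws.com/example-inference:latest"


def processing_state(job_prefix, instance_type, next_state=None):
    """Build one Task state that runs a SageMaker Processing job and waits for it."""
    state = {
        "Type": "Task",
        # The ".sync" suffix makes Step Functions wait until the job finishes.
        "Resource": "arn:aws:states:::sagemaker:createProcessingJob.sync",
        "Parameters": {
            # Job names must be unique, so derive them from the execution name
            # (assumes execution names are short and valid as job-name suffixes).
            "ProcessingJobName.$": f"States.Format('{job_prefix}-{{}}', $$.Execution.Name)",
            "RoleArn": ROLE_ARN,
            "AppSpecification": {"ImageUri": IMAGE_URI},
            "ProcessingResources": {
                "ClusterConfig": {
                    "InstanceCount": 1,
                    "InstanceType": instance_type,
                    "VolumeSizeInGB": 50,
                }
            },
        },
    }
    if next_state is None:
        state["End"] = True
    else:
        state["Next"] = next_state
    return state


def build_definition(steps):
    """Chain (state_name, job_prefix, instance_type) tuples into a linear state machine."""
    states = {}
    for index, (state_name, job_prefix, instance_type) in enumerate(steps):
        following = steps[index + 1][0] if index + 1 < len(steps) else None
        states[state_name] = processing_state(job_prefix, instance_type, following)
    return {"StartAt": steps[0][0], "States": states}


definition = build_definition([
    ("DetectRois", "detect-rois", "ml.m5.xlarge"),
    ("DetectElements", "detect-elements", "ml.m5.xlarge"),
    ("ClassifyElements", "classify-elements", "ml.m5.xlarge"),
])
print(json.dumps(definition, indent=2))
```

The printed JSON could be used as the definition of a Step Functions state machine once the placeholders are replaced. Nothing here calls AWS, so the sketch runs locally.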

The following is a high-level architecture of the ML pipeline with its main steps.

This diagram shows the simplified architecture of the ODIN image inference pipeline, which extracts and analyzes ROIs (such as electricity posts) from dataset images. The pipeline further drills down on ROIs, extracting and analyzing electrical elements (transformers, insulators, and so on). After the components (ROIs and elements) are finalized, the reidentification process begins: images and poles in the network map are matched based on 3D metadata. This allows the clustering of ROIs referring to the same pole. After that, anomalies get finalized and reports are generated.
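The matching step can be illustrated with a small sketch. It assumes that each region of interest already carries an estimated ground position in a local metric coordinate system, and it assigns the region to the nearest pole on the network map if that pole lies within a distance threshold. The identifiers, coordinates, and the 5-meter threshold are hypothetical, and the source does not specify how the pipeline derives or compares positions.

```python
import math
from collections import defaultdict


def group_rois_by_pole(roi_positions, pole_positions, max_distance_m=5.0):
    """Assign each ROI to its nearest pole and group ROI ids per pole.

    roi_positions and pole_positions map ids to (x, y) coordinates in meters.
    ROIs farther than max_distance_m from every pole are returned as unmatched.
    """
    if not pole_positions:
        return {}, list(roi_positions)

    groups = defaultdict(list)
    unmatched = []
    for roi_id, roi_xy in roi_positions.items():
        # Brute-force nearest neighbor; fine for a sketch, slow at grid scale.
        pole_id, distance = min(
            ((pid, math.dist(roi_xy, pxy)) for pid, pxy in pole_positions.items()),
            key=lambda pair: pair[1],
        )
        if distance <= max_distance_m:
            groups[pole_id].append(roi_id)
        else:
            unmatched.append(roi_id)
    return dict(groups), unmatched


# Hypothetical example: two poles, four ROIs extracted from different images.
poles = {"pole-A": (0.0, 0.0), "pole-B": (40.0, 0.0)}
rois = {
    "img1/roi0": (1.0, 0.5),
    "img2/roi0": (-0.8, 1.2),
    "img3/roi1": (39.0, -1.0),
    "img4/roi0": (20.0, 20.0),
}
groups, unmatched = group_rois_by_pole(rois, poles)
print(groups, unmatched)
```

Regions that end up in the same group are treated as views of the same pole, which is what keeps a pole from being counted twice.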

Extracting precise measurements using LiDAR point clouds

High-resolution photographs are very useful, but because they’re 2D, it’s impossible to extract precise measurements from them. LiDAR point clouds come to the rescue here, because they are 3D and each point in the cloud has a position with an associated error of only a few centimeters.

However, in many cases, a raw point cloud is not useful, because you can’t do much with it if you don’t know whether a set of points represents a tree, a power line, or a house. For this reason, Enel uses KPConv, a semantic point cloud segmentation algorithm, to assign a class to each point. After the cloud is classified, it’s possible to figure out whether vegetation is too close to the power line, or to measure the tilt of poles. Due to the flexibility of SageMaker services, the pipeline of this solution is not much different from the one already described, with the only difference being that in this case it is necessary to use GPU instances for inference as well.
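Once every point has a class, both measurements reduce to simple geometry. The sketch below is a minimal illustration under stated assumptions, not the method used in the pipeline. Points are `(x, y, z)` tuples in meters with `z` pointing up, the class names are hypothetical, and the tilt is estimated from the centroids of the lowest and highest slices of the pole.

```python
import math


def min_clearance(points, labels, class_a="vegetation", class_b="power_line"):
    """Smallest 3D distance in meters between any point of class_a and any of class_b."""
    group_a = [p for p, label in zip(points, labels) if label == class_a]
    group_b = [p for p, label in zip(points, labels) if label == class_b]
    if not group_a or not group_b:
        return None
    # Brute force over all pairs; real clouds would need a spatial index.
    return min(math.dist(p, q) for p in group_a for q in group_b)


def centroid(points):
    """Component-wise mean of a non-empty list of (x, y, z) points."""
    return tuple(sum(axis) / len(points) for axis in zip(*points))


def pole_tilt_degrees(points, labels, pole_class="pole", slice_height=0.5):
    """Angle in degrees between the pole axis and the vertical.

    The axis is approximated by the line joining the centroid of the lowest
    slice of pole points to the centroid of the highest slice.
    """
    pole = [p for p, label in zip(points, labels) if label == pole_class]
    if len(pole) < 2:
        return None
    z_min = min(p[2] for p in pole)
    z_max = max(p[2] for p in pole)
    bottom = centroid([p for p in pole if p[2] <= z_min + slice_height])
    top = centroid([p for p in pole if p[2] >= z_max - slice_height])
    horizontal = math.hypot(top[0] - bottom[0], top[1] - bottom[1])
    vertical = top[2] - bottom[2]
    return math.degrees(math.atan2(horizontal, vertical))


# Tiny hypothetical cloud: a slightly leaning pole, a conductor, and one tree point.
cloud = [(0.0, 0.0, 0.0), (1.0, 0.0, 10.0), (0.0, 0.0, 8.0), (0.0, 5.0, 8.0), (3.0, 0.0, 8.0)]
classes = ["pole", "pole", "power_line", "power_line", "vegetation"]
clearance = min_clearance(cloud, classes)
tilt = pole_tilt_degrees(cloud, classes)
print(clearance, tilt)
```

The all-pairs distance is quadratic in the number of points, so a production version would typically rely on a spatial index such as a k-d tree.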

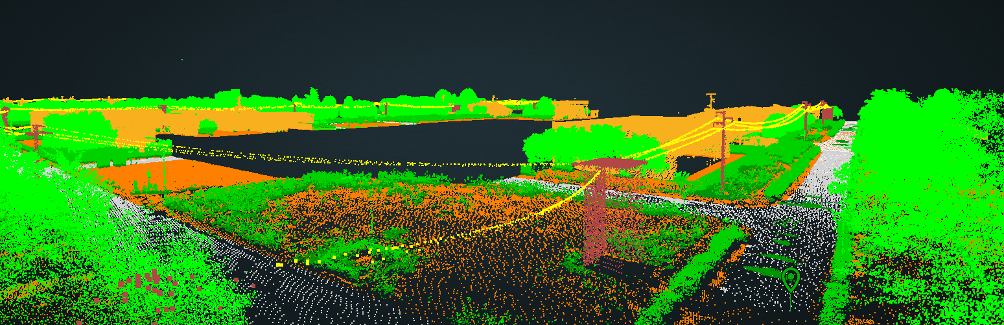

The following are some examples of point cloud images.

Looking at the power grid from space: Mapping vegetation to prevent service disruptions

Inspecting the power grid with helicopters and other means is generally very expensive and can’t be done very frequently. On the other hand, having a system to monitor vegetation trends at short time intervals is extremely useful for optimizing one of the most expensive processes of an energy distributor: tree pruning. This is why Enel also included the analysis of satellite images in its solution. A multitask approach identifies where vegetation is present, its density, and the type of plants, divided into macro classes.

For this use case, after experimenting with different resolutions, Enel concluded that the free Sentinel 2 images provided by the Copernicus program had the best cost-benefit ratio. In addition to vegetation, Enel also uses satellite imagery to identify buildings, which helps reveal discrepancies between where buildings are present and where Enel delivers power, and therefore any irregular connections or problems in the databases. For the latter use case, the resolution of Sentinel 2, where one pixel covers roughly 10 × 10 meters, is not sufficient, and so paid-for images with a resolution of about 50 centimeters per pixel are purchased. This solution also doesn’t differ much from the previous ones in terms of services used and flow.
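A quick back-of-the-envelope calculation shows why resolution matters for building detection. The sketch assumes a pixel side of 10 m for Sentinel 2 (the resolution of its finest bands) and reads the paid-for imagery as roughly 0.5 m per pixel side. The 10 m building footprint is a made-up example, and the function name is illustrative.

```python
def pixels_covering(footprint_side_m, ground_sample_distance_m):
    """Approximate number of pixels covering a square footprint of the given side."""
    pixels_per_side = footprint_side_m / ground_sample_distance_m
    return pixels_per_side ** 2


building_side_m = 10.0  # hypothetical small building, 10 m x 10 m

sentinel_pixels = pixels_covering(building_side_m, 10.0)   # assumed 10 m pixel side
high_res_pixels = pixels_covering(building_side_m, 0.5)    # assumed 0.5 m pixel side
print(sentinel_pixels, high_res_pixels)
```

Under these assumptions, the building fills about one pixel in the free imagery but several hundred pixels in the higher-resolution imagery. That gap is the difference between a blob and a recognizable outline.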

The following is an aerial picture with identification of assets (pole and insulators).

Angela Italiano, Director of Data Science at ENEL Grid, says,

“At Enel, we use computer vision models to inspect our electricity distribution network by reconstructing 3D images of our network using tens of millions of high-quality images and LiDAR point clouds. The training of these ML models requires access to a large number of instances equipped with high-performance GPUs and the ability to handle large volumes of data efficiently. With Amazon SageMaker, we can quickly train all of our models in parallel without needing to manage the infrastructure as Amazon SageMaker training scales the compute resources up and down as needed. Using Amazon SageMaker, we are able to build 3D images of our systems, monitor for anomalies, and serve over 60 million customers efficiently.”

Conclusion

In this post, we saw how a top player in the energy world like Enel used computer vision models and SageMaker training and processing jobs to solve one of the main problems faced by anyone managing an infrastructure of this colossal size: keeping track of installed assets and identifying anomalies and sources of danger for a power line, such as vegetation growing too close to it.

Learn more about the related features of SageMaker.

About the Authors

Mario Namtao Shianti Larcher is the Head of Computer Vision at Enel. With a background in mathematics and statistics and deep expertise in machine learning and computer vision, he leads a team of over ten professionals. Mario’s role entails implementing advanced solutions that effectively utilize the power of AI and computer vision to leverage Enel’s extensive data resources. In addition to his professional endeavors, he nurtures a personal passion for both traditional and AI-generated art.

Cristian Gavazzeni is a Senior Solution Architect at Amazon Web Services. He has more than 20 years of experience as a pre-sales consultant focusing on Data Management, Infrastructure and Security. During his spare time he likes playing golf with friends and travelling abroad with only fly and drive bookings.

Giuseppe Angelo Porcelli is a Principal Machine Learning Specialist Solutions Architect for Amazon Web Services. With several years of software engineering and an ML background, he works with customers of any size to deeply understand their business and technical needs and design AI and Machine Learning solutions that make the best use of the AWS Cloud and the Amazon Machine Learning stack. He has worked on projects in different domains, including MLOps, Computer Vision, and NLP, involving a broad set of AWS services. In his free time, Giuseppe enjoys playing football.
