Posted by Sara Beery, Student Researcher, and Jonathan Huang, Research Scientist, Google Research

Ecological monitoring helps researchers understand the dynamics of global ecosystems, quantify biodiversity, and measure the effects of climate change and human activity, including the efficacy of conservation and remediation efforts. To monitor effectively, ecologists need high-quality data, and they often expend significant effort placing monitoring sensors, such as static cameras, in the field. While it is increasingly cost effective to build and operate networks of such sensors, the manual analysis of global biodiversity data remains a bottleneck to accurate, global, real-time ecological monitoring. Machine learning offers ways to automate this analysis, but data from static cameras, which are widely used to monitor the world around us for purposes ranging from mountain pass road conditions to ecosystem phenology, still pose a strong challenge for traditional computer vision systems. Due to power and storage constraints, sampling frequencies are low, often no faster than one frame per second, and are sometimes irregular because of the use of a motion trigger.

To perform well in this setting, computer vision models must be robust to objects of interest that are often off-center, out of focus, poorly lit, or at a variety of scales. In addition, a static camera will always take images of the same scene unless it is moved, which causes the data from any one camera to be highly repetitive. Without sufficient data variability, machine learning models may learn to focus on correlations in the background, leading to poor generalization to novel deployments. The machine learning and ecological communities have been working together through venues like LILA BC and Wildlife Insights to curate expert-labeled training data from many research groups, each of which may operate anywhere from one to hundreds of camera traps, in order to increase data variability. This process of data collection and annotation is slow, and is complicated by the need for diverse, representative data across geographic regions and taxonomic groups.

|

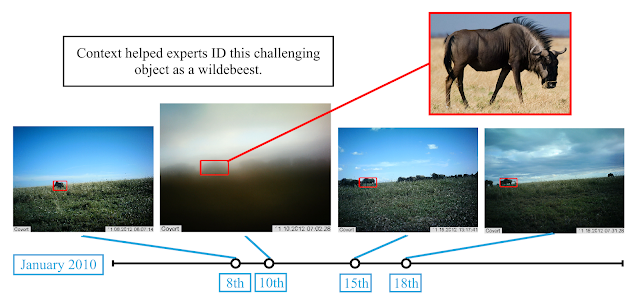

| What’s in this image? Objects in images from static cameras can be very challenging to detect and categorize. Here, a foggy morning has made it very difficult to see a herd of wildebeest walking along the crest of a hill. [Image from Snapshot Serengeti] |

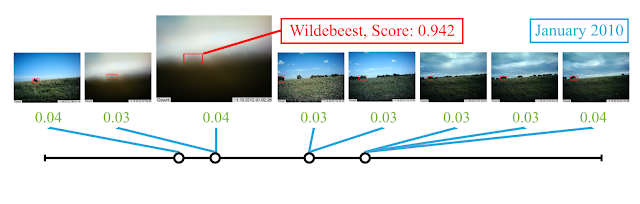

In Context R-CNN: Long Term Temporal Context for Per-Camera Object Detection, we present a complementary approach that increases global scalability by improving generalization to novel camera deployments algorithmically. This new object detection architecture leverages contextual clues across time for each camera deployment in a network, improving recognition of objects in novel camera deployments without relying on additional training data from a large number of cameras. Echoing the approach a person might use when faced with challenging images, Context R-CNN leverages up to a month’s worth of images from the same camera for context to determine what objects might be present and identify them. Using this method, the model outperforms a single-frame Faster R-CNN baseline by significant margins across multiple domains, including wildlife camera traps. We have open sourced the code and models for this work as part of the TF Object Detection API to make it easy to train and test Context R-CNN models on new static camera datasets.

The Context R-CNN Model

Context R-CNN is designed to take advantage of the high degree of correlation within images taken by a static camera to boost performance on challenging data and improve generalization to new camera deployments without additional human data labeling. It is an adaptation of Faster R-CNN, a popular two-stage object detection architecture. To extract context for a camera, we first use a frozen feature extractor to build up a contextual memory bank from images across a large time horizon (up to a month or more). Next, objects are detected in each image using Context R-CNN, which aggregates relevant context from the memory bank to help detect objects under challenging conditions (such as the heavy fog obscuring the wildebeest in our previous example). This aggregation is performed using attention, which is robust to the sparse and irregular sampling rates often seen in static monitoring cameras.
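As a rough illustration of the memory bank idea, the sketch below collects per-frame features for one camera and keeps those that fall within a chosen time horizon of the current frame. It is a simplified sketch, not the released implementation: the `MemoryEntry` layout, the `build_memory_bank` helper, and the symmetric window around the current time are all assumptions made for illustration, and the features are placeholders standing in for the output of a frozen feature extractor.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

import numpy as np


@dataclass
class MemoryEntry:
    """One contextual feature from one frame of a camera (hypothetical layout)."""
    timestamp: datetime
    feature: np.ndarray  # placeholder for a frozen extractor's output, shape (d,)


def build_memory_bank(entries, current_time, horizon):
    """Stack features whose timestamps lie within +/- horizon of current_time.

    Returns an array of shape (m, d). The symmetric window is an assumption.
    """
    selected = [e.feature for e in entries
                if abs(e.timestamp - current_time) <= horizon]
    if not selected:
        d = entries[0].feature.shape[0] if entries else 0
        return np.zeros((0, d))
    return np.stack(selected)


# Irregularly spaced frames from a single camera, as a motion trigger might produce.
t0 = datetime(2020, 6, 1, 12, 0)
entries = [MemoryEntry(t0 + timedelta(days=k), np.full(4, float(k)))
           for k in (-40, -10, 0, 5, 45)]

bank = build_memory_bank(entries, t0, timedelta(days=30))
print(bank.shape)  # (3, 4): only the frames at -10, 0, and +5 days are kept
```

Because entries are selected by timestamp rather than by frame index, the same code works whether frames arrive once per second or a few times per week.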

|

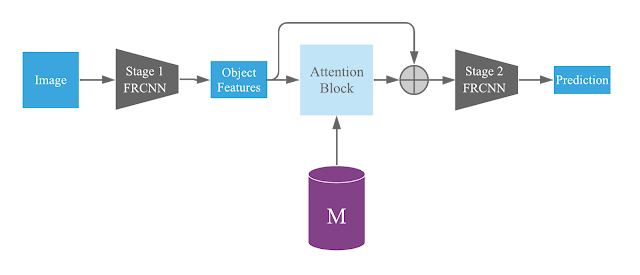

| High-level architecture diagram, showing how Context R-CNN incorporates long-term context within the Faster R-CNN model architecture. |

The first stage of Faster R-CNN proposes potential objects, and the second stage categorizes each proposal as either background or one of the target classes. In Context R-CNN, we take the proposed objects from the first stage of Faster R-CNN, and for each one we use similarity-based attention to determine how relevant each of the features in our memory bank (M) is to the current object, and construct a per-object context feature by taking a relevance-weighted sum over M and adding it back to the original object features. Then each object, now with added contextual information, is finally categorized using the second stage of Faster R-CNN.
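The attention step described above can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions, not the model's actual code: it uses cosine similarity with a softmax as the relevance measure, omits any learned projections the real model may apply, and the names `add_context` and `temperature` are hypothetical.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def add_context(object_features, memory_bank, temperature=1.0):
    """Attend from each proposed object to the memory bank M.

    object_features: (n, d) features of first-stage proposals.
    memory_bank:     (m, d) contextual features M for the same camera.
    Returns the context-enriched features (n, d) and attention weights (n, m).
    """
    eps = 1e-12  # guards against division by zero for all-zero features
    q = object_features / np.maximum(
        np.linalg.norm(object_features, axis=1, keepdims=True), eps)
    k = memory_bank / np.maximum(
        np.linalg.norm(memory_bank, axis=1, keepdims=True), eps)
    # Relevance of every memory feature to every proposed object.
    weights = softmax(q @ k.T / temperature, axis=1)
    # Relevance-weighted sum over M, one context vector per object.
    context = weights @ memory_bank
    # Add the context back to the original object features.
    return object_features + context, weights


rng = np.random.default_rng(0)
proposals = rng.normal(size=(3, 8))  # 3 proposed objects
memory = rng.normal(size=(5, 8))     # 5 features in the memory bank
enriched, weights = add_context(proposals, memory)
print(enriched.shape, weights.shape)  # (3, 8) (3, 5)
```

Each row of `weights` sums to one, so the context vector is a convex combination of memory features regardless of how many frames the memory bank holds or how irregularly they were sampled.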

|

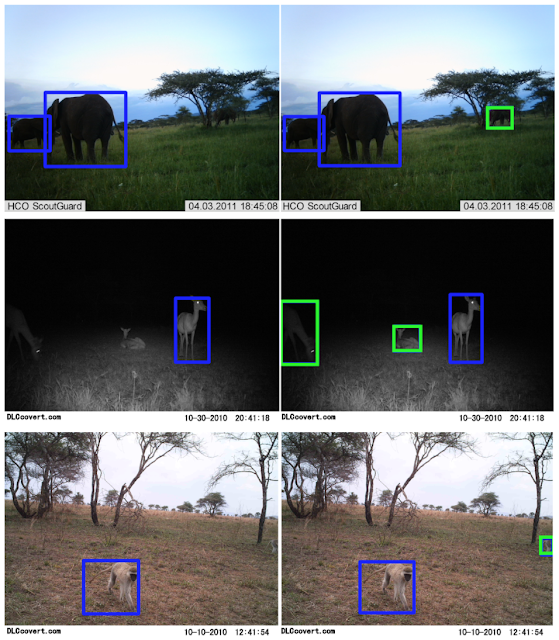

| Compared to a Faster R-CNN baseline (left), Context R-CNN (right) is able to capture challenging objects such as an elephant occluded by a tree, two poorly-lit impala, and a vervet monkey leaving the frame. [Images from Snapshot Serengeti] |

Results

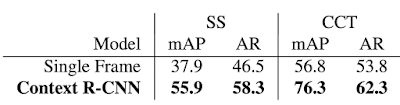

We have tested Context R-CNN on Snapshot Serengeti (SS) and Caltech Camera Traps (CCT), both ecological datasets of animal species captured by camera traps, but from very different geographic regions (Tanzania vs. the Southwestern United States). Improvements over the Faster R-CNN baseline for each dataset can be seen in the table below. Notably, we see a 47.5% relative increase in mean average precision (mAP) on SS, and a 34.3% relative mAP increase on CCT. We also compare Context R-CNN to S3D (a 3D-convolution-based baseline) and see performance improve from 44.7% mAP to 55.9% mAP (a 25.1% relative increase). Finally, we find that performance improves as the contextual time horizon increases, from a minute of context to a month.

Comparison to a single frame Faster R-CNN baseline, showing both mean average precision (mAP) and average recall (AR) detection metrics.
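The results above are reported as relative increases, which are easy to confuse with absolute differences in mAP points. As a small worked example, the S3D comparison (44.7% to 55.9% mAP) can be checked as follows; the helper name `relative_increase` is ours, introduced only for illustration.

```python
def relative_increase(baseline, improved):
    """Relative change from baseline to improved, as a percentage."""
    return 100.0 * (improved - baseline) / baseline


# S3D baseline vs. Context R-CNN, using the mAP values quoted in the text.
absolute_gain = 55.9 - 44.7
relative_gain = relative_increase(44.7, 55.9)
print(round(absolute_gain, 1))  # 11.2 mAP points
print(round(relative_gain, 1))  # 25.1 percent relative increase
```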

Ongoing and Future Work

We are working to implement Context R-CNN within the Wildlife Insights platform, to facilitate large-scale, global ecological monitoring via camera traps. We also host competitions such as the yearly iWildCam species identification competition at the CVPR Fine-Grained Visual Recognition Workshop to help bring these challenges to the attention of the computer vision community. The challenges seen in automatic species identification from static camera images are shared by numerous applications of static cameras outside of the ecological monitoring domain, as well as by other static sensors used to monitor biodiversity, such as audio and sonar devices. Our method is general, and we anticipate that the per-sensor context approach taken by Context R-CNN would be beneficial for any static sensor.

Acknowledgements

This post reflects the work of the authors as well as the following group of core contributors: Vivek Rathod, Guanhang Wu, Ronny Votel. We are also grateful to Zhichao Lu, David Ross, Tanya Birch and the Wildlife Insights AI team, and Pietro Perona and the Caltech Computational Vision Lab.