Editor’s note: This post is a part of our Meet the Omnivore series, which features individual creators and developers who use NVIDIA Omniverse to accelerate their 3D workflows and create virtual worlds.

When not engrossed in his studies toward a Ph.D. in statistics, conducting data-driven research on AI and robotics, or enjoying his favorite hobby of sailing, Yizhou Zhao is winning contests for developers who use NVIDIA Omniverse — a platform for connecting and building custom 3D pipelines and metaverse applications.

The fifth-year doctoral candidate at the University of California, Los Angeles recently received first place in the inaugural #ExtendOmniverse contest, where developers were invited to create their own Omniverse extension for a chance to win an NVIDIA RTX GPU.

Omniverse extensions are core building blocks that let anyone create and extend functions of Omniverse apps using the popular Python programming language.

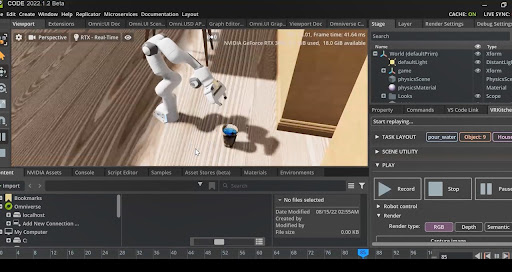

Zhao’s winning entry, called “IndoorKit,” allows users to easily load and record robotics simulation tasks in indoor scenes. In just a few clicks, it sets up robotics manipulation tasks by automatically populating a scene with an indoor environment, the bot and other objects.

“Typically, it’s hard to deploy a robotics task in simulation without a lot of skills in scene building, layout sampling and robot control,” Zhao said. “By bringing assets into Omniverse’s powerful user interface using the Universal Scene Description framework, my extension achieves instant scene setup and accurate control of the robot.”

Within “IndoorKit,” users can simply click “add object,” “add house,” “load scene,” “record scene” and other buttons to manipulate aspects of the environment and dive right into robotics simulation.

With Universal Scene Description (USD), an open-source, extensible file framework, Zhao seamlessly brought 3D models into his environments using Omniverse Connectors for Autodesk Maya and Blender software.
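To give a rough sense of how USD lets a scene pull in models authored elsewhere, here is a minimal sketch that writes a text-format USD layer (`.usda`) whose prims reference external asset files. It is not taken from “IndoorKit”: the prim names, the asset paths and the `build_scene_layer` helper are hypothetical, and the sketch builds the file as plain text so it runs without the USD Python libraries installed.

```python
def build_scene_layer(assets, root="World"):
    """Return .usda text for a root Xform whose children reference asset files.

    `assets` maps a prim name to the path of the asset file it references.
    Names and paths are illustrative assumptions, not real IndoorKit assets.
    """
    lines = [
        "#usda 1.0",
        "(",
        f'    defaultPrim = "{root}"',
        ")",
        "",
        f'def Xform "{root}"',
        "{",
    ]
    for prim_name, asset_path in assets.items():
        # Each child prim composes an external file through a USD reference.
        lines += [
            f'    def Xform "{prim_name}" (',
            f"        prepend references = @{asset_path}@",
            "    )",
            "    {",
            "    }",
        ]
    lines.append("}")
    return "\n".join(lines) + "\n"


# Hypothetical assets, e.g. models exported from Maya or Blender.
layer_text = build_scene_layer(
    {"Sofa": "./assets/sofa.usd", "Robot": "./assets/robot.usd"}
)
print(layer_text)
```

Because each model stays in its own file and is only referenced by the scene layer, an asset can be updated in its authoring tool without the scene description being rebuilt.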

The “IndoorKit” extension also relies on assets from the NVIDIA Isaac Sim robotics simulation platform and Omniverse’s built-in PhysX capabilities for accurate, articulated manipulation of the bots.

In addition, “IndoorKit” can randomize a scene’s lighting, room materials and more. One scene Zhao built with the extension is highlighted in the feature video above.
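As an illustration of what randomizing a scene can involve, the sketch below samples lighting and material settings from fixed ranges using a seeded random generator. It is an assumption-laden example rather than the extension's actual code: the material names, the value ranges and the `SceneVariation` structure are all hypothetical, and no Omniverse API is called.

```python
import random
from dataclasses import dataclass

# Hypothetical material libraries; a real extension would list its own assets.
FLOOR_MATERIALS = ("oak", "tile", "carpet")
WALL_MATERIALS = ("white_paint", "brick", "wallpaper")


@dataclass(frozen=True)
class SceneVariation:
    light_intensity: float      # arbitrary units, assumed range
    light_color_temp_k: int     # color temperature in kelvin, assumed range
    floor_material: str
    wall_material: str


def sample_variation(rng):
    """Draw one random combination of lighting and room materials."""
    return SceneVariation(
        light_intensity=rng.uniform(500.0, 3000.0),
        light_color_temp_k=rng.randrange(2700, 6501, 100),
        floor_material=rng.choice(FLOOR_MATERIALS),
        wall_material=rng.choice(WALL_MATERIALS),
    )


def sample_variations(count, seed):
    """Return `count` variations; the same seed always yields the same list."""
    rng = random.Random(seed)
    return [sample_variation(rng) for _ in range(count)]


variations = sample_variations(count=3, seed=0)
for variation in variations:
    print(variation)
```

Seeding the generator keeps a randomized experiment reproducible, which matters when recorded simulation runs need to be compared later.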

Omniverse for Robotics

The “IndoorKit” extension bridges Omniverse and robotics research in simulation.

“I don’t see how accurate robot control was performed prior to Omniverse,” Zhao said. He gave four main reasons why Omniverse was the ideal platform on which to build this extension:

First, Python’s popularity means many developers can build extensions with it to unlock machine learning and deep learning research for a broader audience, he said.

Second, using NVIDIA RTX GPUs with Omniverse greatly accelerates robot control and training.

Third, Omniverse’s ray-tracing technology enables real-time, photorealistic rendering of his scenes. This saves 90% of the time Zhao used to spend on experiment setup and simulation, he said.

And fourth, Omniverse’s real-time advanced physics simulation engine, PhysX, supports an extensive range of features — including liquid, particle and soft-body simulation — which “land on the frontier of robotics studies,” according to Zhao.

“The future of art, engineering and research is in the spirit of connecting everything: modeling, animation and simulation,” he said. “And Omniverse brings it all together.”

Join In on the Creation

Creators and developers across the world can download NVIDIA Omniverse for free, and enterprise teams can use the platform for their 3D projects.

Discover how to build an Omniverse extension in less than 10 minutes.

For a deeper dive into developing on Omniverse, watch the on-demand NVIDIA GTC session, “How to Build Extensions and Apps for Virtual Worlds With NVIDIA Omniverse.”

Find additional documentation and tutorials in the Omniverse Resource Center, which details how developers like Zhao can build custom USD-based applications and extensions for the platform.

To discover more free tools, training and a community for developers, join the NVIDIA Developer Program.

Follow NVIDIA Omniverse on Instagram, Medium, Twitter and YouTube for additional resources and inspiration. Check out the Omniverse forums, and join our Discord server and Twitch channel to chat with the community.

The post Meet the Omnivore: Ph.D. Student Lets Anyone Bring Simulated Bots to Life With NVIDIA Omniverse Extension appeared first on NVIDIA Blog.