Posted by the TensorFlow team

Around Google I/O, we published many exciting new docs on tensorflow.org, including updates on model parallelism, model remediation, TensorFlow Lite, and the TensorFlow Model Garden. Let’s take a look at what you can learn!

Counterfactual Logit Pairing

The Responsible AI team added a new technique to the TensorFlow Model Remediation library. The library provides training-time techniques that intervene on the model itself, for example by introducing or altering its training objectives. The library originally launched with a single technique, MinDiff, which minimizes the difference in performance between two slices of data.
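MinDiff works by penalizing differences between the distributions of model scores on the two slices. The plain-Python sketch below shows one common choice of distance, maximum mean discrepancy (MMD) with a Gaussian kernel, added to the task loss. The kernel width, the weight, the helper names, and the example scores are illustrative assumptions; this is not the library's implementation.

```python
import math

def gaussian_kernel(a, b, sigma=0.1):
    """Similarity between two scalar scores; sigma is an assumed kernel width."""
    return math.exp(-((a - b) ** 2) / (2 * sigma ** 2))

def mean_kernel(xs, ys, sigma):
    """Average kernel value over all pairs drawn from xs and ys."""
    return sum(gaussian_kernel(x, y, sigma) for x in xs for y in ys) / (len(xs) * len(ys))

def mmd_squared(scores_a, scores_b, sigma=0.1):
    """Biased estimate of squared MMD between the score distributions of two slices.

    It is 0 when the two sets of scores are identical and grows as they diverge.
    """
    return (mean_kernel(scores_a, scores_a, sigma)
            + mean_kernel(scores_b, scores_b, sigma)
            - 2 * mean_kernel(scores_a, scores_b, sigma))

def training_loss(task_loss, scores_a, scores_b, min_diff_weight=1.0):
    """Task loss plus a weighted MinDiff-style penalty (the weight is hypothetical)."""
    return task_loss + min_diff_weight * mmd_squared(scores_a, scores_b)

# Hypothetical predicted scores for examples from two slices of data.
similar = mmd_squared([0.2, 0.3, 0.25], [0.22, 0.28, 0.26])
different = mmd_squared([0.2, 0.3, 0.25], [0.8, 0.9, 0.85])
print(f"similar slices: {similar:.4f}, different slices: {different:.4f}")
```

Minimizing the combined loss pushes the model toward producing similar score distributions on both slices while still fitting the task.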

New at I/O is Counterfactual Logit Pairing (CLP), a technique that seeks to ensure that a model’s prediction doesn’t change when a sensitive attribute referenced in an example is either removed or replaced. For example, in a toxicity classifier, examples such as “I am a man” and “I am a lesbian” should receive the same prediction, and neither should be classified as toxic.
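To make the idea concrete, here is a minimal plain-Python sketch of a counterfactual logit pairing penalty. It assumes a toy bag-of-words linear classifier with hypothetical weights, a hypothetical `make_counterfactual` helper that replaces or removes one identity term, and the squared difference of logits as the pairing loss. The Model Remediation library's own API and loss choices may differ.

```python
# Hypothetical word weights and bias for a toy linear toxicity classifier.
WEIGHTS = {"i": 0.0, "am": 0.0, "a": 0.0, "man": -0.5, "lesbian": 1.5}
BIAS = -1.0

def logit(text):
    """Toy model: bias plus the sum of per-word weights (unknown words count as 0)."""
    return BIAS + sum(WEIGHTS.get(word, 0.0) for word in text.lower().split())

def make_counterfactual(text, original, replacement=None):
    """Replace one sensitive term, or remove it when replacement is None."""
    words = []
    for word in text.lower().split():
        if word != original:
            words.append(word)
        elif replacement is not None:
            words.append(replacement)
    return " ".join(words)

def clp_penalty(texts, original, replacement=None):
    """Mean squared difference between each example's logit and its counterfactual's."""
    diffs = [(logit(t) - logit(make_counterfactual(t, original, replacement))) ** 2
             for t in texts]
    return sum(diffs) / len(diffs)

def total_loss(task_loss, texts, original, replacement=None, clp_weight=1.0):
    """Training objective: the usual task loss plus the weighted pairing penalty."""
    return task_loss + clp_weight * clp_penalty(texts, original, replacement)

print(logit("I am a man"), logit("I am a lesbian"))  # prints: -1.5 0.5
print(clp_penalty(["I am a man"], "man", "lesbian"))  # prints: 4.0
```

Because the penalty is part of the training loss, gradient updates push the weights of the two identity terms toward each other until both sentences get the same logit.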

Check out the basic tutorial, the Keras tutorial, and the API reference.

Model parallelism: DTensor

DTensor provides a global programming model that allows developers to operate on tensors globally while managing distribution across devices. DTensor distributes the program and tensors according to their sharding directives through a procedure called Single Program, Multiple Data (SPMD) expansion.

By decoupling the overall application from sharding directives, DTensor enables running the same application on a single device, multiple devices, or even multiple clients, while preserving its global semantics. If you remember Mesh TensorFlow from TF1, DTensor can address the same issue that Mesh addressed: training models that may be too large to fit on a single core.
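The sketch below does not use DTensor's API, which is covered in the DTensor Guide. Instead, it simulates the SPMD idea in plain Python: a weight matrix is sharded column-wise across a hypothetical number of devices, every device runs the same program on its local shard, and the gathered result is identical to running the program on one device. The names `shard_columns`, `matmul`, and `run_spmd` are illustrative.

```python
def shard_columns(w, num_devices):
    """Split a (k, m) weight matrix into num_devices equal column blocks."""
    m = len(w[0])
    if m % num_devices:
        raise ValueError("number of columns must be divisible by num_devices")
    size = m // num_devices
    return [[row[d * size:(d + 1) * size] for row in w] for d in range(num_devices)]

def matmul(x, w):
    """Plain-Python matrix multiply: x is (n, k), w is (k, m)."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*w)] for row in x]

def run_spmd(x, w, num_devices):
    """Each simulated device runs the same matmul on its own weight shard (x is
    replicated); the per-device column blocks are then concatenated in order."""
    blocks = [matmul(x, w_shard) for w_shard in shard_columns(w, num_devices)]
    return [[v for block in blocks for v in block[i]] for i in range(len(x))]

x = [[1, 2], [3, 4]]                # replicated input batch
w = [[1, 2, 3, 4], [5, 6, 7, 8]]    # weights that no single device has to hold whole

for n in (1, 2, 4):
    print(n, run_spmd(x, w, n))
```

Only the sharding changes between runs; the program and its global result stay the same, which is the property the paragraph above describes.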

With TensorFlow 2.9, we made DTensor, which had previously been available only in nightly builds, visible on tensorflow.org. Although DTensor is experimental, you’re welcome to try it out. Check out the DTensor Guide, the DTensor Keras Tutorial, and the API reference.

New in TensorFlow Lite

We made some big changes to the TensorFlow Lite site, including to the getting started docs.

Developer Journeys

First, developer journeys are now organized by platform (Android, iOS, and other edge devices), making it easier to get started on yours. Android gained a new learning roadmap and quickstart. Earlier, we also added a guide to the new beta of TensorFlow Lite in Google Play services. These quickstarts include examples in both Kotlin and Java, and our example code now uses CameraX, as recommended by our colleagues in Android developer relations!

If you want to run an Android sample right away, you can now import one directly from Android Studio. When starting a new project, choose New Project > Import Sample… and look for Artificial Intelligence > TensorFlow Lite in Play Services image classification example application. This sample can help you find your mug… or other objects.

Model Maker

The TensorFlow Lite Model Maker library simplifies the process of training a TensorFlow Lite model using custom datasets. It uses transfer learning to reduce both the amount of training data required and the training time, and it comes with seven common tasks prebuilt, including image classification, object detection, and text search.
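Model Maker's internals are not shown here. As a rough plain-Python illustration of why transfer learning needs little data: a frozen, hypothetical "pretrained" feature map is reused unchanged, and only a tiny head is fit on four labeled examples. For brevity, the head is a nearest-centroid classifier rather than a trained neural network layer; all names and data are illustrative.

```python
def pretrained_embed(x):
    """Hypothetical frozen backbone: a fixed feature map that is never updated."""
    return [x[0] + x[1], x[0] - x[1]]

def fit_head(examples, labels):
    """Fit only the head: average the frozen embeddings of each class."""
    sums, counts = {}, {}
    for x, y in zip(examples, labels):
        e = pretrained_embed(x)
        acc = sums.setdefault(y, [0.0] * len(e))
        for i, v in enumerate(e):
            acc[i] += v
        counts[y] = counts.get(y, 0) + 1
    return {y: [v / counts[y] for v in s] for y, s in sums.items()}

def predict(centroids, x):
    """Return the class whose centroid is closest in embedding space."""
    e = pretrained_embed(x)
    return min(centroids,
               key=lambda y: sum((a - b) ** 2 for a, b in zip(e, centroids[y])))

# Only four labeled examples: "pos" when the first value exceeds the second.
examples = [(2, 1), (3, 0), (1, 2), (0, 3)]
labels = ["pos", "pos", "neg", "neg"]
centroids = fit_head(examples, labels)
print(predict(centroids, (5, 1)), predict(centroids, (1, 4)))
```

The backbone does the heavy lifting; the part learned from your dataset is small, which is why a handful of examples can be enough.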

We added a new tutorial for text search. This type of model lets you take a text query and search for the most related entries in a text dataset, such as a database of web pages. On mobile, you might use this for auto reply or semantic document search.
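Conceptually, this kind of search embeds every entry once, embeds the query, and ranks entries by similarity. The minimal plain-Python sketch below uses cosine similarity; the word-count `embed` function is a hypothetical stand-in for a learned text encoder, and Model Maker's own API and index format are not shown.

```python
import math
from collections import Counter

def embed(text):
    """Hypothetical embedding: word counts. A real searcher would use a learned encoder."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def build_index(entries):
    """Precompute one embedding per entry so queries only embed the query text."""
    return [(entry, embed(entry)) for entry in entries]

def search(index, query, k=2):
    """Return the k entries most similar to the query, best first."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [entry for entry, _ in ranked[:k]]

pages = [
    "how to train an image classifier",
    "best hiking trails near the coast",
    "train a text classifier with few examples",
]
index = build_index(pages)
print(search(index, "train a classifier", k=2))
```

Precomputing the index is what keeps on-device queries cheap: each search embeds only the query and compares it against stored vectors.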

We also published the full Python library reference.

TF Lite model page

Finding the right model for your use case can sometimes be confusing. We’ve written more guidance on how to choose the right model for your task and what to consider when making that decision. You can also find links to models for common use cases.

Model Garden: State-of-the-art models ready to go

The TensorFlow Model Garden provides implementations of many state-of-the-art machine learning (ML) models for vision and natural language processing (NLP), as well as workflow tools that let you quickly configure and run those models on standard datasets. It also includes Orbit, a flexible training-loop library. Models come with pre-built configs for training to state-of-the-art results, along with many useful specialized ops.

We’re just getting started documenting all the great things you can do with the Model Garden. Your first stops should be the overview, lists of available models, and the image classification tutorial.

Other exciting things!

Don’t miss the crown-of-thorns starfish (COTS) detector! Find your own COTS in real images from the Great Barrier Reef. Watch the video, read the blog post, and try out the model in Colab yourself.

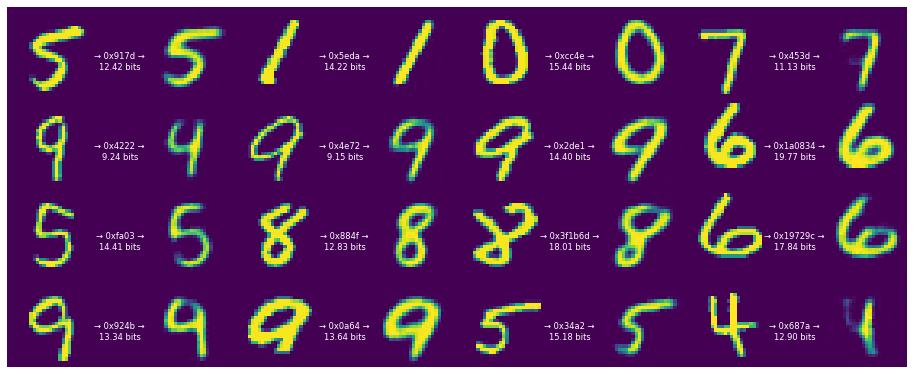

There is also a new tutorial on TensorFlow Compression, which performs lossy compression using neural networks. The example uses a model similar to an autoencoder to compress and decompress MNIST digits.

And, of course, don’t miss all the great I/O talks you can watch on YouTube. Thank you!