CLIPr aspires to help save 1 billion hours of people’s time. We organize video into a first-class, searchable data source that unlocks the content most relevant to your interests using AWS machine learning (ML) services. CLIPr simplifies the extraction of information from videos, saving you hours by eliminating the need to skim through them manually to find what matters. CLIPr provides simple AI-enabled tools to find, interact with, and share content across videos, uncovering your buried treasure by converting unstructured information into actionable data and insights.

How CLIPr uses AWS ML services

At CLIPr, we use the best of what AWS and its ML stack offer to delight our customers. At its core, CLIPr uses the latest ML, serverless, and infrastructure as code (IaC) design principles. The first benefit is agility: AWS allows us to consume cloud resources only when we need them, and we can deploy a completely new customer environment in a couple of minutes with just one script. The second benefit is scale: processing video requires an architecture that can scale vertically and horizontally by running many jobs in parallel.

As an early-stage startup, time to market is critical. Building models from the ground up for key CLIPr features like entity extraction, topic extraction, and classification would have taken us a long time to develop and train. We quickly delivered advanced capabilities by using AWS AI services for our applications and workflows. We used Amazon Transcribe to convert audio into searchable transcripts, Amazon Comprehend for text classification and organizing by relevant topics, Amazon Comprehend Medical to extract medical ontologies for a health care customer, and Amazon Rekognition to detect people’s names, faces, and meeting types for our first MVP. We were able to iterate fairly quickly and deliver quick wins that helped us close our pre-seed round with our investors.
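To give a sense of how little code a managed AI service needs, here is a minimal sketch that asks Amazon Comprehend for the entities in a transcript excerpt and groups them by type. It is an illustration only, not CLIPr’s pipeline: the helper name `entities_by_type`, the score threshold, and the sample sentence are assumptions made for this example. The client is passed in as an argument so the function can be exercised without AWS credentials; in practice it would be `boto3.client("comprehend")`.

```python
from collections import defaultdict


def entities_by_type(comprehend_client, text, min_score=0.8, language_code="en"):
    """Group entities detected by Amazon Comprehend by their entity type.

    `comprehend_client` is expected to behave like boto3.client("comprehend").
    `min_score` is an illustrative threshold, not a recommended value.
    """
    response = comprehend_client.detect_entities(
        Text=text, LanguageCode=language_code
    )
    grouped = defaultdict(set)
    for entity in response.get("Entities", []):
        # Keep only entities the service is reasonably confident about.
        if entity["Score"] >= min_score:
            grouped[entity["Type"]].add(entity["Text"])
    # Sort the texts so the result is stable and easy to read.
    return {etype: sorted(texts) for etype, texts in grouped.items()}


# Hypothetical usage against the real service (requires AWS credentials):
#   import boto3
#   client = boto3.client("comprehend")
#   print(entities_by_type(client, "Swami introduced new SageMaker features."))
```

Passing the client in, rather than creating it inside the function, also makes it straightforward to swap in a stub during tests.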

Since then, we have started to upgrade our workflows and data pipelines to build in-house proprietary ML models, using the data we gathered in our training process. Amazon SageMaker has become an essential part of our solution. It’s a fabric that enables us to provide ML in a serverless model with unlimited scaling. Its ease of use and the flexibility to use any ML or deep learning framework of our choice were influencing factors. We’re using TensorFlow, Apache MXNet, and SageMaker notebooks.

Because we used open-source frameworks, we were able to attract and onboard data scientists who are familiar with these platforms, and to scale the team quickly and cost-effectively. In just a few months, we integrated our in-house ML algorithms and workflows with SageMaker to improve customer engagement.

The following diagram shows our architecture of AWS services.

Our more complex user experience is the Trainer UI, which allows humans to review the data collected by CLIPr’s AI processing engine in a timeline view. Reviewers can augment the AI-generated data and fix potential issues. Human oversight helps us ensure accuracy and continuously improve and retrain models with updated predictions. An excellent example of this is speaker identification. We construct spectrograms from samples of the meeting speakers’ voices, combine them with video frames, and can identify and correlate the names and faces (if there is a video) of meeting participants. The Trainer UI also includes the ability to inspect the process workflow, and issues are flagged to help our data scientists understand what additional training may be required. A typical example is the set of visual cues used to identify speaker names, which are displayed differently on various meeting platforms.
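For readers unfamiliar with the term, a spectrogram is a time-frequency picture of an audio signal: the signal is cut into short overlapping frames, and the strength of each frequency is measured per frame. The sketch below shows the idea with a plain discrete Fourier transform from the Python standard library. It is not CLIPr’s implementation; the frame size, hop size, and test tone are assumptions chosen to keep the example small, and a production system would typically use an FFT library instead.

```python
import cmath
import math


def spectrogram(samples, frame_size=64, hop=32):
    """Return one list of magnitudes (bins 0..frame_size // 2) per frame."""
    # A Hann window reduces spectral leakage at the frame edges.
    window = [
        0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_size - 1))
        for n in range(frame_size)
    ]
    frames = []
    for offset in range(0, len(samples) - frame_size + 1, hop):
        frame = [samples[offset + n] * window[n] for n in range(frame_size)]
        magnitudes = []
        for k in range(frame_size // 2 + 1):
            # Direct DFT of the windowed frame at frequency bin k.
            total = sum(
                frame[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                for n in range(frame_size)
            )
            magnitudes.append(abs(total))
        frames.append(magnitudes)
    return frames


# Illustrative input: a pure 1,000 Hz tone sampled at 8,000 Hz.
sample_rate = 8000
tone_hz = 1000
samples = [math.sin(2 * math.pi * tone_hz * t / sample_rate) for t in range(512)]

frames = spectrogram(samples)
peak_bin = max(range(len(frames[0])), key=frames[0].__getitem__)
print(peak_bin * sample_rate / 64)  # 1000.0
```

Each frame’s magnitudes form one column of the spectrogram; a speaker model would consume a sequence of such columns rather than the raw waveform.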

Using CLIPr to create a personalized re:Invent video

We used CLIPr to process all the AWS re:Invent 2020 keynotes and leadership sessions to create a searchable video collection so you can easily find, interact with, and share the moments you care about most across hundreds of re:Invent sessions. CLIPr became generally available in December 2020, and today we launched the ability for customers to upload their own content.

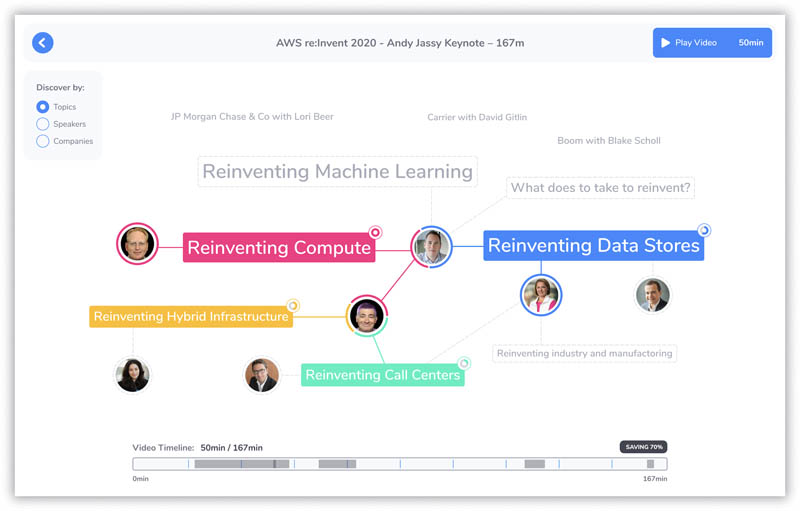

The following is an example of a CLIPr-processed video of Andy Jassy’s keynote. You can apply filters to the entire video to match topics that are auto-generated by CLIPr ML algorithms.

CLIPr dynamically creates a custom video from the keynote by aggregating the topics and moments that you select. Upon choosing Watch now, you can view your video composed of the topics and moments you selected. In this way, CLIPr is a video enrichment platform.
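One way to picture this aggregation step is as interval merging: keep the moments whose topic was selected, then join any that overlap so the resulting video plays each stretch only once. The following is a minimal sketch under that assumption. The `Moment` type, the `build_playlist` function, and the sample timestamps are hypothetical and do not reflect CLIPr’s internal data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Moment:
    topic: str
    start: float  # seconds from the beginning of the source video
    end: float


def build_playlist(moments, selected_topics):
    """Return merged (start, end) ranges covering the selected topics."""
    chosen = sorted(
        (m.start, m.end) for m in moments if m.topic in selected_topics
    )
    merged = []
    for start, end in chosen:
        if merged and start <= merged[-1][1]:
            # Overlapping or touching ranges become one continuous clip.
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


# Made-up moments for illustration.
moments = [
    Moment("SageMaker", 120.0, 300.0),
    Moment("Databases", 280.0, 400.0),
    Moment("Compute", 400.0, 520.0),
    Moment("SageMaker", 900.0, 960.0),
]
playlist = build_playlist(moments, {"SageMaker", "Databases"})
print(playlist)  # [(120.0, 400.0), (900.0, 960.0)]
```

The merged ranges could then be handed to a player or transcoder as a cut list, so the viewer never sees the same stretch twice when two selected topics overlap.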

Our commenting and reaction features provide a co-viewing experience where you can see and interact with other users’ reactions and comments, adding collaborative value to the content. Back in the early days of AWS, low-flying-hawk was a huge contributor to the AWS user forums. The AWS team often sought low-flying-hawk’s thoughts on new features, pricing, and issues we were experiencing. Low-flying-hawk was like having a customer in our meetings without actually being there. Imagine what it would be like to have customers, AWS service owners, and presenters chime in and add context to the re:Invent presentations at scale.

Our customers very much appreciate the Smart Skip feature, where CLIPr gives you the option to skip to the beginning of the next topic of interest.
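Conceptually, a skip like this is a lookup over topic boundaries: given the current playback position, find the next segment whose topic the viewer cares about. The sketch below illustrates that idea with a binary search. The function name `smart_skip`, the segment layout, and the timestamps are assumptions for this example, not a description of how CLIPr implements the feature.

```python
from bisect import bisect_right


def smart_skip(segments, interests, position):
    """Return the start time of the next segment whose topic is in `interests`.

    `segments` is a list of (start_seconds, topic) tuples sorted by start time.
    Returns None when no later segment matches.
    """
    starts = [start for start, _ in segments]
    # Index of the first segment that begins strictly after the position.
    index = bisect_right(starts, position)
    for start, topic in segments[index:]:
        if topic in interests:
            return start
    return None


# Made-up topic boundaries for illustration.
segments = [(0, "Intro"), (95, "Compute"), (410, "SageMaker"), (780, "Databases")]
print(smart_skip(segments, {"SageMaker"}, 120))  # 410
print(smart_skip(segments, {"Compute"}, 120))    # None
```

Returning `None` when nothing matches lets the player decide what to do next, for example leaving playback where it is.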

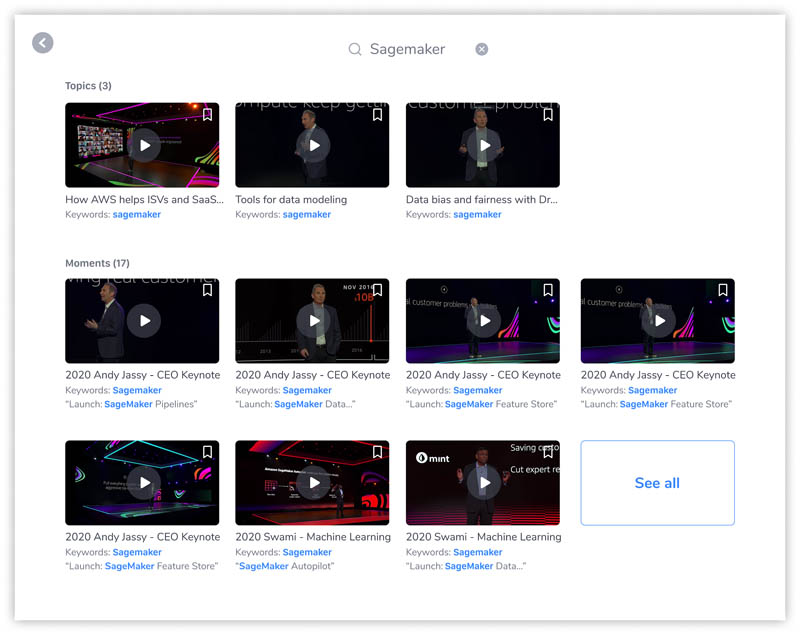

We built a natural language query and search capability so our customers can find moments quickly and easily. For instance, you can search for “SageMaker” in CLIPr search. We do a deep search across our entire media assets, ranging from keywords and video transcripts to topics and moments, to present instant results. In a similar search (see the following screenshot), CLIPr highlights Andy’s keynote sessions, and also includes specific moments when SageMaker is mentioned in Swami Sivasubramanian’s and Matt Wood’s sessions.
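A common building block for this kind of search is an inverted index that maps each word to the places where it occurs, whether in a transcript, a topic label, or a moment. The toy example below shows the idea. It is a simplified sketch, not CLIPr’s search engine: the `MediaIndex` class, the field names, the video IDs, and the indexed sentences are all made up for illustration.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


class MediaIndex:
    """A toy inverted index: token -> set of (video_id, field, timestamp)."""

    def __init__(self):
        self._postings = defaultdict(set)

    def add(self, video_id, field, text, timestamp=0.0):
        for token in tokenize(text):
            self._postings[token].add((video_id, field, timestamp))

    def search(self, query):
        """Return entries containing every query token, sorted by video and field."""
        tokens = tokenize(query)
        if not tokens:
            return []
        hits = set.intersection(
            *(self._postings.get(token, set()) for token in tokens)
        )
        return sorted(hits)


# Hypothetical videos and text for illustration.
index = MediaIndex()
index.add("keynote-andy", "transcript", "New SageMaker capabilities announced", 5400.0)
index.add("keynote-swami", "topic", "SageMaker", 600.0)
index.add("keynote-swami", "transcript", "Databases built for the cloud", 1200.0)

for video_id, field, timestamp in index.search("sagemaker"):
    print(video_id, field, timestamp)
```

Because every hit carries a timestamp, a result can link straight to the moment in the video rather than to the video as a whole.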

CLIPr also enables advanced analytics capabilities using knowledge graphs, allowing you to understand the most important moments, including correlations across your entire video assets. The following is an example of the knowledge graph correlations from all the re:Invent 2020 videos filtered by topics, speakers, or specific organizations.

We provide a content library of re:Invent sessions, with all the keynotes and leadership sessions, to save you time and help you make the most of re:Invent. Try CLIPr in action with re:Invent videos, and see how CLIPr uses AWS to make it all happen.

Conclusion

Create an account at www.clipr.ai and create a personalized view of re:Invent content. You can also upload your own videos, so you can spend more time building and less time watching!

About the Authors

Humphrey Chen’s experience spans from product management at AWS and Microsoft to advisory roles with Noom, Dialpad, and GrayMeta. At AWS, he was Head of Product and then Key Initiatives for Amazon’s Computer Vision. Humphrey knows how to take an idea and make it real. His first startup was the equivalent of Shazam for FM radio and launched in 20 cities with AT&T and Sprint in 1999. Humphrey holds a Bachelor of Science degree from MIT and an MBA from Harvard.

Aaron Sloman is a Microsoft alum who launched several startups before joining CLIPr, with ventures including Nimble Software Systems, Inc., CrossFit Chalk, and speakTECH. Aaron was recently the architect and CTO for OWNZONES, a media supply chain and collaboration company, using advanced cloud and AI technologies for video processing.