Running machine learning (ML) workloads with containers is becoming a common practice. Containers can fully encapsulate not just your training code, but the entire dependency stack down to the hardware libraries and drivers. What you get is an ML development environment that is consistent and portable. With containers, scaling on a cluster becomes much easier.

In late 2022, AWS announced the general availability of Amazon EC2 Trn1 instances powered by AWS Trainium accelerators, which are purpose built for high-performance deep learning training. Trn1 instances deliver up to 50% savings on training costs over other comparable Amazon Elastic Compute Cloud (Amazon EC2) instances. Also, the AWS Neuron SDK was released to improve this acceleration, giving developers tools to interact with this technology such as to compile, runtime, and profile to achieve high-performance and cost-effective model trainings.

Amazon Elastic Container Service (Amazon ECS) is a fully managed container orchestration service that simplifies your deployment, management, and scaling of containerized applications. Simply describe your application and the resources required, and Amazon ECS will launch, monitor, and scale your application across flexible compute options with automatic integrations to other supporting AWS services that your application needs.

In this post, we show you how to run your ML training jobs in a container using Amazon ECS to deploy, manage, and scale your ML workload.

Solution overview

We walk you through the following high-level steps:

- Provision an ECS cluster of Trn1 instances with AWS CloudFormation.

- Build a custom container image with the Neuron SDK and push it to Amazon Elastic Container Registry (Amazon ECR).

- Create a task definition to define an ML training job to be run by Amazon ECS.

- Run the ML task on Amazon ECS.

Prerequisites

To follow along, familiarity with core AWS services such as Amazon EC2 and Amazon ECS is implied.

Provision an ECS cluster of Trn1 instances

To get started, launch the provided CloudFormation template, which will provision required resources such as a VPC, ECS cluster, and EC2 Trainium instance.

We use the Neuron SDK to run deep learning workloads on AWS Inferentia and Trainium-based instances. It supports you in your end-to-end ML development lifecycle to create new models, optimize them, then deploy them for production. To train your model with Trainium, you need to install the Neuron SDK on the EC2 instances where the ECS tasks will run to map the NeuronDevice associated with the hardware, as well as the Docker image that will be pushed to Amazon ECR to access the commands to train your model.

Standard versions of Amazon Linux 2 or Ubuntu 20 don’t come with AWS Neuron drivers installed. Therefore, we have two different options.

The first option is to use a Deep Learning Amazon Machine Image (DLAMI) that has the Neuron SDK already installed. A sample is available on the GitHub repo. You can choose a DLAMI based on the opereating system. Then run the following command to get the AMI ID:

aws ec2 describe-images --region us-east-1 --owners amazon --filters 'Name=name,Values=Deep Learning AMI Neuron PyTorch 1.13.? (Amazon Linux 2) ????????' 'Name=state,Values=available' --query 'reverse(sort_by(Images, &CreationDate))[:1].ImageId' --output text

The output will be as follows:

ami-06c40dd4f80434809

This AMI ID can change over time, so make sure to use the command to get the right AMI ID.

Now you can change this AMI ID in the CloudFormation script and use the ready-to-use Neuron SDK. To do this, look for EcsAmiId in Parameters:

"EcsAmiId": {

"Type": "String",

"Description": "AMI ID",

"Default": "ami-09def9404c46ac27c"

}

The second option is to create an instance filling the userdata field during stack creation. You don’t need to install it because CloudFormation will set this up. For more information, refer to the Neuron Setup Guide.

For this post, we use option 2, in case you need to use a custom image. Complete the following steps:

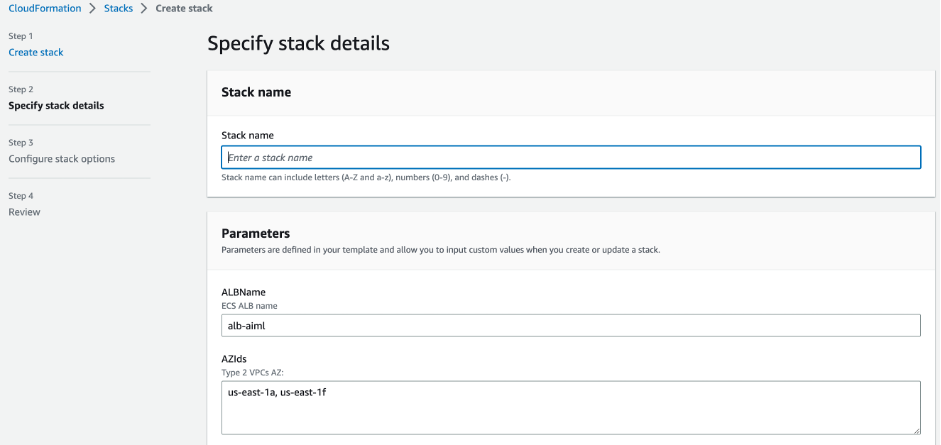

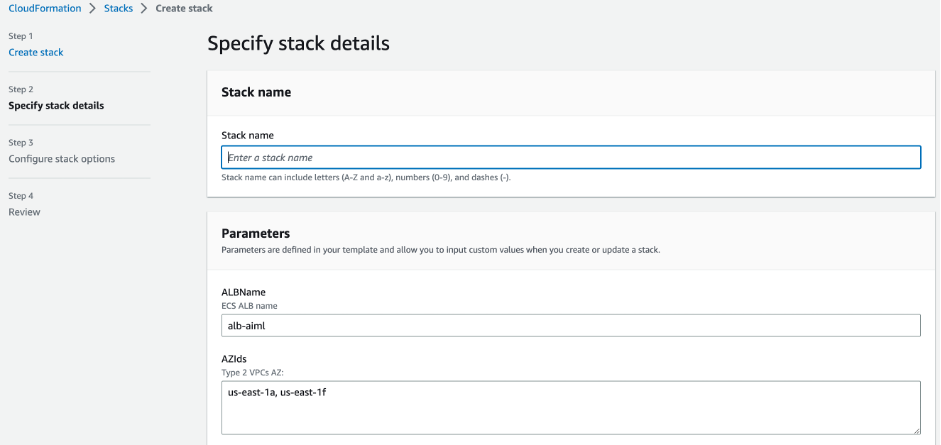

- Launch the provided CloudFormation template.

- For KeyName, enter a name of your desired key pair, and it will preload the parameters. For this post, we use

trainium-key.

- Enter a name for your stack.

- If you’re running in the

us-east-1 Region, you can keep the values for ALBName and AZIds at their default.

-

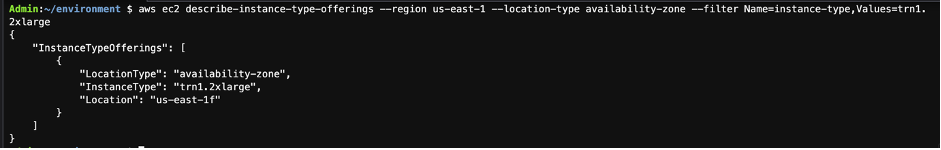

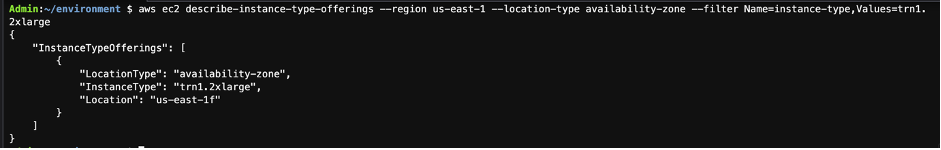

To check what Availability Zone in the Region has Trn1 available, run the following command:

aws ec2 describe-instance-type-offerings --region us-east1 --location-type availability-zone --filter Name=instance-type,Values=trn1.2xlarge

- Choose Next and finish creating the stack.

When the stack is complete, you can move to the next step.

Prepare and push an ECR image with the Neuron SDK

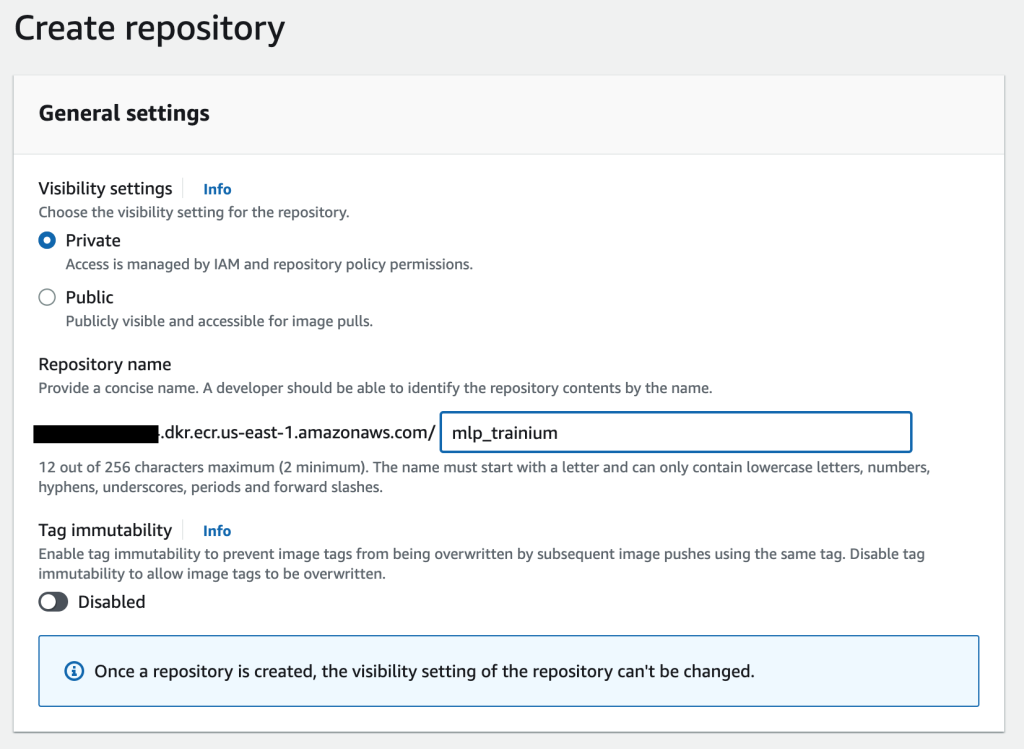

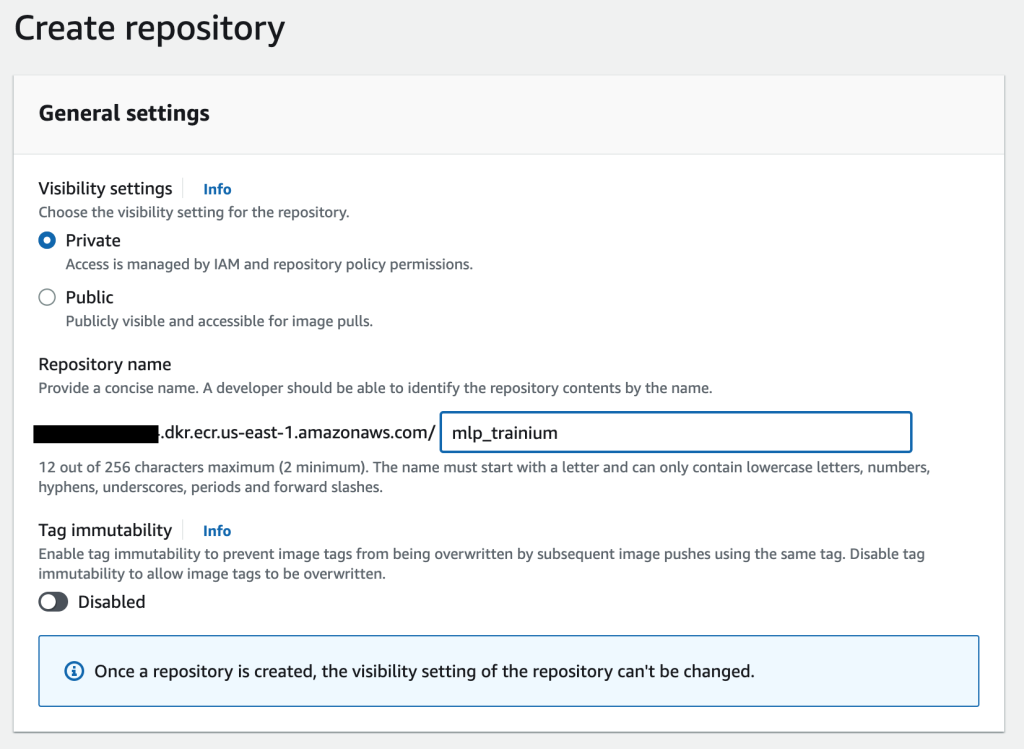

Amazon ECR is a fully managed container registry offering high-performance hosting, so you can reliably deploy application images and artifacts anywhere. We use Amazon ECR to store a custom Docker image containing our scripts and Neuron packages needed to train a model with ECS jobs running on Trn1 instances. You can create an ECR repository using the AWS Command Line Interface (AWS CLI) or AWS Management Console. For this post, we use the console. Complete the following steps:

- On the Amazon ECR console, create a new repository.

- For Visibility settings¸ select Private.

- For Repository name, enter a name.

- Choose Create repository.

Now that you have a repository, let’s build and push an image, which could be built locally (into your laptop) or in a AWS Cloud9 environment. We are training a multi-layer perceptron (MLP) model. For the original code, refer to Multi-Layer Perceptron Training Tutorial.

- Copy the train.py and model.py files into a project.

It’s already compatible with Neuron, so you don’t need to change any code.

- 5. Create a Dockerfile that has the commands to install the Neuron SDK and training scripts:

FROM amazonlinux:2

RUN echo $'[neuron] n

name=Neuron YUM Repository n

baseurl=https://yum.repos.neuron.amazonaws.com n

enabled=1' > /etc/yum.repos.d/neuron.repo

RUN rpm --import https://yum.repos.neuron.amazonaws.com/GPG-PUB-KEY-AMAZON-AWS-NEURON.PUB

RUN yum install aws-neuronx-collectives-2.* -y

RUN yum install aws-neuronx-runtime-lib-2.* -y

RUN yum install aws-neuronx-tools-2.* -y

RUN yum install -y tar gzip pip

RUN yum install -y python3 python3-pip

RUN yum install -y python3.7-venv gcc-c++

RUN python3.7 -m venv aws_neuron_venv_pytorch

# Activate Python venv

ENV PATH="/aws_neuron_venv_pytorch/bin:$PATH"

RUN python -m pip install -U pip

RUN python -m pip install wget

RUN python -m pip install awscli

RUN python -m pip config set global.extra-index-url https://pip.repos.neuron.amazonaws.com

RUN python -m pip install torchvision tqdm torch-neuronx neuronx-cc==2.* pillow

RUN mkdir -p /opt/ml/mnist_mlp

COPY model.py /opt/ml/mnist_mlp/model.py

COPY train.py /opt/ml/mnist_mlp/train.py

RUN chmod +x /opt/ml/mnist_mlp/train.py

CMD ["python3", "/opt/ml/mnist_mlp/train.py"]

To create your own Dockerfile using Neuron, refer to Develop on AWS ML accelerator instance, where you can find guides for other OS and ML frameworks.

- 6. Build an image and then push it to Amazon ECR using the following code (provide your Region, account ID, and ECR repository):

aws ecr get-login-password --region us-east-1 | docker login --username AWS --password-stdin {your-account-id}.dkr.ecr.{your-region}.amazonaws.com

docker build -t mlp_trainium .

docker tag mlp_trainium:latest {your-account-id}.dkr.ecr.us-east-1.amazonaws.com/mlp_trainium:latest

docker push {your-account-id}.dkr.ecr.{your-region}.amazonaws.com/{your-ecr-repo-name}:latest

After this, your image version should be visible in the ECR repository that you created.

Run the ML training job as an ECS task

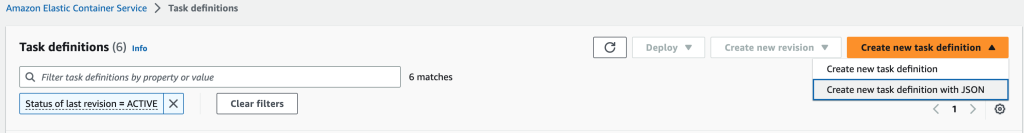

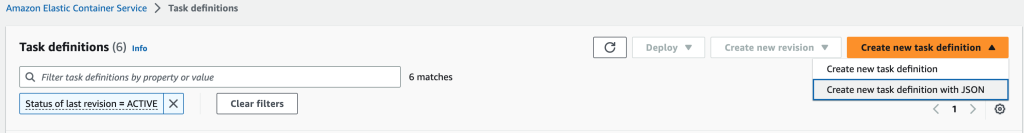

To run the ML training task on Amazon ECS, you first need to create a task definition. A task definition is required to run Docker containers in Amazon ECS.

- On the Amazon ECS console, choose Task definitions in the navigation pane.

- On the Create new task definition menu, choose Create new task definition with JSON.

You can use the following task definition template as a baseline. Note that in the image field, you can use the one generated in the previous step. Make sure it includes your account ID and ECR repository name.

To make sure that Neuron is installed, you can check if the volume /dev/neuron0 is mapped in the devices block. This maps to a single NeuronDevice running on the trn1.2xlarge instance with two cores.

- Create your task definition using the following template:

{

"family": "mlp_trainium",

"containerDefinitions": [

{

"name": "mlp_trainium",

"image": "{your-account-id}.dkr.ecr.us-east-1.amazonaws.com/{your-ecr-repo-name}",

"cpu": 0,

"memoryReservation": 1000,

"portMappings": [],

"essential": true,

"environment": [],

"mountPoints": [],

"volumesFrom": [],

"linuxParameters": {

"capabilities": {

"add": [

"IPC_LOCK"

]

},

"devices": [

{

"hostPath": "/dev/neuron0",

"containerPath": "/dev/neuron0",

"permissions": [

"read",

"write"

]

}

]

},

,

"logConfiguration": {

"logDriver": "awslogs",

"options": {

"awslogs-create-group": "true",

"awslogs-group": "/ecs/task-logs",

"awslogs-region": "us-east-1",

"awslogs-stream-prefix": "ecs"

}

}

}

],

"networkMode": "awsvpc",

"placementConstraints": [

{

"type": "memberOf",

"expression": "attribute:ecs.os-type == linux"

},

{

"type": "memberOf",

"expression": "attribute:ecs.instance-type == trn1.2xlarge"

}

],

"requiresCompatibilities": [

"EC2"

],

"cpu": "1024",

"memory": "3072"

}

You can also complete this step on the AWS CLI using the following task definition or with the following command:

aws ecs register-task-definition

--family mlp-trainium

--container-definitions '[{

"name": "my-container-1",

"image": "{your-account-id}.dkr.ecr.us-east-1.amazonaws.com/{your-ecr-repo-name}",

"cpu": 0,

"memoryReservation": 1000,

"portMappings": [],

"essential": true,

"environment": [],

"mountPoints": [],

"volumesFrom": [],

"logConfiguration": {

"logDriver": "awslogs",

"options": {

"awslogs-create-group": "true",

"awslogs-group": "/ecs/task-logs",

"awslogs-region": "us-east-1",

"awslogs-stream-prefix": "ecs"

}

},

"linuxParameters": {

"capabilities": {

"add": [

"IPC_LOCK"

]

},

"devices": [{

"hostPath": "/dev/neuron0",

"containerPath": "/dev/neuron0",

"permissions": ["read", "write"]

}]

}

}]'

--requires-compatibilities EC2

--cpu "8192"

--memory "16384"

--placement-constraints '[{

"type": "memberOf",

"expression": "attribute:ecs.instance-type == trn1.2xlarge"

}, {

"type": "memberOf",

"expression": "attribute:ecs.os-type == linux"

}]'

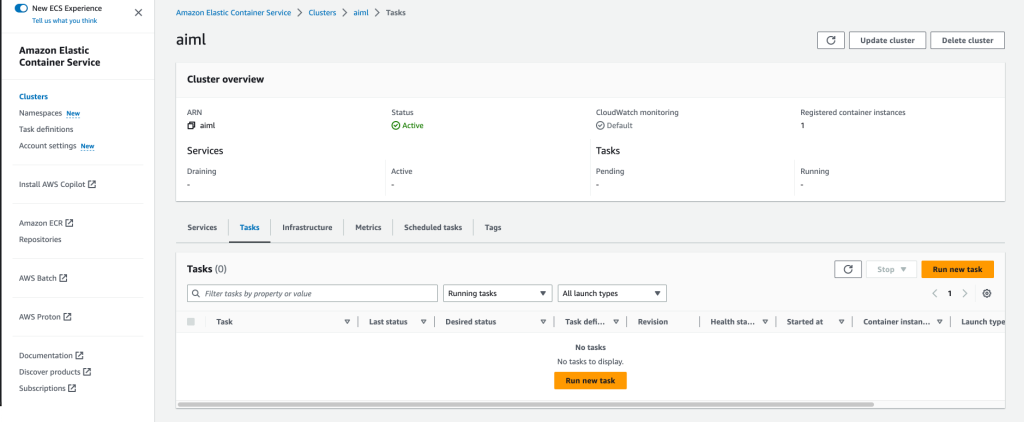

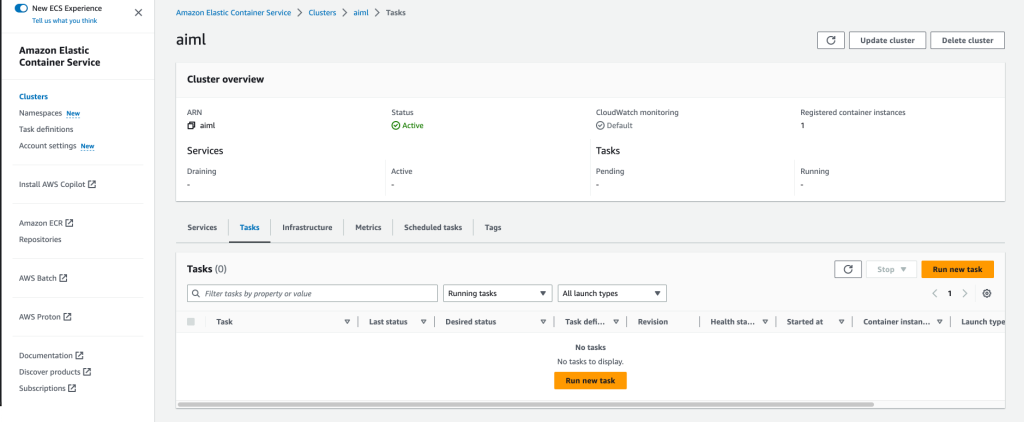

Run the task on Amazon ECS

After we have created the ECS cluster, pushed the image to Amazon ECR, and created the task definition, we run the task definition to train a model on Amazon ECS.

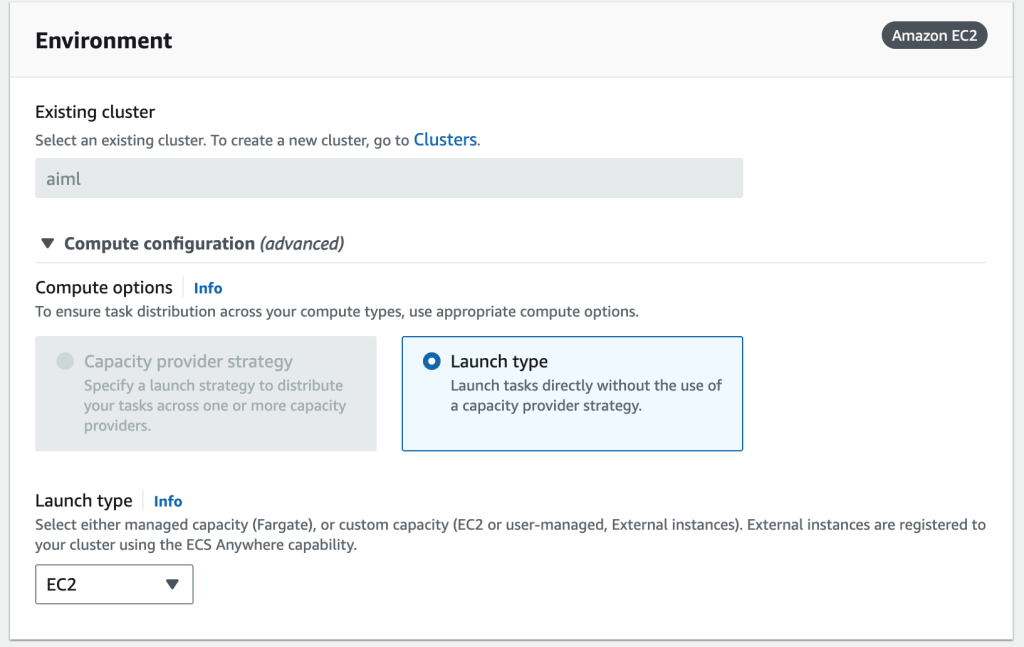

- On the Amazon ECS console, choose Clusters in the navigation pane.

- Open your cluster.

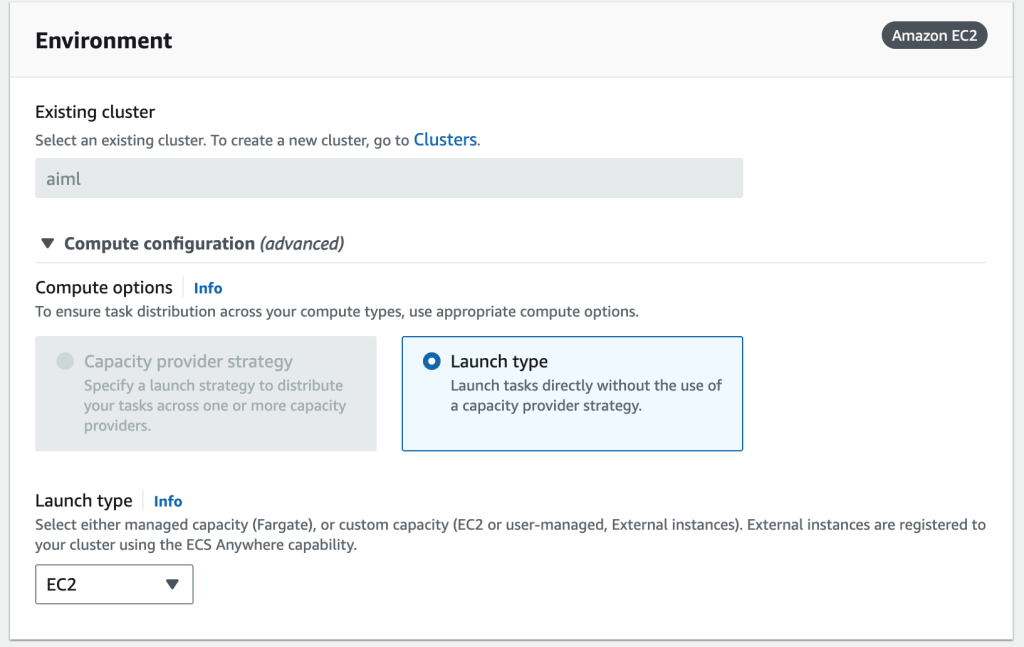

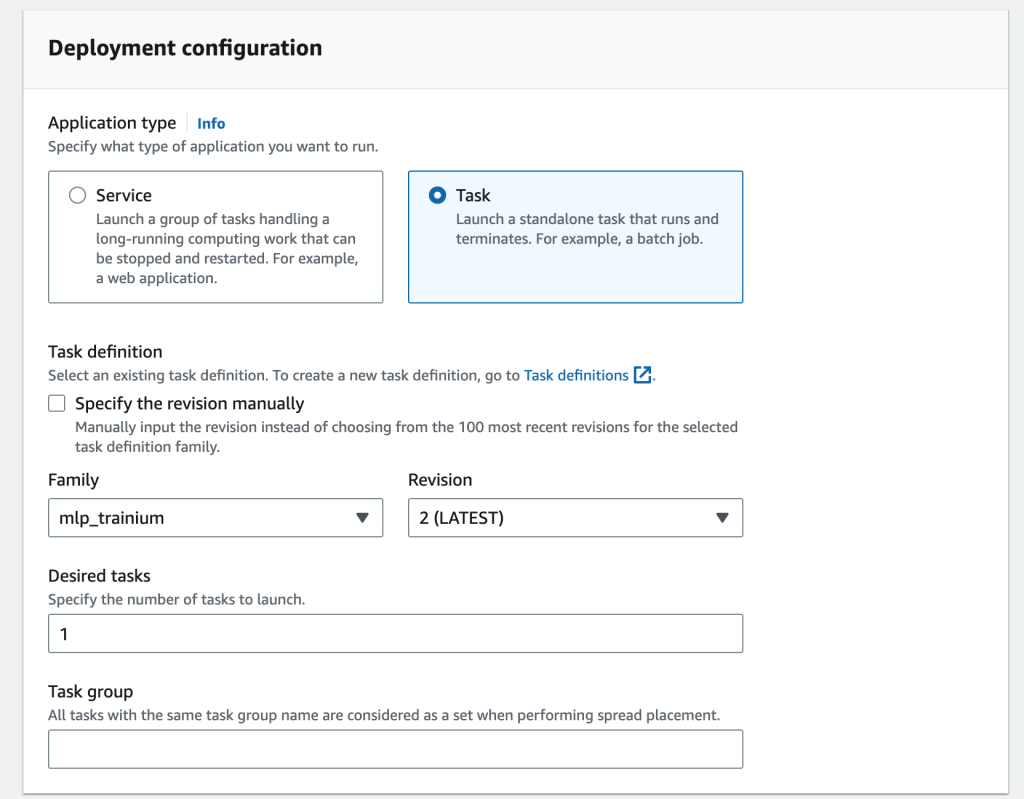

- On the Tasks tab, choose Run new task.

- For Launch type, choose EC2.

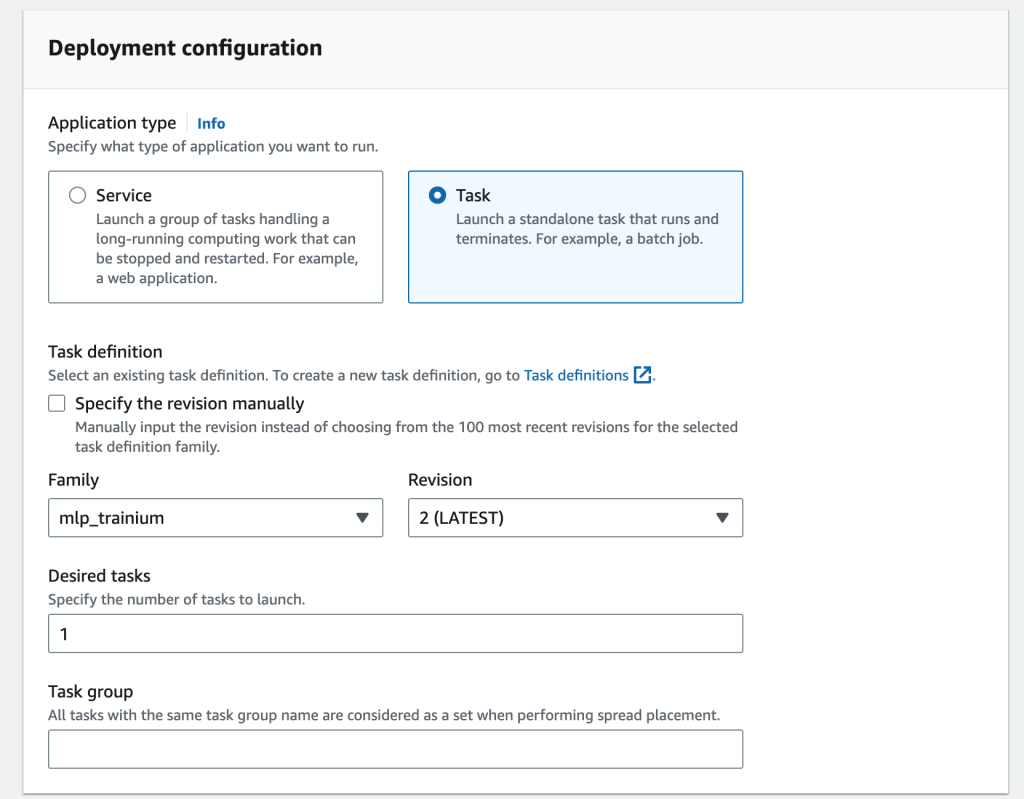

- For Application type, select Task.

- For Family, choose the task definition you created.

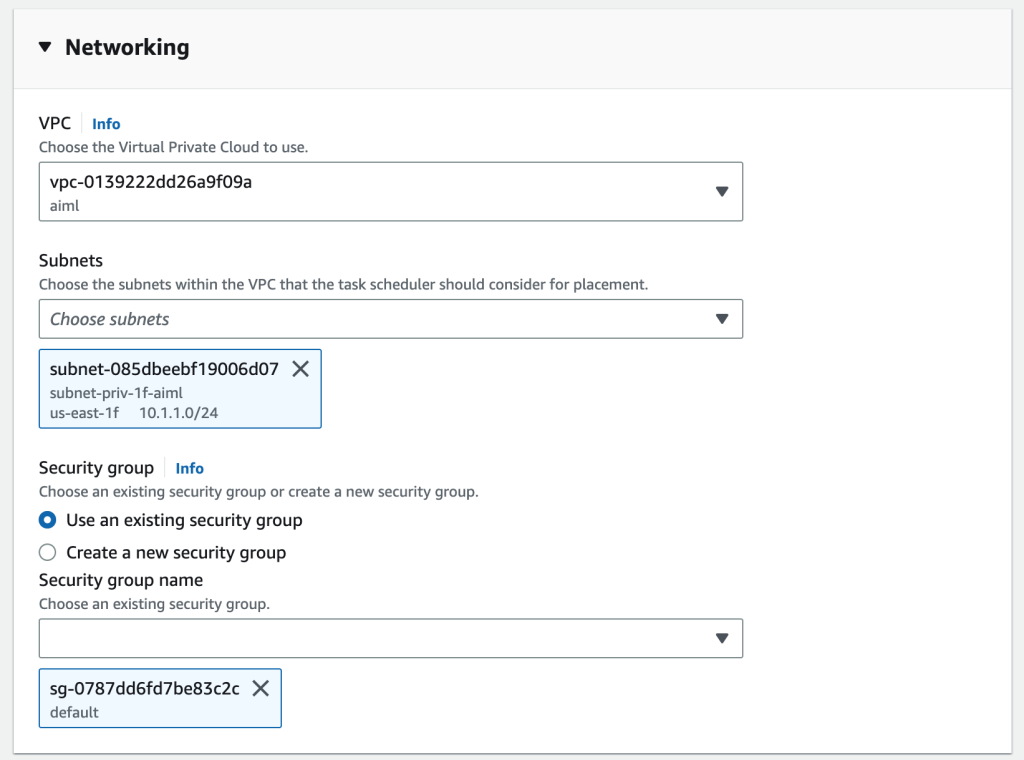

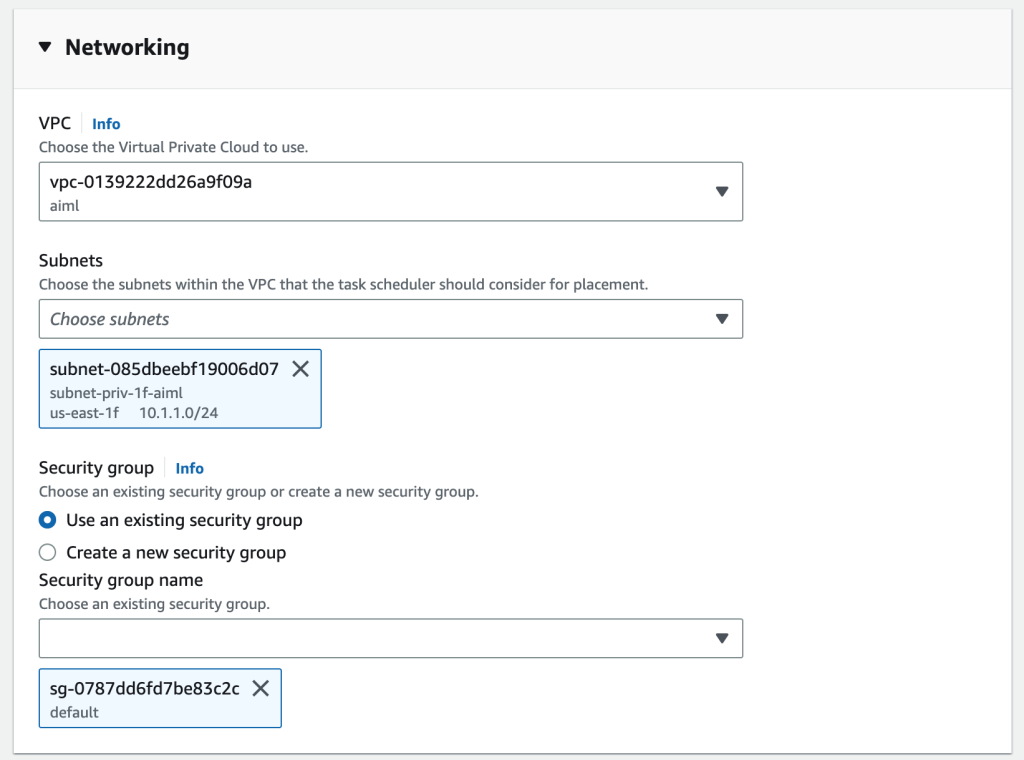

- In the Networking section, specify the VPC created by the CloudFormation stack, subnet, and security group.

- Choose Create.

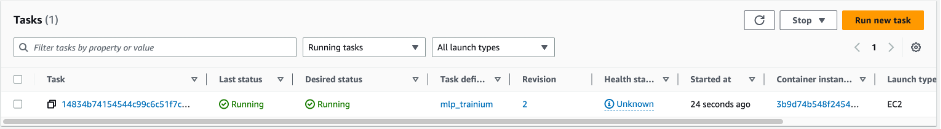

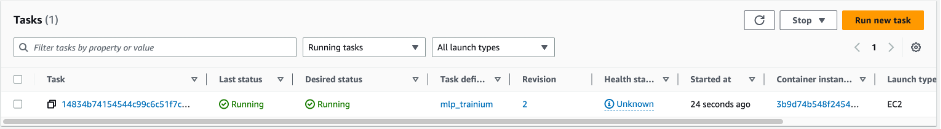

You can monitor your task on the Amazon ECS console.

You can also run the task using the AWS CLI:

aws ecs run-task --cluster <your-cluster-name> --task-definition <your-task-name> --count 1 --network-configuration '{"awsvpcConfiguration": {"subnets": ["<your-subnet-name> "], "securityGroups": ["<your-sg-name> "] }}'

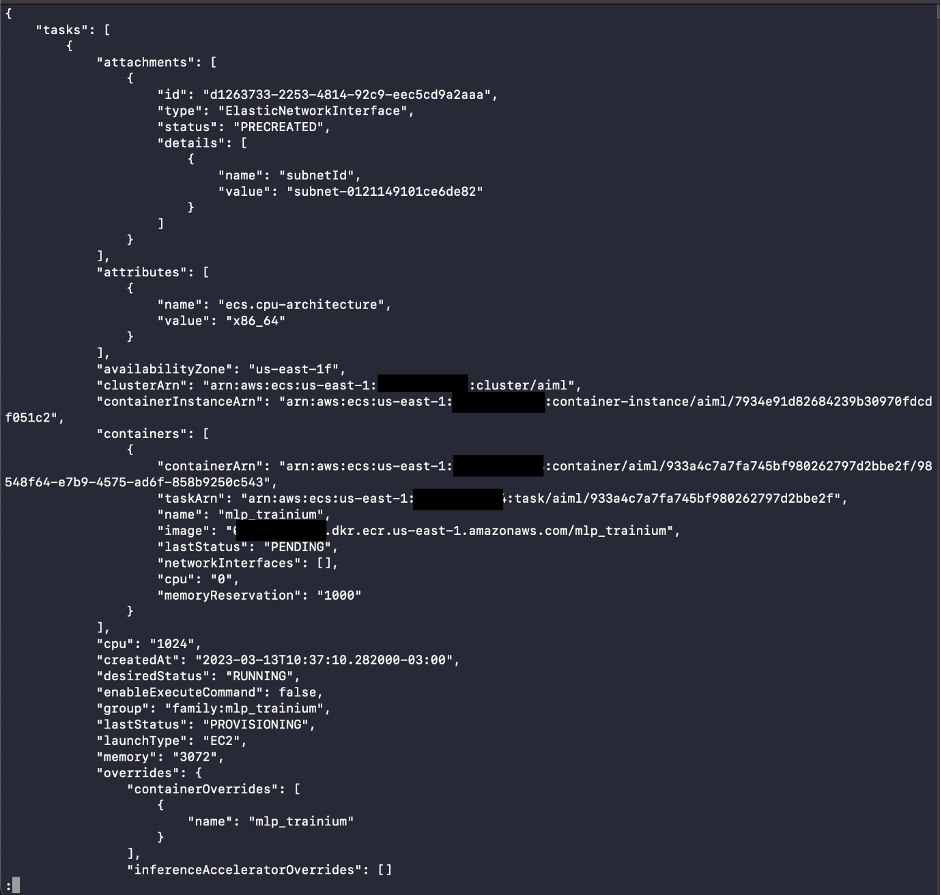

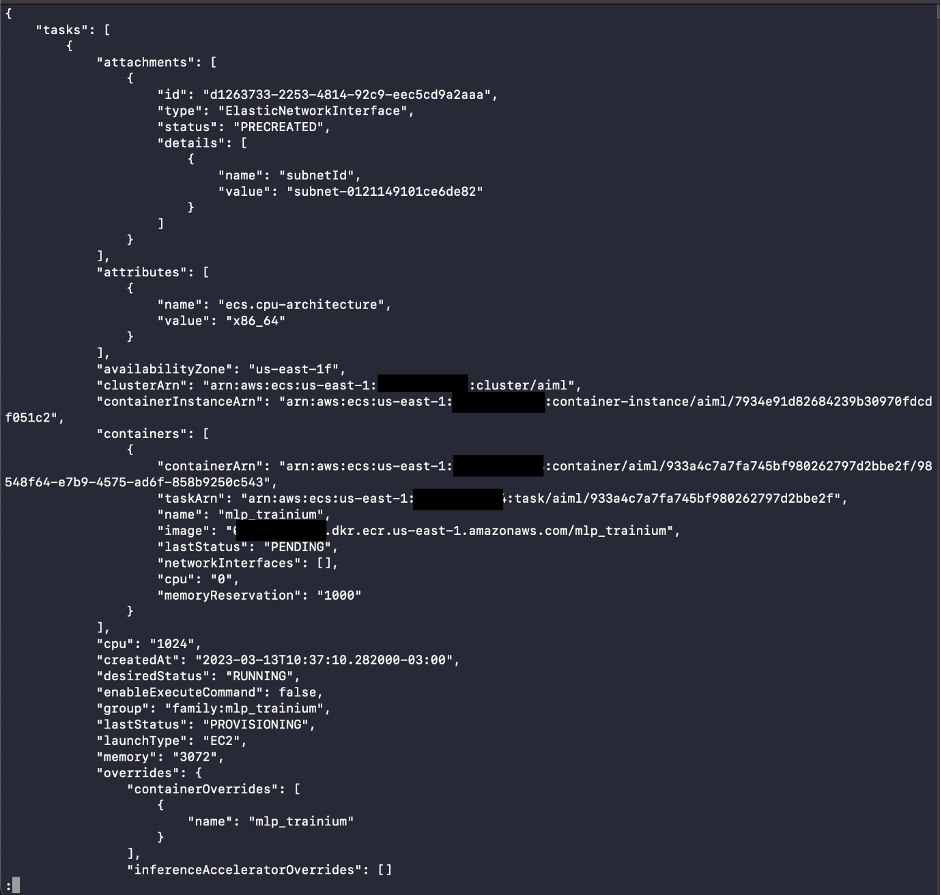

The result will look like the following screenshot.

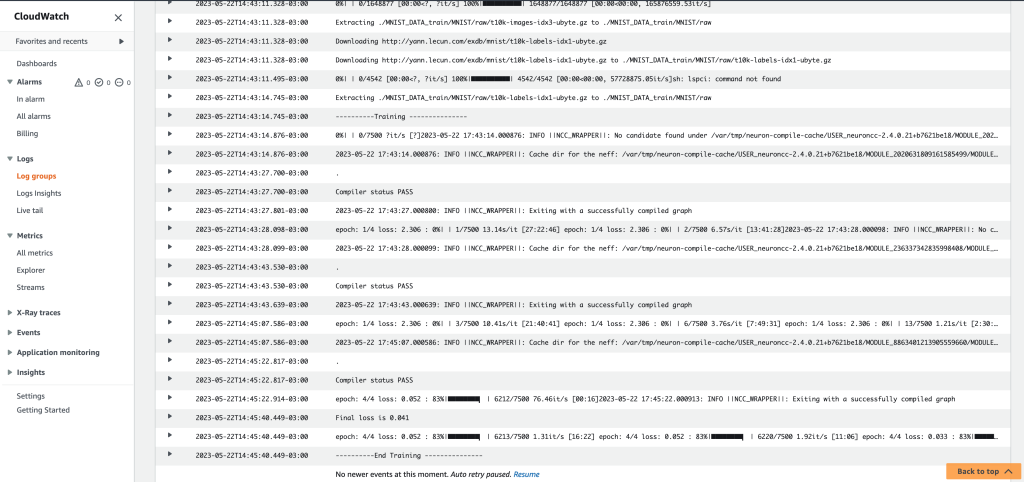

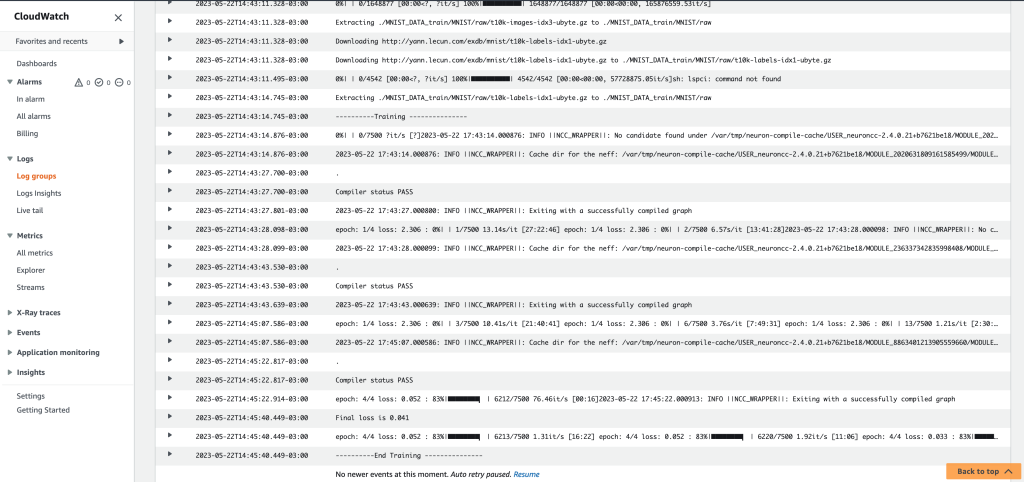

You can also check the details of the training job through the Amazon CloudWatch log group.

After you train your models, you can store them in Amazon Simple Storage Service (Amazon S3).

Clean up

To avoid additional expenses, you can change the Auto Scaling group to Minimum capacity and Desired capacity to zero, to shut down the Trainium instances. To do a complete cleanup, delete the CloudFormation stack to remove all resources created by this template.

Conclusion

In this post, we showed how to use Amazon ECS to deploy your ML training jobs. We created a CloudFormation template to create the ECS cluster of Trn1 instances, built a custom Docker image, pushed it to Amazon ECR, and ran the ML training job on the ECS cluster using a Trainium instance.

For more information about Neuron and what you can do with Trainium, check out the following resources:

About the Authors

Guilherme Ricci is a Senior Startup Solutions Architect on Amazon Web Services, helping startups modernize and optimize the costs of their applications. With over 10 years of experience with companies in the financial sector, he is currently working with a team of AI/ML specialists.

Guilherme Ricci is a Senior Startup Solutions Architect on Amazon Web Services, helping startups modernize and optimize the costs of their applications. With over 10 years of experience with companies in the financial sector, he is currently working with a team of AI/ML specialists.

Evandro Franco is an AI/ML Specialist Solutions Architect working on Amazon Web Services. He helps AWS customers overcome business challenges related to AI/ML on top of AWS. He has more than 15 years working with technology, from software development, infrastructure, serverless, to machine learning.

Evandro Franco is an AI/ML Specialist Solutions Architect working on Amazon Web Services. He helps AWS customers overcome business challenges related to AI/ML on top of AWS. He has more than 15 years working with technology, from software development, infrastructure, serverless, to machine learning.

Matthew McClean leads the Annapurna ML Solution Architecture team that helps customers adopt AWS Trainium and AWS Inferentia products. He is passionate about generative AI and has been helping customers adopt AWS technologies for the last 10 years.

Matthew McClean leads the Annapurna ML Solution Architecture team that helps customers adopt AWS Trainium and AWS Inferentia products. He is passionate about generative AI and has been helping customers adopt AWS technologies for the last 10 years.

Read More

Guilherme Ricci is a Senior Startup Solutions Architect on Amazon Web Services, helping startups modernize and optimize the costs of their applications. With over 10 years of experience with companies in the financial sector, he is currently working with a team of AI/ML specialists.

Guilherme Ricci is a Senior Startup Solutions Architect on Amazon Web Services, helping startups modernize and optimize the costs of their applications. With over 10 years of experience with companies in the financial sector, he is currently working with a team of AI/ML specialists. Evandro Franco is an AI/ML Specialist Solutions Architect working on Amazon Web Services. He helps AWS customers overcome business challenges related to AI/ML on top of AWS. He has more than 15 years working with technology, from software development, infrastructure, serverless, to machine learning.

Evandro Franco is an AI/ML Specialist Solutions Architect working on Amazon Web Services. He helps AWS customers overcome business challenges related to AI/ML on top of AWS. He has more than 15 years working with technology, from software development, infrastructure, serverless, to machine learning. Matthew McClean leads the Annapurna ML Solution Architecture team that helps customers adopt AWS Trainium and AWS Inferentia products. He is passionate about generative AI and has been helping customers adopt AWS technologies for the last 10 years.

Matthew McClean leads the Annapurna ML Solution Architecture team that helps customers adopt AWS Trainium and AWS Inferentia products. He is passionate about generative AI and has been helping customers adopt AWS technologies for the last 10 years.