According to Gartner, hyperautomation is the number one trend in 2022 and will continue to advance in the future. One of the main barriers to hyperautomation lies in areas where we’re still struggling to reduce human involvement. Despite great advances in deep learning for computer vision, intelligent systems have a hard time matching human visual recognition abilities. This is mainly because annotated data is lacking or sparse, particularly in areas such as quality control, where trained human eyes still dominate. Another reason is the feasibility of human access in all areas of the product supply chain, such as quality control inspection on the production line. Visual inspection is widely used for internal and external assessment of equipment in a production facility, such as storage tanks, pressure vessels, piping, and vending machines, and it extends to many industries, including electronics, medical, CPG, and raw materials.

Using Artificial Intelligence (AI) for automated visual inspection or augmenting the human visual inspection process with AI can help address the challenges outlined below.

Challenges of human visual inspection

Human-led visual inspection has the following high-level issues:

- Scale – Most products go through multiple stages, from assembly to supply chain to quality control, before being made available to the end consumer. Defects can occur during the manufacturing process or assembly at different points in space and time. Therefore, it’s not always feasible or cost-effective to use in-person human visual inspection. This inability to scale can result in disasters such as the BP Deepwater Horizon oil spill and Challenger space shuttle explosion, the overall negative impact of which (to humans and nature) overshoots the monetary cost by quite a distance.

- Human visual error – In areas where human-led visual inspection can be conveniently performed, human error is a major factor that often goes overlooked. According to the following report, most inspection tasks are complex and typically exhibit error rates of 20–30%, which directly translates to cost and undesirable outcomes.

- Personnel and miscellaneous costs – Although the overall cost of quality control can vary greatly depending on industry and location, according to some estimates, a trained quality inspector’s salary ranges between $26,000–60,000 (USD) per year. There are also other miscellaneous costs that may not always be accounted for.

SageMaker JumpStart is a great place to get started with various Amazon SageMaker features and capabilities. It offers curated one-click solutions, example notebooks, and pre-trained computer vision, natural language processing, and tabular data models that you can choose, fine-tune (if needed), and deploy using Amazon SageMaker infrastructure.

In this post, we walk through how to quickly deploy an automated defect detection solution, from data ingestion to model inferencing, using a publicly available dataset and SageMaker JumpStart.

Solution overview

This solution uses a state-of-the-art deep learning approach to automatically detect surface defects using SageMaker. The Defect Detection Network (DDN) model enhances Faster R-CNN and identifies possible defects in an image of a steel surface. The NEU surface defect database is a balanced dataset that contains six kinds of typical surface defects of a hot-rolled steel strip: rolled-in scale (RS), patches (Pa), crazing (Cr), pitted surface (PS), inclusion (In), and scratches (Sc). The database includes 1,800 grayscale images: 300 samples of each type of defect.

Content

The JumpStart solution contains the following artifacts, which are available to you from the JupyterLab File Browser:

- cloudformation/ – AWS CloudFormation configuration files to create relevant SageMaker resources and apply permissions. Also includes cleanup scripts to delete created resources.

- src/ – Contains the following:

  - prepare_data/ – Data preparation for NEU datasets.

  - sagemaker_defect_detection/ – Main package containing the following:

    - dataset – Contains NEU dataset handling.

    - models – Contains Automated Defect Inspection (ADI) System called Defect Detection Network. See the following paper for details.

    - utils – Various utilities for visualization and COCO evaluation.

    - classifier.py – For the classification task.

    - detector.py – For the detection task.

    - transforms.py – Contains the image transformations used in training.

- notebooks/ – The individual notebooks, discussed in more detail later in this post.

- scripts/ – Various scripts for training and building.

Default dataset

This solution trains a classifier on the NEU-CLS dataset and a detector on the NEU-DET dataset. The detection dataset contains 1,800 images and 4,189 bounding boxes in total. The types of defects in our dataset are as follows:

- Crazing (class: Cr, label: 0)

- Inclusion (class: In, label: 1)

- Pitted surface (class: PS, label: 2)

- Patches (class: Pa, label: 3)

- Rolled-in scale (class: RS, label: 4)

- Scratches (class: Sc, label: 5)
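The class-to-label mapping listed above can be written down as a small lookup table, which is handy when turning a model's integer output back into a readable defect name. This is only an illustrative sketch: the names `DEFECT_CLASSES` and `describe_label` are hypothetical and are not taken from the solution's source code. The counts are the ones quoted in this section.

```python
# Label -> (abbreviation, full name), exactly as listed in this section.
# The variable and function names are illustrative, not from the solution.
DEFECT_CLASSES = {
    0: ("Cr", "Crazing"),
    1: ("In", "Inclusion"),
    2: ("PS", "Pitted surface"),
    3: ("Pa", "Patches"),
    4: ("RS", "Rolled-in scale"),
    5: ("Sc", "Scratches"),
}

# Dataset sizes quoted in the text.
TOTAL_IMAGES = 1800
TOTAL_BOXES = 4189


def describe_label(label: int) -> str:
    """Return a readable description for an integer class label."""
    abbreviation, name = DEFECT_CLASSES[label]
    return f"{name} ({abbreviation})"


# Average number of annotated boxes per image in the detection dataset.
avg_boxes_per_image = TOTAL_BOXES / TOTAL_IMAGES

if __name__ == "__main__":
    print(describe_label(3))
    print(f"{avg_boxes_per_image:.2f} boxes per image on average")
```

With 4,189 boxes over 1,800 images, each image carries a little more than two annotated defects on average, so a detector has to handle several defects per image.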

The following are sample images of the six classes.

The following images are sample detection results. From left to right, we have the original image, the ground truth detection, and the SageMaker DDN model output.
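A common way to quantify how closely a predicted box matches a ground truth box is intersection over union (IoU), which is also the basis of COCO-style evaluation. The sketch below is a generic illustration and not the solution's own evaluation code. It assumes boxes are given as `(x_min, y_min, x_max, y_max)` tuples, and the function name `box_iou` is hypothetical.

```python
from typing import Sequence


def box_iou(box_a: Sequence[float], box_b: Sequence[float]) -> float:
    """Intersection over union of two axis-aligned boxes.

    Boxes are assumed to be (x_min, y_min, x_max, y_max).
    """
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlapping region (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - intersection

    return intersection / union if union > 0 else 0.0


if __name__ == "__main__":
    ground_truth = (0, 0, 10, 10)
    prediction = (5, 5, 15, 15)
    print(box_iou(ground_truth, prediction))
```

An IoU of 1.0 means the two boxes coincide, and 0.0 means they don't overlap at all. Evaluation protocols typically count a detection as correct when its IoU with a ground truth box exceeds a chosen threshold.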

Architecture

The JumpStart solution comes pre-packaged with Amazon SageMaker Studio notebooks that download the required datasets and contain the code and helper functions for training the models and deploying them to a real-time SageMaker endpoint.

All notebooks download the dataset from a public Amazon Simple Storage Service (Amazon S3) bucket and import helper functions to visualize the images. The notebooks let you customize the solution, for example by changing the hyperparameters for model training or by performing transfer learning if you choose to apply the solution to your own defect detection use case.

The solution contains the following four Studio notebooks:

- 0_demo.ipynb – Creates a model object from a pre-trained DDN model on the NEU-DET dataset and deploys it behind a real-time SageMaker endpoint. Then we send some image samples with defects for detection and visualize the results.

- 1_retrain_from_checkpoint.ipynb – Retrains our pre-trained detector for a few more epochs and compares results. You can also bring your own dataset; however, we use the same dataset in the notebook. Also included is a step to perform transfer learning by fine-tuning the pre-trained model. Fine-tuning a deep learning model on one particular task involves using the learned weights from a particular dataset to enhance the performance of the model on another dataset. You can also perform fine-tuning over the same dataset used in the initial training but perhaps with different hyperparameters.

- 2_detector_from_scratch.ipynb – Trains our detector from scratch to identify if defects exist in an image.

- 3_classification_from_scratch.ipynb – Trains our classifier from scratch to classify the type of defect in an image.

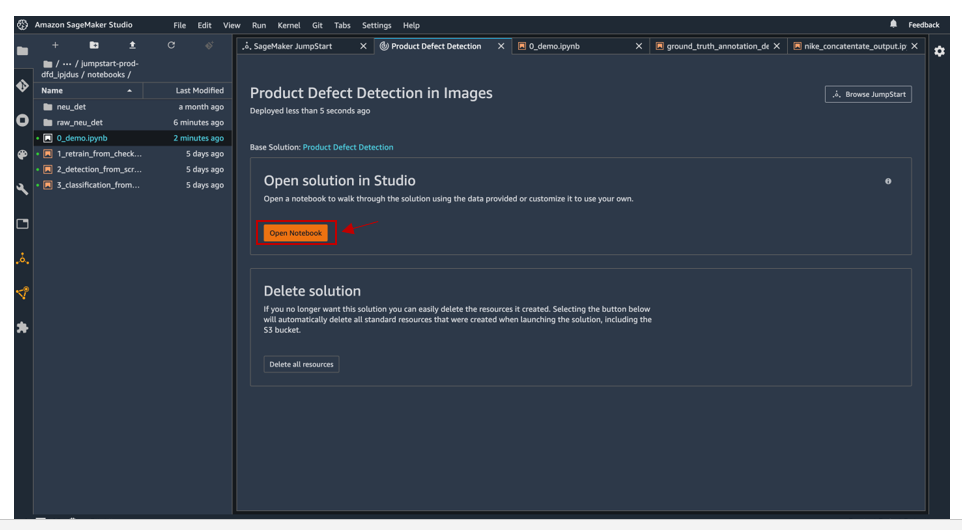

Each notebook contains boilerplate code that deploys a SageMaker real-time endpoint for model inferencing. You can view the list of notebooks by going to the JupyterLab file browser and navigating to the “notebooks” folder in the JumpStart solution directory, or by choosing “Open Notebook” on the “Product Defect Detection” solution page in JumpStart (see below).

Prerequisites

The solution outlined in this post is part of Amazon SageMaker JumpStart. To run this SageMaker JumpStart 1P solution and have the infrastructure deployed to your AWS account, you need an active Amazon SageMaker Studio instance (see Onboard to Amazon SageMaker Domain).

JumpStart features are not available in SageMaker notebook instances, and you can’t access them through the AWS Command Line Interface (AWS CLI).

Deploy the solution

We provide walkthrough videos for the high-level steps on this solution. To start, launch SageMaker JumpStart and choose the Product Defect Detection solution on the Solutions tab.

The provided SageMaker notebooks download the input data and launch the later stages. The input data is located in an S3 bucket.

We train the classifier and detector models and evaluate the results in SageMaker. If desired, you can deploy the trained models and create SageMaker endpoints.

The SageMaker endpoint created from the previous step is an HTTPS endpoint and is capable of producing predictions.
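As a rough illustration of calling such an endpoint from Python, the following sketch wraps the `invoke_endpoint` call of the boto3 `sagemaker-runtime` client. The helper name `detect_defects`, the `application/x-image` content type, and the assumption that the endpoint returns a JSON body are all illustrative. Check the solution notebooks for the request and response format the deployed model actually uses.

```python
import json


def detect_defects(runtime_client, endpoint_name, image_bytes,
                   content_type="application/x-image"):
    """Send raw image bytes to a real-time endpoint and parse the reply.

    `runtime_client` is expected to be a boto3 "sagemaker-runtime" client.
    The content type and the JSON response format are assumptions.
    """
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType=content_type,
        Body=image_bytes,
    )
    # The response body is a stream; read it fully and decode as JSON.
    return json.loads(response["Body"].read())


# Example usage (requires AWS credentials and a deployed endpoint;
# the endpoint name and file name below are placeholders):
#
#   import boto3
#   runtime = boto3.client("sagemaker-runtime")
#   with open("sample.jpg", "rb") as f:
#       predictions = detect_defects(runtime, "my-defect-endpoint", f.read())
```

Passing the client in as an argument keeps the helper easy to exercise without AWS access, because a stub object with an `invoke_endpoint` method can stand in for the real client.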

You can monitor the model training and deployment via Amazon CloudWatch.

Clean up

When you’re finished with this solution, make sure that you delete all unwanted AWS resources. You can use AWS CloudFormation to automatically delete all standard resources that were created by the solution and notebook. On the AWS CloudFormation console, delete the parent stack. Deleting the parent stack automatically deletes the nested stacks.

You need to manually delete any extra resources that you may have created in this notebook, such as extra S3 buckets in addition to the solution’s default bucket or extra SageMaker endpoints (using a custom name).
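If you prefer to script the cleanup rather than use the console, the two steps above could look roughly like the following. This is a sketch under stated assumptions: the helper names are hypothetical, the stack and endpoint names are placeholders you would need to look up in your own account, and the clients are assumed to be boto3 `cloudformation` and `sagemaker` clients.

```python
def delete_solution_stack(cfn_client, stack_name, wait=True):
    """Delete the parent CloudFormation stack (nested stacks go with it).

    `cfn_client` is expected to be a boto3 "cloudformation" client.
    """
    cfn_client.delete_stack(StackName=stack_name)
    if wait:
        # Block until CloudFormation reports that the stack is gone.
        waiter = cfn_client.get_waiter("stack_delete_complete")
        waiter.wait(StackName=stack_name)


def delete_extra_endpoints(sagemaker_client, endpoint_names):
    """Delete endpoints you created yourself under custom names.

    `sagemaker_client` is expected to be a boto3 "sagemaker" client.
    Returns the list of endpoint names for which deletion was requested.
    """
    deleted = []
    for name in endpoint_names:
        sagemaker_client.delete_endpoint(EndpointName=name)
        deleted.append(name)
    return deleted


# Example usage (placeholder names; requires AWS credentials):
#
#   import boto3
#   delete_solution_stack(boto3.client("cloudformation"), "my-parent-stack")
#   delete_extra_endpoints(boto3.client("sagemaker"), ["my-custom-endpoint"])
```

Note that deleting an endpoint this way does not remove its endpoint configuration or model, and extra S3 buckets still have to be emptied and deleted separately.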

Conclusion

In this post, we introduced a solution using SageMaker JumpStart to address issues with the current state of visual inspection, quality control, and defect detection in various industries. We recommended a novel approach, the Automated Defect Inspection system, built using a pre-trained DDN model for defect detection on steel surfaces. After you launched the JumpStart solution and downloaded the public NEU datasets, you deployed a pre-trained model behind a SageMaker real-time endpoint and analyzed the endpoint metrics using CloudWatch. We also discussed other features of the JumpStart solution, such as how to bring your own training data, perform transfer learning, and retrain the detector and classifier.

Try out this JumpStart solution on SageMaker Studio, either by retraining the existing model on a new dataset for defect detection or by picking from SageMaker JumpStart’s library of computer vision, NLP, or tabular models and deploying them for your specific use case.

About the Authors

Vedant Jain is a Sr. AI/ML Specialist Solutions Architect, helping customers derive value out of the Machine Learning ecosystem at AWS. Prior to joining AWS, Vedant has held ML/Data Science Specialty positions at various companies such as Databricks, Hortonworks (now Cloudera) & JP Morgan Chase. Outside of his work, Vedant is passionate about making music, using Science to lead a meaningful life & exploring delicious vegetarian cuisine from around the world.


Tao Sun is an Applied Scientist in AWS. He obtained his Ph.D. in Computer Science from University of Massachusetts, Amherst. His research interests lie in deep reinforcement learning and probabilistic modeling. He contributed to AWS DeepRacer, AWS DeepComposer. He likes ballroom dance and reading during his spare time.
