Posted by Shilpa Kancharla

Video data is rich in information and has a larger, more complex structure than image data. Classifying videos in a memory-efficient way with deep learning can help us better understand their contents. On tensorflow.org, we have published a series of tutorials on how to load, preprocess, and classify video data. Here are quick links to each of these tutorials:

- Load video data

- Video classification with a 3D convolutional neural network

- MoViNet for streaming action recognition

- Transfer learning for video classification with MoViNet


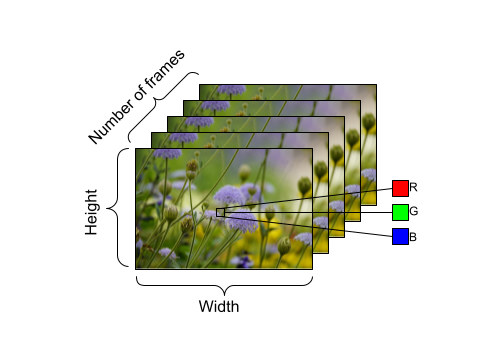

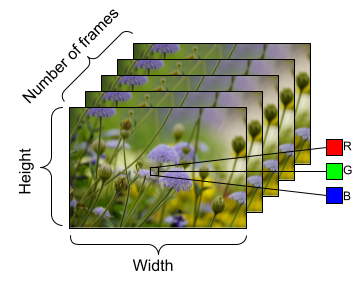

Example of the shape of video data, with the following dimensions: number of frames (time) x height x width x channels.

FrameGenerator to load video data

From the Load video data tutorial, let’s talk about the main workhorse of most of these tutorials: the FrameGenerator class. This class yields the tensor representation of each video together with its label, or class.

class FrameGenerator:
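The class body is not reproduced here. As a rough illustration of the pattern only, the following is a minimal sketch of a callable generator class that lazily yields (frames, label) pairs. The names `ToyFrameGenerator` and `sample_frame_indices`, the in-memory lists standing in for decoded video files, and the choice to repeat the last frame of a short video are all assumptions made for this example, not the tutorial's implementation.

```python
import random


def sample_frame_indices(total_frames, n_frames, frame_step, rng=random):
    """Pick n_frames indices spaced frame_step apart, from a random start."""
    needed = 1 + (n_frames - 1) * frame_step
    max_start = max(total_frames - needed, 0)
    start = rng.randint(0, max_start)
    # Assumption: if the video is too short, repeat its last frame.
    return [min(start + i * frame_step, total_frames - 1) for i in range(n_frames)]


class ToyFrameGenerator:
    """Hypothetical stand-in for FrameGenerator: yields (frames, label) lazily."""

    def __init__(self, videos, n_frames, frame_step=1, training=False, seed=0):
        # videos: list of (frame_list, class_name) pairs; real code would
        # hold file paths and decode frames on demand instead.
        self.videos = list(videos)
        self.n_frames = n_frames
        self.frame_step = frame_step
        self.training = training
        self.rng = random.Random(seed)
        class_names = sorted({name for _, name in self.videos})
        self.class_ids = {name: idx for idx, name in enumerate(class_names)}

    def __call__(self):
        order = list(range(len(self.videos)))
        if self.training:
            self.rng.shuffle(order)  # shuffle only when training
        for i in order:
            frames, name = self.videos[i]
            indices = sample_frame_indices(
                len(frames), self.n_frames, self.frame_step, self.rng)
            # Only one video's sampled frames exist in memory at a time.
            yield [frames[j] for j in indices], self.class_ids[name]


videos = [
    ([f"a{i}" for i in range(20)], "jump"),
    ([f"b{i}" for i in range(5)], "dive"),
]
generator = ToyFrameGenerator(videos, n_frames=4, frame_step=2)
samples = list(generator())
```

Because the instance is callable and returns a fresh iterator on each call, an object of this shape can be handed to an API that expects a generator function.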

After creating the generator class, we use tf.data.Dataset.from_generator() to feed the data into our deep learning models. Specifically, the from_generator() API creates a dataset whose elements are produced by a generator. Using Python generators can be more memory-efficient than storing an entire sequence of data in memory. Consider creating a generator class similar to FrameGenerator and using the from_generator() API to load data into your TensorFlow and Keras models.

output_signature = (tf.TensorSpec(shape = (None, None, None, 3), dtype = tf.float32),
                    tf.TensorSpec(shape = (), dtype = tf.int16))
train_ds = tf.data.Dataset.from_generator(FrameGenerator(subset_paths['train'], 10, training=True),
                                          output_signature = output_signature)
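To try the from_generator() pattern without any video files, here is a small self-contained sketch. The `SyntheticFrameGenerator` class is hypothetical and yields random arrays in place of decoded frames; its sizes and the number of classes are arbitrary values chosen for the example. Only the `tf.TensorSpec` and `tf.data.Dataset.from_generator` calls mirror the usage shown above.

```python
import numpy as np
import tensorflow as tf


class SyntheticFrameGenerator:
    """Hypothetical stand-in: yields random 'videos' instead of decoding files."""

    def __init__(self, num_videos, n_frames, height=32, width=32,
                 num_classes=3, seed=0):
        self.num_videos = num_videos
        self.n_frames = n_frames
        self.height = height
        self.width = width
        self.num_classes = num_classes
        self.seed = seed

    def __call__(self):
        rng = np.random.default_rng(self.seed)
        for _ in range(self.num_videos):
            # One video: (frames, height, width, channels), float32 in [0, 1).
            frames = rng.random(
                (self.n_frames, self.height, self.width, 3), dtype=np.float32)
            label = int(rng.integers(self.num_classes))
            yield frames, label


# First spec describes the video tensor, second the scalar label.
output_signature = (
    tf.TensorSpec(shape=(None, None, None, 3), dtype=tf.float32),
    tf.TensorSpec(shape=(), dtype=tf.int16),
)

ds = tf.data.Dataset.from_generator(
    SyntheticFrameGenerator(num_videos=8, n_frames=10),
    output_signature=output_signature,
)
ds = ds.batch(2)

frames, labels = next(iter(ds))
print(frames.shape)  # (2, 10, 32, 32, 3)
```

Each video is produced only when the dataset asks for it, so memory use is bounded by the batch size rather than by the size of the whole dataset.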

einops library for resizing video data

We use the functions parse_shape() and rearrange() from the einops library. The parse_shape() function maps the names of the axes to their corresponding lengths and returns this information as a dictionary, which we store in old_shape. Next, we use the rearrange() function, which reorders the axes of a multidimensional tensor. Pass in the tensor along with a pattern that names the axes you want to rearrange.

The notation b t h w c -> (b t) h w c means we want to merge the batch size (denoted by b) and time (denoted by t) dimensions into a single axis, so that the data can be passed into the Keras Resizing layer, which operates on batches of images. When we instantiate the ResizeVideo class, we pass in the height and width that we want to resize each frame to. Once the resizing is complete, we use the rearrange() function again to split the merged axis back into the batch size and time dimensions (using the notation (b t) h w c -> b t h w c), supplying the original length of t from old_shape so that einops knows how to divide it.

class ResizeVideo(keras.layers.Layer):

What’s next?

These are just a few ways you can leverage TensorFlow to work with video data in a memory-efficient manner, but such techniques aren’t limited to video data. Medical data such as MRI scans, or other 3D image data, also requires efficient data loading and, in some cases, resizing. These techniques could prove useful when you are working with limited computational resources. We hope you find these tutorials helpful, and thank you for reading!