The head of Amazon Web Services’ quantum communication program on the Nobel winners’ influence on her field.

What Is Green Computing?

Everyone wants green computing.

Mobile users demand maximum performance and battery life. Businesses and governments increasingly require systems that are powerful yet environmentally friendly. And cloud services must respond to global demands without making the grid stutter.

For these reasons and more, green computing has evolved rapidly over the past three decades, and it’s here to stay.

What Is Green Computing?

Green computing, or sustainable computing, is the practice of maximizing energy efficiency and minimizing environmental impact in the ways computer chips, systems and software are designed and used.

Also called green information technology, green IT or sustainable IT, green computing spans concerns across the supply chain, from the raw materials used to make computers to how systems get recycled.

In their working lives, green computers must deliver the most work for the least energy, typically measured by performance per watt.
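As a rough illustration of the metric, performance per watt is simply useful work divided by the power drawn while doing it. The sketch below assumes two hypothetical systems with made-up throughput and power figures; neither corresponds to a real product.

```python
def performance_per_watt(ops_per_second: float, avg_power_watts: float) -> float:
    """Return throughput per watt, e.g. floating-point operations per second per watt."""
    if avg_power_watts <= 0:
        raise ValueError("average power must be positive")
    return ops_per_second / avg_power_watts


# Hypothetical figures, chosen only to illustrate the comparison.
system_a = performance_per_watt(2.0e15, 40_000)  # 2 petaflops at 40 kW
system_b = performance_per_watt(1.0e15, 10_000)  # 1 petaflops at 10 kW

# System B is slower in absolute terms but does twice the work per watt.
print(f"A: {system_a / 1e9:.0f} gigaflops/W, B: {system_b / 1e9:.0f} gigaflops/W")
```

By this measure the slower system is the greener one, which is why the metric is tracked separately from raw speed.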

Why Is Green Computing Important?

Green computing is a significant tool to combat climate change, the existential threat of our time.

Global temperatures have risen about 1.2°C over the last century. As a result, ice caps are melting, causing sea levels to rise about 20 centimeters and increasing the number and severity of extreme weather events.

The rising use of electricity is one of the causes of global warming. Data centers represent a small fraction of total electricity use, about 1% or 200 terawatt-hours per year, but they’re a growing factor that demands attention.

Powerful, energy-efficient computers are part of the solution. They’re advancing science and our quality of life, including the ways we understand and respond to climate change.

What Are the Elements of Green Computing?

Engineers know green computing is a holistic discipline.

“Energy efficiency is a full-stack issue, from the software down to the chips,” said Sachin Idgunji, co-chair of the power working group for the industry’s MLPerf AI benchmark and a distinguished engineer working on performance analysis at NVIDIA.

For example, in one analysis he found NVIDIA DGX A100 systems delivered a nearly 5x improvement in energy efficiency in scale-out AI training benchmarks compared to the prior generation.

“My primary role is analyzing and improving energy efficiency of AI applications at everything from the GPU and the system node to the full data center scale,” he said.

Idgunji’s work is a job description for a growing cadre of engineers building products from smartphones to supercomputers.

What’s the History of Green Computing?

Green computing hit the public spotlight in 1992, when the U.S. Environmental Protection Agency launched Energy Star, a program for identifying consumer electronics that met standards in energy efficiency.

A 2017 report found nearly 100 government and industry programs across 22 countries promoting what it called green ICTs, sustainable information and communication technologies.

One such organization, the Green Electronics Council, provides the Electronic Product Environmental Assessment Tool, a registry of systems and their energy-efficiency levels. The council claims it’s saved nearly 400 million megawatt-hours of electricity through use of 1.5 billion green products it’s recommended to date.

Work on green computing continues across the industry at every level.

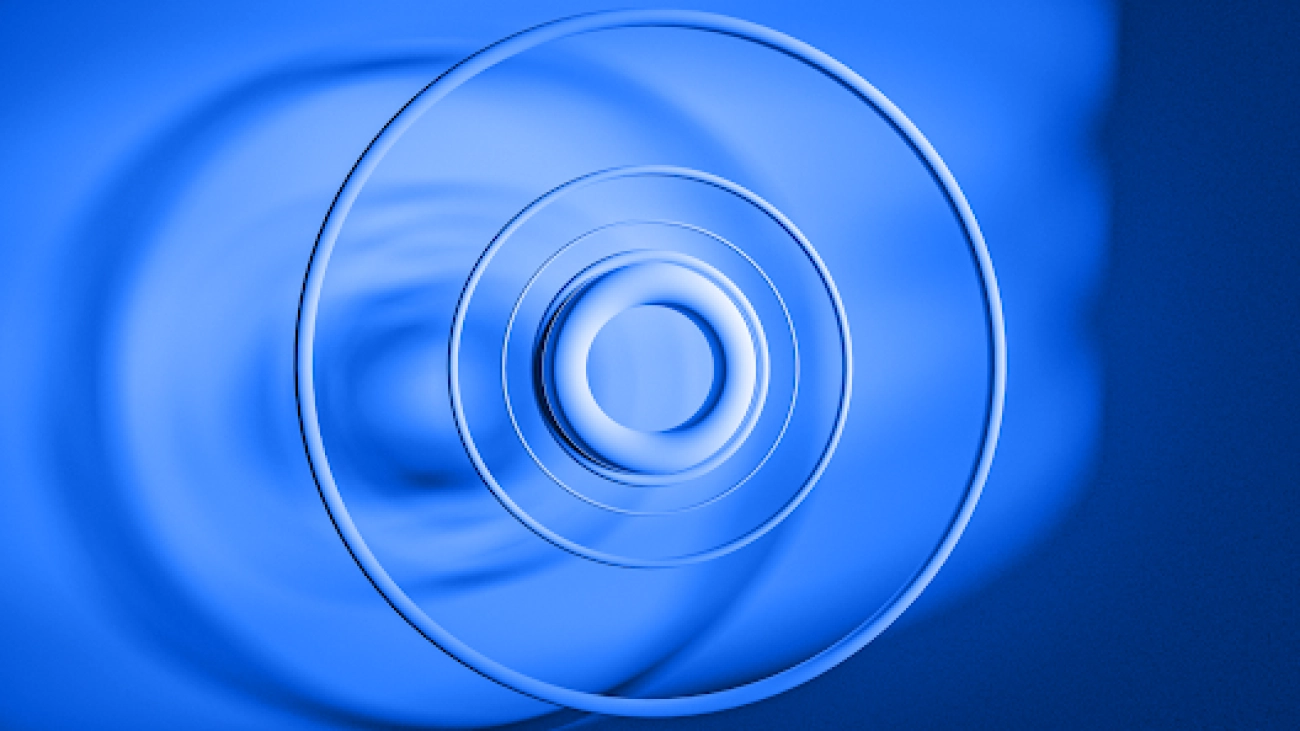

For example, some large data centers use liquid-cooling while others locate data centers where they can use cool ambient air. Schneider Electric recently released a whitepaper recommending 23 metrics for determining the sustainability level of data centers.

A Pioneer in Energy Efficiency

Wu Feng, a computer science professor at Virginia Tech, built a career pushing the limits of green computing. It started out of necessity while he was working at the Los Alamos National Laboratory.

A computer cluster for open science research he maintained in an external warehouse had twice as many failures in summers versus winters. So, he built a lower-power system that wouldn’t generate as much heat.

He demoed the system, dubbed Green Destiny, at the Supercomputing conference in 2001. Covered by the BBC, CNN and the New York Times, among others, it sparked years of talks and debates in the HPC community about the potential reliability as well as efficiency of green computing.

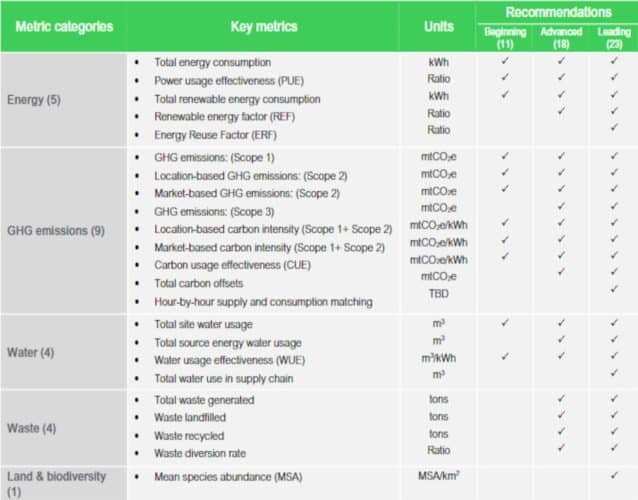

Interest rose as supercomputers and data centers grew, pushing their boundaries in power consumption. In November 2007, after working with some 30 HPC luminaries and gathering community feedback, Feng launched the first Green500 List, the industry’s benchmark for energy-efficient supercomputing.

A Green Computing Benchmark

The Green500 became a rallying point for a community that needed to rein in power consumption while taking performance to new heights.

“Energy efficiency increased exponentially, flops per watt doubled about every year and a half for the greenest supercomputer at the top of the list,” said Feng.

By some measures, the results showed the energy efficiency of the world’s greenest systems increased two orders of magnitude in the last 14 years.
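The two figures above can be cross-checked with a little arithmetic. The sketch below assumes a perfectly steady doubling every 1.5 years, which is an idealization of Feng's "about every year and a half", and compounds it over 14 years.

```python
import math


def improvement_factor(years: float, doubling_period_years: float) -> float:
    """Compound growth for a quantity that doubles every `doubling_period_years`."""
    return 2.0 ** (years / doubling_period_years)


# Assumption: a constant doubling period of 1.5 years over the full 14 years.
factor = improvement_factor(14, 1.5)
orders_of_magnitude = math.log10(factor)

print(f"about {factor:.0f}x, or {orders_of_magnitude:.1f} orders of magnitude")
```

Under that assumption the gain comes out at several hundred fold, comfortably past the two orders of magnitude cited. Real progress on the list was less even than a fixed doubling period implies.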

Feng attributes the gains mainly to the use of accelerators such as GPUs, now common among the world’s fastest systems.

“Accelerators added the capability to execute code in a massively parallel way without a lot of overhead — they let us run blazingly fast,” he said.

He cited two generations of the Tsubame supercomputers in Japan as early examples. They used NVIDIA Kepler and Pascal architecture GPUs to lead the Green500 list in 2014 and 2017, part of a procession of GPU-accelerated systems on the list.

“Accelerators have had a huge impact throughout the list,” said Feng, who will receive an award for his green supercomputing work at the Supercomputing event in November.

“Notably, NVIDIA was fantastic in its engagement and support of the Green500 by ensuring its energy-efficiency numbers were reported, thus helping energy efficiency become a first-class citizen in how supercomputers are designed today,” he added.

AI and Networking Get More Efficient

Today, GPUs and data processing units (DPUs) are bringing greater energy efficiency to AI and networking tasks, as well as HPC jobs like simulations run on supercomputers and enterprise data centers.

AI, the most powerful technology of our time, will become a part of every business. McKinsey & Co. estimates AI will add a staggering $13 trillion to global GDP by 2030 as deployments grow.

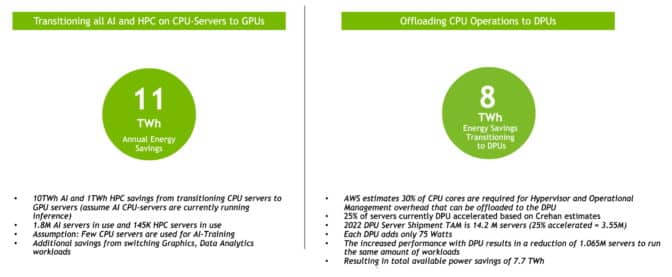

NVIDIA estimates data centers could save a whopping 19 terawatt-hours of electricity a year if all AI, HPC and networking offloads were run on GPU and DPU accelerators (see the charts below). That’s the equivalent of the energy consumption of 2.9 million passenger cars driven for a year.

It’s an eye-popping measure of the potential for energy efficiency with accelerated computing.

AI Benchmark Measures Efficiency

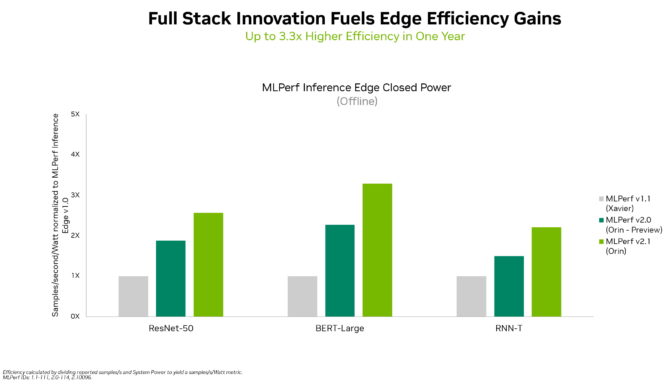

Because AI represents a growing part of enterprise workloads, the MLPerf industry benchmarks for AI have been measuring performance per watt on submissions for data center and edge inference since February 2021.

“The next frontier for us is to measure energy efficiency for AI on larger distributed systems, for HPC workloads and for AI training — it’s similar to the Green500 work,” said Idgunji, whose power group at MLPerf includes members from six other chip and systems companies.

The public results motivate participants to make significant improvements with each product generation. They also help engineers and developers understand ways to balance performance and efficiency across the major AI workloads that MLPerf tests.

“Software optimizations are a big part of work because they can lead to large impacts in energy efficiency, and if your system is energy efficient, it’s more reliable, too,” Idgunji said.

Green Computing for Consumers

In PCs and laptops, “we’ve been investing in efficiency for a long time because it’s the right thing to do,” said Narayan Kulshrestha, a GPU power architect at NVIDIA who’s been working in the field nearly two decades.

For example, Dynamic Boost 2.0 uses deep learning to automatically direct power to a CPU, a GPU or a GPU’s memory to increase system efficiency. In addition, NVIDIA created a system-level design for laptops, called Max-Q, to optimize and balance energy efficiency and performance.

Building a Cyclical Economy

When a user replaces a system, the standard practice in green computing is that the old system gets broken down and recycled. But Matt Hull sees better possibilities.

“Our vision is a cyclical economy that enables everyone with AI at a variety of price points,” said Hull, the vice president of sales for data center AI products at NVIDIA.

So he aims to find the system a new home with users in developing countries who find it useful and affordable. It’s a work in progress seeking the right partner and writing a new chapter in an existing lifecycle management process.

Green Computing Fights Climate Change

Energy-efficient computers are among the sharpest tools fighting climate change.

Scientists in government labs and universities have long used GPUs to model climate scenarios and predict weather patterns. Recent advances in AI, driven by NVIDIA GPUs, can now help model weather forecasting 100,000x quicker than traditional models.

In an effort to accelerate climate science, NVIDIA announced plans to build Earth-2, an AI supercomputer dedicated to predicting the impacts of climate change. It will use NVIDIA Omniverse, a 3D design collaboration and simulation platform, to build a digital twin of Earth so scientists can model climates in ultra-high resolution.

In addition, NVIDIA is working with the United Nations Satellite Centre to accelerate climate-disaster management and train data scientists across the globe in using AI to improve flood detection.

Meanwhile, utilities are embracing machine learning to move toward a green, resilient and smart grid. Power plants are using digital twins to predict costly maintenance and model new energy sources, such as fusion-reactor designs.

What’s Ahead in Green Computing?

Feng sees the core technology behind green computing moving forward on multiple fronts.

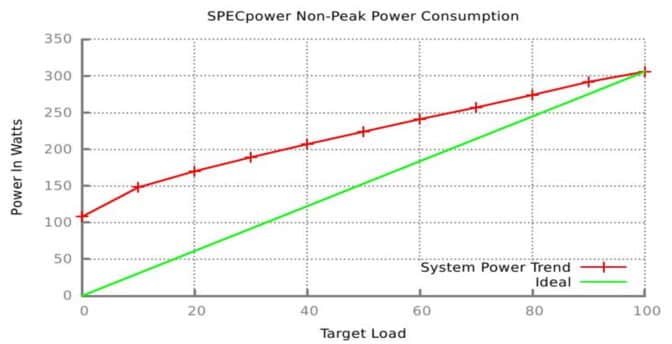

In the short term, he’s working on what’s called energy proportionality, that is, ways to make sure systems get peak power when they need peak performance and scale gracefully down to zero power as they slow to an idle, like a modern car engine that slows its RPMs and then shuts down at a red light.
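To make the idea concrete, here is a minimal sketch that compares a conventional system with an ideally energy-proportional one. It assumes a simple linear power model, where draw rises in a straight line from an idle floor to peak, and uses hypothetical wattages. It is not a description of Feng's methods.

```python
def power_draw(utilization: float, peak_watts: float, idle_watts: float) -> float:
    """Linear power model (an assumption): idle floor plus a load-dependent share."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return idle_watts + (peak_watts - idle_watts) * utilization


PEAK_WATTS = 500.0  # hypothetical peak draw for both systems

# At 10% load, a conventional system still pays for its idle floor,
# while an ideally proportional system (zero idle power) does not.
conventional = power_draw(0.1, PEAK_WATTS, idle_watts=250.0)
proportional = power_draw(0.1, PEAK_WATTS, idle_watts=0.0)

print(f"conventional: {conventional:.0f} W, proportional: {proportional:.0f} W")
```

Both systems draw the same power at full load. The difference shows up at low utilization, where many machines spend much of their time.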

Long term, he’s exploring ways to minimize data movement inside and between computer chips to reduce their energy consumption. And he’s among many researchers studying the promise of quantum computing to deliver new kinds of acceleration.

It’s all part of the ongoing work of green computing, delivering ever more performance at ever greater efficiency.

The post What Is Green Computing? appeared first on NVIDIA Blog.

GeForce RTX 4090 GPU Arrives, Enabling New World-Building Possibilities for 3D Artists This Week ‘In the NVIDIA Studio’

Editor’s note: This post is part of our weekly In the NVIDIA Studio series, which celebrates featured artists, offers creative tips and tricks, and demonstrates how NVIDIA Studio technology improves creative workflows. In the coming weeks, we’ll deep dive on new GeForce RTX 40 Series GPU features, technologies and resources, and how they dramatically accelerate content creation.

Creators can now pick up the GeForce RTX 4090 GPU, available from top add-in card providers including ASUS, Colorful, Gainward, Galaxy, GIGABYTE, INNO3D, MSI, Palit, PNY and ZOTAC, as well as from system integrators and builders worldwide.

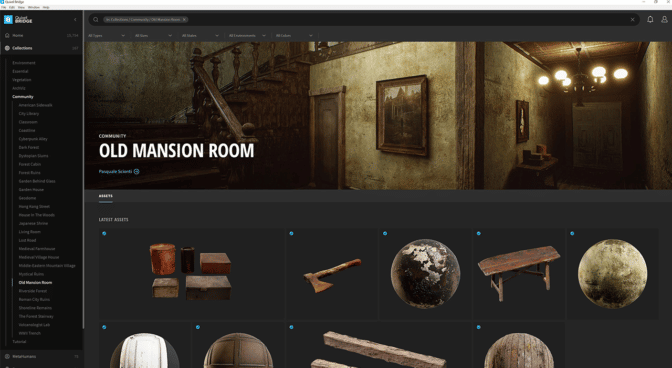

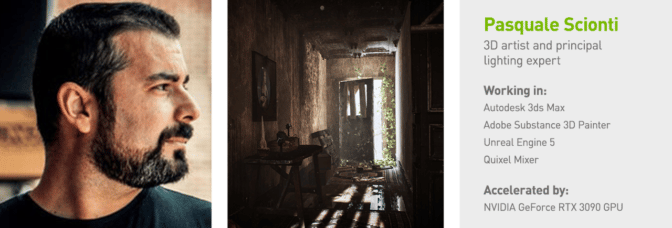

Fall has arrived, and with it comes the perfect time to showcase the beautiful, harrowing video, Old Abandoned Haunted Mansion, created by 3D artist and principal lighting expert Pasquale Scionti this week In the NVIDIA Studio.

Artists like Scionti can create at the speed of light with the help of RTX 40 Series GPUs alongside 110 RTX-accelerated apps, the NVIDIA Studio suite of software and dedicated Studio Drivers.

A Quantum Leap in Creative Performance

The new GeForce RTX 4090 GPU brings an extraordinary boost in performance, third-generation RT Cores, fourth-generation Tensor Cores, an eighth-generation NVIDIA Dual AV1 Encoder and 24GB of Micron G6X memory capable of reaching 1TB/s bandwidth.

3D artists can now build scenes in fully ray-traced environments with accurate physics and realistic materials — all in real time, without proxies. DLSS 3 technology uses the AI-powered RTX Tensor Cores and a new Optical Flow Accelerator to generate additional frames and dramatically increase frames per second (FPS). This improves smoothness and speeds up movement in the viewport. NVIDIA is working with popular 3D apps Unity and Unreal Engine 5 to integrate DLSS 3.

DLSS 3 will also benefit workflows in the NVIDIA Omniverse platform for building and connecting custom 3D pipelines. New Omniverse tools such as NVIDIA RTX Remix for modders, which was used to create Portal with RTX, will be game changers for 3D content creation.

Video and live-streaming creative workflows are also turbocharged as the new AV1 encoder delivers 40% increased efficiency, unlocking higher resolution and crisper image quality. Expect AV1 integration in OBS Studio, DaVinci Resolve and Adobe Premiere Pro (through the Voukoder plugin) later this month.

The new dual encoders capture up to 8K resolution at 60 FPS in real time via GeForce Experience and OBS Studio, and cut video export times nearly in half. These encoders will be enabled in popular video-editing apps including Blackmagic Design’s DaVinci Resolve, the Voukoder plugin for Adobe Premiere Pro, and Jianying Pro — China’s top video-editing app — later this month.

State-of-the-art AI technology, like AI image generators and new video-editing tools in DaVinci Resolve, is ushering in the next step in the AI revolution, delivering up to a 2x increase in performance over the previous generation.

To break technological barriers and expand creative possibilities, pick up the GeForce RTX 4090 GPU today. Check out this product finder for retail availability.

Haunted Mansion Origins

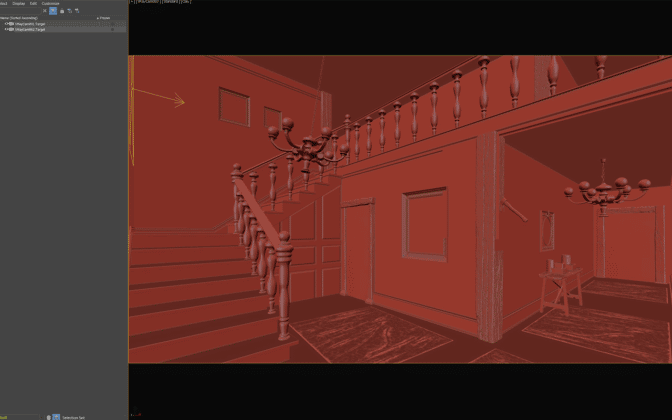

The visual impact of Old Abandoned Haunted Mansion is nothing short of remarkable, with photorealistic details for lighting and shadows and stunningly accurate textures.

However, it’s Scionti’s intentional omission of specific detail that allows viewers to construct their own narrative, a staple of his work.

Scionti highlighted additional mysterious features he created within the haunted mansion: a painting with a specter on the stairs, knocked-over furniture, a portrait of a woman who might’ve lived there and a mirror smashed in the middle as if someone struck it.

“Perhaps whatever happened is still in these walls,” mused Scionti. “Abandoned, reclaimed by nature.”

Scionti said he finds inspiration in the works of H.R. Giger, H.P. Lovecraft and Edgar Allan Poe, and often dreams of the worlds he aspires to build before bringing them to life in 3D. He stressed, however, “I don’t have a dark side! It just appears in my work!”

For Old Abandoned Haunted Mansion, the artist began by creating a moodboard featuring abandoned places. He specifically included structures that were reclaimed by nature to create a warm mood with the sun filtering in from windows, doors and broken walls.

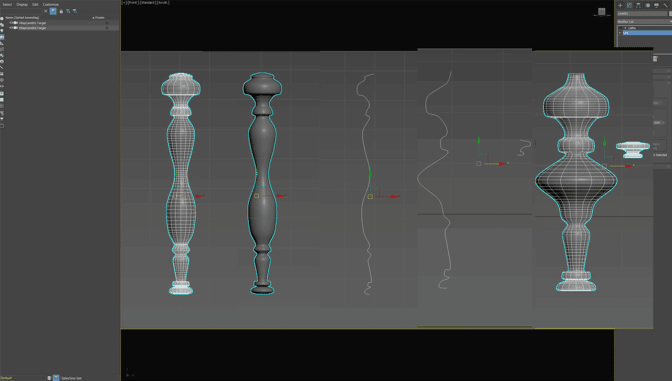

Scionti then modeled the scene’s objects, such as the ceiling lamp, painting frames and staircase, using Autodesk 3ds Max. By using a GeForce RTX 3090 GPU and selecting the default Autodesk Arnold renderer, he deployed RTX-accelerated AI denoising, resulting in interactive renders that were easy to edit while maintaining photorealism.

The versatile Autodesk 3ds Max software supports third-party GPU-accelerated renderers such as V-Ray, OctaneRender and Redshift, giving RTX owners additional options for their creative workflows.

When it comes time to export the renders, Scionti will soon be able to use GeForce RTX 40 Series GPUs to do so up to 80% faster than the previous generation.

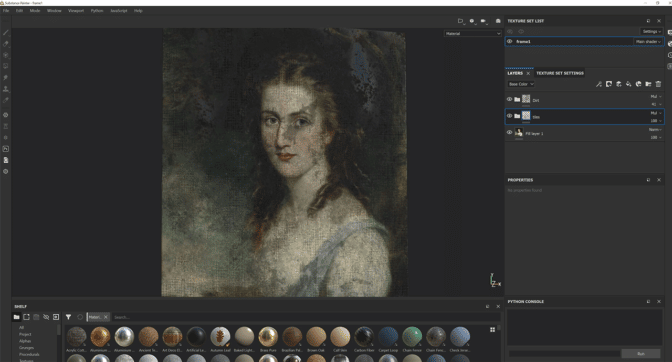

Scionti imported the models, like the ceiling lamp and various paintings, into Adobe Substance 3D Painter to apply unique textures. The artist used RTX-accelerated light and ambient occlusion to bake his assets in mere seconds.

The curtains, the drape on the armchair and the ghostly figure were modeled in Marvelous Designer, a realistic cloth-making program for 3D artists. On its system-requirements page, the Marvelous Designer team recommends GeForce RTX 30 Series and other NVIDIA RTX-class GPUs, as well as downloading the latest NVIDIA Studio Driver.

Additional objects like the wooden ceiling were created using Quixel Mixer, an all-in-one texturing and material-creation tool designed to be intuitive and extremely fast.

Scionti then searched Quixel Megascans, the largest and fastest-growing 3D scan library, to acquire the remaining assets to round out the piece.

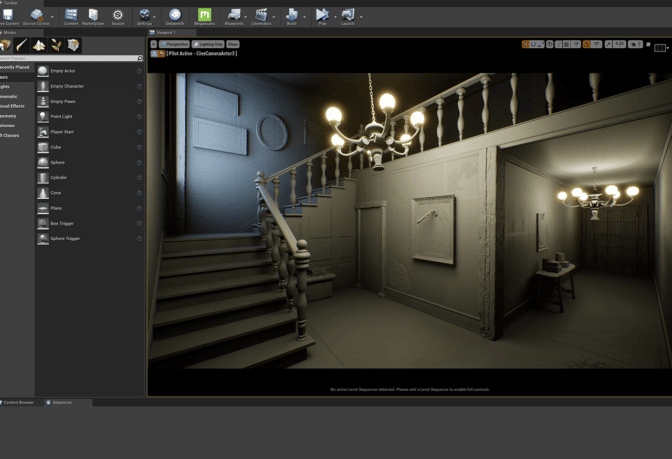

With the composition in place, Scionti applied final details in Unreal Engine 5.

RTX ON in Unreal Engine 5

Scionti used Unreal Engine 5, activating hardware-accelerated RTX ray tracing for high-fidelity, interactive visualization of 3D designs. He was further aided by NVIDIA DLSS, which uses AI to upscale frames rendered at lower resolution while retaining high-fidelity detail. The artist then constructed the scene rich with beautiful lighting, shadows and textures.

The new GeForce RTX 40 Series GPU lineup will use DLSS 3 — coming soon to UE5 — with AI Frame Generation to further enhance interactivity in the viewport.

Scionti perfected his lighting with Lumen, UE5’s fully dynamic global illumination and reflections system, supported by GeForce RTX GPUs.

“Nanite meshes were useful to have high polygons for close up details,” noted Scionti. “For lighting, I used the sun and sky, but to add even more light, I inserted rectangular light sources outside each opening, like the windows and the broken wall.”

To complete the video, Scionti added a deliberately paced instrumental score consisting of piano, violin, synthesizer and drum. The music injects an unexpected emotional element into the piece.

Scionti reflected on his creative journey, which he considers a relentless pursuit of knowledge and perfecting his craft. “The pride of seeing years of commitment and passion being recognized is incredible, and that drive has led me to where I am today,” he said.

To embark on an Unreal Engine 5-powered creative journey through desert scenes, alien landscapes, abandoned towns, castle ruins and beyond, check out the latest NVIDIA Studio Standout featuring some of the most talented 3D artists, including Scionti.

For more, explore Scionti’s Instagram.

Join the #From2Dto3D challenge

Scionti brought Old Abandoned Haunted Mansion from 2D beauty into 3D realism — and the NVIDIA Studio team wants to see more 2D to 3D progress.

Join the #From2Dto3D challenge this month for a chance to be featured on the NVIDIA Studio social media channels, like @juliestrator, whose delightfully cute illustration is elevated in 3D:

Here’s another example for inspiration…

Credit: @juliestrator pic.twitter.com/RsY6nLg8pT

— NVIDIA Studio (@NVIDIAStudio) October 3, 2022

Entering is quick and easy. Simply post a 2D piece of art next to a 3D rendition of it on Instagram, Twitter or Facebook. And be sure to tag #From2Dto3D to enter.

Get creativity-inspiring updates directly to your inbox by subscribing to the NVIDIA Studio newsletter.

The post GeForce RTX 4090 GPU Arrives, Enabling New World-Building Possibilities for 3D Artists This Week ‘In the NVIDIA Studio’ appeared first on NVIDIA Blog.

Measuring perception in AI models

Perception – the process of experiencing the world through senses – is a significant part of intelligence. And building agents with human-level perceptual understanding of the world is a central but challenging task, which is becoming increasingly important in robotics, self-driving cars, personal assistants, medical imaging, and more. So today, we’re introducing the Perception Test, a multimodal benchmark using real-world videos to help evaluate the perception capabilities of a model.
