[MUSIC FADES]

All right, so, Sriram, let’s dive right in. I think it’s fairly obvious for me to say at this point that ChatGPT—and generative AI more broadly—is a worldwide phenomenon. But what’s so striking to me about this is the way that so many people around the world can pick up the technology and use it in their context, in their own way. I was on a panel discussion a few weeks ago where I saw a comedian discover in real time that GPT-4 could write jokes that are actually funny. And shortly after that, I spoke to a student who was using ChatGPT to write an application to obtain a grazing permit for cattle. You know, the work of your lab is situated in its own unique societal context. So, what I really want to know and start with here today is, like, what’s the buzz been like for you in your part of the world around this new wave of AI?

SRIRAM RAJAMANI: Yeah. First of all, Ashley, you know, thank you for having this conversation with me. You’re absolutely right that our lab is situated in a very unique context on how this technology is going to play out in, you know, this part of the world, certainly. And you might remember, Ashley, a sort of a mic drop moment that happened for Satya [Nadella] when he visited India earlier this year, in January. So one of our researchers, Pratyush Kumar—he’s also co-founder of our partner organization called AI4Bhārat—he works also with the government on a project called Bhashini, which the government endeavors to bring conversational AI to the many Indian languages that are spoken in India. And what Pratyush did was he connected some of the AI4Bhārat translation models, language translation models, together with one of the GPT models to build a bot for a farmer to engage and ask questions about the government’s agricultural programs so the farmer could speak in their own language—you know, it could be Hindi—and what the AI4Bhārat models would do is to convert the Hindi speech into text and then translate it into English. And then he taught, you know, either fine-tuned or integrated with augmented generation … I don’t … I’m not … I don’t quite remember which one … it was one of those … where he made a GPT model customized to understand the agricultural program of the government. And he chained it together with this speech recognition and translation model. And the farmer could just now talk to the system, the AI system, in Hindi and ask, you know, are they eligible for their benefits and many details. And the, and the model had a sensible conversation with him, and Satya was just really amazed by that, and he calls … he called that as the mic drop moment of his trip in India, which I think is indicative of the speed at which this disruption is impacting very positively the various parts of the world, including the Indian subcontinent.
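
EDITOR'S NOTE: The chaining described in this answer (speech recognition, then translation into English, then a GPT model customized for the agricultural programs) can be pictured as a left-to-right composition of stages. The sketch below is only an illustration of that idea. Every function name, the "audio:" prefix, the transliterated phrase, and the canned answer are hypothetical stand-ins, not the AI4Bhārat or GPT interfaces.

```python
from typing import Callable, List

Stage = Callable[[str], str]


def chain(stages: List[Stage]) -> Stage:
    """Compose stages left to right into a single callable."""
    def run(value: str) -> str:
        for stage in stages:
            value = stage(value)
        return value
    return run


# Hypothetical stand-in for a speech recognition model: the "audio" here is
# just a string tagged with an "audio:" prefix.
def speech_to_text(audio: str) -> str:
    return audio.removeprefix("audio:")


# Hypothetical stand-in for a Hindi-to-English translation model,
# using a transliterated phrase and a one-entry lookup table.
HINDI_TO_ENGLISH = {"kya main patra hoon?": "Am I eligible?"}


def translate_hi_to_en(text: str) -> str:
    return HINDI_TO_ENGLISH.get(text, text)


# Hypothetical stand-in for the customized language model.
def answer_about_programs(question: str) -> str:
    if "eligible" in question.lower():
        return "Eligibility depends on the program's rules."
    return "Sorry, I do not know."


farmer_bot = chain([speech_to_text, translate_hi_to_en, answer_about_programs])
print(farmer_bot("audio:kya main patra hoon?"))
```

Because each stage only maps text to text, a stage can be swapped (for example, a different language's translation model) without touching the others.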

LLORENS: You referenced the many Indian languages written and spoken. Can you just bring, bring that to life for us? How many, how many languages are we talking about?

RAJAMANI: So, I think there are at least, you know, 30 or 40, you know, main, mainstream languages. I mean, the government recognizes 22. We call them as IN22. But I would think that there are about 30-plus languages that are spoken very, very broadly, each of them with, you know, several tens of millions, hundreds of millions of speakers. And then there is a long tail of maybe a hundred more languages which are spoken by people with … in, in smaller population counts. The real … they’re also very low-resource languages like Gondi and Idu Mishmi, which are just spoken by maybe just only a million speakers or even under a million speakers who probably … those languages probably don’t have enough data resources. So, India is an amazing testbed because of this huge diversity and distribution of languages in terms of the number of speakers, the amount of available data, and, and many of these tail languages have unique, you know, sociocultural nuances. So I think in that sense, there’s a really good testbed for, you know, how conversational AI can inclusively impact the entire world.

LLORENS: And, and what’s the … you mentioned tail languages. And so maybe we mean they’re low-resource languages like you also mentioned. What’s the gap like between what languages AI is accessible in today versus the full extent of all those languages that you just described, even just for, you know, for the Indian subcontinent?

RAJAMANI: So what is … what we’re seeing is that with IN22, the top languages, if you look at successive versions of the GPT models, for example, the performance is definitely improving. So if you just go from, you know, GPT-2 to GPT-3 to 3.5 to 4, right, you can sort of see that these models are increasingly getting capable. But still there is a gap between what these models are able to do and what custom models are able to do, particularly if you go towards languages in which there’s not enough training data. So, so people in our lab, you know, are doing very systematic work in this area. There is a benchmarking work that my colleagues are doing called MEGA, where there is systematic benchmark being done on various tasks on a matrix that consists of, you know, tasks on one axis and languages on another axis to just systematically, empirically study, you know, what these models are able to do. And also, we are able to build models to predict how much more data is needed in each of these languages in order for the performance to be comparable to, say, languages like English. What is the … what is the gap, and how much data is needed? The other thing is that it turns out that these models, they, they learn also from related languages. So if you want to improve the performance of a language, it turns out there are other languages in the world and in India that have similar characteristics, you know, syntactic and semantic characteristics, to the language that you’re thinking about. So we can also sort of recommend, you know, what distribution of data we should collect so that all the languages improve. So that’s the kind of work that we’re doing.

LLORENS: Yeah, it’s one of the most fascinating parts of all of this—how diversity in the training dataset improves, you know, across the board, like even the addition of code, for example, in addition to language, and now we’re even seeing even other modalities. And, you know, the, the wave of AI and the unprecedented capabilities we’re seeing has significant implications for just about all of computing research. In fact, those of us in and around the field are undergoing now a process that I call, you know, reimagining computing research. And, you know, that’s a somewhat artful way to put it. But beyond the technical journey, there’s an emotional journey happening across the research community and many other communities, as well. So what has that journey been like for you and the folks at the India lab?

RAJAMANI: Yeah, that’s a good question, Ashley. You know, our work in the lab spans four areas. You know, we do work in theory and algorithms. We do work in AI and machine learning. We do systems work, and we also have an area called “Technology and Empowerment.” It’s about making sure that technology benefits people. And so far, our conversation has been about the last area. But all these four areas have been affected in a big way using this disruption. Maybe, maybe I’ll just say a few more things about the empowerment area first and then move on to the other ones. If you look at our work in the empowerment area, Ashley, right, this lab has had a track record of doing work that makes technology inclusive not just from an academic perspective, but by also deploying the work via spun-off startups, many startups, that have taken projects in the lab and scaled them to the community. Examples are Digital Green, which is an agricultural extension; 99DOTS, which is a tuberculosis medication adherence system. Karya is a, is a platform for dignified digital labor to enable underprivileged users, rural users, to contribute data and get paid for it. You know, HAMS is a system that we have built to improve road safety. You know, we’ve built a system called BlendNet that enables rural connectivity. And almost all of these, we have spun them off into startups that are … that have been funded by, you know, venture capitalists, impact investors, and we have a vibrant community of these partners that are taking the work from the lab and deploying them in the community. So the second thing that is actually happening in this area is that, as you may have heard, India is playing a pivotal role in digital public infrastructure. Advances like the Aadhaar biometric authentication system; UPI, which is a payment system—they are pervasively deployed in India, and they reach, you know, several hundreds of millions of people. And in the case of Aadhaar, more than a billion people and so on. 
And the world is taking note. India is now head of the G20, and many countries now want to be inspired by India and build such a digital public infrastructure in their own countries, right. And so, so, so what you saw is the mic drop moment, right? That … it actually has been coming for a long time. There has been a lot of groundwork that has been laid by our lab, by our partners, you know, such as AI4Bhārat, the people that work on digital public goods to get the technical infrastructure and our know-how to a stage where we can really build technology that benefits people, right. So, so going forward, in addition to these two major advancements, which is the building of the partner and alumni ecosystem, the digital public good infrastructure, I think AI is going to be a third and extremely important pillar that is going to enable citizen-scale digital services to reach people who may only have spoken literacy and who might speak in their own native languages and the public services can be accessible to them.

LLORENS: So you mentioned AI4Bhārat, and I’d love for you to say a bit more about that organization and how researchers are coming together with collaborators across sectors to make some of these technology ideas real.

RAJAMANI: Yeah. So AI4Bhārat is a center in IIT Madras, which is an academic institution. It has multiple stakeholders, not just Microsoft Research, but our search technology center in India also collaborates with them. Nandan Nilekani is a prominent technologist and philanthropist. He’s behind a lot of India’s digital public infrastructure. He also, you know, funds that center significantly through his philanthropic efforts. And there are a lot of academics that have come together. And what the center does is data collection. I talked about the diversity of, you know, Indian languages. They collect various kinds of data. They also look at various applications. Like in the judicial system, in the Indian judicial system, they are thinking about, you know, how to transcribe, you know, judgments, enabling various kinds of technological applications in that context, and really actually thinking about how these kinds of AI advances can help right on top of digital public goods. So that’s actually the context in which they are working on.

LLORENS: Digital public goods. Can you, can you describe that? What, what do we mean in this context by digital public good?

RAJAMANI: So what we mean is if you look at Indian digital public infrastructure, right, that is, as I mentioned, that is Aadhaar, which is the identity system that is now enrolled more than 1.3 billion Indians. There is actually a payment infrastructure called UPI. There are new things that are coming up, like something that’s, that’s called Beckn. There’s something called ONDC that is poised to revolutionize how e-commerce is done. So these are all, you know, sort of protocols that through private-public partnership, right, government together with think tanks have developed, that are now deployed in a big way in India. And they are now pervasively impacting education, health, and agriculture. And every area of public life is now being impacted by these digital public infrastructures. And there is a huge potential for AI and AI-enabled systems to ride on top of this digital public infrastructure to really reach people.

LLORENS: You know, you talked about some of the, you know, the infrastructure considerations, and so what are the challenges in bringing, you know, digital technologies to, you know, to, to the Indian context? And, and you mentioned the G20 and other countries that are following the patterns. What are, what are some of the common challenges there?

RAJAMANI: So, I mean, there are many, many challenges. One of them is lack of access. You know, though India has made huge strides in lifting people out of poverty, people out there don’t have the same access to technology that you and I have. Another challenge is awareness. People just don’t know, you know, how technology can help them, right. You know, people hearing this podcast know about, you know, LinkedIn to get jobs. They know about, you know, Netflix or other streaming services to get entertainment. But there are many people out there that don’t even know that these things exist, right. So awareness is another issue. Affordability is another issue. So … many of the projects that I mentioned, what they do is actually they start not with the technology; they start with the users and their context and this situation, and what they’re trying to do and then map back. And technology is just really one of the pieces that these systems, that all of these systems that I mentioned, right … technology is just only one component. There’s a sociotechnical piece that deals with exactly these kinds of access and awareness and these kinds of issues.

LLORENS: And we’re, we’re kind of taking a walk right now through the work of the lab. And there are some other areas that you, you want to get into, but I want to come back to this … maybe this is a good segue into the emotional journey part of the question I asked a few minutes ago. As you get into some of the, you know, the deep technical work of the lab, what were some of the first impressions of the new technologies, and what were, what were some of the first things that, you know, you and your colleagues there and our colleagues, you know, felt, you know, in observing these new capabilities?

RAJAMANI: So I, I think Peter [Lee] mentioned this very eloquently as stages of grief. And me and my colleagues, I think, went through the same thing. I mean, the … there was … we went from, you know, disbelief, saying, “Oh, wow, this is just amazing. I can’t believe this is happening” to sort of understanding what this technology can do and, over time, understanding what its limitations are and what the opportunities are as a scientist and technologist and engineering organization to really push this forward and make use of it. So that’s, I think, the stages that we went through. Maybe I can be a little bit more specific. As I mentioned, the three other areas we work on are theory and algorithms, machine learning, and systems. And I can sort of see … say how my colleagues are evolving, you know, their own technical and research agendas in the, in the light of the disruption. If you take our work in theory, this lab has had a track record of, you know, cracking longstanding open problems. For example, problems like the Kadison-Singer conjecture that was open for many years, many decades, was actually solved by people from the lab. Our lab has incredible experts in arithmetic circuit complexity. They came so close to resolving the VP versus VNP conjecture, which is the arithmetic analog of the P versus NP problem. So we have incredible people working on, working on theoretical computer science, and a lot of them are now shifting their attention to understanding these large language models, right. Instead of understanding just arithmetic circuits, you know, people like Neeraj Kayal and Ankit Garg are now thinking about mathematically what does it take to understand transformers, how do we understand … how might we evolve these models or training data so that these models improve even further in performance in their capabilities and so on.
So that’s actually a journey that the theory people are going through, you know, bringing their brainpower to bear on understanding these models foundationally. Because as you know, currently our understanding of these foundation models is largely empirical. We don’t have a deep scientific understanding of them. So that’s the opportunity that the, that the theoreticians see in this space. If you look at our machine learning work, you know, that actually is going through a huge disruption. I remember now one of the things that we do in this lab is work on causal ML … Amit Sharma, together with Emre Kiciman and other colleagues working on causal machine learning. And I heard a very wonderful podcast that you hosted them some time ago. Maybe you can say a little bit about what, what you heard from them, and then I can pick up back and then connect that with the rest of the lab.

LLORENS: Sure. Well, it’s … you know, I think the, the common knowledge … there’s, there’s so many, there’s so many things about machine learning over the last few decades that have become kind of common knowledge and conventional wisdom. And one of those things is that, you know, correlation is not causation and that, you know, you know, learned models don’t, you know, generally don’t do causal reasoning. And so we, you know, we’ve had very specialized tools created to do the kind of causal reasoning that Amit and Emre do. And it was interesting. I asked them some of the same questions I’m asking you now, you know, about the journey and the initial skepticism. But it has been really interesting to see how they’re moving forward. They recently published a position paper on arXiv where they conducted some pretty compelling experiments, in some cases, showing something like, you know, causal reasoning, you know, being, being exhibited, or at least I’ll say convincing performance on causal reasoning tasks.

RAJAMANI: Yeah, absolutely.

LLORENS: Yeah, go ahead.

RAJAMANI: Yeah, yeah, yeah, absolutely. So, so, so, you know, I would say that their journey was that initially they realized that … of course, they build specialized causal reasoning tools like DoWhy, which they’ve been building for many years. And one of the things they realized was that, “Oh, some of the things that DoWhy can do with sophisticated causal reasoning these large language models were just able to do out of the box.” And that was sort of stunning for them, right. And so the question then becomes, you know, is specific vertical research in causal reasoning even needed, right. So that’s actually the shock and the awe and the emotional journey that these people went through. But actually, after the initial shock faded, they realized that there is actually [a] “better together” story that is emerging in the sense that, you know, once you understand the details, what they realized was that natural language contains a lot of causal information. Like if you just look at the literature, the literature has many things like, you know, A causes B and if there is, if there is, you know, hot weather, then ice cream sales go up. You know, this information is present in the literature. So if you look at tools like DoWhy, what they do is that in order to provide causal machine learning, they need assumptions from the user on what the causal model is. They need assumptions about what the causal graph is, what are the user’s assumptions about which variables depend on which variables, right? And then … and, and, and what they’ve realized is that models like GPT-4 can now provide this information. Previously, only humans were able to provide this information. And … but in addition to that, right, tools like DoWhy are still needed to confirm or refute these assumptions, statistically, using data.
So this division of labor between getting assumptions from either a human or from a large language model and then using the mathematics of DoWhy to confirm or refute the assumptions now is emerging as a real advance in the way we do causal reasoning, right? So I think that’s actually what I heard in your podcast, and that’s indicative of actually what the rest of my colleagues are going through. You know, moving from first thinking about, “Oh, GPT-4 is like a threat, you know, in the sense that it really obviates my research area” to understand, “Oh, no, no. It’s really a friend. It, it really helps me do, you know, some of the things that required primarily human intervention. And if I combine GPT or these large language models together with, you know, domain specific research, we can actually go after bigger problems that we didn’t even dare going after before.”

LLORENS: Mmm. Let me, let me ask you … I’m going to, I’m going to pivot here in a moment, but did you … have you covered, you know, the areas of research in the lab that you wanted to walk through?

RAJAMANI: Yeah, yeah, there’s, there’s more. You know, thank you for reminding me. Even in the machine learning area, there is another work direction that we have called extreme classification, which is about building very, very … classifiers with a large number of labels, you know, hundreds of millions and billions of labels. And, you know, these people are also benefiting from large language encoders. You know, they have come up with clever ways of taking these language encoders that are built using self-supervised learning together with supervised signals from things like, you know, clicks and logs from search engines and so on to improve performance of classifiers. Another work that we’ve been doing is called DiskANN, or approximate nearest neighbor search. As you know, Ashley, in this era of deep learning, retrieval works by converting everything in the world, you know, be it a document, be it an image, you know, be it an audio or video file, everything into an embedding, and relevance … relevant retrieval is done by nearest neighbor search in a geometric space. And our lab has been doing … I mean, we have probably the most scalable vector index that has been built. And, and, and these people are positively impacted by these large language models because, you know, as you know, retrieval augmented generation is one of the most common design patterns in making these large language models work for applications. And so their work is becoming increasingly relevant, and they are being placed huge demands on, you know, pushing the scale and the functionality of the nearest neighbor retrieval API to do things like, oh, can I actually add predicates, can I add streaming queries, and so on. So they are just getting stretched with more demand, you know, for their work. 
You know, if you look at our systems work, which is the last area that I want to cover, you know, we have, we have been doing work on using GPUs and managing GPU resources for training as well as inference. And this area is also going through a lot of disruption. And prior to these large language models, these people were looking at relatively smaller models, you know, maybe not, you know, hundreds of billions to trillions of parameters. But, but, you know, maybe hundreds of millions and so on. And they invented several techniques to share a GPU cluster among training jobs. So the disruption that they had was all these models are so large that nobody is actually sharing clusters for them. But it turned out that some of the techniques that they invented to deal with, you know, migration of jobs and so on are now used for failure recovery in very, very large models. So it turns out that, you know, at the beginning it seems like, “Oh, my work is not relevant anymore,” but once you get into the details, you find that there are actually still many important problems. And the insights you have from solving problems for smaller models can now carry over to the larger ones. And one other area I would say is the area of, you know, programming. You know, I myself work in this area. We have been doing … combining machine learning together with program analysis to build a new generation of programming tools. And the disruption that I personally faced was that the custom models that I was building were no longer relevant; they’re, they’re not even needed. So that was a disruption. But actually, what me and my colleagues went through was that, “OK, that is true, but we can now go after problems that we didn’t dare to go after before.” Like, for example, you know, we can now see that, you know, Copilot and so on give you recommendations in the context of the particular file that you are editing.
But can we now edit an entire repository which might contain, you know, millions of files with hundreds of millions of lines of code? Can I just say, let’s take, for example, the whole of the Xbox code base or the Windows code base, and in the whole code base, I want to do this refactoring, or I want to, you know, migrate this package from … migrate this code base from using now this serialization package to that serialization package. Can we just do that, right? I think we wouldn’t even dare going after such a problem two years ago. But now with large language models, we are thinking, can we do that? And large language models cannot do this right now because, you know, whatever context size you have, you can’t have 100-million-line code as a context to a large language model. And so this requires, you know, combining program analysis with these techniques. That’s an example. And actually, furthermore, there are, you know, many things that we are doing that are not quite affected by large language models. You know, for example, Ashley, you know about the HyWay project, where we’re thinking about technology to make hybrid work work better. And, you know, we are doing work on using GPUs and accelerators for, you know, database systems and so on. And we do networking work. We do low-earth orbit satellite work for connectivity and so on. And those we are doubling down, you know, though, they have nothing to do with large language models because those are problems that are important. So, I think, you know, to summarize, I would say that, you know, most of us have gone through a journey from, you know, shock and awe to sort of somewhat of an insecurity, saying is my work even relevant, to sort of understanding, oh, these things are really aids for us. These are not threats for us. These are really aids, and we can use them to solve problems that we didn’t even dream of before. That’s the journey I think my colleagues have gone through.
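
EDITOR'S NOTE: The retrieval idea mentioned earlier in this answer (turn every item into an embedding, then find the nearest neighbors of a query in that geometric space, optionally with a predicate) can be shown in a few lines. The sketch below is an exact, brute-force search over a toy index. It is not DiskANN, which is an approximate method built for far larger scale. The three-dimensional vectors, document names, and "lang" metadata are made up for illustration.

```python
import math
from typing import Callable, Dict, List, Optional, Sequence, Tuple

Vector = Sequence[float]
Metadata = Dict[str, str]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def nearest(
    query: Vector,
    index: Dict[str, Tuple[Vector, Metadata]],
    k: int = 2,
    predicate: Optional[Callable[[Metadata], bool]] = None,
) -> List[Tuple[float, str]]:
    """Return the k most similar items, keeping only those passing the predicate."""
    scored = [
        (cosine_similarity(query, vector), doc_id)
        for doc_id, (vector, metadata) in index.items()
        if predicate is None or predicate(metadata)
    ]
    scored.sort(reverse=True)  # highest similarity first
    return scored[:k]


# Toy index: hypothetical embeddings and metadata.
INDEX = {
    "crop-insurance": ([0.9, 0.1, 0.0], {"lang": "hi"}),
    "soil-health": ([0.8, 0.2, 0.1], {"lang": "en"}),
    "cricket-scores": ([0.0, 0.1, 0.9], {"lang": "en"}),
}

query = [1.0, 0.0, 0.0]
top = [doc_id for _, doc_id in nearest(query, INDEX)]
top_english = [
    doc_id
    for _, doc_id in nearest(query, INDEX, predicate=lambda m: m["lang"] == "en")
]
print(top)
print(top_english)
```

Brute force compares the query against every stored vector, which is why approximate indexes matter once a collection grows very large.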

LLORENS: I want to, I want to step into two of the concepts that you just laid out, maybe just to get into some of the intuitions as to what problem is being solved and how generative AI is sort of changing the way that those, those problems are solved. So the first one is extreme classification. I think, you know, a flagship use of generative AI and foundation models is, is Bing chat. And so I think this idea of, of internet search as a, as a, you know, as a, a home for, for these new technologies is, is in the popular imagination now. And I know that extreme classification seeks to solve some challenges related to search and information retrieval. But what is the challenge problem there? What, you know … how is extreme classification addressing that, and how is that, you know, being done differently now?

RAJAMANI: So as I mentioned, where my colleagues have already made a lot of progress is in combining language encoders with extreme classifiers to do retrieval. So there are these models called NLR. Like, for example, there’s a Turing NLR model, which is a large language model which does representation, right. It actually represents, you know, keywords, keyword phrases, documents, and so on in the encodings, you know, based on, you know, self-supervised learning. But it is a very important problem to combine the knowledge that these large language models have, you know, from understanding a text. We have to combine that with supervised signals that we have from click logs. Because we have search engine click logs, we know, you know, for example, when somebody searches for this information and we show these results, what users click on. That’s supervised signals, and we have that in huge amounts. And what our researchers have done is they have figured out how to combine these encoders together with the supervised signals from click logs in order to improve both the quality and cost of retrieval, right. And, Ashley, as you said, retrieval is an extremely important part of experiences like Bing chat and retrieval augmented generation is what prevents hallucination and grounds these large language models with appropriate information retrieved and presented so that the, the relevant results are grounded without hallucination, right. Now, the new challenge that this team is now facing is, OK, that’s so far so good as far as retrieval is concerned, right? But can we do similar things with generation, right? Can we now combine these NLG models, which are these generative models, together with supervised signals, so that even generation can actually be guided in this manner, improved in both performance, as well as accuracy. And that is an example of a challenging problem that the team is going after.
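
EDITOR'S NOTE: The retrieval augmented generation pattern mentioned in this answer has two steps: retrieve passages relevant to a question, then hand the model a prompt that asks it to answer from those passages only. The sketch below shows just that assembly. Retrieval here is a crude word-overlap ranking rather than an encoder or a click-log-trained classifier, no model is actually called, and the passages and prompt wording are invented for illustration.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase word set, ignoring punctuation."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(question: str, passages: List[str], k: int = 2) -> List[str]:
    """Rank passages by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        passages,
        key=lambda passage: len(question_words & tokenize(passage)),
        reverse=True,
    )
    return ranked[:k]


def build_grounded_prompt(question: str, passages: List[str], k: int = 2) -> str:
    """Assemble a prompt that restricts the answer to the retrieved passages."""
    context = "\n".join(
        f"[{i + 1}] {passage}"
        for i, passage in enumerate(retrieve(question, passages, k))
    )
    return (
        "Answer using only the numbered passages below. "
        "If they do not contain the answer, say so.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


# Hypothetical passages.
PASSAGES = [
    "The crop insurance program covers losses from drought and flood.",
    "Applications for crop insurance close in July.",
    "The cricket final is played in November.",
]

question = "When do crop insurance applications close?"
prompt = build_grounded_prompt(question, PASSAGES)
print(prompt)
```

The resulting prompt would then be sent to a generative model. Passages that were not retrieved never reach it, which is what keeps the answer tied to the selected sources.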

LLORENS: Now let’s do the same thing with programming, and maybe I’m going to engage you on a slightly higher level of abstraction than the deep work you’re doing. And then, we can, we can, we can get back down into the work. But one of the things … one of, one of the, one of the popular ideas about these new foundation models is that you can … effectively through interacting with them, you’re sort of programming them in natural language. How does that concept sit with you as someone who, you know, is an expert in programming languages? What do you, what do you think, what do you think when someone says, you know, sort of programming the, you know, the system in natural language?

RAJAMANI: Yeah, so I, I find it fascinating and, you know, for one, you know, can we … an important topic in programming language research has been always that can we get end users or, you know, people who are nonprogrammers to program. I think that has been a longstanding open problem. And if you look at the programming language community, right, the programming language community has been able to solve it only in, in narrow domains. You know, for example, Excel has Flash Fill, where, through examples, you know, people can program Excel macros and so on. But those are not as general as these kinds of, you know, LLM-based models, right. And, and it is for the whole community, not just me, right. It was stunning when users can just describe in natural language what program they want to write and these models emit in a Python or Java or C# code. But there is a gap between that capability and having programmers just program in natural language, right. Like, you know, the obvious one is … and I can sort of say, you know, write me Python code to do this or that, and it can generate Python code, and I could run it. And if that works, then that’s a happy path. But if it doesn’t work, what am I supposed to do if I don’t know Python? What am I supposed to do, right? I still have to now break that abstraction boundary of natural language and go down into Python and debug Python. So one of the opportunities that I see is then can we build representations that are also in natural language, but that sort of describe, you know, what the application the user is trying to build and enable nonprogrammers—could be lawyers, could be accountants, could be doctors—to engage with a system purely in natural language and the system should talk back to you, saying, “Oh, so far this is what I’ve understood. This is the kind of program that I am writing,” without the user having to break that natural language abstraction boundary and going and having to go and understand Python, right? 
I think this is a huge opportunity in programming languages. Like, for example, right, Ashley, I’m a programmer, and one of the things I love about programming is that I can write code. I can run it, see what it produces, and if I don’t like the results, I can go change the code and rerun it. And that’s sort of the, you know, coding, evaluating … we call it the REPL loop, right. So that’s what a programmer faces, right. Can we now provide that to natural language programmers? I want to say, “Here’s a program I want to write,” and now I want to say, “Well, I want to run this program with this input.” And if it doesn’t work, I want to say, “Oh, this is something I don’t like. I want to change this code this way,” right. So can I now provide that kind of experience to natural language programming? I think that’s a huge opportunity if we manage to pull that off.
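To make the idea of a write, run, revise loop in natural language a little more concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions: `NaturalLanguageSession` and `toy_generate` are hypothetical names, and `toy_generate` recognises just two fixed phrases where a real system would call a language model. The point is the shape of the loop: the user states requirements in plain language, the system reads back what it has understood, and the user runs and revises without ever seeing the generated code.

```python
from dataclasses import dataclass, field
from typing import Callable, List


def toy_generate(requirements: List[str]) -> Callable[[int], int]:
    """Hypothetical stand-in for a model call: maps two known phrases to steps."""
    steps = []
    for requirement in requirements:
        if requirement == "double the input":
            steps.append(lambda x: x * 2)
        elif requirement == "add one":
            steps.append(lambda x: x + 1)

    def program(x: int) -> int:
        # Apply each recognised step in the order the user stated it.
        for step in steps:
            x = step(x)
        return x

    return program


@dataclass
class NaturalLanguageSession:
    """Keeps the plain-language requirements; code is regenerated on every run."""
    generate: Callable[[List[str]], Callable[[int], int]]
    requirements: List[str] = field(default_factory=list)

    def say(self, requirement: str) -> str:
        # Record a new requirement and read back the current understanding.
        self.requirements.append(requirement)
        return self.summary()

    def summary(self) -> str:
        lines = [f"{i}. {r}" for i, r in enumerate(self.requirements, 1)]
        return "So far this is what I've understood:\n" + "\n".join(lines)

    def run(self, value: int) -> int:
        # Regenerate the program from the requirements, then execute it.
        return self.generate(self.requirements)(value)


session = NaturalLanguageSession(generate=toy_generate)
session.say("double the input")
first_result = session.run(3)    # the user tries an input
session.say("add one")           # the user revises in plain language
second_result = session.run(3)   # and reruns
```

In this toy run, the first result is 6 and the second is 7. The user's only artifact is the list of requirements, which is also what the system reads back.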

LLORENS: And now let’s maybe return to some of the more societally oriented topics that you were talking about at the top of the episode, in the context of programming. Because being able to program in natural language, I think, really changes, you know, who can use the technologies, who can develop technologies, what a software development team can actually be, and who that kind of a team can consist of. So can you paint a picture? You know, what kind of opportunities for software development does this open up when you can sort of program in natural languages, assuming we can make the AI compatible with your language, whatever that happens to be?

RAJAMANI: Yeah, I think there are a lot of opportunities, and maybe I’ll describe a few things that we’re already doing. My colleagues are working on a project called VeLLM, which is a copilot assistant for societal-scale applications. And one application they are going after is education. So, you know, India, like many other countries, has made a lot of educational resources available to teachers in government schools and so on, so that if a teacher wants to make a lesson plan, there is enough information available for them to search, find many videos that their colleagues have created from different parts of the country, and put them together to create a lesson plan for their class, right. But that is a very laborious process. I mean, you have information overload when you deal with it. So my colleagues are thinking about, can we now think about, in some sense, the teacher as a programmer and have the teacher talk to the VeLLM system, saying, “Hey, here is my lesson plan. Here is what I’m trying to put together in terms of what I want to teach. And I now want the AI system to collect the resources that are relevant to my lesson plan and get them in my language, the language that my students speak. You know, how do I do that,” right? And all of the things that I mentioned, right: you have to now index all of the existing information using vector indices. You have to now [use] retrieval augmented generation to get the correct thing. You have to now deal with the trunk and tail languages because this teacher might be speaking in a language that is not English, right. And the teacher might get a response that they don’t like, right. And how do they now … but they are not a programmer, right? How are they going to deal with it, right? So that’s actually an example.
If we pull this off, right, and a teacher in rural India is able to access this information in their own language and create a lesson plan which contains the best resources throughout the country, right, we would have really achieved something.
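The indexing and retrieval steps mentioned above can be sketched roughly as follows. This is a toy illustration, not how VeLLM is built: the resource catalog is made-up placeholder data, `embed` is a bag-of-words stand-in for a learned embedding model, and filtering on a language tag is an assumed simplification of the translation step. It shows only the general shape of retrieval augmented generation: index the resources as vectors, retrieve those closest to the teacher's request, and place them in the prompt handed to a model.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: word counts. A real system would use a learned model."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


# Placeholder catalog, invented purely for illustration.
RESOURCES = [
    {"title": "Video: photosynthesis in plants", "language": "hi"},
    {"title": "Worksheet: fractions and decimals", "language": "hi"},
    {"title": "Video: photosynthesis experiment with leaves", "language": "en"},
]

# The "vector index": each resource stored alongside its vector.
INDEX = [(embed(resource["title"]), resource) for resource in RESOURCES]


def retrieve(query, language, k=2):
    """Return up to k resources in the given language, most similar first."""
    query_vector = embed(query)
    scored = [
        (cosine(query_vector, vector), resource)
        for vector, resource in INDEX
        if resource["language"] == language
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [resource for score, resource in scored[:k] if score > 0]


def build_prompt(lesson_plan, resources):
    """Assemble the text that would be sent to a language model."""
    listing = "\n".join(f"- {resource['title']}" for resource in resources)
    return f"Lesson plan: {lesson_plan}\nRelevant resources:\n{listing}"


hits = retrieve("photosynthesis lesson for plants", language="hi")
prompt = build_prompt("photosynthesis lesson for plants", hits)
```

With this placeholder catalog, the only Hindi-tagged resource that shares words with the request is the photosynthesis video, so it is the single item that ends up in the prompt.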

LLORENS: Yeah, you know, it’s a hugely compelling vision. And I’m really looking forward to seeing where you and, you know, our colleagues in the Microsoft Research India lab and MSR [Microsoft Research] more broadly, you know, take all these different directions.

[MUSIC PLAYS] So I really appreciate you spending this time with me today.

RAJAMANI: Thank you, Ashley. And I was very happy that I could share the work that my colleagues are doing here and bring this to your audience. Thank you so much.

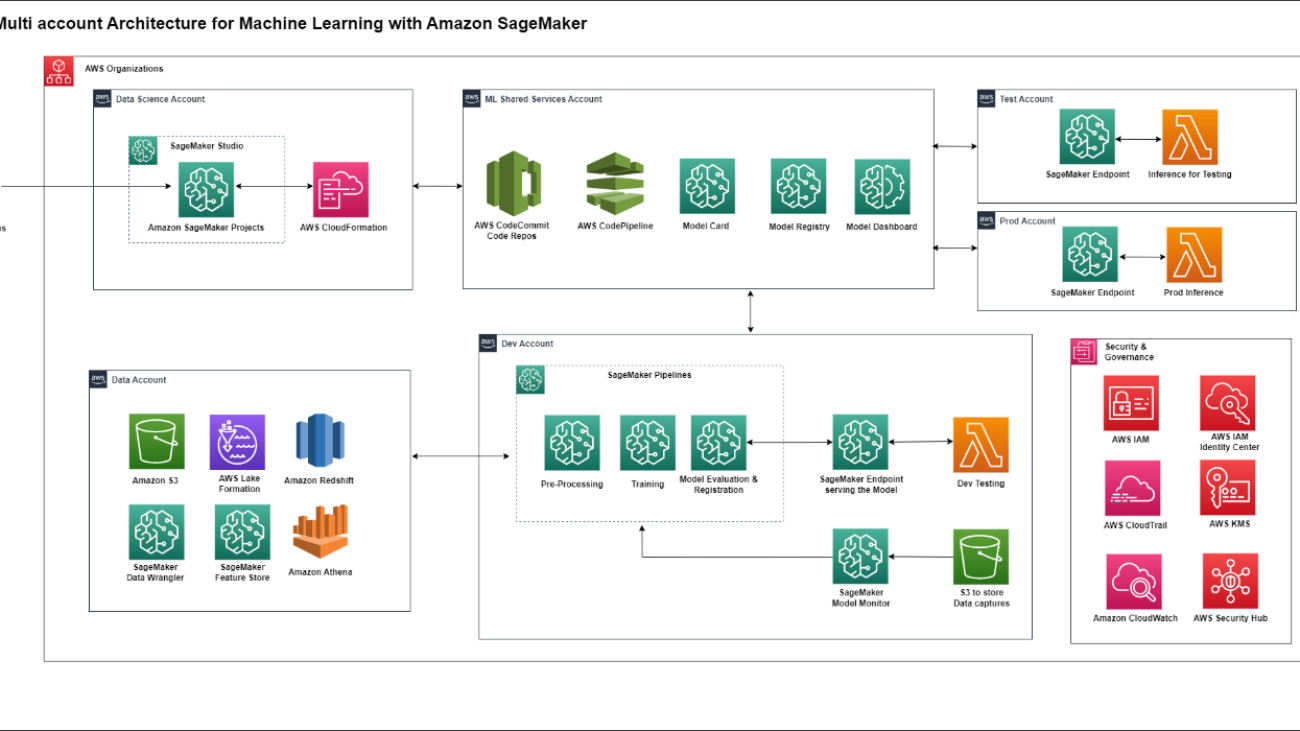

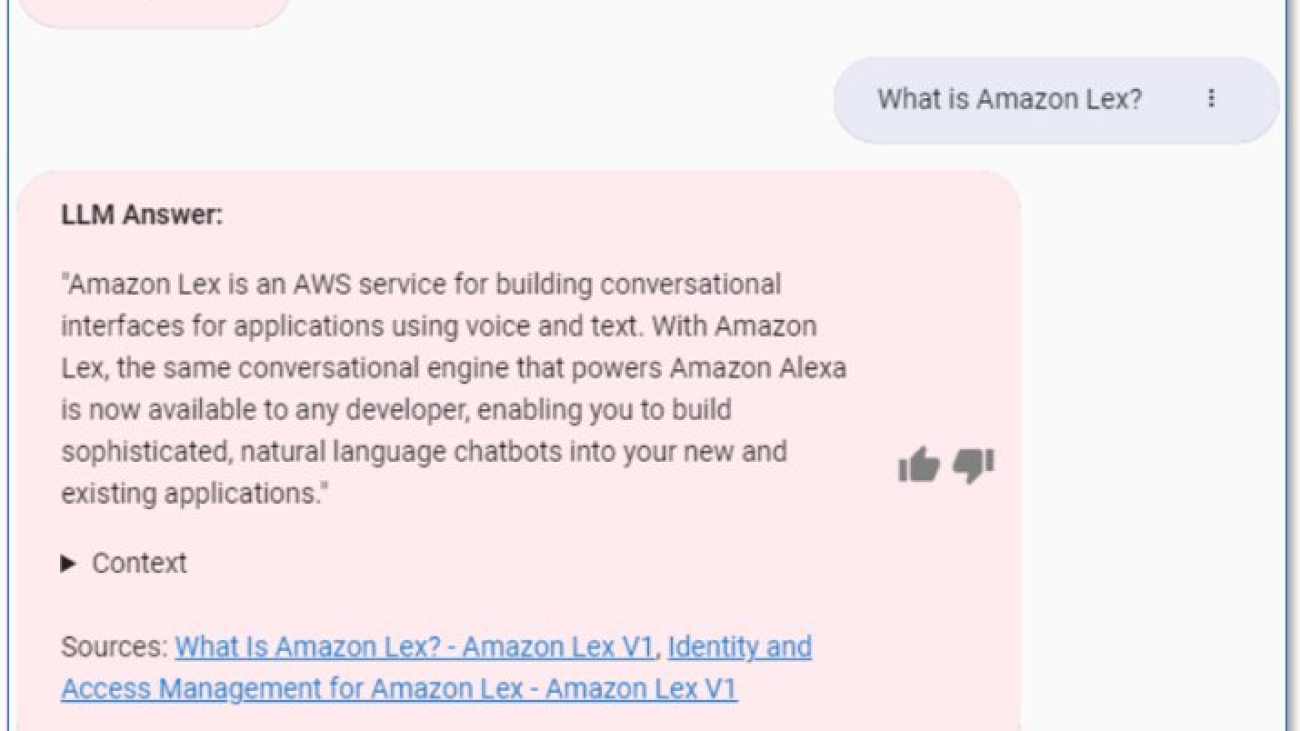

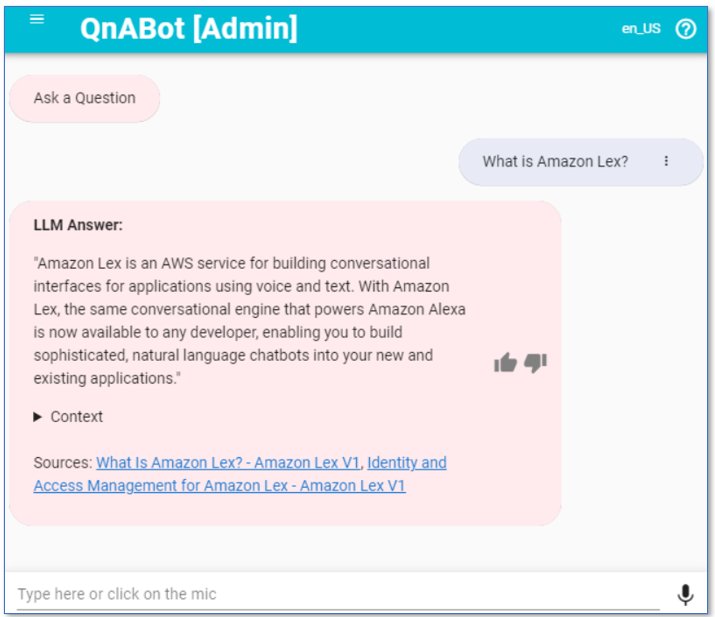

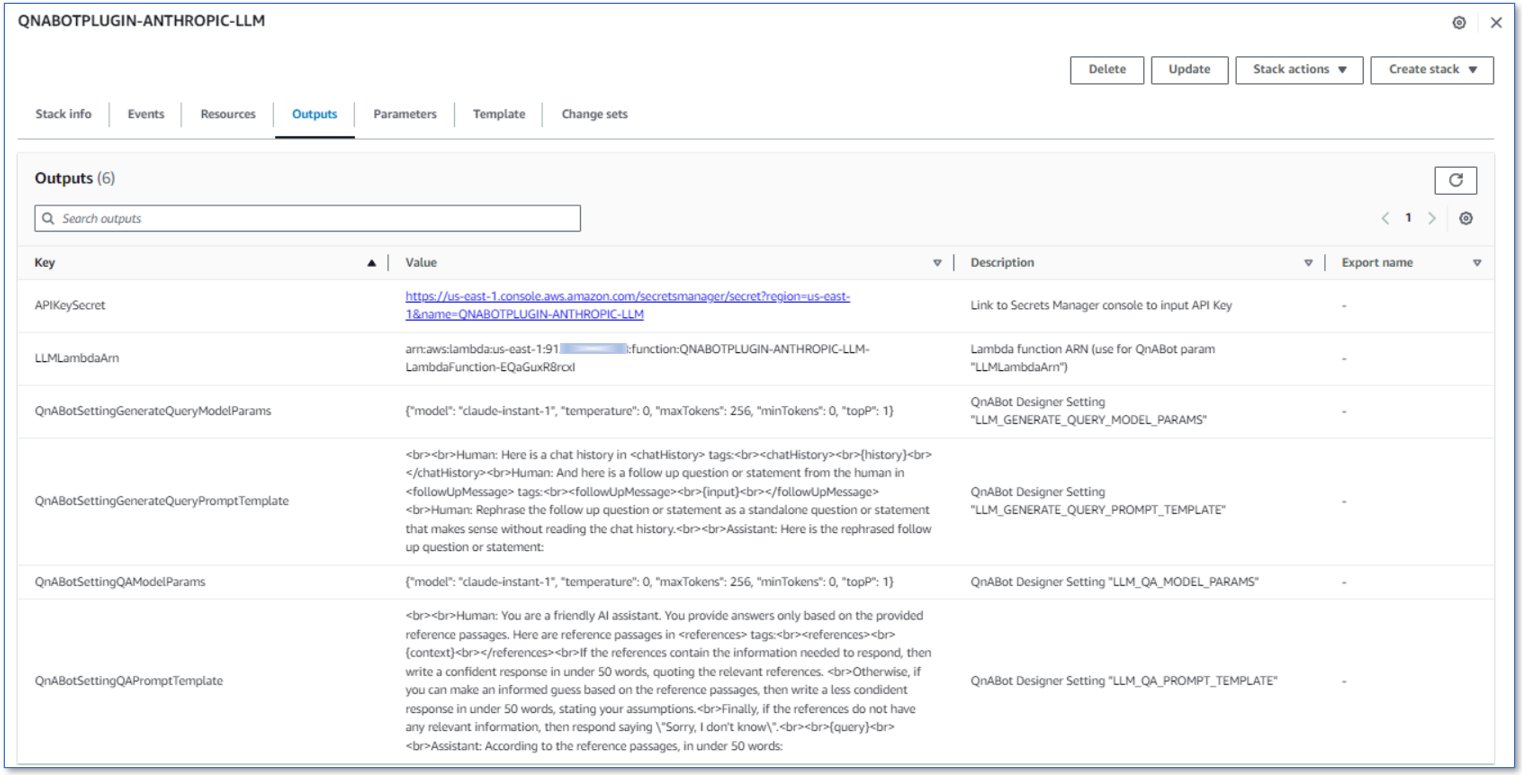

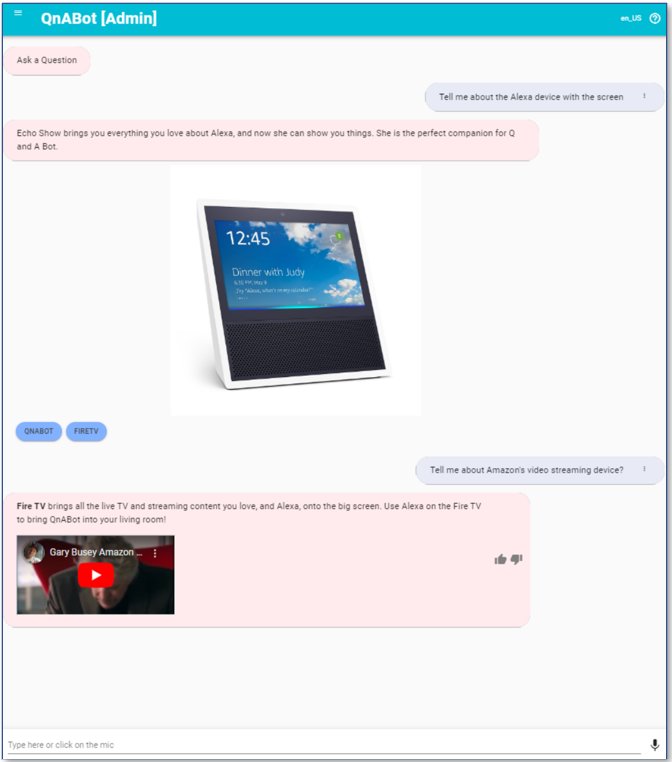
