We believe generative AI has the potential over time to transform virtually every customer experience we know. The number of companies launching generative AI applications on AWS is substantial and building quickly, including adidas, Booking.com, Bridgewater Associates, Clariant, Cox Automotive, GoDaddy, and LexisNexis Legal & Professional, to name just a few. Innovative startups like Perplexity AI are going all in on AWS for generative AI. Leading AI companies like Anthropic have selected AWS as their primary cloud provider for mission-critical workloads, and the place to train their future models. And global services and solutions providers like Accenture are reaping the benefits of customized generative AI applications as they empower their in-house developers with Amazon CodeWhisperer.

These customers are choosing AWS because we are focused on doing what we’ve always done—taking complex and expensive technology that can transform customer experiences and businesses and democratizing it for customers of all sizes and technical abilities. To do this, we’re investing and rapidly innovating to provide the most comprehensive set of capabilities across the three layers of the generative AI stack. The bottom layer is the infrastructure to train Large Language Models (LLMs) and other Foundation Models (FMs) and produce inferences or predictions. The middle layer is easy access to all of the models and tools customers need to build and scale generative AI applications with the same security, access control, and other features customers expect from an AWS service. And at the top layer, we’ve been investing in game-changing applications in key areas like generative AI-based coding. In addition to offering them choice and—as they expect from us—breadth and depth of capabilities across all layers, customers also tell us they appreciate our data-first approach, and trust that we’ve built everything from the ground up with enterprise-grade security and privacy.

This week we took a big step forward, announcing many significant new capabilities across all three layers of the stack to make it easy and practical for our customers to use generative AI pervasively in their businesses.

Bottom layer of the stack: AWS Trainium2 is the latest addition to deliver the most advanced cloud infrastructure for generative AI

The bottom layer of the stack is the infrastructure—compute, networking, frameworks, services—required to train and run LLMs and other FMs. AWS innovates to offer the most advanced infrastructure for ML. Through our long-standing collaboration with NVIDIA, AWS was the first to bring GPUs to the cloud more than 12 years ago, and most recently we were the first major cloud provider to make NVIDIA H100 GPUs available with our P5 instances. We continue to invest in unique innovations that make AWS the best cloud to run GPUs, including the price-performance benefits of the most advanced virtualization system (AWS Nitro), powerful petabit-scale networking with Elastic Fabric Adapter (EFA), and hyper-scale clustering with Amazon EC2 UltraClusters (thousands of accelerated instances co-located in an Availability Zone and interconnected in a non-blocking network that can deliver up to 3,200 Gbps for massive-scale ML training). We are also making it easier for any customer to access highly sought-after GPU compute capacity for generative AI with Amazon EC2 Capacity Blocks for ML—the first and only consumption model in the industry that lets customers reserve GPUs for future use (up to 500 deployed in EC2 UltraClusters) for short duration ML workloads.

Several years ago, we realized that to keep pushing the envelope on price performance we would need to innovate all the way down to the silicon, and we began investing in our own chips. For ML specifically, we started with AWS Inferentia, our purpose-built inference chip. Today, we are on our second generation of AWS Inferentia with Amazon EC2 Inf2 instances that are optimized specifically for large-scale generative AI applications with models containing hundreds of billions of parameters. Inf2 instances offer the lowest cost for inference in the cloud while also delivering up to four times higher throughput and up to ten times lower latency compared to Inf1 instances. Powered by up to 12 Inferentia2 chips, Inf2 are the only inference-optimized EC2 instances that have high-speed connectivity between accelerators so customers can run inference faster and more efficiently (at lower cost) without sacrificing performance or latency by distributing ultra-large models across multiple accelerators. Customers like Adobe, Deutsche Telekom, and Leonardo.ai have seen great early results and are excited to deploy their models at scale on Inf2.

On the training side, Trn1 instances—powered by AWS’s purpose-built ML training chip, AWS Trainium—are optimized to distribute training across multiple servers connected with EFA networking. Customers like Ricoh have trained a Japanese LLM with billions of parameters in mere days. Databricks is getting up to 40% better price-performance with Trainium-based instances to train large-scale deep learning models. But with new, more capable models coming out practically every week, we are continuing to push the boundaries on performance and scale, and we are excited to announce AWS Trainium2, designed to deliver even better price performance for training models with hundreds of billions to trillions of parameters. Trainium2 should deliver up to four times faster training performance than first-generation Trainium, and when used in EC2 UltraClusters should deliver up to 65 exaflops of aggregate compute. This means customers will be able to train a 300 billion parameter LLM in weeks versus months. Trainium2’s performance, scale, and energy efficiency are some of the reasons why Anthropic has chosen to train its models on AWS, and will use Trainium2 for its future models. And we are collaborating with Anthropic on continued innovation with both Trainium and Inferentia. We expect our first Trainium2 instances to be available to customers in 2024.

We’ve also been doubling down on the software tool chain for our ML silicon, specifically in advancing AWS Neuron, the software development kit (SDK) that helps customers get the maximum performance from Trainium and Inferentia. Since introducing Neuron in 2019 we’ve made substantial investments in compiler and framework technologies, and today Neuron supports many of the most popular publicly available models, including Llama 2 from Meta, MPT from Databricks, and Stable Diffusion from Stability AI, as well as 93 of the top 100 models on the popular model repository Hugging Face. Neuron plugs into popular ML frameworks like PyTorch and TensorFlow, and support for JAX is coming early next year. Customers are telling us that Neuron has made it easy for them to switch their existing model training and inference pipelines to Trainium and Inferentia with just a few lines of code.

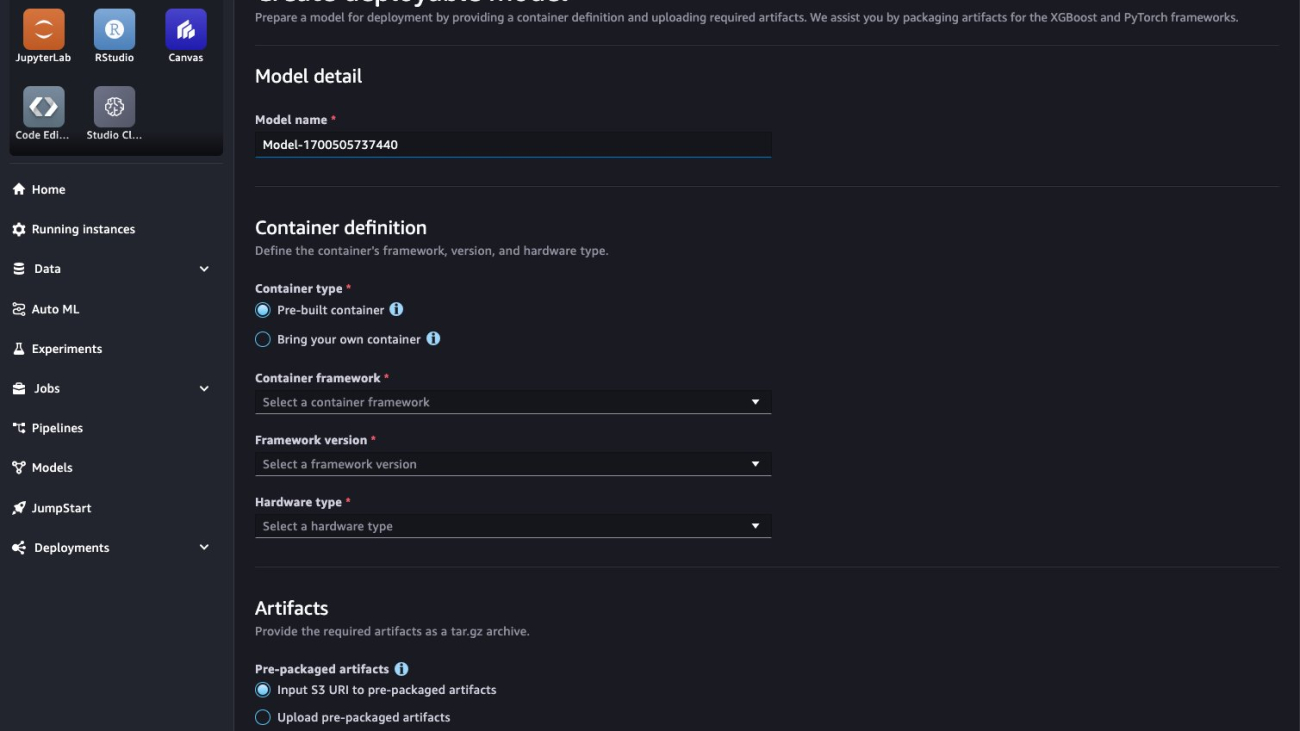

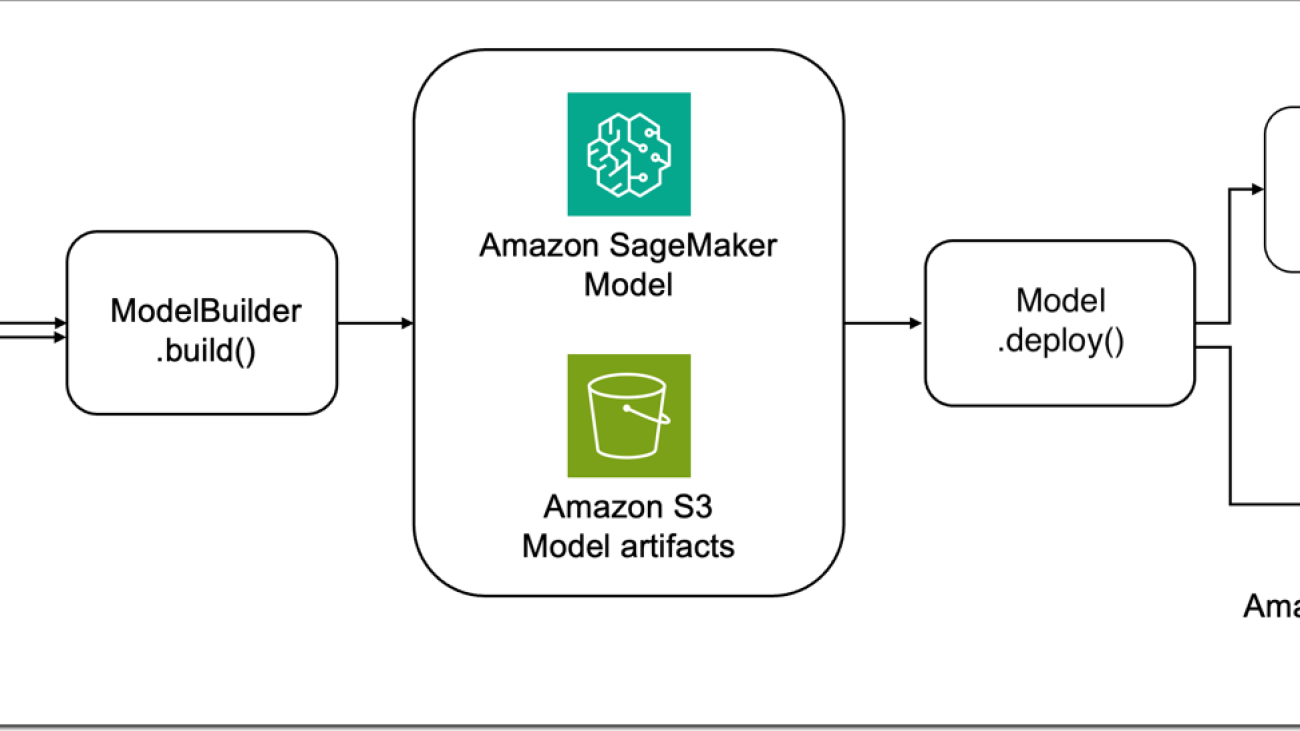

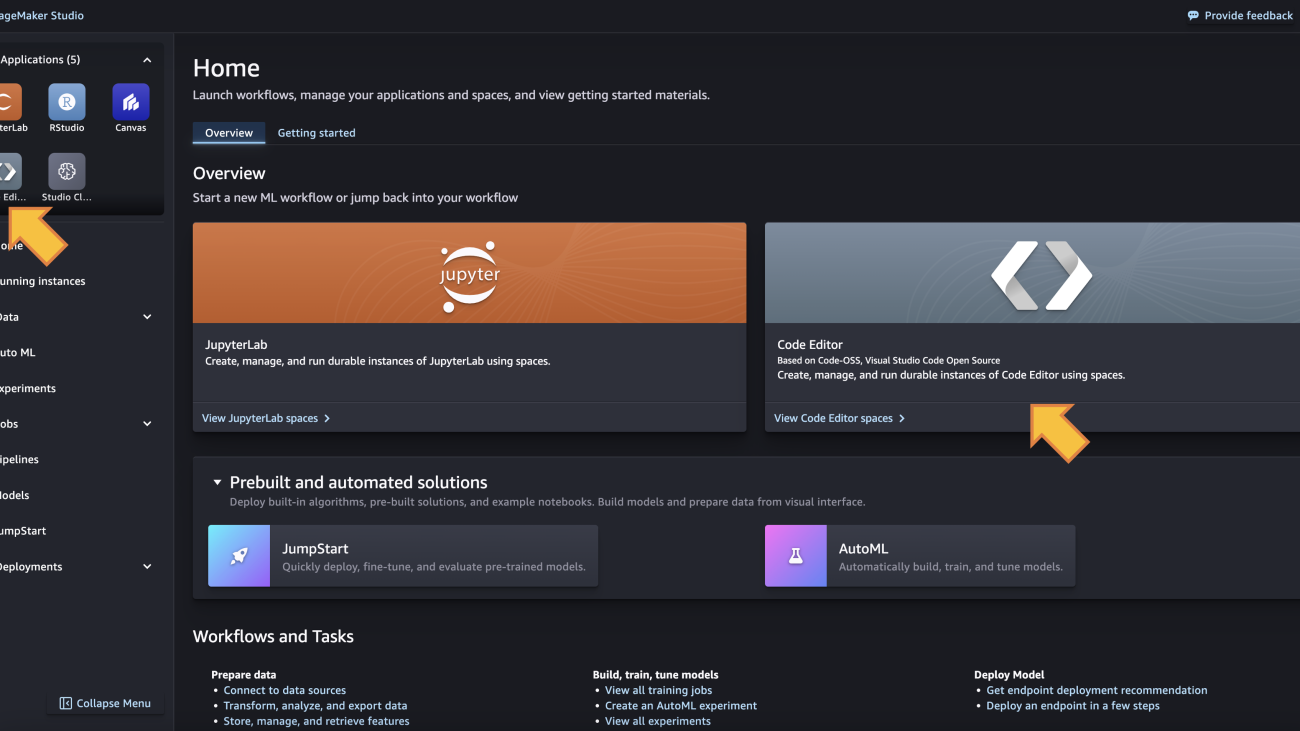

Nobody else offers this same combination of choice of the best ML chips, super-fast networking, virtualization, and hyper-scale clusters. And so, it’s not surprising that some of the most well-known generative AI startups like AI21 Labs, Anthropic, Hugging Face, Perplexity AI, Runway, and Stability AI run on AWS. But you still need the right tools to effectively leverage this compute to build, train, and run LLMs and other FMs efficiently and cost-effectively. And for many of these startups, Amazon SageMaker is the answer. Whether building and training a new, proprietary model from scratch or starting with one of the many popular publicly available models, training is a complex and expensive undertaking. It’s also not easy to run these models cost-effectively. Customers must acquire large amounts of data and prepare it. This typically involves a lot of manual work cleaning data, removing duplicates, enriching and transforming it. Then they have to create and maintain large clusters of GPUs/accelerators, write code to efficiently distribute model training across clusters, frequently checkpoint, pause, inspect and optimize the model, and manually intervene and remediate hardware issues in the cluster. Many of these challenges aren’t new; they’re some of the reasons why we launched SageMaker six years ago: to break down the many barriers involved in model training and deployment and give developers a much easier way. Tens of thousands of customers use Amazon SageMaker, and an increasing number of them, like LG AI Research, Perplexity AI, AI21, Hugging Face, and Stability AI, are training LLMs and other FMs on SageMaker. Just recently, Technology Innovation Institute (creators of the popular Falcon LLMs) trained the largest publicly available model, Falcon 180B, on SageMaker. As model sizes and complexity have grown, so has SageMaker’s scope.

Over the years, we’ve added more than 380 game-changing features and capabilities to Amazon SageMaker like automatic model tuning, distributed training, flexible model deployment options, tools for MLOps, tools for data preparation, feature stores, notebooks, seamless integration with human-in-the-loop evaluations across the ML lifecycle, and built-in features for responsible AI. We keep innovating rapidly to make sure SageMaker customers are able to keep building, training, and running inference for all models, including LLMs and other FMs. And we’re making it even easier and more cost-effective for customers to train and deploy large models with two new capabilities. First, to simplify training we’re introducing Amazon SageMaker HyperPod, which automates more of the processes required for high-scale fault-tolerant distributed training (e.g., configuring distributed training libraries, scaling training workloads across thousands of accelerators, detecting and repairing faulty instances), speeding up training by as much as 40%. As a result, customers like Perplexity AI, Hugging Face, Stability AI, Hippocratic, Alkaid, and others are using SageMaker HyperPod to build, train, or evolve models. Second, we’re introducing new capabilities to make inference more cost-effective while reducing latency. SageMaker now helps customers deploy multiple models to the same instance so that they can share compute resources, reducing inference cost by 50% (on average). SageMaker also actively monitors instances that are processing inference requests and intelligently routes requests based on which instances are available, achieving 20% lower inference latency (on average). Conjecture, Salesforce, and Slack are already using SageMaker for hosting models due to these inference optimizations.
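To give a feel for what routing requests based on instance availability can look like, here is a minimal sketch of a least-busy router. It is an illustration only and not how SageMaker implements its routing; the `Instance` and `LeastBusyRouter` names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Instance:
    """Hypothetical record of one inference instance and its in-flight requests."""
    name: str
    in_flight: int = 0


@dataclass
class LeastBusyRouter:
    """Send each request to the instance with the fewest in-flight requests."""
    instances: list = field(default_factory=list)

    def acquire(self) -> Instance:
        # min() returns the first instance on ties, so routing is deterministic.
        target = min(self.instances, key=lambda inst: inst.in_flight)
        target.in_flight += 1
        return target

    def release(self, instance: Instance) -> None:
        # Call when a request finishes so the instance becomes eligible again.
        instance.in_flight -= 1


router = LeastBusyRouter([Instance("a"), Instance("b"), Instance("c")])
first = router.acquire()    # all instances idle, so "a" is chosen
second = router.acquire()   # "a" is busy now, so "b" is chosen
router.release(first)       # "a" finishes its request
third = router.acquire()    # "a" and "c" are both idle; "a" comes first
```

Compared with sending requests to instances at random, a policy like this avoids queueing work behind an instance that is already busy, which is one plausible source of the latency improvement described above.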

Middle layer of the stack: Amazon Bedrock adds new models and a wave of new capabilities make it even easier for customers to securely build and scale generative AI applications

While a number of customers will build their own LLMs and other FMs, or evolve any number of the publicly available options, many will not want to spend the resources and time to do this. For them, the middle layer of the stack offers these models as a service. Our solution here, Amazon Bedrock, allows customers to choose from industry-leading models from Anthropic, Stability AI, Meta, Cohere, AI21, and Amazon, customize them with their own data, and leverage all of the same leading security, access controls, and features they are used to in AWS, all through a managed service. We made Amazon Bedrock generally available in late September, and customer response has been overwhelmingly positive. Customers from around the world and across virtually every industry are excited to use Amazon Bedrock. adidas is enabling developers to get quick answers on everything from “getting started” info to deeper technical questions. Booking.com intends to use generative AI to write up tailored trip recommendations for every customer. Bridgewater Associates is developing an LLM-powered Investment Analyst Assistant to help generate charts, compute financial indicators, and summarize results. Carrier is making more precise energy analytics and insights accessible to customers so they can reduce energy consumption and cut carbon emissions. Clariant is empowering its team members with an internal generative AI chatbot to accelerate R&D processes, support sales teams with meeting preparation, and automate customer emails. GoDaddy is helping customers easily set up their businesses online by using generative AI to build their websites, find suppliers, connect with customers, and more. LexisNexis Legal & Professional is transforming legal work for lawyers and increasing their productivity with Lexis+ AI conversational search, summarization, and document drafting and analysis capabilities. Nasdaq is helping to automate investigative workflows on suspicious transactions and strengthen their anti–financial crime and surveillance capabilities. All of these diverse generative AI applications, and many more, are running on AWS.

We are excited about the momentum for Amazon Bedrock, but it is still early days. What we’ve seen as we’ve worked with customers is that everyone is moving fast, but the evolution of generative AI continues at a rapid pace with new options and innovations happening practically daily. Customers are finding there are different models that work better for different use cases, or on different sets of data. Some models are great for summarization, others are great for reasoning and integration, and still others have really awesome language support. And then there is image generation, search use cases, and more—all coming from both proprietary models and from models that are publicly available to anyone. And in times when there is so much that is unknowable, the ability to adapt is arguably the most valuable tool of all. There is not going to be one model to rule them all. And certainly not just one technology company providing the models that everyone uses. Customers need to be trying out different models. They need to be able to switch between them or combine them within the same use case. This means they need a real choice of model providers (which the events of the past 10 days have made even more clear). This is why we invented Amazon Bedrock, why it resonates so deeply with customers, and why we are continuing to innovate and iterate quickly to make building with (and moving between) a range of models as easy as an API call, put the latest techniques for model customization in the hands of all developers, and keep customers secure and their data private. We’re excited to introduce several new capabilities that will make it even easier for customers to build and scale generative AI applications:

- Expanding model choice with Anthropic Claude 2.1, Meta Llama 2 70B, and additions to the Amazon Titan family. In these early days, customers are still learning and experimenting with different models to determine which ones they want to use for various purposes. They want to be able to easily try the latest models, and also test to see which capabilities and features will give them the best results and cost characteristics for their use cases. With Amazon Bedrock, customers are only ever one API call away from a new model. Some of the most impressive results customers have experienced these last few months are from LLMs like Anthropic’s Claude model, which excels at a wide range of tasks from sophisticated dialog and content generation to complex reasoning while maintaining a high degree of reliability and predictability. Customers report that Claude is much less likely to produce harmful outputs, easier to converse with, and more steerable compared to other FMs, so developers can get their desired output with less effort. Anthropic’s state-of-the-art model, Claude 2, scores above the 90th percentile on the GRE reading and writing exams, and similarly on quantitative reasoning. And now, the newly released Claude 2.1 model is available in Amazon Bedrock. Claude 2.1 delivers key capabilities for enterprises such as an industry-leading 200K token context window (2x the context of Claude 2.0), reduced rates of hallucination, and significant improvements in accuracy, even at very long context lengths. Claude 2.1 also includes improved system prompts – which are model instructions that provide a better experience for end users – while also reducing the cost of prompts and completions by 25%.

For a growing number of customers who want to use a managed version of Meta’s publicly available Llama 2 model, Amazon Bedrock offers Llama 2 13B, and we’re adding Llama 2 70B. Llama 2 70B is suitable for large-scale tasks such as language modeling, text generation, and dialogue systems. The publicly available Llama models have been downloaded more than 30M times, and customers love that Amazon Bedrock offers them as part of a managed service where they don’t need to worry about infrastructure or have deep ML expertise on their teams. Additionally, for image generation, Stability AI offers a suite of popular text-to-image models. Stable Diffusion XL 1.0 (SDXL 1.0) is the most advanced of these, and it is now generally available in Amazon Bedrock. The latest edition of this popular image model has increased accuracy, better photorealism, and higher resolution.

Customers are also using Amazon Titan models, which are created and pretrained by AWS to offer powerful capabilities with great economics for a variety of use cases. Amazon has a 25-year track record in ML and AI, technology we use across our businesses, and we have learned a lot about building and deploying models. We have carefully chosen how we train our models and the data we use to do so. We indemnify customers against claims that our models or their outputs infringe on anyone’s copyright. We introduced our first Titan models in April of this year. Titan Text Lite (now generally available) is a succinct, cost-effective model for use cases like chatbots, text summarization, or copywriting, and it is also compelling to fine-tune. Titan Text Express (also now generally available) is more expansive, and can be used for a wider range of text-based tasks, such as open-ended text generation and conversational chat. We offer these text model options to give customers the ability to optimize for accuracy, performance, and cost depending on their use case and business requirements. Customers like Nexxiot, PGA Tour, and Ryanair are using our two Titan Text models. We also have an embeddings model, Titan Text Embeddings, for search use cases and personalization. Customers like Nasdaq are seeing great results using Titan Text Embeddings to enhance capabilities for Nasdaq IR Insight to generate insights from 9,000+ global companies’ documents for sustainability, legal, and accounting teams. And we’ll continue to add more models to the Titan family over time. We are introducing a new embeddings model, Titan Multimodal Embeddings, to power multimodal search and recommendation experiences for users using images and text (or a combination of both) as inputs. And we are introducing a new text-to-image model, Amazon Titan Image Generator. With Titan Image Generator, customers across industries like advertising, e-commerce, and media and entertainment can use a text input to generate realistic, studio-quality images in large volumes and at low cost. We are excited about how customers are responding to Titan Models, and you can expect that we’ll continue to innovate here.
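As a rough illustration of being one API call away from a new model, the sketch below keeps the calling code fixed and changes only the model ID. The model IDs and the request and response field names reflect our understanding of the Amazon Bedrock runtime API at the time of writing and should be treated as assumptions to check against the current documentation; `build_body`, `extract_text`, and `generate` are hypothetical helper names.

```python
import json

# Assumed model identifiers; verify against the current Bedrock model list.
CLAUDE = "anthropic.claude-v2:1"
LLAMA = "meta.llama2-70b-chat-v1"
TITAN = "amazon.titan-text-express-v1"


def build_body(model_id: str, prompt: str, max_tokens: int = 256) -> dict:
    """Translate one prompt into the request body each model family expects."""
    if model_id.startswith("anthropic."):
        return {
            "prompt": f"\n\nHuman: {prompt}\n\nAssistant:",
            "max_tokens_to_sample": max_tokens,
        }
    if model_id.startswith("meta."):
        return {"prompt": prompt, "max_gen_len": max_tokens}
    if model_id.startswith("amazon.titan-text"):
        return {
            "inputText": prompt,
            "textGenerationConfig": {"maxTokenCount": max_tokens},
        }
    raise ValueError(f"no request template for {model_id}")


def extract_text(model_id: str, payload: dict) -> str:
    """Pull the generated text out of each family's response shape."""
    if model_id.startswith("anthropic."):
        return payload["completion"]
    if model_id.startswith("meta."):
        return payload["generation"]
    if model_id.startswith("amazon.titan-text"):
        return payload["results"][0]["outputText"]
    raise ValueError(f"no response parser for {model_id}")


def generate(client, model_id: str, prompt: str) -> str:
    """Call whichever model is named; only model_id changes between providers."""
    response = client.invoke_model(
        modelId=model_id,
        body=json.dumps(build_body(model_id, prompt)),
        contentType="application/json",
        accept="application/json",
    )
    return extract_text(model_id, json.loads(response["body"].read()))
```

With AWS credentials configured, the `client` argument would typically be `boto3.client("bedrock-runtime")`, and comparing models becomes a loop over `[CLAUDE, LLAMA, TITAN]` that calls `generate` with the same prompt.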

- New capabilities to customize your generative AI application securely with your proprietary data: One of the most important capabilities of Amazon Bedrock is how easy it is to customize a model. This becomes truly exciting for customers because it’s where generative AI meets their core differentiator: their data. However, it is really important that their data remains secure, that they have control of it along the way, and that model improvements are private to them. There are a few ways that you can do this, and Amazon Bedrock offers the broadest selection of customization options across multiple models. The first is fine-tuning. Fine-tuning a model in Amazon Bedrock is easy. You simply select the model and Amazon Bedrock makes a copy of it. Then you point to a few labeled examples (e.g., a series of good question-answer pairs) that you store in Amazon Simple Storage Service (Amazon S3), and Amazon Bedrock “incrementally trains” (augments the copied model with the new information) on these examples, and the result is a private, more accurate fine-tuned model that delivers more relevant, customized responses. We are excited to announce that fine-tuning is generally available for Cohere Command, Meta Llama 2, Amazon Titan Text (Lite and Express), Amazon Titan Multimodal Embeddings, and in preview for Amazon Titan Image Generator. And, through our collaboration with Anthropic, we will soon provide AWS customers early access to unique features for model customization and fine-tuning of its state-of-the-art model Claude.
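The labeled question-answer pairs mentioned above are usually stored as JSON Lines, one example per line. The sketch below prepares such a file body in memory; the `prompt` and `completion` field names follow our understanding of the text fine-tuning format and are an assumption to confirm in the Bedrock documentation. The sample pairs and the `to_jsonl` helper are hypothetical.

```python
import json

# Hypothetical labeled question-answer pairs.
examples = [
    ("What is your return window?", "You can return items within 30 days."),
    ("Do you ship internationally?", "Yes, we ship to most countries."),
]


def to_jsonl(pairs) -> str:
    """Serialize (question, answer) pairs as one JSON object per line."""
    lines = []
    for question, answer in pairs:
        # Reject empty fields early; they add nothing to training.
        if not question.strip() or not answer.strip():
            raise ValueError("empty prompt or completion")
        lines.append(json.dumps({"prompt": question, "completion": answer}))
    return "\n".join(lines) + "\n"


training_data = to_jsonl(examples)
```

The resulting text would then be uploaded to an Amazon S3 location that the fine-tuning job is pointed at.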

A second technique for customizing LLMs and other FMs for your business is retrieval augmented generation (RAG), which allows you to customize a model’s responses by augmenting your prompts with data from multiple sources, including document repositories, databases, and APIs. In September, we introduced a RAG capability, Knowledge Bases for Amazon Bedrock, that securely connects models to your proprietary data sources to supplement your prompts with more information so your applications deliver more relevant, contextual, and accurate responses. Knowledge Bases is now generally available with an API that performs the entire RAG workflow from fetching text needed to augment a prompt, to sending the prompt to the model, to returning the response. Knowledge Bases supports databases with vector capabilities that store numerical representations of your data (embeddings) that models use to access this data for RAG, including Amazon OpenSearch Service, and other popular databases like Pinecone and Redis Enterprise Cloud (Amazon Aurora and MongoDB vector support coming soon).
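The RAG workflow described above (fetch relevant text, augment the prompt, send it to the model, return the response) can be sketched in a few lines. This is a toy version under stated assumptions: the bag-of-words `embed` function stands in for a real embeddings model, the in-memory list stands in for a vector database, and `model` is any callable that takes a prompt. None of these names come from Knowledge Bases for Amazon Bedrock.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (real systems use a model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, documents: list, k: int = 1) -> list:
    """Step 1: fetch the k documents most similar to the question."""
    query = embed(question)
    ranked = sorted(documents, key=lambda doc: cosine(query, embed(doc)), reverse=True)
    return ranked[:k]


def answer(question: str, documents: list, model) -> str:
    """Steps 2 to 4: augment the prompt, call the model, return its response."""
    context = "\n".join(retrieve(question, documents))
    prompt = f"Use the context to answer.\n\nContext:\n{context}\n\nQuestion: {question}"
    return model(prompt)


docs = [
    "Refunds are issued within 30 days of purchase.",
    "Our support line is open weekdays from 9 to 5.",
]


def echo_model(prompt: str) -> str:
    # Stand-in for a real model call: it returns the prompt it was given.
    return prompt


result = answer("When are refunds issued?", docs, echo_model)
```

Because `echo_model` simply returns its input, `result` shows exactly what a real model would receive: the question plus the retrieved refund policy, and not the unrelated support-hours document.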

The third way you can customize models in Amazon Bedrock is with continued pre-training. With this method, the model builds on its original pre-training for general language understanding to learn domain-specific language and terminology. This approach is for customers who have large troves of unlabeled, domain-specific information and want to enable their LLMs to understand the language, phrases, abbreviations, concepts, definitions, and jargon unique to their world (and business). Unlike fine-tuning, which takes a fairly small amount of data, continued pre-training is performed on large data sets (e.g., thousands of text documents). Continued pre-training is now available in Amazon Bedrock for Titan Text Lite and Titan Text Express.

- General availability of Agents for Amazon Bedrock to help execute multistep tasks using systems, data sources, and company knowledge. LLMs are great at having conversations and generating content, but customers want their applications to be able to do even more, like take actions, solve problems, and interact with a range of systems to complete multistep tasks like booking travel, filing insurance claims, or ordering replacement parts. Agents for Amazon Bedrock can help with this challenge. With agents, developers select a model, write a few basic instructions like “you are a cheerful customer service agent” and “check product availability in the inventory system,” point the selected model to the right data sources and enterprise systems (e.g., CRM or ERP applications), and write a few AWS Lambda functions to execute the APIs (e.g., check availability of an item in the ERP inventory). Amazon Bedrock automatically analyzes the request and breaks it down into a logical sequence using the selected model’s reasoning capabilities to determine what information is needed, what APIs to call, and when to call them to complete a step or solve a task. Now generally available, agents can plan and perform most business tasks, from answering customer questions about your product availability to taking their orders, and developers don’t need to be familiar with machine learning, engineer prompts, train models, or manually connect systems. Amazon Bedrock does all of this securely and privately, and customers like Druva and Athene are already using agents to improve the accuracy and speed of development of their generative AI applications.
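As an example of the kind of AWS Lambda function mentioned above, here is a minimal sketch of a handler that checks item availability. The event and response shapes follow our understanding of the contract between an agent and its Lambda function and should be verified against the current documentation; the `INVENTORY` dictionary, the `itemId` parameter, and the API path are hypothetical.

```python
import json

# Hypothetical inventory; a real function would query the ERP system instead.
INVENTORY = {"widget-42": 7, "gizmo-7": 0}


def lambda_handler(event, context):
    """Handle a check-availability call made on the agent's behalf."""
    # Parameters are assumed to arrive as a list of {name, type, value} records.
    params = {p["name"]: p["value"] for p in event.get("parameters", [])}
    item_id = params.get("itemId", "")
    quantity = INVENTORY.get(item_id, 0)
    body = {"itemId": item_id, "available": quantity > 0, "quantity": quantity}
    # Echo the routing fields back so the agent can match the reply to its call.
    return {
        "messageVersion": "1.0",
        "response": {
            "actionGroup": event.get("actionGroup"),
            "apiPath": event.get("apiPath"),
            "httpMethod": event.get("httpMethod"),
            "httpStatusCode": 200,
            "responseBody": {"application/json": {"body": json.dumps(body)}},
        },
    }


sample_event = {
    "actionGroup": "inventory",
    "apiPath": "/items/availability",
    "httpMethod": "GET",
    "parameters": [{"name": "itemId", "type": "string", "value": "widget-42"}],
}
reply = lambda_handler(sample_event, None)
```

The function contains only business logic. Deciding when to call it, and with which item, is left to the agent’s orchestration.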

- Introducing Guardrails for Amazon Bedrock so you can apply safeguards based on your use case requirements and responsible AI policies. Customers want to be sure that interactions with their AI applications are safe, avoid toxic or offensive language, stay relevant to their business, and align with their responsible AI policies. With guardrails, customers can specify topics to avoid, and Amazon Bedrock will only provide users with approved responses to questions that fall in those restricted categories. For example, an online banking application can be set up to avoid providing investment advice, and remove inappropriate content (such as hate speech and violence). In early 2024, customers will also be able to redact personally identifiable information (PII) in model responses. For example, after a customer interacts with a call center agent the customer service conversation is often summarized for record keeping, and guardrails can remove PII from those summaries. Guardrails can be used across models in Amazon Bedrock (including fine-tuned models), and with Agents for Amazon Bedrock so customers can bring a consistent level of protection to all of their generative AI applications.
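To illustrate the two behaviors described above (returning an approved response for restricted topics and removing PII from model output), here is a deliberately simple sketch. It uses keyword matching and regular expressions, which is an assumption made for brevity and not how Guardrails for Amazon Bedrock works internally; all names and patterns are hypothetical.

```python
import re

# Hypothetical policy: topics to avoid and the keywords that signal them.
DENIED_TOPICS = {"investment advice": ["invest", "stock", "portfolio"]}
APPROVED_RESPONSE = "Sorry, I can't help with that topic."

# Simplified patterns; real PII detection covers far more cases.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def blocked_topic(user_input: str):
    """Return the name of the denied topic the input touches, or None."""
    text = user_input.lower()
    for topic, keywords in DENIED_TOPICS.items():
        if any(word in text for word in keywords):
            return topic
    return None


def redact_pii(text: str) -> str:
    """Replace email addresses and phone numbers with placeholders."""
    return PHONE.sub("[PHONE]", EMAIL.sub("[EMAIL]", text))


def guarded_reply(user_input: str, model) -> str:
    """Apply the topic check before the model and PII redaction after it."""
    if blocked_topic(user_input):
        return APPROVED_RESPONSE
    return redact_pii(model(user_input))
```

Placing the checks in a wrapper like `guarded_reply` is what allows the same policy to sit in front of any model, which mirrors the cross-model consistency described above.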

Top layer of the stack: Continued innovation makes generative AI accessible to more users

At the top layer of the stack are applications that leverage LLMs and other FMs so that you can take advantage of generative AI at work. One area where generative AI is already changing the game is in coding. Last year, we introduced Amazon CodeWhisperer, which helps you build applications faster and more securely by generating code suggestions and recommendations in near real-time. Customers like Accenture, Boeing, Bundesliga, The Cigna Group, Kone, and Warner Music Group are using CodeWhisperer to increase developer productivity—and Accenture is enabling up to 50,000 of their software developers and IT professionals with Amazon CodeWhisperer. We want as many developers as possible to be able to get the productivity benefits of generative AI, which is why CodeWhisperer offers recommendations for free to all individuals.

However, while AI coding tools do a lot to make developers’ lives easier, their productivity benefits are limited by their lack of knowledge of internal code bases, internal APIs, libraries, packages and classes. One way to think about this is that if you hire a new developer, even if they’re world-class, they’re not going to be that productive at your company until they understand your best practices and code. Today’s AI-powered coding tools are like that new-hire developer. To help with this, we recently previewed a new customization capability in Amazon CodeWhisperer that securely leverages a customer’s internal code base to provide more relevant and useful code recommendations. With this capability, CodeWhisperer is an expert on your code and provides recommendations that are more relevant to save even more time. In a study we did with Persistent, a global digital engineering and enterprise modernization company, we found that customizations help developers complete tasks up to 28% faster than with CodeWhisperer’s general capabilities. Now a developer at a healthcare technology company can ask CodeWhisperer to “import MRI images associated with the customer ID and run them through the image classifier” to detect anomalies. Because CodeWhisperer has access to the code base it can provide much more relevant suggestions that include the import locations of the MRI images and customer IDs. CodeWhisperer keeps customizations completely private, and the underlying FM does not use them for training, protecting customers’ valuable intellectual property. AWS is the only major cloud provider that offers a capability like this to everyone.

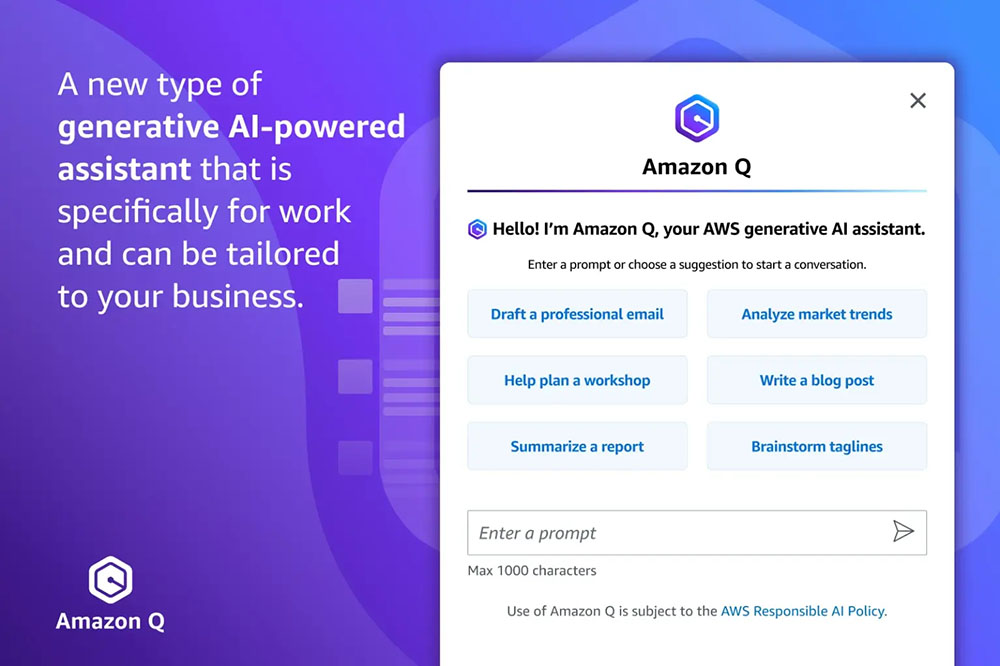

Introducing Amazon Q, the generative AI-powered assistant tailored for work

Developers certainly aren’t the only ones who are getting hands on with generative AI—millions of people are using generative AI chat applications. What early providers have done in this space is exciting and super useful for consumers, but in a lot of ways they don’t quite “work” at work. Their general knowledge and capabilities are great, but they don’t know your company, your data, your customers, your operations, or your business. That limits how much they can help you. They also don’t know much about your role—what work you do, who you work with, what information you use, and what you have access to. These limitations are understandable because these assistants don’t have access to your company’s private information, and they weren’t designed to meet the data privacy and security requirements companies need to give them this access. It’s hard to bolt on security after the fact and expect it to work well. We think we have a better way, which will allow every person in every organization to use generative AI safely in their day-to-day work.

We are excited to introduce Amazon Q, a new type of generative AI-powered assistant that is specifically for work and can be tailored to your business. Q can help you get fast, relevant answers to pressing questions, solve problems, generate content, and take actions using the data and expertise found in your company’s information repositories, code, and enterprise systems. When you chat with Amazon Q, it provides immediate, relevant information and advice to help streamline tasks, speed decision-making, and help spark creativity and innovation at work. We have built Amazon Q to be secure and private, and it can understand and respect your existing identities, roles, and permissions and use this information to personalize its interactions. If a user doesn’t have permission to access certain data without Q, they can’t access it using Q either. We have designed Amazon Q to meet stringent enterprise customers’ requirements from day one—none of their content is used to improve the underlying models.
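The permission rule above (if a user cannot access data without Q, they cannot access it through Q) amounts to filtering by the user’s existing access rights before the assistant sees any content. Below is a minimal sketch of that idea; the `Document` type, group names, and `visible_documents` helper are hypothetical and say nothing about how Amazon Q is built.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    """Hypothetical document tagged with the groups allowed to read it."""
    title: str
    text: str
    allowed_groups: frozenset


def visible_documents(user_groups, documents):
    """Keep only documents the user could already open without the assistant."""
    groups = set(user_groups)
    # A document is visible when the user shares at least one allowed group.
    return [doc for doc in documents if groups & doc.allowed_groups]


corpus = [
    Document("Holiday schedule", "Offices close on public holidays.", frozenset({"everyone"})),
    Document("Salary bands", "Compensation ranges by level.", frozenset({"hr"})),
]

engineer_view = visible_documents({"everyone", "engineering"}, corpus)
hr_view = visible_documents({"everyone", "hr"}, corpus)
```

Only the filtered list would be passed on to retrieval or prompt construction, so restricted content never reaches the model for a user who lacks access.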

Amazon Q is your expert assistant for building on AWS: We’ve trained Amazon Q on 17 years’ worth of AWS knowledge and experience so it can transform the way you build, deploy, and operate applications and workloads on AWS. Amazon Q has a chat interface in the AWS Management Console and documentation, your IDE (via CodeWhisperer), and your team chat rooms on Slack or other chat apps. Amazon Q can help you explore new AWS capabilities, get started faster, learn unfamiliar technologies, architect solutions, troubleshoot, upgrade, and much more. It is an expert in AWS well-architected patterns, best practices, documentation, and solutions implementations. Here are some examples of what you can do with your new AWS expert assistant:

- Get crisp answers and guidance on AWS capabilities, services, and solutions: Ask Amazon Q to “Tell me about Agents for Amazon Bedrock,” and Q will give you a description of the feature plus links to relevant materials. You can also ask Amazon Q virtually any question about how an AWS service works (e.g., “What are the scaling limits on a DynamoDB table?” “What is Redshift Managed Storage?”), or how to best architect any number of solutions (“What are the best practices for building event-driven architectures?”). And Amazon Q will pull together succinct answers and always cite (and link to) its sources.

- Choose the best AWS service for your use case, and get started quickly: Ask Amazon Q “What are the ways to build a Web app on AWS?” and it will provide a list of potential services like AWS Amplify, AWS Lambda, and Amazon EC2 with the advantages of each. From there you can narrow down the options by helping Q understand your requirements, preferences, and constraints (e.g., “Which of these would be best if I want to use containers?” or “Should I use a relational or non-relational database?”). Finish up with “How do I get started?” and Amazon Q will outline some basic steps and point you towards additional resources.

- Optimize your compute resources: Amazon Q can help you select Amazon EC2 instances. If you ask it to “Help me find the right EC2 instance to deploy a video encoding workload for my gaming app with the highest performance,” Q will give you a list of instance families with reasons for each suggestion. You can then ask any number of follow-up questions to help find the best choice for your workload.

- Get assistance debugging, testing, and optimizing your code: If you encounter an error while coding in your IDE, you can ask Amazon Q to help by saying, “My code has an IO error, can you provide a fix?” and Q will generate the code for you. If you like the suggestion, you can ask Amazon Q to add the fix to your application. Since Amazon Q is in your IDE, it understands the code you are working on and knows where to insert the fix. Amazon Q can also create unit tests (“Write unit tests for the selected function”) that it can insert into your code and you can run. Finally, Amazon Q can tell you ways to optimize your code for higher performance. Ask Q to “Optimize my selected DynamoDB query,” and it will use its understanding of your code to provide a natural language suggestion on what to fix along with the accompanying code you can implement in one click.

- Diagnose and troubleshoot issues: If you encounter issues in the AWS Management Console, like EC2 permissions errors or Amazon S3 configuration errors, you can simply press the “Troubleshoot with Amazon Q” button, and it will use its understanding of the error type and the service where the error is located to give you suggestions for a fix. You can even ask Amazon Q to troubleshoot your network (e.g., “Why can’t I connect to my EC2 instance using SSH?”) and Q will analyze your end-to-end configuration and provide a diagnosis (e.g., “This instance appears to be in a private subnet, so public accessibility may need to be established”).

- Ramp up on a new code base in no time: When you chat with Amazon Q in your IDE, it combines its expertise in building software with an understanding of your code—a powerful pairing! Previously, if you took over a project from someone else, or you were new to the team, you might have to spend hours manually reviewing the code and documentation to understand how it works and what it does. Now, since Amazon Q understands the code in your IDE, you can simply ask Amazon Q to explain the code (“Provide me with a description of what this application does and how it works”) and Q will give you details like which services the code uses and what different functions do (e.g., Q might answer with something like, “This application is building a basic support ticket system using Python Flask and AWS Lambda” and go on to describe each of its core capabilities, how they are implemented, and much more).

- Clear your feature backlog faster: You can even ask Amazon Q to guide you through and automate much of the end-to-end process of adding a feature to your application in Amazon CodeCatalyst, our unified software development service for teams. To do this, you just assign Q a backlog task from your issues list – just like you would a teammate – and Q generates a step-by-step plan for how it will build and implement the feature. Once you approve the plan, Q will write the code and present the suggested changes to you as a code review. You can request rework (if necessary), approve and/or deploy!

- Upgrade your code in a fraction of the time: Most developers actually only spend a fraction of their time writing new code and building new applications. They spend a lot more of their cycles on painful, sloggy areas like maintenance and upgrades. Take language version upgrades. A large number of customers continue using older versions of Java because it will take months—even years—and thousands of hours of developer time to upgrade. Putting this off has real costs and risks—you miss out on performance improvements and are vulnerable to security issues. We think Amazon Q can be a game changer here, and are excited about Amazon Q Code Transformation, a feature which can remove a lot of this heavy lifting and reduce the time it takes to upgrade applications from days to minutes. You just open the code you want to update in your IDE, and ask Amazon Q to “/transform” your code. Amazon Q will analyze the entire source code of the application, generate the code in the target language and version, and execute tests, helping you realize the security and performance enhancements of the latest language versions. Recently, a very small team of Amazon developers used Amazon Q Code Transformation to upgrade 1,000 production applications from Java 8 to Java 17 in just two days. The average time per application was less than 10 minutes. Today Amazon Q Code Transformation performs Java language upgrades from Java 8 or Java 11 to Java 17. Coming next (and soon) is the ability to transform .NET Framework to cross-platform .NET (with even more transformations to follow in the future).
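As a rough sanity check on the figures in the last item, the arithmetic below works out what they imply. It assumes that “two days” means 48 wall-clock hours and treats the stated 10-minute average as an upper bound. Neither assumption comes from the text, and the function name is purely illustrative.

```python
import math

def min_parallel_runs(apps: int, minutes_per_app: float, wall_clock_hours: float) -> int:
    """Smallest number of concurrent transformations that fits the time budget."""
    total_minutes = apps * minutes_per_app      # effort if run one after another
    budget_minutes = wall_clock_hours * 60      # time actually available
    return math.ceil(total_minutes / budget_minutes)

# Figures from the text; 48 hours is an assumed reading of "two days".
total_hours = 1_000 * 10 / 60
print(f"Sequential effort: about {total_hours:.0f} hours")
print("Concurrent runs needed:", min_parallel_runs(1_000, 10, 48))
```

Under these assumptions, roughly 167 hours of transformation time fits into two days only if about four upgrades run at once, which is consistent with a small team starting several transformations in parallel.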

Amazon Q is your business expert: You can connect Amazon Q to your business data, information, and systems so that it can synthesize everything and provide tailored assistance to help people solve problems, generate content, and take actions that are relevant to your business. Bringing Amazon Q to your business is easy. It has 40+ built-in connectors to popular enterprise systems such as Amazon S3, Microsoft 365, Salesforce, ServiceNow, Slack, Atlassian, Gmail, Google Drive, and Zendesk. It can also connect to your internal intranet, wikis, and run books, and with the Amazon Q SDK, you can build a connection to whichever internal application you would like. Point Amazon Q at these repositories, and it will “ramp up” on your business, capturing and understanding the semantic information that makes your company unique. Then, you get your own friendly and simple Amazon Q web application so that employees across your company can interact with the conversational interface. Amazon Q also connects to your identity provider to understand a user, their role, and what systems they are permitted to access so that users can ask detailed, nuanced questions and get tailored results that include only information they are authorized to see. Amazon Q generates answers and insights that are accurate and faithful to the material and knowledge that you provide it, and you can restrict sensitive topics, block keywords, or filter out inappropriate questions and answers. Here are a few examples of what you can do with your business’s new expert assistant:

- Get crisp, super-relevant answers based on your business data and information: Employees can ask Amazon Q about anything they might have previously had to search around for across all kinds of sources. Ask “What are the latest guidelines for logo usage?”, or “How do I apply for a company credit card?”, and Amazon Q will synthesize all of the relevant content it finds and come back with fast answers plus links to the relevant sources (e.g., brand portals and logo repositories, company T&E policies, and card applications).

- Streamline day-to-day communications: Just ask, and Amazon Q can generate content (“Create a blog post and three social media headlines announcing the product described in this documentation”), create executive summaries (“Write a summary of our meeting transcript with a bulleted list of action items”), provide email updates (“Draft an email highlighting our Q3 training programs for customers in India”), and help structure meetings (“Create a meeting agenda to talk about the latest customer satisfaction report”).

- Complete tasks: Amazon Q can help complete certain tasks, reducing the amount of time employees spend on repetitive work like filing tickets. Ask Amazon Q to “Summarize customer feedback on the new pricing offer in Slack,” and then request that Q take that information and open a ticket in Jira to update the marketing team. You can ask Q to “Summarize this call transcript,” and then “Open a new case for Customer A in Salesforce.” Amazon Q supports other popular work automation tools like Zendesk and ServiceNow.
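The permission and guardrail behavior described above can be pictured with a small sketch. This is not Amazon Q’s implementation or SDK: `Document`, `allowed_groups`, `BLOCKED_KEYWORDS`, and `retrieve_for_user` are hypothetical names, and the simple word match stands in for real semantic retrieval. It shows only the idea that documents are filtered by the user’s existing group memberships before anything is used to form an answer, and that blocked topics are rejected up front.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Document:
    title: str
    text: str
    allowed_groups: frozenset  # groups permitted to read this document

# Hypothetical admin-configured block list.
BLOCKED_KEYWORDS = {"salary", "layoffs"}

def retrieve_for_user(question, user_groups, corpus):
    """Return titles of documents that match the question and that the user may read."""
    words = set(question.lower().replace("?", "").split())
    if words & BLOCKED_KEYWORDS:
        return []  # restricted topic: nothing is returned
    results = []
    for doc in corpus:
        if not (doc.allowed_groups & user_groups):
            continue  # user has no access to this document outside the assistant either
        if words & set(doc.text.lower().split()):
            results.append(doc.title)
    return results

corpus = [
    Document("Brand guide", "guidelines for logo usage", frozenset({"all-staff"})),
    Document("M&A memo", "confidential logo acquisition plan", frozenset({"executives"})),
]

question = "What are the latest guidelines for logo usage?"
print(retrieve_for_user(question, {"all-staff"}, corpus))
print(retrieve_for_user(question, {"executives"}, corpus))
```

The same question yields different sources for the two users because the access check runs before matching, so a document the user cannot open never contributes to the answer.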

Amazon Q is in Amazon QuickSight: With Amazon Q in QuickSight, AWS’s business intelligence service, users can ask their dashboards questions like “Why did the number of orders increase last month?” and get visualizations and explanations of the factors that influenced the increase. And, analysts can use Amazon Q to reduce the time it takes them to build dashboards from days to minutes with a simple prompt like “Show me sales by region by month as a stacked bar chart.” Q comes right back with that diagram, and you can easily add it to a dashboard or chat further with Q to refine the visualization (e.g., “Change the bar chart into a Sankey diagram,” or “Show countries instead of regions”). Amazon Q in QuickSight also makes it easier to use existing dashboards to inform business stakeholders, distill key insights, and simplify decision-making using data stories. For example, users may prompt Amazon Q to “Build a story about how the business has changed over the last month for a business review with senior leadership,” and in seconds, Amazon Q delivers a data-driven story that is visually compelling and is completely customizable. These stories can be shared securely throughout the organization to help align stakeholders and drive better decisions.
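To make the stacked bar chart example concrete, the sketch below shows the kind of aggregation such a chart rests on: one bar per month, with one stacked segment per region. The sample rows and the `sales_by_month_and_region` helper are made up for illustration, and this is not a description of how Amazon Q or QuickSight computes the visual.

```python
from collections import defaultdict

def sales_by_month_and_region(rows):
    """Sum sales into {month: {region: total}}; each month is one stacked bar."""
    totals = defaultdict(lambda: defaultdict(float))
    for month, region, amount in rows:
        totals[month][region] += amount
    # Convert to plain dicts, with months in sorted order.
    return {month: dict(totals[month]) for month in sorted(totals)}

# Hypothetical sample data: (month, region, sales amount).
rows = [
    ("2023-10", "EMEA", 120.0),
    ("2023-10", "APAC", 80.0),
    ("2023-11", "EMEA", 150.0),
    ("2023-10", "EMEA", 30.0),
]

for month, segments in sales_by_month_and_region(rows).items():
    print(month, segments)
```

A follow-up such as “Show countries instead of regions” would correspond to grouping by a different column in the same way.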

Amazon Q is in Amazon Connect: In Amazon Connect, our contact center service, Amazon Q helps your customer service agents provide better customer service. Amazon Q leverages the knowledge repositories your agents typically use to get information for customers, and then agents can chat with Amazon Q directly in Connect to get answers that help them respond more quickly to customer requests without needing to search through the documentation themselves. And, while chatting with Amazon Q for super-fast answers is great, in customer service there is no such thing as too fast. That’s why Amazon Q in Connect turns a live customer conversation with an agent into a prompt and automatically provides the agent with possible responses, suggested actions, and links to resources. For example, Amazon Q can detect that a customer is contacting a rental car company to change their reservation, generate a response for the agent to quickly communicate how the company’s change fee policies apply, and guide the agent through the steps they need to update the reservation.

Amazon Q is in AWS Supply Chain (Coming Soon): In AWS Supply Chain, our supply chain insights service, Amazon Q helps supply and demand planners, inventory managers, and trading partners optimize their supply chain by summarizing and highlighting potential stockout or overstock risks and visualizing scenarios to solve the problem. Users can ask Amazon Q “what,” “why,” and “what if” questions about their supply chain data and chat through complex scenarios and the tradeoffs between different supply chain decisions. For example, a customer may ask, “What’s causing the delay in my shipments and how can I speed things up?” to which Amazon Q may reply, “90% of your orders are on the east coast, and a big storm in the Southeast is causing a 24-hour delay. If you ship to the port of New York instead of Miami, you’ll expedite deliveries and reduce costs by 50%.”

Our customers are adopting generative AI quickly: they are training groundbreaking models on AWS, they are developing generative AI applications at record speed using Amazon Bedrock, and they are deploying game-changing applications like Amazon Q across their organizations. With our latest announcements, AWS is bringing customers even more performance, choice, and innovation at every layer of the stack. The combined impact of all the capabilities we’re delivering at re:Invent marks a major milestone toward meeting an exciting and meaningful goal: We are making generative AI accessible to customers of all sizes and technical abilities so they can get to reinventing and transforming what is possible.

Resources

- G2 Krishnamoorthy and Rahul Pathak share their ideas on Unlocking the value of data as your differentiator

- Dive deeper into our new tools and capabilities to enable responsible AI innovation

About the Author

Swami Sivasubramanian is Vice President of Data and Machine Learning at AWS. In this role, Swami oversees all AWS Database, Analytics, and AI & Machine Learning services. His team’s mission is to help organizations put their data to work with a complete, end-to-end data solution to store, access, analyze, visualize, and predict.

Raghu Ramesha is a Senior ML Solutions Architect with the Amazon SageMaker Service team. He focuses on helping customers build, deploy, and migrate ML production workloads to SageMaker at scale. He specializes in machine learning, AI, and computer vision domains, and holds a master’s degree in Computer Science from UT Dallas. In his free time, he enjoys traveling and photography.

Deepak Garg is a Solutions Architect at AWS. He loves diving deep into AWS services and sharing his knowledge with customers. Deepak has a background in Content Delivery Networks and Telecommunications.

Ram Vegiraju is an ML Architect with the Amazon SageMaker Service team. He focuses on helping customers build and optimize their AI/ML solutions on Amazon SageMaker. In his spare time, he loves traveling and writing.

Marc Karp is an ML Architect with the Amazon SageMaker Service team. He focuses on helping customers design, deploy, and manage ML workloads at scale. In his spare time, he enjoys traveling and exploring new places.

Shiva Raaj Kotini works as a Principal Product Manager in the Amazon SageMaker Inference product portfolio. He focuses on model deployment, performance tuning, and optimization in SageMaker for inference.

Alwin (Qiyun) Zhao is a Senior Software Development Engineer with the Amazon SageMaker Inference Platform team. He is the lead developer of the deployment guardrails and shadow deployments, and he focuses on helping customers manage ML workloads and deployments at scale with high availability. He also works on platform architecture evolutions for fast and secure ML job deployment and for running ML online experiments with ease. In his spare time, he enjoys reading, gaming, and traveling.

Gaurav Bhanderi is a Front End Engineer with the AI Platforms team in SageMaker. He works on delivering customer-facing UI solutions within the AWS org. In his free time, he enjoys hiking and exploring local restaurants.

Melanie Li, PhD, is a Senior AI/ML Specialist TAM at AWS based in Sydney, Australia. She helps enterprise customers build solutions using state-of-the-art AI/ML tools on AWS and provides guidance on architecting and implementing ML solutions with best practices. In her spare time, she loves to explore nature and spend time with family and friends.

Sam Edwards is a Cloud Engineer (AI/ML) at AWS Sydney specializing in machine learning and Amazon SageMaker. He is passionate about helping customers solve issues related to machine learning workflows and creating new solutions for them. Outside of work, he enjoys playing racquet sports and traveling.

Mohan Gandhi is a Senior Software Engineer at AWS. He has been with AWS for the last 10 years and has worked on various AWS services like EMR, EFA and RDS. Currently, he is focused on improving the SageMaker Inference Experience. In his spare time, he enjoys hiking and marathons.

Eric Peña is a Senior Technical Product Manager in the AWS Artificial Intelligence Platforms team, working on Amazon SageMaker Interactive Machine Learning. He currently focuses on IDE integrations on SageMaker Studio. He holds an MBA degree from MIT Sloan and outside of work enjoys playing basketball and football.

Vikesh Pandey is a Machine Learning Specialist Solutions Architect at AWS, helping customers from financial industries design and build solutions on generative AI and ML. Outside of work, Vikesh enjoys trying out different cuisines and playing outdoor sports.

Bruno Pistone is an AI/ML Specialist Solutions Architect for AWS based in Milan. He works with large customers, helping them to deeply understand their technical needs and design AI and Machine Learning solutions that make the best use of the AWS Cloud and the Amazon Machine Learning stack. His expertise includes end-to-end Machine Learning, Machine Learning Industrialization, and Generative AI. He enjoys spending time with his friends and exploring new places, as well as travelling to new destinations.

Giuseppe Angelo Porcelli is a Principal Machine Learning Specialist Solutions Architect for Amazon Web Services. With several years of software engineering experience and an ML background, he works with customers of any size to understand their business and technical needs and design AI and ML solutions that make the best use of the AWS Cloud and the Amazon Machine Learning stack. He has worked on projects in different domains, including MLOps, computer vision, and NLP, involving a broad set of AWS services. In his free time, Giuseppe enjoys playing football.

Sofian Hamiti is an AI/ML specialist Solutions Architect at AWS. He helps customers across industries accelerate their AI/ML journey by helping them build and operationalize end-to-end machine learning solutions.

Mehran Najafi, PhD, is a Senior Solutions Architect for AWS focused on AI/ML and SaaS solutions at scale.

Dhawal Patel is a Principal Machine Learning Architect at AWS. He has worked with organizations ranging from large enterprises to mid-sized startups on problems related to distributed computing and artificial intelligence. He focuses on deep learning, including the NLP and computer vision domains. He helps customers achieve high-performance model inference on SageMaker.

Rielah DeJesus is a Principal Solutions Architect at AWS who has successfully helped various enterprise customers in the DC, Maryland, and Virginia area move to the cloud. A customer advocate and technical advisor, she helps organizations like Heroku/Salesforce achieve success on the AWS platform. She is a staunch supporter of Women in IT and very passionate about finding ways to creatively use technology and data to solve everyday challenges.

James Park is a Solutions Architect at Amazon Web Services. He works with Amazon.com to design, build, and deploy technology solutions on AWS, and has a particular interest in AI and machine learning. In his spare time he enjoys seeking out new cultures, new experiences, and staying up to date with the latest technology trends. You can find him on

Rupinder Grewal is a Sr. AI/ML Specialist Solutions Architect with AWS. He currently focuses on model serving and MLOps on SageMaker. Prior to this role, he worked as a Machine Learning Engineer building and hosting models. Outside of work, he enjoys playing tennis and biking on mountain trails.

Saurabh Trikande is a Senior Product Manager for Amazon SageMaker Inference. He is passionate about working with customers and is motivated by the goal of democratizing machine learning. He focuses on core challenges related to deploying complex ML applications, multi-tenant ML models, cost optimizations, and making deployment of deep learning models more accessible. In his spare time, Saurabh enjoys hiking, learning about innovative technologies, following TechCrunch and spending time with his family.

Lakshmi Ramakrishnan is a Principal Engineer on the Amazon SageMaker Machine Learning (ML) platform team at AWS, providing technical leadership for the product. He has worked in several engineering roles at Amazon for over 9 years. He has a Bachelor of Engineering degree in Information Technology from the National Institute of Technology, Karnataka, India and a Master of Science degree in Computer Science from the University of Minnesota Twin Cities.

Venugopal Pai is a Solutions Architect at AWS. He lives in Bengaluru, India, and helps digital-native customers scale and optimize their applications on AWS.

Janisha Anand is a Senior Product Manager in the SageMaker Low/No Code ML team, which includes SageMaker Canvas and Autopilot. She enjoys coffee, staying active, and spending time with her family.

Indy Sawhney is a Senior Customer Solutions Leader with Amazon Web Services. Always working backwards from customer problems, Indy advises AWS enterprise customer executives through their unique cloud transformation journey. He has over 25 years of experience helping enterprise organizations adopt emerging technologies and business solutions. Indy is an area-of-depth specialist with the AWS Technical Field Community for artificial intelligence and machine learning (AI/ML), with specialization in generative AI and low-code/no-code (LCNC) SageMaker solutions.
