Episode 149 | Sept. 14, 2023

Powerful large-scale AI models like GPT-4 are showing dramatic improvements in reasoning, problem-solving, and language capabilities. This marks a phase change for artificial intelligence—and a signal of accelerating progress to come.

In this Microsoft Research Podcast series, AI scientist and engineer Ashley Llorens hosts conversations with his collaborators and colleagues about what these models—and the models that will come next—mean for our approach to creating, understanding, and deploying AI, its applications in areas such as healthcare and education, and its potential to benefit humanity.

This episode features Senior Principal Research Manager Ahmed H. Awadallah, whose work improving the efficiency of large-scale AI models and efforts to help move advancements in the space from research to practice have put him at the forefront of this new era of AI. Awadallah discusses the shift in dynamics between model size and amount—and quality—of data when it comes to model training; the recently published paper “Orca: Progressive Learning from Complex Explanation Traces of GPT-4,” which further explores the use of large-scale AI models to improve the performance of smaller, less powerful ones; and the need for better evaluation strategies, particularly as we move into a future in which Awadallah hopes to see gains in these models’ ability to continually learn.

Learn more:

- Orca: Progressive Learning from Complex Explanation Traces of GPT-4 (Publication, June 2023)
- Textbooks Are All You Need II: phi-1.5 technical report (Publication, September 2023)
- AutoGen: Enabling Next-Gen LLM Applications via Multi-Agent Conversation Framework (Publication, August 2023)
- LIDA: Automatic Generation of Grammar-Agnostic Visualizations and Infographics using Large Language Models (Publication, March 2023)
- AI Explainer: Foundation models and the next era of AI (Microsoft Research blog and video, March 2023)
- AI and Microsoft Research: Learn more about the breadth of AI research at Microsoft


Transcript

[MUSIC PLAYS]

ASHLEY LLORENS: I’m Ashley Llorens with Microsoft Research. I’ve spent the last 20 years working in AI and machine learning, but I’ve never felt more inspired to work in the field than right now. The release of GPT-4 was a watershed moment in the pursuit of artificial intelligence, and yet progress continues to accelerate. The latest large-scale AI models and the systems they power are continuing to exhibit improvements in reasoning, problem-solving, and translation across languages and domains. In this podcast series, I’m sharing conversations with fellow researchers about the latest developments in large-scale AI models, the work we’re doing to understand their capabilities and limitations, and ultimately how innovations like these can have the greatest benefit for humanity. Welcome to AI Frontiers.

Today, I’ll speak with Ahmed Awadallah. Ahmed is a Senior Principal Researcher at Microsoft Research in Redmond. Much of his work focuses on machine learning, helping to create foundation models that excel at key tasks while using less compute and energy. His work has been at the leading edge of recent progress in AI and gives him a unique perspective on where it will go next.

[MUSIC FADES]

All right, Ahmed, let’s dive right in. Among other things, I find that people are hungry to understand the drivers of the progress we’re seeing in AI. Over these last few years when people like you or I have tried to explain this, we’ve often pointed to some measure of scale. You know, I know many times as I’ve given talks in AI, I’ve shown plots that feature some kind of up-and-to-the-right trend in scale over time—the increasing size of the AI models we’re training, the increasing size of the datasets we’re using to train them on, or even the corresponding increase in the overall compute budget. But when you double-click into this general notion of scale related to large AI models, what gets exposed is really a rapidly evolving frontier of experimental science. So, Ahmed, I’m going to start with a big question and then we can kind of decompose it from there. As someone at the forefront of all of this, how has your understanding of what’s driving progress in AI changed over this last year?

AHMED AWADALLAH: Thanks, Ashley. That’s a very good question. And the short answer is it’s changed a lot. I think I have never been learning as much as I have been throughout my career. Things are moving really, really fast. The progress is amazing to witness, and we’re just learning more and more every day. To your point, for quite some time, we were thinking of scale as the main driver of progress, and scale is clearly very important and necessary. But over the last year, we have been also seeing many different things. Maybe the most prominent one is the importance of data being used for training these models. And that’s not very separate from scale, because when we think about scale, what really matters is how much compute we are spending in training these models. And you can choose to spend that compute in making the model bigger or in training it on more and more data, training it for longer. And it has been over the past few years a lot of iterations in trying to understand that. But it has been very clear over the last year that we were, in a sense, underestimating the value of data in different ways: number one, in having more data but even more important, the quality of the data, having cleaner data, having more representative data, and also the distribution or the mixing of the data that we are using. Like, for example, one of the very interesting things we have witnessed maybe over the last year to year and a half is that a lot of the language models are being trained on text and code. And surprisingly, the training on code is actually helping the model a lot—not just in coding tasks but in normal other tasks that do not really involve coding. 
More importantly, I think one of the big shifts last year in particular—it has been happening for quite some time but we have been seeing a lot of value for it last year—is that there are now like two stages of training these models: the pretraining stage, where you are actually training the language model in an autoregressive manner to predict the next word. And that just makes it a very good language model. But then the post-training stage with the instruction tuning and RLHF (reinforcement learning from human feedback) and reward models, using a very different form of data; this is not self-supervised, freely available data on the internet anymore. This is human-generated, human-curated, maybe a mixture of model- and human-curated data that’s trying to get the model to be better at very specific elements like being more helpful or being harmless.

LLORENS: There’s so much to unpack even in that, in that short answer. So let’s, let’s dig in to some of these core concepts here. You, you teed up this notion of ways to spend compute, you know, ways to spend a compute budget. And one of the things you said was, you know, one of the things we can do is make the model bigger. And I think to really illustrate this concept, we need to, we need to dig in to what that means. One, one concept that gets obfuscated there a little bit is the architecture of the model. So what does it mean to make the model bigger? Maybe you can tell us something about, you know, how to think about parameters in the model and how important is architecture in that, in that conversation.

AWADALLAH: So most of the progress, especially in language and other domains, as well, have been using the transformer model. And the transformer model have been actually very robust to change over the years. I don’t … I think a lot … I’ve asked a lot of experts over the years whether they had expected the transformer model to be still around five, six years later, and most of them thought we would have something very different. But it has been very robust and very universal, and, yes, there have been improvements and changes, but the core idea has still been the same. And with dense transformer models, the size of the model tends to be around the number of layers that you have in the model and then the number of parameters that you have in each layer, which is basically the depths and the widths of the model. And we have been seeing very steady exponential increase in that. It’s very, it’s very interesting to think that just like five years ago when BERT came up, the large model was like 300-something million parameters and the smaller one was 100 million parameters. And we consider these to be really large ones. Now that’s a very, very small scale. So things have been moving and moving really fast in making these models bigger. But over the time, there started to be an understanding being developed of how big should the model be. If I were to invest a certain amount of compute, what should I do with that in terms of the model size and especially on how it relates to the data side? And, perhaps, one of the most significant efforts there was the OpenAI scaling laws, which came up in 2020, late 2020, I think. And it was basically saying that if you are … if you have 10x more compute to spend, then you should dedicate maybe five of that … 5x of that to making the model bigger—more layers, more width—and maybe 2x to making the data bigger. 
And that translated to … for, like say, GPT-3-like model being trained on almost 300 billion tokens, and for quite some time, the 300 billion tokens was stuck, like it became the standard, and a lot of people were using that. But then fast-forward less than two years later came the second iteration of the scaling laws, the Chinchilla paper, where the, the recommendation was slightly different. It was like we were not paying enough attention to the size of the data. Actually, you should now think of the data and the size as equally … and the size of the model … as equally important. So if you were to invest in X more, you should just split them evenly between bigger models and more data. And that was quite a change, and it actually got all the people to pay more attention to the data. But then fast-forward one more year, in 2023—and maybe pioneered mostly with the Llama work from Meta and then many, many others followed suit—we started finding out that we don’t have to operate at this optimal point. We can actually push for more data and the model will continue to improve. And that’s interesting because when you are thinking about the training versus the deployment or the inference parts of the life cycle of the model, they are actually very different. When you are training the model, you would like the model to learn to generalize as best as possible. When you are actually using the model, the size of the model becomes a huge difference. I actually recall an interesting quote from a 2015 paper by Geoff Hinton and others. That’s the paper that introduced the idea of distillation for neural networks. Distillation was there before from the work of, of Rich Caruana, our colleague here at Microsoft, and others. 
But in 2015, there was this paper specifically discussing distilling models for neural network models, and one of the motivating sentences at the very beginning of the paper was basically talking about insects and how insects would have different forms throughout their life cycles. At the beginning of their life, they are optimized for extracting energy and nutrients from the environment, and then later on, in their adult form, they have very different forms as optimized for flying and traveling and reproduction and so on and so forth. So that, that analogy is very interesting here because like you can think about the same not just in the context of distillation, as this paper was describing, but just for pretraining the models in general. Yes, the optimal point might have been to equally split your compute between the data and the size, but actually going more towards having more and more data actually is beneficial. As long as the model is getting better, it will give you a lot more benefit because you have a smaller model to use during the inference time. And we would see that with the latest iteration of the Llama models, we are now seeing models as small as 7 billion parameters being trained on 1 to 2 trillion tokens of data, which was unheard of before.
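Editor's sketch: the compute-allocation argument in the answer above can be made concrete with a few lines of arithmetic. The exponents and the `6 * N * D` cost estimate below are approximate figures from the published scaling-law papers, not numbers stated in this conversation, and the function names are hypothetical.

```python
# Approximate exponents (assumptions, taken from the published papers):
# Kaplan et al. (2020): model size ~ C^0.73, data ~ C^0.27.
# Hoffmann et al. (2022, "Chinchilla"): roughly C^0.5 for each.
KAPLAN = (0.73, 0.27)
CHINCHILLA = (0.5, 0.5)


def growth_factors(compute_multiplier, exponents):
    """Return (model_growth, data_growth) for a given increase in compute."""
    model_exp, data_exp = exponents
    return compute_multiplier ** model_exp, compute_multiplier ** data_exp


def training_flops(params, tokens):
    """Common rule of thumb: training costs about 6 * N * D FLOPs."""
    return 6.0 * params * tokens


def tokens_per_param(params, tokens):
    """How many training tokens each parameter sees."""
    return tokens / params


if __name__ == "__main__":
    for name, exponents in (("Kaplan", KAPLAN), ("Chinchilla", CHINCHILLA)):
        model_x, data_x = growth_factors(10, exponents)
        print(f"{name}: 10x compute -> {model_x:.1f}x model, {data_x:.1f}x data")
    # A 7-billion-parameter model trained on 1 trillion tokens
    print(f"tokens per parameter: {tokens_per_param(7e9, 1e12):.0f}")
```

With these assumed exponents, 10x more compute works out to about 5.4x model and 1.9x data under the earlier recipe, versus about 3.2x each under the even split. A 7-billion-parameter model on 1 trillion tokens sees roughly 143 tokens per parameter, far above the roughly 20 tokens per parameter often quoted as the compute-optimal rule of thumb.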

LLORENS: Let’s talk a bit more about evaluating performance. Of course, the neural scaling laws that you referenced earlier really predict how the performance of a model on the task of next word prediction will improve with the size of the model or the size of the data. But of course, that’s not what we really care about. What we’re really after is better performance on any number of downstream tasks like reasoning, document summarization, or even writing fiction. How do we predict and measure performance in that broader sense?

AWADALLAH: Yeah, that’s a very good question. And that’s another area where our understanding of evaluating generative models in general has been challenged quite a bit over the last year in particular. And I think one of the areas that I would recommend to spend a lot of time working on right now is figuring out a better strategy around evaluating generative language models. We … this field has been very benchmark driven for many, many years, and we have been seeing a lot of very well-established benchmarks that have been helping the community in general make a lot of progress. We have seen leaderboards like GLUE and SuperGLUE, and many, many others play a very important role in the development of pretrained models. But over the last year, there has been a lot of changes. One is that these benchmarks are being saturated really, really quickly. There was … this paper that I was reading a few, reading a few months back talking about how we went from times where benchmarks like Switchboard and MNIST for speech and image processing lasted for 10 to 20 years before they get saturated to times where things like SQuAD and GLUE and SuperGLUE are getting saturated in a year or two to now where many of the benchmarks just get like maybe two or three submissions and that’s it. It gets saturated very quickly after that. BIG-Bench is a prime example of that, where it was like a collaborative effort, over 400 people coming together from many different institutions, designing a benchmark to challenge language models. And then came GPT-4, and we’re seeing that it’s doing really, really, really well, even in like zero-shot and, and, and few-shot settings, where the tasks are completely new to the models. So the model out of the box is basically solving a lot of the benchmarks that we have. That’s an artifact of the significant progress that we have been seeing and the speed of that progress, but it’s actually making that, that answer to that question even harder. 
But there’s another thing that’s making it even harder is that the benchmarks are giving us a much more limited view of the actual capabilities of these models compared to what they can actually do, especially models like GPT-4. The, the breadth of capabilities of the model is beyond what we had benchmarks to measure it with. And we have seen once it was released, then once people started interacting with it, there are so many experiences and so many efforts just thinking about what can we do with that model. Now we figured out that it can do this new task; it can do that new task. I can use it in this way that I didn’t think about before. So that expansion in the surface of capabilities of the model is making the question of evaluating them even, even harder and, and moving forward, I think this would be one of the most interesting areas to really spend time on.

LLORENS: Why don’t we talk a bit about a paper that you recently published with some Microsoft Research colleagues called “Orca: Progressive Learning from Complex Explanation Traces of GPT-4.” And there’s a couple of, of concepts that we’ve been talking about that I want to pull through to, to a discussion around, around this work. One is the idea of quality of data. And so it would be great to hear, you know, some of the intuitions around … yeah, what, what drove you to focus on data quality versus, you know, number of parameters or number of tokens? And then we can also come back to this notion of benchmarks, because to publish, you have to pick some benchmarks, right? [LAUGHS] So, so first, why don’t we talk about the intuitions behind this paper and what you did there, and then I’d love to understand how you thought through the process of picking benchmarks to evaluate these models.

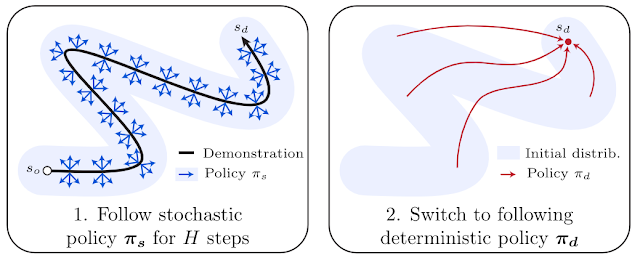

AWADALLAH: Yeah, so, so in this paper, we were basically thinking about like … there has been a lot of work actually on thinking about how do we have a very powerful model and use it to improve a less powerful model. This is not a new concept. It has been there forever, and I mentioned the Hinton et al. paper on distillation, one of the pioneer papers applying that to neural networks. And over time, this field actually continued getting better and better. And the way the large, more powerful models were used just continued evolving. So people were using the logits generated by the model and then maybe looking at intermediate layers and their output, maybe looking at attention maps and trying to map that between the models and coming up with more and more complex ways of distilling information from the powerful model to improve a less powerful model. But with models like GPT-4, we were thinking that GPT-4 is so good that you can actually start thinking about different ways of having a model teaching another model. And in that particular case, the idea was, can we actually have the powerful model explain in step by step how to do the task, and can we actually have a smaller model learn from that? And how far can this actually help the smaller one? A big part of this has to do with the data quality but also with the teacher model quality. You wouldn’t be able to … and this gets us into the whole notion of synthesized data and the role of synthesized data can play in making models better. Models like GPT-4, the level of capability where you could actually generate a lot of synthetic data at a very high quality comparable in some cases to what you’d get from a human, better in some cases than what you could get from a human. 
And even more than that, when you are working with a model like GPT-4, there has been a lot of work over the last few months demonstrating that you can even get the model to be a lot better by having the model reflect on what it’s doing, having the model critique what it’s doing and try to come up with even corrections and improvements to its own generation. And once you have this going, you see that you can actually create very high-quality synthetic data in so many ways, mostly because of the quality of the model but also because of like these different ways of generating the data on top of the model. And then it was really an experiment of how far can another model learn from these models. And by the way—and there is … we’re seeing some work like that, as well—it doesn’t even have to be a different model. It can be the same model improving itself. It can be the same model giving feedback to itself. That coincided with actually us having, having … we have been spending a lot of time thinking about this idea of learning from feedback or like continual improvement. How can we take a language model and continue to improve it based on interaction, based on feedback? So we started connecting these two concepts and basically thinking of it like the powerful model is just giving feedback to our much less powerful model and trying to help it improve across certain dimensions. And that’s where that line of work started. And what we were finding out is that you can actually have the more powerful model teach a smaller model. It would have definitely much narrower capabilities than the bigger model because like by virtue of this training cycle, you are just focused on teaching it particular concepts. You cannot teach it everything that the larger model can do. But also because this is another example of this like post-training step, like this model has already been pretrained language model and it’s always limited by the basic capabilities that it has. 
So, yes, the large language model can teach it a little bit more, but it will always be limited by that.
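Editor's sketch: the logit-based distillation mentioned at the start of this answer is commonly implemented as a loss between temperature-softened teacher and student distributions. The version below is a minimal illustration in plain Python, assuming the soft-target formulation from the 2015 distillation paper. It is not the training objective used for Orca, which learns from generated explanation text instead of logits.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities, softened by a temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions.

    The temperature**2 factor is the usual convention for keeping gradient
    magnitudes comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]   # toy logits over three classes
    student = [2.0, 2.0, 0.1]
    print(distillation_loss(teacher, student))
```

The loss is zero when the student reproduces the teacher's distribution and grows as the two diverge. A higher temperature exposes more of the teacher's relative preferences among the less likely classes.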

LLORENS: Now you mentioned … you’ve sketched out now the idea of using a powerful general-purpose model through some process of distillation to train a, a smaller, more special, more specialized model. And in the paper, you, you and your colleagues offer a number of case studies. So can you, can you pick one? Give, give us, you know, give us an example of a specialized domain and the way that you utilize GPT-4 to accomplish this training and what the performance outcome was.

AWADALLAH: Yeah, actually, when we were working on this paper, the team was thinking that what capability should we try to focus on to, to demonstrate that the small model can improve from, from the guidance of the much more powerful model. And we were thinking it would be very cool if we can demonstrate that the small model can get better at reasoning, because reasoning has been one of the capabilities that have been clearly emerging with larger and larger models, and models like GPT-4 demonstrate the level of reasoning that we have never seen with any of our systems before. So we were thinking can we … can, can GPT-4 help actually get the smaller model to be better at reasoning. And that had a lot of implications on the selection of what datasets to use for, for creating the synthetic data. In this particular paper, by the way, we’re not, we’re not using GPT-4 to answer the questions. We already have the questions and the answers. We are just asking GPT-4 to explain it in step by step. This is similar to what we have been seeing with chain-of-thought reasoning, chain-of-thought prompting, and other different prompting techniques showing that if you actually push the language model to go step by step, it can actually do a lot better. So we are basically saying, can we have these explanations and step-by-step traces and have them help the smaller language model learn to reason a little bit better. And because of that, actually (and this goes back to your earlier questions about benchmarks), in this particular paper, we chose two main benchmarks. There were more than two, but like the two main benchmarks were BIG-Bench Hard and AGIEval. BIG-Bench Hard is a 23-task subset of BIG-Bench that we were just talking about earlier, and a lot of the tasks are very heavy on reasoning. AGIEval is a set of questions that are SAT-, LSAT-, GRE-, and GMAT-type of questions. They are also very heavy on reasoning. 
The benchmarks were selected to highlight the reasoning improvement and the reasoning capability of the model. And we had, we had a bunch of use cases there, and you would see one of the common themes there is that there is actually … even before the use cases, if you look at the, the results, the reasoning ability as measured by these two benchmarks at least of the base model significantly improved. Still far behind the teacher. The teacher is much, much more powerful and there’s no real comparison, but still the fact that collecting synthetic data from a model like GPT-4 explaining reasoning steps could help a much smaller model get better at reasoning and get better by that magnitude was a very interesting finding. We were, we were quite a bit surprised, actually, by the results. We thought that it will improve the model reasoning abilities, but it actually improved it beyond what we expected. And again, this goes back to like imagine if we were … if we wanted to do that without a model like GPT-4, that would entail having humans generate explanations for a very large number of tasks and make sure that these explanations remain faithful and align with the answers of the question. It would have been a very hard task, and the type of annotator that you would like to recruit in order to do that, it would have been … even made it harder and slower. But having, having the capabilities of a model like GPT-4 is really what made it possible to do that.
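Editor's sketch: the data-creation step described in this answer (existing questions and answers, with a teacher model asked only to explain the answer step by step) might look roughly like the following. Everything here is hypothetical: the instruction wording, the `TrainingExample` record, and the `fake_teacher` stand-in are illustrations, not the prompts or pipeline from the paper.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical instruction; the wording is illustrative, not from the paper.
SYSTEM_INSTRUCTION = "Explain step by step how to arrive at the given answer."


@dataclass
class TrainingExample:
    prompt: str   # what the smaller model sees
    target: str   # what the smaller model is trained to produce


def build_explanation_example(
    question: str,
    answer: str,
    teacher: Callable[[str, str], str],
) -> TrainingExample:
    """Ask the teacher to explain a known answer, then pair the explanation
    with the question as a training example for the smaller model."""
    teacher_input = f"Question: {question}\nAnswer: {answer}"
    explanation = teacher(SYSTEM_INSTRUCTION, teacher_input)
    return TrainingExample(
        prompt=f"Question: {question}",
        target=f"{explanation}\nFinal answer: {answer}",
    )


def fake_teacher(system: str, user: str) -> str:
    """Stand-in for a call to a powerful teacher model."""
    return "Step 1: 17 + 5 = 22. Step 2: 22 * 2 = 44."


if __name__ == "__main__":
    example = build_explanation_example("What is (17 + 5) * 2?", "44", fake_teacher)
    print(example.prompt)
    print(example.target)
```

Because the answer is supplied up front, the teacher's job is limited to producing the reasoning trace. The smaller model is then trained to produce both the trace and the answer from the question alone.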

LLORENS: You’ve, you’ve outlined now, you know, your experiments around using GPT-4 to train a smaller model, but earlier, you also alluded to a pretty compelling idea that maybe even a large, powerful model could, I guess, self-improve by generate, you know, performing a generation, critiquing itself, and then somehow guiding, you know, the parameter weights in a way that, that was informed by the critique. Is that, was that part of these experiments, or what … or, or is that … does that work? [LAUGHS] Have, have we … do we have experimental evidence of that?

AWADALLAH: Yeah, I think, I think that’s a very good question. That was really how we started. That was really what we were aiming and still trying to do. The value … we started off by asking that question: can we actually have a model self-improve, self-improve itself? From an experimental perspective, it was much easier to have a powerful model help a smaller model improve. But self-improvement is really what we, what got us excited about this direction from the beginning. There has been evidence from other work showing up over the last short period actually showing that this is actually a very promising direction, too. For example, one of the very interesting findings about these powerful models—I think that the term frontier models is being used to refer to them now—is that they have a very good ability at critiquing and verifying output. And sometimes that’s even better than their ability at solving the task. So you can basically go to GPT-4 and ask it to solve a coding question. Write a Python function to do something. And then you can go again to GPT-4 and ask it to look back at that code and see if there are any bugs in there. And surprisingly, it would identify bugs in its own generation with a very high quality. And then you can go back to GPT-4 again and ask it to improve its own generation and fix the bugs. And it does that. So we actually have a couple of experiments with that. One of them in a toolkit called LIDA that one of my colleagues here, Victor [Dibia], has been working on for some time. LIDA is a tool for visualizations, and you basically go there and submit a query. The query would be, say, create a graph that shows the trends of stocks over the last year. And it’ll actually go to the data basically, engineer Python code. The Python code, when compiled and executed, would generate a visualization. But then we were finding out that we don’t have to stop there. 
We can actually ask GPT-4 again to go back to that visualization and critique it, and it doesn’t have to be open critique. We can define the dimensions that we would like to improve on and ask GPT-4 to critique and provide feedback across these dimensions. Like it could be the readability of the chart. It could be, is the type of chart the best fit for the data? And surprisingly it does that quite well. And then that opens the door to so many interesting experiences where you can, after coming up with the initial answer, you can actually suggest some of these improvements to a human. Or maybe if you are confident enough, you just go ahead and apply them even without involving the human in the loop and you actually get a lot better. There was another experiment like that where another colleague of mine has been working on a library called AutoGen, which basically helps with these iterative loops on top of language models, as well as figuring out values of hyperparameters and so on and so forth. And the experiments were very similar. There was a notion there of like having a separate agent that the team refers to as a user proxy agent, and that agent basically has a criteria of what the user is trying to do. And it keeps asking GPT-4 to critique the output and improve the output up until this criteria is met. And we see that we get much, much better value with using GPT-4 this way. That cycle is expensive, though, because you have to iterate and go back multiple times. The whole idea of self-improvement is basically, can we literally distill that cycle into the model itself again so that as the model is being used and being asked to maybe critique and provide feedback or maybe also getting some critique and feedback from the human user, can we use that data to continue to improve the model itself?
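Editor's sketch: the generate, critique, and improve cycle described in this answer can be written as a small loop. The version below is a generic illustration with toy stand-in functions in place of model calls. It does not use the LIDA or AutoGen APIs, and all names are hypothetical.

```python
from typing import Callable, List, Tuple


def refine(
    task: str,
    generate: Callable[[str], str],
    critique: Callable[[str, str], List[str]],
    revise: Callable[[str, str, List[str]], str],
    max_rounds: int = 3,
) -> Tuple[str, List[Tuple[str, List[str]]]]:
    """Generate a draft, then critique and revise until no issues remain
    or the round budget is spent. Returns the final draft and the history
    of (draft, issues) pairs, which could later serve as training data."""
    draft = generate(task)
    history: List[Tuple[str, List[str]]] = []
    for _ in range(max_rounds):
        issues = critique(task, draft)
        if not issues:
            break
        history.append((draft, issues))
        draft = revise(task, draft, issues)
    return draft, history


# Toy stand-ins for model calls, checking two fixed chart criteria.
def toy_generate(task: str) -> str:
    return "plot(data)"


def toy_critique(task: str, draft: str) -> List[str]:
    issues = []
    if "title" not in draft:
        issues.append("add a title")
    if "xlabel" not in draft:
        issues.append("label the x axis")
    return issues


def toy_revise(task: str, draft: str, issues: List[str]) -> str:
    for issue in issues:
        if issue == "add a title":
            draft += "; title('Stock trends')"
        elif issue == "label the x axis":
            draft += "; xlabel('Date')"
    return draft


if __name__ == "__main__":
    final, history = refine("chart stock trends", toy_generate, toy_critique, toy_revise)
    print(final)
    print(len(history), "revision round(s)")
```

Each round costs additional model calls, which is the expense noted in the answer. The recorded history of drafts and critiques is the kind of data that self-improvement would try to distill back into the model.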

LLORENS: It is pretty fascinating that these models can be better at evaluating a candidate solution to a task than generating a novel solution to the task. On the other hand, maybe it’s not so surprising. One of the things that’s hard about or one of the things that can be challenging is this idea of, you know, prompt engineering, by which I’m trying to specify a task for the, for the model to solve or for the AI system to solve. But if you think about it, the best I can do at specifying the task is to actually try my best to complete the task. I’ve now specified the task to the greatest extent that I possibly can. So the machine kind of has my best task specification. With that, that information, now it becomes a kind of maybe even in some cases a superhuman evaluator. It’s doing better than I can at evaluating my own work. So that’s kind of an interesting twist there. Back, you know, back to the Orca paper, one of the things that you wouldn’t have seen … you know, earlier in the talk, you, you harkened back to say a decade ago, when benchmarks lasted a long, a longer time, one of the things that we would not necessarily have seen in a paper from that era, you know, say the CNN era of AI, is, is, a, is a safety evaluation, you know, for a specialized object recognition model. But in the Orca paper, we do have a safety evaluation. Can you, you talk a little bit about the thought process behind the particular evaluations that you did conduct and, and why these are necessary in the first place in this era of AI?

AWADALLAH: Yeah, I think in this era of AI, this is one of the most important parts of the development cycle of any LLM—large or small. And as we were just describing, we are discovering abilities of these models as we go. So just as there will be a lot of emerging capabilities that are surprising and useful and interesting, this would also open the door to a lot of misuse. And safety evaluation is at least … is the least we can do in order to make sure that we understand how, how can this model be used and what are some of the possible harms or the possible misuses that can come from using these models? So I think, I think this is, this is now definitely should be a standard for any work on language models. And here we are not, we’re not really training a language model from scratch. This is more of like a post-training or a fine-tuning of an existing language model. But even for, for, for research like that, I think safety evaluation should be a critical component of that. And, yes, we did some, and we, we, we actually have a couple of paragraphs in the paper where we say we need to do a lot more, and we are doing a lot more of that right now. I think … what we did in the paper that … we focused on only two dimensions: truthfulness and toxicity. And we were basically trying to make sure that we are trying to see the additional fine-tuning and training that we do, is it improving the model across these dimensions or is it not? And the good news that it was actually improving it in both dimensions, at least with the benchmarks that we have tried. I, I think it was interesting that actually on the, on the toxicity aspect in particular, we found that this particular type of post-training is actually improving the base model in terms of its tendency to generate toxic or biased content. 
But I think a big part of that is that we, we’re using Azure APIs in part of the data cleaning and data processing, and Azure has invested a lot of time and effort in making sure that we have a lot of tools and classifiers for identifying unsafe content, so the training data, the post-training data, benefited from that, which ended up helping the model, as well. But to your point, I think this is a critical component that should go into any work related to pretraining or post-training or even fine-tuning in many cases. And we did some in the paper, but I think, I think there’s a lot more to be done there.

LLORENS: Can you talk a little bit more about post-training as distinct from pretraining? How that, how that process has evolved, and, and where you see it going from here?

AWADALLAH: I, I, I see a ton of potential and, and opportunity there actually. And pretraining is the traditional language model training as we have always done it. Surprisingly, actually, if you go back to … like I, I was … in, in one of the talks, I was showing like a 20-years-ago paper by Bengio et al. doing the language model training with neural networks, and we’re still training neural networks the same way, autoregressive next word prediction. Very different architecture, a lot of detail that goes into the training process, but we are still training them as a language model to predict the next word. In a big departure from that—and it started with the InstructGPT paper and then a lot of other work had followed—there was this introduction of other steps of the language model training process. The first step is instruction tuning, which is showing the model prompts and responses and asking it to … and training the model on these prompts and responses. Often these responses are originated by a human. So you are not just training the model to learn the language model criteria only anymore, you are actually training it to respond to a way the human would want it to respond. And this was very interesting because you could see that the language models are really very good text-completion engines. And at some time actually, a lot of folks were working on framing the task such that it looks like this text completion. So if you are doing classification, you would basically list your input and then ask a question where the completion of that question would be the class that you are looking for. But then the community started figuring out that you can actually introduce this additional step of instruction tuning, where now out of all the possible ways of completing a sentence like if I’m asking a question, maybe listing other similar questions is a very good way of completion. 
Maybe repeating that question with more details is another way of completion, or answering the question is a third way of completion, and all of them could be highly probable. The instruction tuning is basically teaching the model the way to respond, and a big part of that has to do with safety, as well, because you could demonstrate how we want the model to be helpful, how we want the model to be harmless, in this instruction-tuning step. But the instruction-tuning step is only showing the model what to do. It’s not showing it what not to do. And this is where the RLHF step came in, the reinforcement learning from human feedback. What’s happening really is that instead of showing the model a single answer, we’re showing them a little more than one answer. And we are basically showing them only a preference. We’re basically telling the model Answer A is better than Answer B. It could be better for many reasons. We are just encoding our criteria of better into these annotations, and we are training a reward model first that basically its job is, given any response, would assign a scalar value to it on how good it is. And then we are doing the RLHF training loop, where the reward model is used to update the original model such that it learns what are better responses or not or worse responses and tries to align more with the better responses. The post-training is, as a concept, is very related and, and sometimes referred to also as alignment, because the way post-training has been mostly used is to align the model to human values, whether this be being helpful or being harmless.
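
The reward-model step described above, where "Answer A is better than Answer B" annotations are turned into a scalar score, can be sketched roughly as follows. This is a minimal illustration and not the method of any particular paper: the linear reward model, the two hand-made features (`helpfulness`, `toxicity`), the toy preference pairs, and all function names are assumptions made for the example. The pairwise logistic loss is one commonly used choice; the conversation does not specify a loss.

```python
import math
from typing import List, Tuple

Vector = List[float]


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def score(weights: Vector, features: Vector) -> float:
    """Hypothetical linear reward model: one scalar per response."""
    return sum(w * f for w, f in zip(weights, features))


def pairwise_preference_loss(reward_preferred: float, reward_rejected: float) -> float:
    """-log(sigmoid(r_preferred - r_rejected)), written in a stable form."""
    margin = reward_preferred - reward_rejected
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


def train_reward_model(
    pairs: List[Tuple[Vector, Vector]], dim: int, lr: float = 0.1, epochs: int = 200
) -> Vector:
    """Fit weights so that preferred responses score higher than rejected ones."""
    weights = [0.0] * dim
    for _ in range(epochs):
        for preferred, rejected in pairs:
            margin = score(weights, preferred) - score(weights, rejected)
            # Gradient step on the pairwise loss: push the margin upward,
            # more strongly when the model currently ranks the pair badly.
            step = lr * (1.0 - sigmoid(margin))
            for i in range(dim):
                weights[i] += step * (preferred[i] - rejected[i])
    return weights


# Toy annotations (assumed): each response is [helpfulness, toxicity],
# and the first item of each pair is the one the annotator preferred.
PAIRS = [
    ([0.9, 0.1], [0.2, 0.8]),
    ([0.7, 0.0], [0.6, 0.5]),
    ([0.5, 0.2], [0.1, 0.3]),
]

if __name__ == "__main__":
    learned = train_reward_model(PAIRS, dim=2)
    for preferred, rejected in PAIRS:
        print(score(learned, preferred), score(learned, rejected))
```

In a full RLHF loop, a learned scorer like this would then supply the reward signal used to update the language model itself; that second stage is not shown here.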

LLORENS: Ahmed, as we, as we wrap up here, typically, I would ask something like, you know, what’s next for your research, and maybe you can tell us a little bit about what’s next for your research. [LAUGHS] But, but before you do that, I’d love to understand what, what key limitation you see in the current era of AI that you would … would be on your wish list, right, as something that maybe you and your team or maybe the broader field has accomplished in the next five years. What, what new capabilities would be on your wish list for AI over the next five years?

AWADALLAH: Yeah, given, given the progress, I would say even much shorter than five years.

LLORENS: Five months. [LAUGHS]

AWADALLAH: But I would say … actually the answer to the two questions are, are very similar. Actually, I think where we are with these models right now is much better than many people anticipated, and we are able to solve problems that we didn’t think we could solve before. One of the key capabilities that I would like to see getting better over the next, few months to a few years—hopefully more toward few months—is the ability of the model to continue to learn. This like continual learning loop where the model is learning as it interacts with the humans. The model is reflecting on past experiences and getting better as we use it, and maybe also getting better in an adaptive way. Like we sometimes use this term adaptive alignment, where we are basically saying we want the model actually to continue to align and continue to align in the way it behaves across multiple dimensions. Like maybe the model will get more personal as I use it, and it will start acting more and, and behaving more in a way I want it to be. Or maybe I am developing a particular application, and for that application, I want the model to be a lot more creative or I want the model to be a lot more grounded. We can do some of that with prompting right now, but I think having more progress along this notion of continual learning, lifelong learning … this has been a heavily studied subject in machine learning in general and has been the holy grail of machine learning for many, many, many years. Having a model that’s able to continue to learn, continue to adapt, gets better every time you use it, so just when I use it today and I interact with it and it could learn about my preferences, and next time along, I don’t have to state these preferences again. Or maybe when it makes a mistake and I provide a feedback, next time along, it already knows that it had made that mistake and it already gives me a better solution.

LLORENS: That should have been the last question. But I think I have one more. That is, how will we know that the models are getting better at that, right? That’s a metric that’s sort of driven by interaction versus, you know, static evaluation. So how do you, how do you measure progress in adaptive alignment that way?

AWADALLAH: I think, I think that’s a very interesting point. And this actually ties this back with two concepts that we brought up earlier: the evaluation side and the safety side. Because from the evaluation perspective, I do think we need to move beyond static benchmark evaluation to a more dynamic human-in-the-loop evaluation, and there’s already been attempts and progress at that just over the past few months, and there is still a lot more to do there. The evaluation criteria will not also be universal. Like there will be a lot … like a lot of people talk about the, let’s say, fabrications—the models making up information, facts. Well, if I am using the model to help me write fictional stories, like this becomes a feature; it’s not a bug. But if I’m using the model to ask questions, especially in the high-stakes scenario, it becomes a very big problem. So having a way of evaluating these models that are dynamic, that are human-in-the-loop, that are adaptive, that aligns with objectives of how we are using the models will be a very important research area, and that ties back to the safety angles, as well, because if I … if we are barely … we’re, we’re … everybody is working really hard to try to understand the safety of the models after the models are being trained and they are fixed. But what if the models continue to improve? What if it’s continuing to learn? What if it’s learning things from me that are different than what it’s learning from you? Then that notion of alignment and safety and evaluation of that becomes also a very open and interesting question.

LLORENS: Well, look, I love the ambition there, Ahmed, and thanks for a fascinating discussion.

AWADALLAH: Thank you so much, Ashley.

The post AI Frontiers: The future of scale with Ahmed Awadallah and Ashley Llorens appeared first on Microsoft Research.

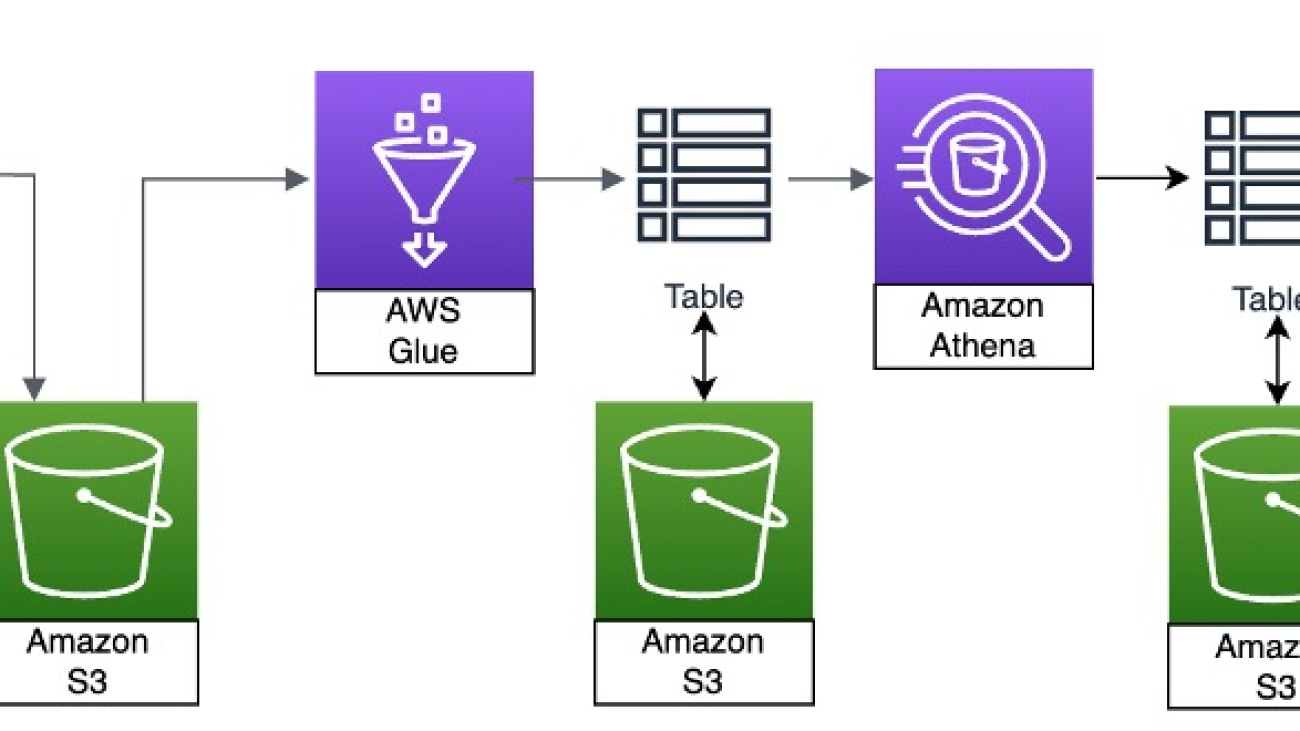

Kris Gedman is the US East sales leader for Retail & CPG at Amazon Web Services. When not working, he enjoys spending time with his friends and family, especially summers on Cape Cod. Kris is a temporarily retired Ninja Warrior but he loves watching and coaching his two sons for now. Clark Lefavour is a Solutions Architect leader at Amazon Web Services, supporting enterprise customers in the East region. Clark is based in New England and enjoys spending time architecting recipes in the kitchen.

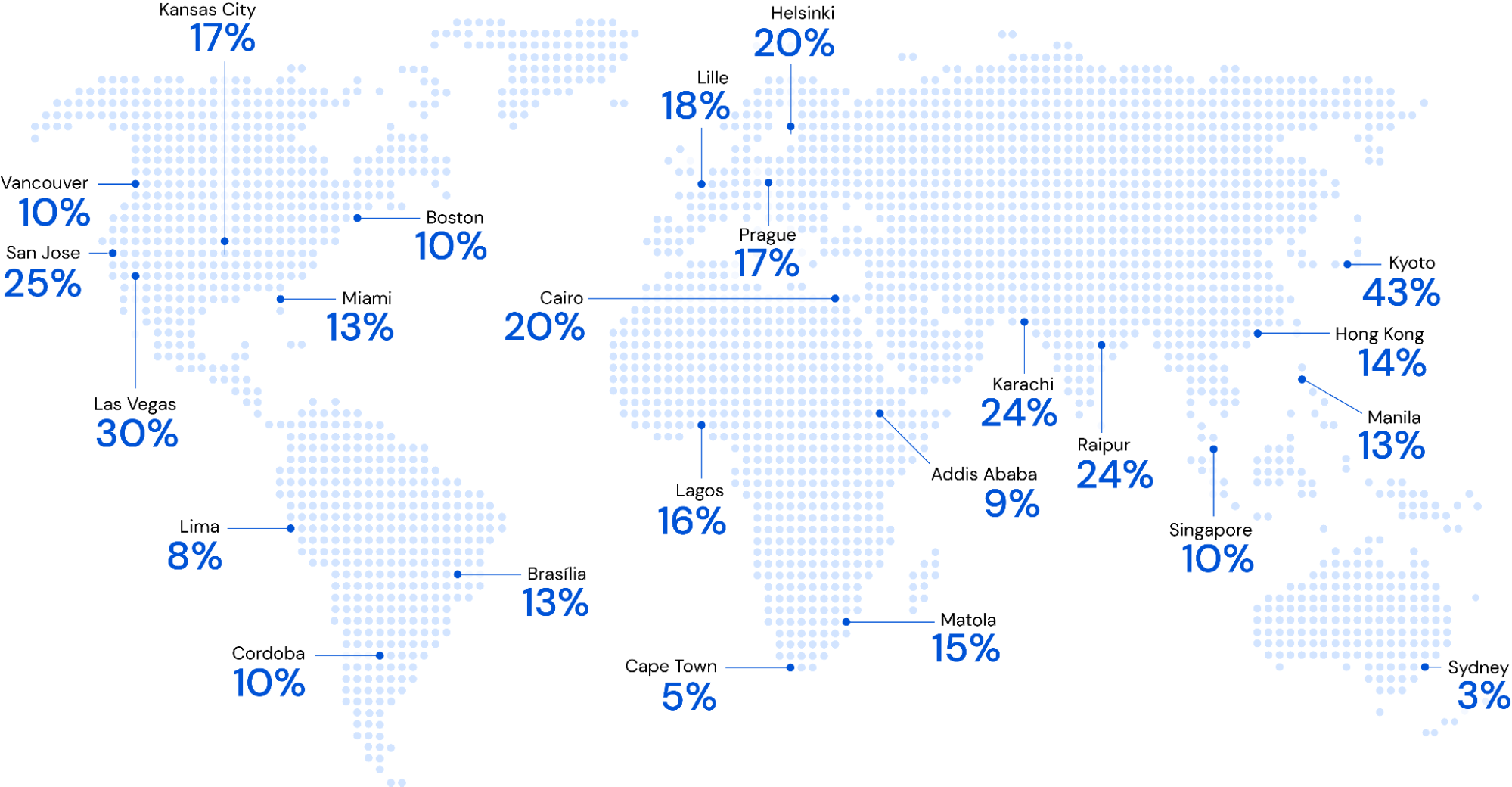

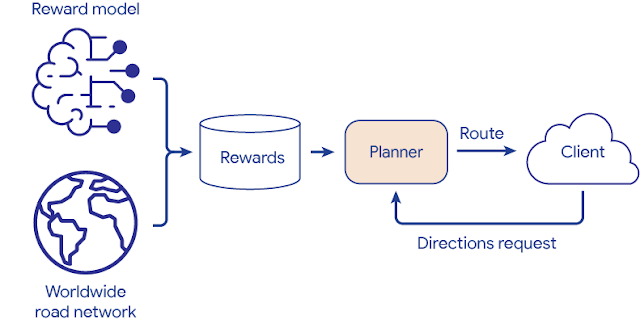

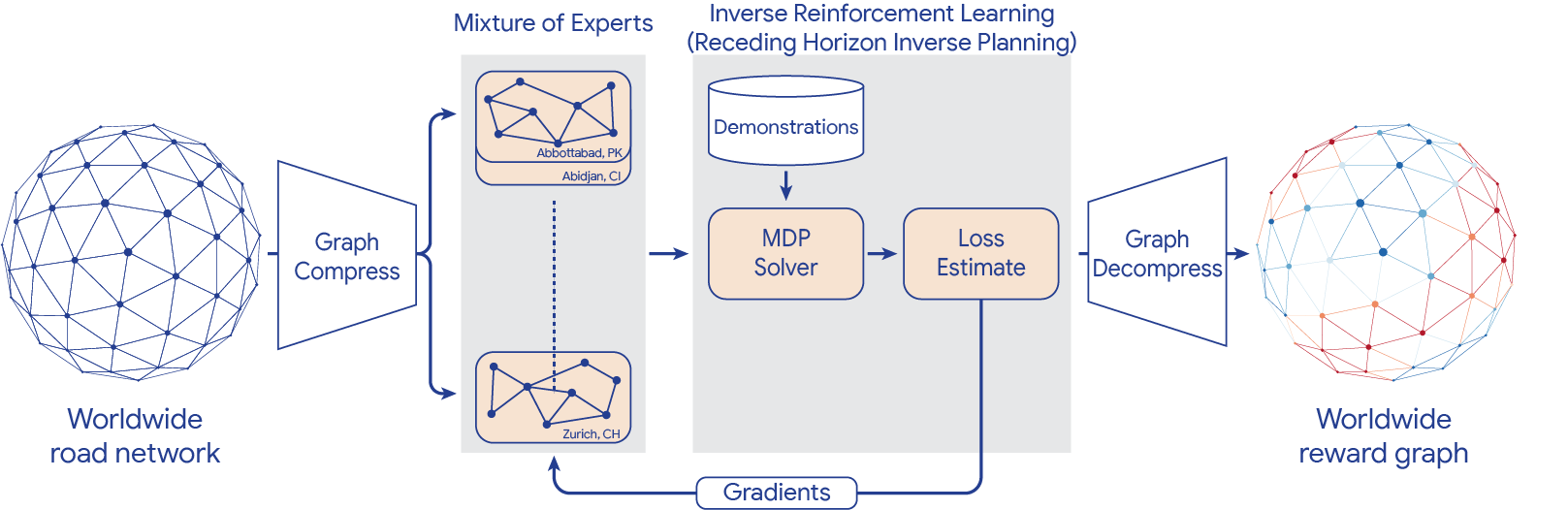

A look at Data Commons, Google’s initiative to make publicly available data more useful for people working on solutions to societal challenges.