Organizations across industries are using automatic text summarization to more efficiently handle vast amounts of information and make better decisions. In the financial sector, investment banks condense earnings reports down to key takeaways to rapidly analyze quarterly performance. Media companies use summarization to monitor news and social media so journalists can quickly write stories on developing issues. Government agencies summarize lengthy policy documents and reports to help policymakers strategize and prioritize goals.

By creating condensed versions of long, complex documents, summarization technology enables users to focus on the most salient content. This leads to better comprehension and retention of critical information. The time savings allow stakeholders to review more material in less time, gaining a broader perspective. With enhanced understanding and more synthesized insights, organizations can make better informed strategic decisions, accelerate research, improve productivity, and increase their impact. The transformative power of advanced summarization capabilities will only continue growing as more industries adopt artificial intelligence (AI) to harness overflowing information streams.

In this post, we explore leading approaches for evaluating summarization accuracy objectively, including ROUGE metrics, METEOR, and BERTScore. Understanding the strengths and weaknesses of these techniques can help guide selection and improvement efforts. The overall goal of this post is to demystify summarization evaluation to help teams better benchmark performance on this critical capability as they seek to maximize value.

Types of summarization

Summarization can generally be divided into two main types: extractive summarization and abstractive summarization. Both approaches aim to condense long pieces of text into shorter forms, capturing the most critical information or essence of the original content, but they do so in fundamentally different ways.

Extractive summarization involves identifying and extracting key phrases, sentences, or segments from the original text without altering them. The system selects parts of the text deemed most informative or representative of the whole. Extractive summarization is useful if accuracy is critical and the summary needs to reflect the exact information from the original text. Example use cases include highlighting specific legal terms, obligations, and rights outlined in the terms of use. The most common techniques used for extractive summarization are term frequency-inverse document frequency (TF-IDF), sentence scoring, the TextRank algorithm, and supervised machine learning (ML).
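As a rough illustration of sentence scoring, the following minimal sketch ranks sentences by the average document-wide frequency of their words and returns the top ones verbatim. It is a simplified stand-in, not TF-IDF or TextRank; the function name `extractive_summary`, the naive regex sentence splitter, and the absence of stop-word filtering are all assumptions made for brevity.

```python
import re
from collections import Counter


def extractive_summary(text: str, num_sentences: int = 1) -> list[str]:
    """Return the highest-scoring sentences, unchanged and in original order."""
    # Naive sentence split on ., !, ? followed by whitespace (assumption:
    # a real system would use a proper sentence tokenizer).
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    # Word frequency over the whole document, lowercased.
    freq = Counter(re.findall(r"[a-z']+", text.lower()))

    def score(sentence: str) -> float:
        words = re.findall(r"[a-z']+", sentence.lower())
        # Average frequency, so long sentences are not favored automatically.
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    chosen = sorted(ranked[:num_sentences])  # restore document order
    return [sentences[i] for i in chosen]


text = "Cats sleep. Cats chase mice and cats eat mice. Dogs bark."
print(extractive_summary(text, num_sentences=2))
```

Because the selected sentences are copied without modification, every sentence in the output appears word for word in the source, which is the defining property of the extractive approach.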

Abstractive summarization goes a step further by generating new phrases and sentences that were not in the original text, essentially paraphrasing and condensing the original content. This approach requires a deeper understanding of the text, because the AI needs to interpret the meaning and then express it in a new, concise form. Large language models (LLMs) are best suited for abstractive summarization because the transformer models use attention mechanisms to focus on relevant parts of the input text when generating summaries. The attention mechanism allows the model to assign different weights to different words or tokens in the input sequence, enabling it to capture long-range dependencies and contextually relevant information.

In addition to these two primary types, there are hybrid approaches that combine extractive and abstractive methods. These approaches might start with extractive summarization to identify the most important content and then use abstractive techniques to rewrite or condense that content into a fluent summary.

The challenge

Finding the optimal method to evaluate summary quality remains an open challenge. As organizations increasingly rely on automatic text summarization to distill key information from documents, the need grows for standardized techniques to measure summarization accuracy. Ideally, these evaluation metrics would quantify how well machine-generated summaries extract the most salient content from source texts and present coherent summaries reflecting the original meaning and context.

However, developing robust evaluation methodologies for text summarization presents difficulties:

- Human-authored reference summaries used for comparison often exhibit high variability based on subjective determinations of importance

- Nuanced aspects of summary quality like fluency, readability, and coherence prove difficult to quantify programmatically

- Wide variation exists across summarization methods from statistical algorithms to neural networks, complicating direct comparisons

Recall-Oriented Understudy for Gisting Evaluation (ROUGE)

ROUGE metrics, such as ROUGE-N and ROUGE-L, play a crucial role in evaluating the quality of machine-generated summaries compared to human-written reference summaries. These metrics focus on assessing the overlap between the content of machine-generated and human-crafted summaries by analyzing n-grams, which are groups of words or tokens. For instance, ROUGE-1 evaluates the match of individual words (unigrams), whereas ROUGE-2 considers pairs of words (bigrams). Additionally, ROUGE-L assesses the longest common subsequence of words between the two texts, allowing for gaps between matched words as long as their relative order is preserved.

To illustrate this, consider the following examples:

- ROUGE-1 metric – ROUGE-1 evaluates the overlap of unigrams (single words) between a generated summary and a reference summary. For example, if a reference summary contains “The quick brown fox jumps,” and the generated summary is “The brown fox jumps quickly,” the ROUGE-1 metric would consider “the,” “brown,” “fox,” and “jumps” as overlapping unigrams. ROUGE-1 focuses on the presence of individual words in the summaries, measuring how well the generated summary captures the key words from the reference summary.

- ROUGE-2 metric – ROUGE-2 assesses the overlap of bigrams (pairs of adjacent words) between a generated summary and a reference summary. For instance, if the reference summary has “The cat is sleeping,” and the generated summary reads “A cat is sleeping,” ROUGE-2 would identify “cat is” and “is sleeping” as overlapping bigrams. ROUGE-2 provides insight into how well the generated summary maintains the sequence and context of word pairs compared to the reference summary.

- ROUGE-N metric – ROUGE-N is a generalized form where N represents any number, allowing evaluation based on n-grams (sequences of N words). Considering N=3, if the reference summary states “The sun is shining brightly,” and the generated summary is “Sun is shining brightly,” ROUGE-3 would recognize “sun is shining” and “is shining brightly” as matching trigrams. ROUGE-N offers flexibility to evaluate summaries based on different lengths of word sequences, providing a more comprehensive assessment of content overlap.

These examples illustrate how ROUGE-1, ROUGE-2, and ROUGE-N metrics function in evaluating automatic summarization or machine translation tasks by comparing generated summaries with reference summaries based on different levels of word sequences.
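ROUGE-L, mentioned earlier, replaces fixed-length n-grams with the longest common subsequence (LCS) of words. The following is a minimal sketch of that idea, assuming lowercased whitespace tokenization and a plain F1 rather than the recall-weighted F-measure used in some ROUGE implementations; the function names are illustrative.

```python
def lcs_length(a: list[str], b: list[str]) -> int:
    """Length of the longest common subsequence, via dynamic programming."""
    dp = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i, x in enumerate(a, 1):
        for j, y in enumerate(b, 1):
            if x == y:
                dp[i][j] = dp[i - 1][j - 1] + 1
            else:
                dp[i][j] = max(dp[i - 1][j], dp[i][j - 1])
    return dp[len(a)][len(b)]


def rouge_l(reference: str, candidate: str) -> dict[str, float]:
    """LCS-based precision, recall, and F1 for one reference/candidate pair."""
    ref = reference.lower().split()
    cand = candidate.lower().split()
    lcs = lcs_length(ref, cand)
    precision = lcs / len(cand) if cand else 0.0
    recall = lcs / len(ref) if ref else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# The LCS here is "the brown fox jumps" (4 words out of 5 on each side).
print(rouge_l("The quick brown fox jumps", "The brown fox jumps quickly"))
```

Unlike ROUGE-2, the subsequence does not need to be contiguous, so the skipped word “quick” does not break the match.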

Calculate a ROUGE-N score

You can use the following steps to calculate a ROUGE-N score:

- Tokenize the generated summary and the reference summary into individual words or tokens using basic tokenization methods like splitting by whitespace or natural language processing (NLP) libraries.

- Generate n-grams (contiguous sequences of N words) from both the generated summary and the reference summary.

- Count the number of overlapping n-grams between the generated summary and the reference summary.

- Calculate precision, recall, and F1 score:

  - Precision – The number of overlapping n-grams divided by the total number of n-grams in the generated summary.

  - Recall – The number of overlapping n-grams divided by the total number of n-grams in the reference summary.

  - F1 score – The harmonic mean of precision and recall, calculated as (2 * precision * recall) / (precision + recall).

- The aggregate F1 score obtained from calculating precision, recall, and F1 score for each row in the dataset is considered as the ROUGE-N score.
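The steps above can be sketched in a few lines of Python. This is a minimal illustration, assuming lowercased whitespace tokenization, clipped n-gram counts, and a simple mean of per-row F1 scores as the aggregate; the function names `rouge_n` and `corpus_rouge_n` are illustrative and not part of any library.

```python
from collections import Counter


def ngram_counts(tokens: list[str], n: int) -> Counter:
    """Count contiguous n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_n(reference: str, candidate: str, n: int = 1) -> dict[str, float]:
    """Precision, recall, and F1 of n-gram overlap for one pair of summaries."""
    ref = ngram_counts(reference.lower().split(), n)
    cand = ngram_counts(candidate.lower().split(), n)
    # Counter intersection clips each n-gram to the smaller of the two counts.
    overlap = sum((ref & cand).values())
    total_cand = sum(cand.values())
    total_ref = sum(ref.values())
    precision = overlap / total_cand if total_cand else 0.0
    recall = overlap / total_ref if total_ref else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


def corpus_rouge_n(pairs: list[tuple[str, str]], n: int = 1) -> float:
    """Mean F1 over (reference, candidate) rows (assumed aggregation)."""
    return sum(rouge_n(ref, cand, n)["f1"] for ref, cand in pairs) / len(pairs)


# Bigram example from earlier: "cat is" and "is sleeping" overlap (2 of 3).
print(rouge_n("The cat is sleeping", "A cat is sleeping", n=2))
```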

Limitations

ROUGE has the following limitations:

- Narrow focus on lexical overlap – The core idea behind ROUGE is to compare the system-generated summary to a set of reference or human-created summaries, and measure the lexical overlap between them. This means ROUGE has a very narrow focus on word-level similarity. It doesn’t actually evaluate semantic meaning, coherence, or readability of the summary. A system could achieve high ROUGE scores by simply extracting sentences word-for-word from the original text, without generating a coherent or concise summary.

- Insensitivity to paraphrasing – Because ROUGE relies on lexical matching, it can’t detect semantic equivalence between words and phrases. Therefore, paraphrasing and use of synonyms will often lead to lower ROUGE scores, even if the meaning is preserved. This disadvantages systems that paraphrase or summarize in an abstractive way.

- Lack of semantic understanding – ROUGE doesn’t evaluate whether the system truly understood the meanings and concepts in the original text. A summary could achieve high lexical overlap with references, while missing the main ideas or containing factual inconsistencies. ROUGE would not identify these issues.

When to use ROUGE

ROUGE is simple and fast to calculate. Use it as a baseline or benchmark for summary quality related to content selection. ROUGE metrics are most effectively employed in scenarios involving abstractive summarization tasks, automatic summarization evaluation, assessments of LLMs, and comparative analyses of different summarization approaches. By using ROUGE metrics in these contexts, stakeholders can quantitatively evaluate the quality and effectiveness of summary generation processes.

Metric for Evaluation of Translation with Explicit Ordering (METEOR)

One of the major challenges in evaluating summarization systems is assessing how well the generated summary flows logically, rather than just selecting relevant words and phrases from the source text. Simply extracting relevant keywords and sentences doesn’t necessarily produce a coherent and cohesive summary. The summary should flow smoothly and connect ideas logically, even if they aren’t presented in the same order as the original document.

METEOR matches words flexibly: it reduces words to their root or base form (for example, after stemming, words like “running” and “runs” both become “run”) and recognizes synonyms, which means it correlates better with human judgments of summary quality. It can identify if important content is preserved, even if the wording differs. This is a key advantage over n-gram based metrics like ROUGE, which only look for exact token matches. METEOR also gives higher scores to summaries that focus on the most salient content from the reference. Lower scores are given to repetitive or irrelevant information. This aligns well with the goal of summarization to keep the most important content only. METEOR is a semantically meaningful metric that can overcome some of the limitations of n-gram matching for evaluating text summarization. The incorporation of stemming and synonyms allows for better assessment of information overlap and content accuracy.

To illustrate this, consider the following examples:

Reference Summary: Leaves fall during autumn.

Generated Summary 1: Leaves drop in fall.

Generated Summary 2: Leaves green in summer.

The words that match between the reference and generated summary 1 are “Leaves” and “fall”:

Reference Summary: Leaves fall during autumn.

Generated Summary 1: Leaves drop in fall.

Even though “fall” and “autumn” are different tokens, METEOR recognizes them as synonyms through its synonym matching. “Drop” and “fall” are likewise identified as a synonym match, because neither word is a stemmed form of the other. For generated summary 2, there are no matches with the reference summary besides “Leaves,” so this summary would receive a much lower METEOR score. The more semantically meaningful matches, the higher the METEOR score. This allows METEOR to better evaluate the content and accuracy of summaries compared to simple n-gram matching.

Calculate a METEOR score

Complete the following steps to calculate a METEOR score:

- Tokenize the generated summary and the reference summary into individual words or tokens using basic tokenization methods like splitting by whitespace or NLP libraries.

- Align the unigrams of the generated summary with those of the reference summary in stages: exact matches first, then stemmed forms (reducing words to their base or root form), then synonyms, where applicable.

- Calculate the unigram precision, recall, and F-mean score from the aligned unigrams, giving more weightage to recall than precision.

- Apply a fragmentation penalty, which grows when the matched unigrams are scattered across many separate chunks instead of appearing in the same order as in the reference. The penalty weights are chosen based on dataset characteristics, task requirements, and the balance between precision and recall. Reduce the F-mean score from the previous step by this penalty to obtain the final METEOR score. The METEOR score ranges from 0–1, where 0 indicates no similarity between the generated summary and reference summary, and 1 indicates perfect alignment. Typically, summarization scores fall between 0–0.6.
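To make the calculation concrete, here is a minimal sketch that follows the formulation in the original METEOR paper: F-mean = 10PR / (R + 9P), penalty = 0.5 × (chunks / matches)³, and score = F-mean × (1 − penalty). Several pieces are assumptions for illustration only: the toy suffix stripper stands in for a real stemmer, the hand-written `SYNONYMS` table stands in for WordNet, and the alignment is greedy rather than the minimum-crossing alignment METEOR actually searches for.

```python
import re

# Tiny hand-written synonym table standing in for WordNet (assumption).
SYNONYMS = {frozenset({"fall", "autumn"}), frozenset({"drop", "fall"})}


def _stem(word: str) -> str:
    # Toy suffix stripper standing in for a real stemmer (assumption).
    for suffix in ("ing", "es", "ed", "s"):
        if word.endswith(suffix) and len(word) > len(suffix) + 2:
            return word[: -len(suffix)]
    return word


def _align(ref: list[str], cand: list[str]) -> dict[int, int]:
    """Greedy staged alignment (exact, stem, synonym): candidate idx -> reference idx."""
    stages = [
        lambda c, r: c == r,
        lambda c, r: _stem(c) == _stem(r),
        lambda c, r: frozenset({c, r}) in SYNONYMS,
    ]
    mapping: dict[int, int] = {}
    used_ref: set[int] = set()
    for matches in stages:
        for ci, c in enumerate(cand):
            if ci in mapping:
                continue
            for ri, r in enumerate(ref):
                if ri not in used_ref and matches(c, r):
                    mapping[ci] = ri
                    used_ref.add(ri)
                    break
    return mapping


def meteor_sketch(reference: str, candidate: str) -> float:
    ref = re.findall(r"[a-z]+", reference.lower())
    cand = re.findall(r"[a-z]+", candidate.lower())
    mapping = _align(ref, cand)
    m = len(mapping)
    if m == 0:
        return 0.0
    precision, recall = m / len(cand), m / len(ref)
    f_mean = 10 * precision * recall / (recall + 9 * precision)
    # A chunk is a run of matches that is contiguous in both texts.
    chunks, prev = 0, None
    for ci, ri in sorted(mapping.items()):
        if prev is None or ci != prev[0] + 1 or ri != prev[1] + 1:
            chunks += 1
        prev = (ci, ri)
    penalty = 0.5 * (chunks / m) ** 3
    return f_mean * (1 - penalty)


reference = "Leaves fall during autumn."
print(meteor_sketch(reference, "Leaves drop in fall."))
print(meteor_sketch(reference, "Leaves green in summer."))
```

With this greedy alignment, the first candidate scores higher than the second because it shares two words with the reference rather than one. Production implementations differ in tokenization, synonym resources, and parameter values, so their scores will not match this sketch exactly.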

Limitations

When employing the METEOR metric for evaluating summarization tasks, several challenges may arise:

- Semantic complexity – METEOR’s emphasis on semantic similarity can struggle to capture the nuanced meanings and context in complex summarization tasks, potentially leading to inaccuracies in evaluation.

- Reference variability – Variability in human-generated reference summaries can impact METEOR scores, because differences in reference content may affect the evaluation of machine-generated summaries.

- Linguistic diversity – The effectiveness of METEOR may vary across languages due to linguistic variations, syntax differences, and semantic nuances, posing challenges in multilingual summarization evaluations.

- Length discrepancy – Evaluating summaries of varying lengths can be challenging for METEOR, because discrepancies in length compared to the reference summary may result in penalties or inaccuracies in assessment.

- Parameter tuning – Optimizing METEOR’s parameters for different datasets and summarization tasks can be time-consuming and require careful tuning to make sure the metric provides accurate evaluations.

- Evaluation bias – There is a risk of evaluation bias with METEOR if not properly adjusted or calibrated for specific summarization domains or tasks. This can potentially lead to skewed results and affect the reliability of the evaluation process.

By being aware of these challenges and considering them when using METEOR as a metric for summarization tasks, researchers and practitioners can navigate potential limitations and make more informed decisions in their evaluation processes.

When to use METEOR

METEOR is commonly used to automatically evaluate the quality of text summaries. It is preferable to use METEOR as an evaluation metric when the order of ideas, concepts, or entities in the summary matters, because its fragmentation penalty rewards summaries whose matched words appear in the same sequence as in the reference. Unlike metrics like ROUGE, which rely on exact overlap of n-grams with reference summaries, METEOR also matches stems, synonyms, and paraphrases, and incorporates WordNet synonyms and stemmed tokens when aligning words. As a result, METEOR works better when there can be multiple correct ways of summarizing the original text: summaries that are semantically similar but use different words or phrasing will still score well. METEOR is a good choice when semantic similarity, order of ideas, and fluent phrasing are important for judging summary quality. It is less appropriate for tasks where only lexical overlap with reference summaries matters.

BERTScore

Surface-level lexical measures like ROUGE and METEOR evaluate summarization systems by comparing the word overlap between a candidate summary and a reference summary. However, they rely heavily on exact string matching between words and phrases. This means they may miss semantic similarities between words and phrases that have different surface forms but similar underlying meanings. By relying only on surface matching, these metrics may underestimate the quality of system summaries that use synonymous words or paraphrase concepts differently from reference summaries. Two summaries could convey nearly identical information but receive low surface-level scores due to vocabulary differences.

BERTScore is a way to automatically evaluate how good a summary is by comparing it to a reference summary written by a human. It uses BERT, a popular NLP technique, to understand the meaning and context of words in the candidate summary and reference summary. Specifically, it looks at each word or token in the candidate summary and finds the most similar word in the reference summary based on the BERT embeddings, which are vector representations of the meaning and context of each word. It measures the similarity using cosine similarity, which tells how close the vectors are to each other. For each word in the candidate summary, it finds the most related word in the reference summary using BERT’s understanding of language. It compares all these word similarities across the whole summary to get an overall score of how semantically similar the candidate summary is to the reference summary. The more similar the words and meanings captured by BERT, the higher the BERTScore. This allows it to automatically evaluate the quality of a generated summary by comparing it to a human reference without needing human evaluation each time.

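To illustrate this, imagine you have a machine-generated summary: “The quick brown fox jumps over the lazy dog.” Now, let’s consider a human-crafted reference summary: “A fast brown fox leaps over a sleeping canine.” The two sentences share few exact words, but pairs such as “quick” and “fast,” “jumps” and “leaps,” and “dog” and “canine” have similar contextual embeddings, so BERTScore can still rate the summaries as close in meaning.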

Calculate a BERTScore

Complete the following steps to calculate a BERTScore:

- BERTScore uses contextual embeddings to represent each token in both the candidate (machine-generated) and reference (human-crafted) sentences. Contextual embeddings are a type of word representation in NLP that captures the meaning of a word based on its context within a sentence or text. Unlike traditional word embeddings that assign a fixed vector to each word regardless of its context, contextual embeddings consider the surrounding words to generate a unique representation for each word depending on how it is used in a specific sentence.

- The metric then computes the similarity between each token in the candidate sentence with each token in the reference sentence using cosine similarity. Cosine similarity helps us quantify how closely related two sets of data are by focusing on the direction they point in a multi-dimensional space, making it a valuable tool for tasks like search algorithms, NLP, and recommendation systems.

- By comparing the contextual embeddings and computing similarity scores for all tokens, BERTScore generates a comprehensive evaluation that captures the semantic relevance and context of the generated summary compared to the human-crafted reference.

- The final BERTScore output provides a similarity score that reflects how well the machine-generated summary aligns with the reference summary in terms of meaning and context.
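The matching step described above can be sketched as follows. This is not a real BERTScore computation: the three-dimensional vectors in `TOY_EMBEDDINGS` are hand-made placeholders standing in for contextual embeddings from a BERT model, and the function names are illustrative. Only the greedy cosine matching and the precision, recall, and F1 aggregation reflect how the metric combines token similarities.

```python
import math

# Hand-made placeholder vectors (assumption); real BERTScore uses
# high-dimensional contextual embeddings produced by a BERT model.
TOY_EMBEDDINGS = {
    "fox": [0.9, 0.1, 0.0],
    "dog": [0.1, 0.9, 0.0],
    "canine": [0.15, 0.85, 0.05],
    "jumps": [0.0, 0.1, 0.9],
    "leaps": [0.05, 0.1, 0.85],
}


def cosine(u: list[float], v: list[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def greedy_match_score(cand_vecs, ref_vecs) -> dict[str, float]:
    """Greedy matching: each token pairs with its most similar counterpart."""
    # Precision: how well each candidate token is covered by the reference.
    precision = sum(max(cosine(c, r) for r in ref_vecs) for c in cand_vecs) / len(cand_vecs)
    # Recall: how well each reference token is covered by the candidate.
    recall = sum(max(cosine(r, c) for c in cand_vecs) for r in ref_vecs) / len(ref_vecs)
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


candidate = [TOY_EMBEDDINGS[w] for w in ["fox", "jumps", "dog"]]
reference = [TOY_EMBEDDINGS[w] for w in ["fox", "leaps", "canine"]]
print(greedy_match_score(candidate, reference))
```

Even though only “fox” matches exactly, the toy score stays high because “jumps” and “leaps,” and “dog” and “canine,” point in nearly the same direction. In practice, the embeddings would come from a pre-trained model, for example through an open source BERTScore implementation.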

In essence, BERTScore goes beyond traditional metrics by considering the semantic nuances and context of sentences, offering a more sophisticated evaluation that closely mirrors human judgment. This advanced approach enhances the accuracy and reliability of evaluating summarization tasks, making BERTScore a valuable tool in assessing text generation systems.

Limitations

Although BERTScore offers significant advantages in evaluating summarization tasks, it also comes with certain limitations that need to be considered:

- Computational intensity – BERTScore can be computationally intensive due to its reliance on pre-trained language models like BERT. This can lead to longer evaluation times, especially when processing large volumes of text data.

- Dependency on pre-trained models – The effectiveness of BERTScore is highly dependent on the quality and relevance of the pre-trained language model used. In scenarios where the pre-trained model may not adequately capture the nuances of the text, the evaluation results may be affected.

- Scalability – Scaling BERTScore for large datasets or real-time applications can be challenging due to its computational demands. Implementing BERTScore in production environments may require optimization strategies to provide efficient performance.

- Domain specificity – BERTScore’s performance may vary across different domains or specialized text types. Adapting the metric to specific domains or tasks may require fine-tuning or adjustments to produce accurate evaluations.

- Interpretability – Although BERTScore provides a comprehensive evaluation based on contextual embeddings, interpreting the specific reasons behind the similarity scores generated for each token can be complex and may require additional analysis.

- Reference dependence – Although BERTScore is more tolerant of wording differences than lexical metrics, it still compares the generated summary against a reference summary. It may not fully capture all aspects of summarization quality, particularly in scenarios where well-crafted human references are essential for assessing content relevance and coherence.

Acknowledging these limitations can help you make informed decisions when using BERTScore as a metric for evaluating summarization tasks, providing a balanced understanding of its strengths and constraints.

When to use BERTScore

BERTScore can evaluate the quality of text summarization by comparing a generated summary to a reference summary. It uses neural networks like BERT to measure semantic similarity beyond just exact word or phrase matching. This makes BERTScore very useful when semantic fidelity, meaning preservation of the full meaning and content, is critical for your summarization task. BERTScore will give higher scores to summaries that convey the same information as the reference summary, even if they use different words and sentence structures. The bottom line is that BERTScore is ideal for summarization tasks where retaining the full semantic meaning, not just keywords or topics, is vital. Its neural scoring allows it to compare meaning beyond surface-level word matching. This makes it suitable for cases where subtle differences in wording can substantially alter overall meaning and implications. BERTScore, in particular, excels in capturing semantic similarity, which is crucial for assessing the quality of abstractive summaries like those produced by Retrieval Augmented Generation (RAG) models.

Model evaluation frameworks

Model evaluation frameworks are essential for accurately gauging the performance of various summarization models. These frameworks are instrumental in comparing models, providing coherence between generated summaries and source content, and pinpointing deficiencies in evaluation methods. By conducting thorough assessments and consistent benchmarking, these frameworks propel text summarization research by advocating standardized evaluation practices and enabling multifaceted model comparisons.

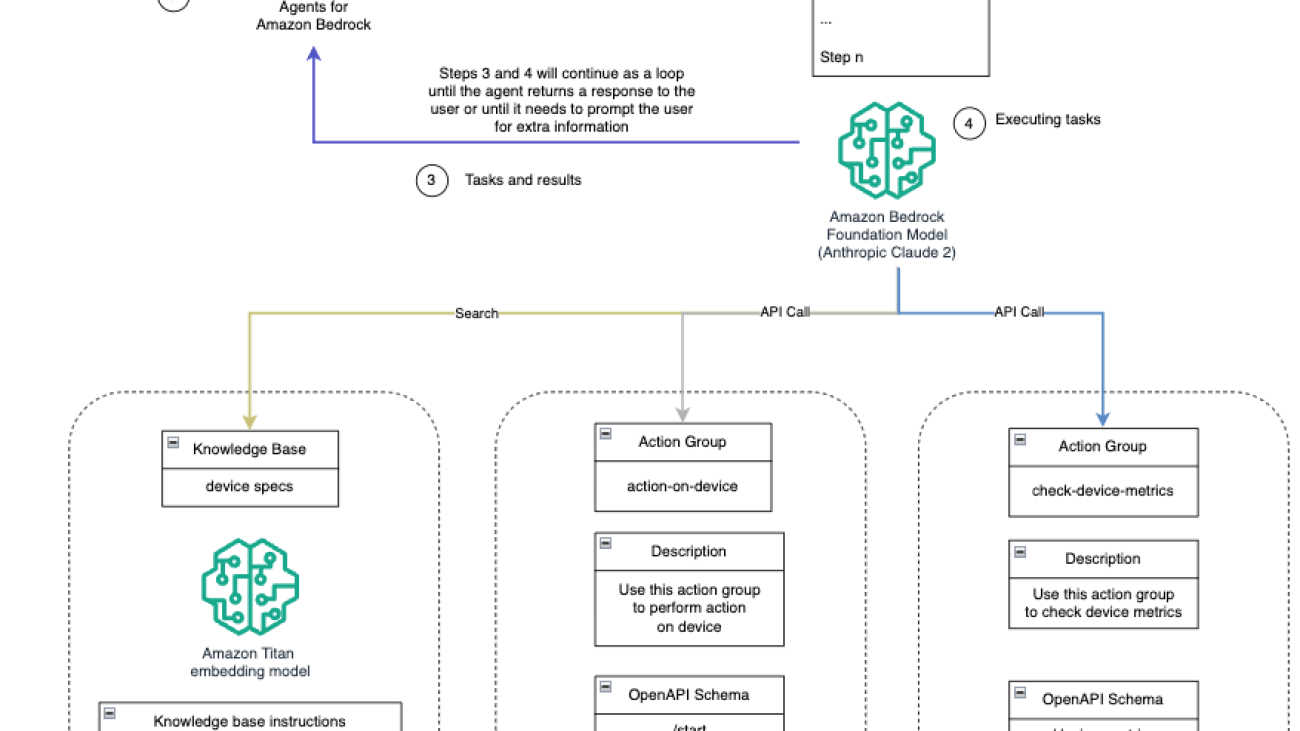

In AWS, the FMEval library within Amazon SageMaker Clarify streamlines the evaluation and selection of foundation models (FMs) for tasks like text summarization, question answering, and classification. It empowers you to evaluate FMs based on metrics such as accuracy, robustness, creativity, bias, and toxicity, supporting both automated and human-in-the-loop evaluations for LLMs. With UI-based or programmatic evaluations, FMEval generates detailed reports with visualizations to quantify model risks like inaccuracies, toxicity, or bias, helping organizations align with their responsible generative AI guidelines. In this section, we demonstrate how to use the FMEval library.

Evaluate Claude v2 on summarization accuracy using Amazon Bedrock

The following code snippet is an example of how to interact with the Anthropic Claude model using Python code:

In simple terms, this code performs the following actions:

- Import the necessary libraries, including `json`, to work with JSON data.

- Define the model ID as `anthropic.claude-v2` and set the content type for the request.

- Create a `prompt_data` variable that structures the input data for the Claude model. In this case, it asks the question “Who is Barack Obama?” and expects a response from the model.

- Construct a JSON object named `body` that includes the prompt data, and specify additional parameters like the maximum number of tokens to generate.

- Invoke the Claude model using `bedrock_runtime.invoke_model` with the defined parameters.

- Parse the response from the model, extract the completion (generated text), and print it out.
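The steps above can be sketched as follows. This is a minimal sketch rather than the post's exact snippet: it assumes the boto3 `bedrock-runtime` client and the Claude v2 text-completion request format (`prompt` and `max_tokens_to_sample`). The helper names `build_request_body` and `ask_claude`, the `us-east-1` default Region, and the 500-token default are assumptions for illustration.

```python
import json

MODEL_ID = "anthropic.claude-v2"
CONTENT_TYPE = "application/json"


def build_request_body(question: str, max_tokens: int = 500) -> str:
    """Build the JSON request body for a Claude v2 text completion."""
    # Claude v2 text-completion prompts use Human/Assistant turns.
    prompt_data = f"\n\nHuman: {question}\n\nAssistant:"
    return json.dumps({"prompt": prompt_data, "max_tokens_to_sample": max_tokens})


def ask_claude(question: str, region_name: str = "us-east-1") -> str:
    """Invoke the model through Amazon Bedrock and return the generated text."""
    # Imported here so the request-building helper works without boto3.
    import boto3

    bedrock_runtime = boto3.client("bedrock-runtime", region_name=region_name)
    response = bedrock_runtime.invoke_model(
        body=build_request_body(question),
        modelId=MODEL_ID,
        accept=CONTENT_TYPE,
        contentType=CONTENT_TYPE,
    )
    # The response body is a stream containing a JSON document.
    response_body = json.loads(response["body"].read())
    return response_body.get("completion", "")


# Example call (requires AWS credentials and access to the model):
# print(ask_claude("Who is Barack Obama?"))
```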

Make sure the AWS Identity and Access Management (IAM) role associated with the Amazon SageMaker Studio user profile has access to the Amazon Bedrock models being invoked. Refer to Identity-based policy examples for Amazon Bedrock for guidance on best practices and examples of identity-based policies for Amazon Bedrock.

Using the FMEval library to evaluate the summarized output from Claude

We use the following code to evaluate the summarized output:

In the preceding code snippet, to evaluate text summarization using the FMEval library, we complete the following steps:

- Create a `ModelRunner` to perform invocation on your LLM. The FMEval library provides built-in support for Amazon SageMaker endpoints and Amazon SageMaker JumpStart LLMs. You can also extend the `ModelRunner` interface for any LLMs hosted anywhere.

- Use supported `eval_algorithms` like toxicity, summarization, accuracy, semantic, and robustness, based on your evaluation needs.

- Customize the evaluation configuration parameters for your specific use case.

- Use the evaluation algorithm with either built-in or custom datasets to evaluate your LLM model. The dataset used in this case is sourced from the following GitHub repo.

Refer to the developer guide and examples for detailed usage of evaluation algorithms.

The following table summarizes the results of the evaluation.

| model_input | model_output | target_output | prompt | scores | meteor_score | rouge_score | bert_score |
| --- | --- | --- | --- | --- | --- | --- | --- |
| John Edward Bates, formerly of Spalding, Linco… | I cannot make any definitive judgments, as th… | A former Lincolnshire Police officer carried o… | Human: John Edward Bates, formerly of Spalding… | [{‘name’: ‘meteor’, ‘value’: 0.101010101010101… | 0.10101 | 0 | 0.557155 |
| 23 October 2015 Last updated at 17:44 BST\nIt’… | Here are some key points about hurricane/trop… | Hurricane Patricia has been rated as a categor… | Human: 23 October 2015 Last updated at 17:44 B… | [{‘name’: ‘meteor’, ‘value’: 0.102339181286549… | 0.102339 | 0.018265 | 0.441421 |
| Ferrari appeared in a position to challenge un… | Here are the key points from the article:\n\n… | Lewis Hamilton stormed to pole position at the… | Human: Ferrari appeared in a position to chall… | [{‘name’: ‘meteor’, ‘value’: 0.322543352601156… | 0.322543 | 0.078212 | 0.606487 |
| The Bath-born player, 28, has made 36 appearan… | Okay, let me summarize the key points:\n\n- E… | Newport Gwent Dragons number eight Ed Jackson | Human: The Bath-born player, 28, has made 36 a… | [{‘name’: ‘meteor’, ‘value’: 0.105740181268882… | 0.10574 | 0.012987 | 0.539488 |
| Weaknesses in the way mice swapped data with c… | Here are the key points I gathered from the a… | Hackers could gain access to home and | Human: Weaknesses in the way mice swapped data | [{‘name’: ‘meteor’, ‘value’: 0.201048289433848… | 0.201048 | 0.021858 | 0.526947 |

Check out the sample notebook for more details about the summarization evaluation that we discussed in this post.

Conclusion

ROUGE, METEOR, and BERTScore all measure the quality of machine-generated summaries, but focus on different aspects like lexical overlap, fluency, or semantic similarity. Make sure to select the metric that aligns with what defines “good” for your specific summarization use case. You can also use a combination of metrics. This provides a more well-rounded evaluation and guards against potential weaknesses of any individual metric. With the right measurements, you can iteratively improve your summarizers to meet whichever notion of accuracy matters most.

Additionally, FM and LLM evaluation is necessary to be able to productionize these models at scale. With FMEval, you get a vast set of built-in algorithms across many NLP tasks, but also a scalable and flexible tool for large-scale evaluations of your own models, datasets, and algorithms. To scale up, you can use this package in your LLMOps pipelines to evaluate multiple models. To learn more about FMEval in AWS and how to use it effectively, refer to Use SageMaker Clarify to evaluate large language models. For further understanding and insights into the capabilities of SageMaker Clarify in evaluating FMs, see Amazon SageMaker Clarify Makes It Easier to Evaluate and Select Foundation Models.

About the Authors

Dinesh Kumar Subramani is a Senior Solutions Architect based in Edinburgh, Scotland. He specializes in artificial intelligence and machine learning, and is a member of the technical field community within Amazon. Dinesh works closely with UK Central Government customers to solve their problems using AWS services. Outside of work, Dinesh enjoys spending quality time with his family, playing chess, and exploring a diverse range of music.

Pranav Sharma is an AWS leader driving technology and business transformation initiatives across Europe, the Middle East, and Africa. He has experience in designing and running artificial intelligence platforms in production that support millions of customers and deliver business outcomes. He has played technology and people leadership roles for Global Financial Services organizations. Outside of work, he likes to read, play tennis with his son, and watch movies.

Ameer Hakme is an AWS Solutions Architect based in Pennsylvania. He collaborates with Independent Software Vendors (ISVs) in the Northeast region, assisting them in designing and building scalable and modern platforms on the AWS Cloud. An expert in AI/ML and generative AI, Ameer helps customers unlock the potential of these cutting-edge technologies. In his leisure time, he enjoys riding his motorcycle and spending quality time with his family.

Sharon Li is an AI/ML Solutions Architect at Amazon Web Services based in Boston, with a passion for designing and building Generative AI applications on AWS. She collaborates with customers to leverage AWS AI/ML services for innovative solutions.

Kawsar Kamal is a senior solutions architect at Amazon Web Services with over 15 years of experience in the infrastructure automation and security space. He helps clients design and build scalable DevSecOps and AI/ML solutions in the Cloud.

Yunfei Bai is a Senior Solutions Architect at AWS. With a background in AI/ML, data science, and analytics, Yunfei helps customers adopt AWS services to deliver business results. He designs AI/ML and data analytics solutions that overcome complex technical challenges and drive strategic objectives. Yunfei has a PhD in Electronic and Electrical Engineering. Outside of work, Yunfei enjoys reading and music.

Elad Dwek is a Construction Technology Manager at Amazon. With a background in construction and project management, Elad helps teams adopt new technologies and data-based processes to deliver construction projects. He identifies needs and solutions, and facilitates the development of the bespoke attributes. Elad has an MBA and a BSc in Structural Engineering. Outside of work, Elad enjoys yoga, woodworking, and traveling with his family.

Luca Cerabone is a Business Intelligence Engineer at Amazon. Drawing from his background in data science and analytics, Luca crafts tailored technical solutions to meet the unique needs of his customers, driving them towards more sustainable and scalable processes. Armed with an MSc in Data Science, Luca enjoys engaging in DIY projects, gardening and experimenting with culinary delights in his leisure moments.

Arik Porat is a Senior Startups Solutions Architect at Amazon Web Services. He works with startups to help them build and design their solutions in the cloud, and is passionate about machine learning and container-based solutions. In his spare time, Arik likes to play chess and video games.

Eliran Efron is a Startups Solutions Architect at Amazon Web Services. Eliran is a data and compute enthusiast who assists startups in designing their system architectures. In his spare time, Eliran likes to build and race cars in Touring races and build IoT devices.
