Here’s one Googler’s experience of experimenting our newest AI products and updates at Google I/O 2024.Read More

Here’s one Googler’s experience of experimenting our newest AI products and updates at Google I/O 2024.Read More

Detect email phishing attempts using Amazon Comprehend

Phishing is the process of attempting to acquire sensitive information such as usernames, passwords and credit card details by masquerading as a trustworthy entity using email, telephone or text messages. There are many types of phishing based on the mode of communication and targeted victims. In an Email phishing attempt, an email is sent as a mode of communication to group of people. There are traditional rule-based approaches to detect email phishing. However, new trends are emerging that are hard to handle with a rule-based approach. There is need to use machine learning (ML) techniques to augment rule-based approaches for email phishing detection.

In this post, we show how to use Amazon Comprehend Custom to train and host an ML model to classify if the input email is an phishing attempt or not. Amazon Comprehend is a natural-language processing (NLP) service that uses ML to uncover valuable insights and connections in text. You can use Amazon Comprehend to identify the language of the text; extract key phrases, places, people, brands, or events; understand sentiment about products or services; and identify the main topics from a library of documents. You can customize Amazon Comprehend for your specific requirements without the skillset required to build ML-based NLP solutions. Comprehend Custom builds customized NLP models on your behalf, using training data that you provide. Comprehend Custom supports custom classification and custom entity recognition.

Solution overview

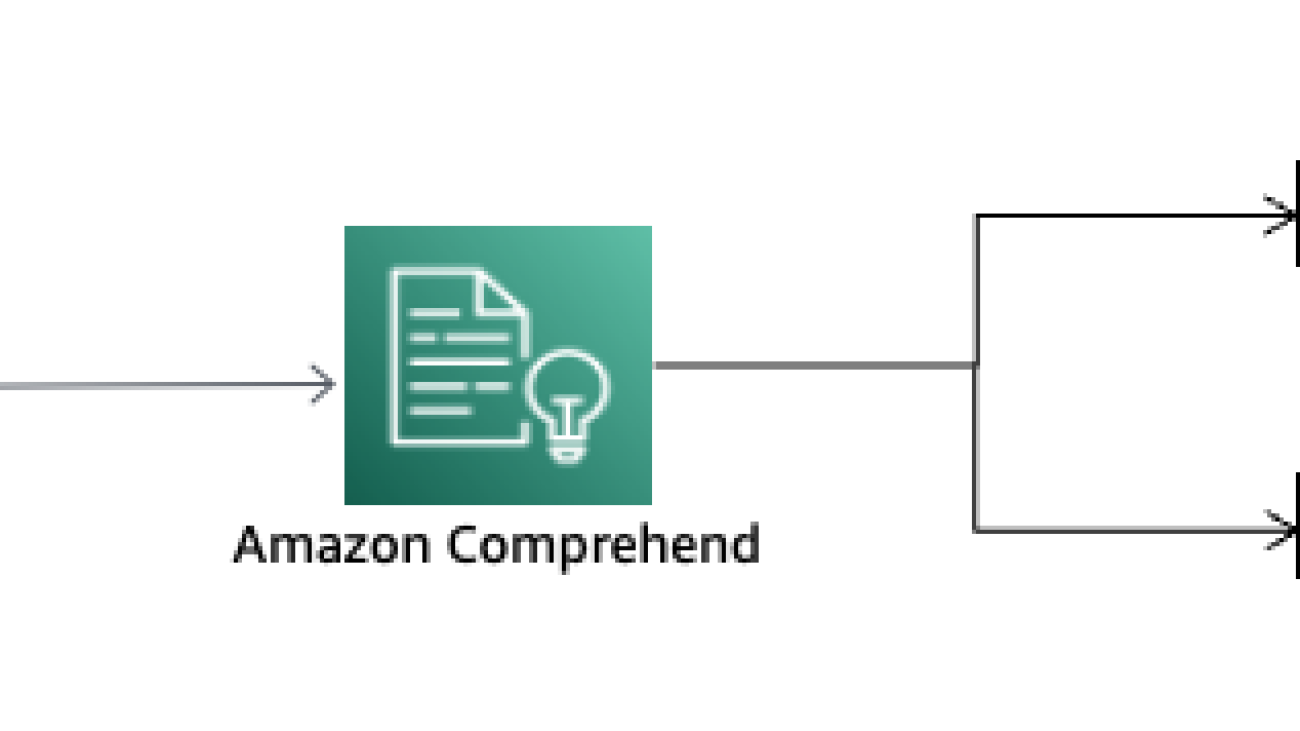

This post explains how you can use Amazon Comprehend to easily train and host an ML based model to detect phishing attempt. The following diagram shows how the phishing detection works.

You can use this solution with your email servers in which emails are passed through this phishing detector. When an email is flagged as a phishing attempt, the email recipient still gets the email in their mailbox, but they can be shown an additional banner highlighting a warning to the user.

You can use this solution for experimentation with the use case, but AWS recommends building a training pipeline for your environments. For details on how to build a classification pipeline with Amazon Comprehend, see Build a classification pipeline with Amazon Comprehend custom classification.

We walk through the following steps to build the phishing detection model:

- Collect and prepare the dataset.

- Load the data in an Amazon Simple Storage Service (Amazon S3) bucket.

- Create the Amazon Comprehend custom classification model.

- Create the Amazon Comprehend custom classification model endpoint.

- Test the model.

Prerequisites

Before diving into this use case, complete the following prerequisites:

- Set up an AWS account.

- Create an S3 bucket. For instructions, see Create your first S3 bucket.

- Download the email-trainingdata.csv and upload the file to the S3 bucket.

Collect and prepare the dataset

Your training data should have both phishing and non-phishing emails. Email users with in the organization are asked to report phishing through their email clients. Gather all these phishing reports and examples of non-phishing emails to prepare the training data. You should have a minimum 10 examples per class. Label phishing emails as phishing and non-phishing emails as nonphishing. For minimum training requirements, see General quotas for document classification. Although minimum labels per class is a starting point, it’s recommended to provide hundreds of labels per class for performance on classification tasks across new inputs.

For custom classification, you train the model in either single-label mode or multi-label mode. Single-label mode associates a single class with each document. Multi-label mode associates one or more classes with each document. For this case, we will use single-label mode – phishing or nonphishing. The individual classes are mutually exclusive. For example, you can classify an email as phishing or not-phishing, but not both.

Custom classification supports models that you train with plain-text documents and models that you train with native documents (such as PDF, Word, or images). For more information about classifier models and their supported document types, see Training classification models. For a plain-text model, you can provide classifier training data as a CSV file or as an augmented manifest file that you create using Amazon SageMaker Ground Truth. The CSV file or augmented manifest file includes the text for each training document, and its associated labels.For a native document model, you provide classifier training data as a CSV file. The CSV file includes the file name for each training document and its associated labels. You include the training documents in the S3 input folder for the training job.

For this case, we will train a plain-text model using CSV file format. For each row, the first column contains the class label value. The second column contains an example text document for that class. Each row must end with n or rn characters.

The following example shows a CSV file containing two documents.

CLASS,Text of document 1

CLASS,Text of document 2

The following example shows two rows of a CSV file that trains a custom classifier to detect whether an email message is phishing:

phishing, “Hi, we need account details and SSN information to complete the payment. Please furnish your credit card details in the attached form.”

nonphishing,” Dear Sir / Madam, your latest statement was mailed to your communication address. After your payment is received, you will receive a confirmation text message at your mobile number. Thanks, customer support”

For information about preparing your training documents, see Preparing classifier training data.

Load the data in the S3 bucket

Load the training data in CSV format to the S3 bucket you created in the prerequisite steps. For instructions, refer to Uploading objects.

Create the Amazon Comprehend custom classification model

Custom classification supports two types of classifier models: plain-text models and native document models. A plain-text model classifies documents based on their text content. You can train the plain-text model using documents in one of following languages: English, Spanish, German, Italian, French, or Portuguese. The training documents for a given classifier must all use the same language. A native document model has the ability to process both scanned or digital semi-structured documents like PDFs, Microsoft Word documents, and images in their native format. A native document model also classifies documents based on text content. A native document model can also use additional signals, such as from the layout of the document. You train a native document model with native documents for the model to learn the layout information. You train the model using semi-structured documents, which includes the following document types such as digital and scanned PDF documents and Word documents; Images sunch as JPG files, PNG files, and single-page TIFF files and Amazon Textract API output JSON files. AWS recommends using a plain-text model to classify plain-text documents and a native document model to classify semi-structured documents.

Data specification for the custom classification model can be represented as follows.

You can train a custom classifier using either the Amazon Comprehend console or API. Allow several minutes to a few hours for the classification model creation to complete. The length of time varies based on the size of your input documents.

For training a customer classifier on the Amazon Comprehend console, set the following data specification options.

On the Classifiers page of the Amazon Comprehend console, the new classifier appears in the table, showing Submitted as its status. When the classifier starts processing the training documents, the status changes to Training. When a classifier is ready to use, the status changes to Trained or Trained with warnings. If the status is Trained with Warnings, review the skipped files folder in the classifier training output.

If Amazon Comprehend encountered errors during creation or training, the status changes to In error. You can choose a classifier job in the table to get more information about the classifier, including any error messages.

After training the model, Amazon Comprehend tests the custom classifier model. If you don’t provide a test dataset, Amazon Comprehend trains the model with 90% of the training data. It reserves 10% of the training data to use for testing. If you do provide a test dataset, the test data must include at least one example for each unique label in the training dataset.

After Amazon Comprehend completes the custom classifier model training, it creates output files in the Amazon S3 output location that you specified in the CreateDocumentClassifier API request or the equivalent Amazon Comprehend console request. These output files are a confusion matrix and additional outputs for native document models. The format of the confusion matrix varies, depending on whether you trained your classifier using multi-class mode or multi-label mode.

After Amazon Comprehend creates the classifier model, the confusion matrix is available in the confusion_matrix.json file in the Amazon S3 output location. This confusion matrix provides metrics on how well the model performed in training. This matrix shows a matrix of labels that the model predicted, compared to the actual document labels. Amazon Comprehend uses a portion of the training data to create the confusion matrix. The following JSON file represents the matrix in confusion_matrix.json as an example.

Amazon Comprehend provides metrics to help you estimate how well a custom classifier performs. Amazon Comprehend calculates the metrics using the test data from the classifier training job. The metrics accurately represent the performance of the model during training, so they approximate the model performance for classification of similar data.

Use the Amazon Comprehend console or API operations such as DescribeDocumentClassifier to retrieve the metrics for a custom classifier.

The actual output of many binary classification algorithms is a prediction score. The score indicates the system’s certainty that the given observation belongs to the positive class. To make the decision about whether the observation should be classified as positive or negative, as a consumer of this score, you interpret the score by picking a classification threshold and comparing the score against it. Any observations with scores higher than the threshold are predicted as the positive class, and scores lower than the threshold are predicted as the negative class.

Create the Amazon Comprehend custom classification model endpoint

After you train a custom classifier, you can classify documents using Real-time analysis or an analysis job. Real-time analysis takes a single document as input and returns the results synchronously. An analysis job is an asynchronous job to analyze large documents or multiple documents in one batch. The following are the different options for using the custom classifier model.

Create an endpoint for the trained model. For instructions, refer to Real-tome analysis for customer classification (console). Amazon Comprehend assigns throughput to an endpoint using Inference Units (IU). An IU represents data throughput of 100 characters per second. You can provision the endpoint with up to 10 IU. You can scale the endpoint throughput either up or down by updating the endpoint. Endpoints are billed on 1-second increments, with a minimum of 60 seconds. Charges will continue to incur from the time you start the endpoint until it is deleted even if no documents are analyzed.

Test the Model

After the endpoint is ready, you can run the real-time analysis from the Amazon Comprehend console.

The sample input represents the email text, which is used for real-time analysis to detect if the email text is a phishing attempt or not.

Amazon Comprehend analyzes the input data using the custom model. Amazon Comprehend displays the discovered classes, along with a confidence assessment for each class. The insights section shows the inference results with confidence levels of the nonphishing and phishing classes. You can decide the threshold to decide the class of the inference. In this case, nonphishing is the inference results because this has more confidence than the phishing class. The model detects the input email text is a non-phishing email.

To integrate this capability of phishing detection in your real-world applications, you can use the Amazon API Gateway REST API with an AWS Lambda integration. Refer to the serverless pattern in Amazon API Gateway to AWS Lambda to Amazon Comprehend to know more.

Clean up

When you no longer need your endpoint, you should delete it so that you stop incurring costs from it. Also, delete the data file from S3 bucket. For more information on costs, see Amazon Comprehend Pricing.

Conclusion

In this post, we walked you through the steps to create a phishing attempt detector using Amazon Comprehend custom classification. You can customize Amazon Comprehend for your specific requirements without the skillset required to build ML-based NLP solutions.

You can also visit the Amazon Comprehend Developer Guide, GitHub repository and Amazon Comprehend developer resources for videos, tutorials, blogs, and more.

About the author

Ajeet Tewari is a Solutions Architect for Amazon Web Services. He works with enterprise customers to help them navigate their journey to AWS. His specialties include architecting and implementing highly scalable OLTP systems and leading strategic AWS initiatives.

Ajeet Tewari is a Solutions Architect for Amazon Web Services. He works with enterprise customers to help them navigate their journey to AWS. His specialties include architecting and implementing highly scalable OLTP systems and leading strategic AWS initiatives.

Microsoft Research Forum Episode 3: Globally inclusive and equitable AI, new use cases for AI, and more

In the latest episode of Microsoft Research Forum, researchers explored the importance of globally inclusive and equitable AI, shared updates on AutoGen and MatterGen, presented novel use cases for AI, including industrial applications and the potential of multimodal models to improve assistive technologies.

Below is a brief recap of the event, including select quotes from the presentations. Full replays of each session and presentation will be available soon.

Keynote: Building Globally Equitable AI

Jacki O’Neill, Lab Director, Microsoft Research Africa, Nairobi

Jacki O’Neill discussed the importance of creating globally equitable generative AI. She addressed the technical and sociotechnical challenges that must be tackled to positively transform the future of work worldwide.

“We’re at the very early stage of generative AI and the impacts it will have on work. This is a fast-moving field, and there’s an immense opportunity to take control of the agenda and build truly globally equitable AI systems. This requires ensuring that diverse contexts and applications, with their diverse datasets, drive the development of generative AI.”

Panel discussion: Generative AI for Global Impact: Challenges and Opportunities

Jacki O’Neill, Lab Director, Microsoft Research Africa, Nairobi (host)

Sunayana Sitaram, Principal Researcher, Microsoft Research India

Daniela Massiceti, Senior Researcher, Microsoft Research Cambridge

Tanuja Ganu, Principal Research SDE Manager, Microsoft Research India

Microsoft researchers discussed the challenges and opportunities of making AI more inclusive and impactful for everyone—from data that represents a broader range of communities and cultures to novel use cases for AI that are globally relevant.

“How can we take this power of generative AI and empower every individual, every individual across the globe—the people who are coming from different nationalities, different ethnicities, cultures, as well as with varied technology access and financial affordability?”

—Tanuja Ganu, Principal Research SDE Manager, Microsoft Research India

“One of the solutions that we’ve been using is to actually design with ‘human in the loop’ in mind because we know that these technologies are not perfect. And so, we really want to figure out ways in which humans and AI systems can work together in order to create the most effective outcome.”

—Sunayana Sitaram, Principal Researcher, Microsoft Research India

“We really need multidisciplinary research that goes beyond anything that we’ve done before, involving researchers and practitioners and community members. And it’s important to remember that machine learning engineers and researchers on their own can’t solve the problem of building globally equitable generative AI. This is something that we really need to do in a large scale.”

—Jacki O’Neill, Lab Director, Microsoft Research Africa, Nairobi

“An estimated 1.3 billion people—around 16 percent of the global population—live with some level of disability today. So, I think it’s really exciting to see these generative AI applications coming online for these communities.”

“As we look to this next decade of generative AI solutions, I really hope to see that we’re going to see more personalized AI models and solutions come through much more strongly, solutions where you as the user have much more control, much more agency, around how your model works for you.”

—Daniela Massiceti, Senior Researcher, Microsoft Research Cambridge

Lightning talk: Insights into the Challenges and Opportunities of Large Multi-Modal Models for Blind and Low Vision Users: A Case Study on CLIP

Daniela Massiceti, Senior Researcher, Microsoft Research Cambridge

Daniela Massiceti explored the transformative potential of multimodal models such as CLIP for assistive technologies. Specifically focusing on the blind/low-vision community, the talk explored the current distance from realizing this potential and the advancements needed to bridge this gap.

“Today’s AI models hold incredible potential for assisting the Blind community—from text recognition to object identification to question answering. Apps like Seeing AI are already deploying some of these AI features. But there is potential for much more.”

Lightning talk: Driving Industry Evolution: Exploring the Impact of Generative AI on Sector Transformation

Jiang Bian, Senior Principal Research Manager, Microsoft Research Asia

Jiang Bian discussed how generative AI transforms industries by bridging gaps between AI capabilities and industrial needs.

“In our dialogues with strategic partners, we have identified crucial gaps in current generative AI capabilities versus the specific needs of industry applications. These include a too-narrow focus on human-like AI but not critical industry applications, limitations in processing complex and noisy data, and concerns about reliability in complex decision-making scenarios. Our research is crucial in addressing these limitations and amplifying the underappreciated potential of generative AI in high-value sectors.”

Lightning talk: MatterGen: A Generative Model for Materials Design

Tian Xie, Principal Research Manager, Microsoft Research

Tian Xie described MatterGen, a generative model that enables the design of new inorganic materials based on a broad range of property conditions required by the application, aiming to shift the traditional paradigm of materials design with generative AI.

“Traditionally, materials design is conducted by search-based methods. We search through a list of candidates and gradually filter them using a list of design criteria for the application. Like for batteries, we need the materials to contain lithium, to be stable, to have a high lithium-ion conductivity, and each filtering step can be conducted using simulation-based methods or AI emulators. At the end, we get five to 10 candidates that we’re sending to the lab for experimental synthesis.”

“In MatterGen, we hope to rethink this process with generative AI. We’re aiming to directly generate materials given the design requirements for the target application, bypassing the process of searching through candidates. You can think of it as using text-to-image generative models like DALL-E to generate the images given a prompt rather than needing to search through the entire internet for images via a search engine.”

Lightning talk: AutoGen Update: Complex Tasks and Agents

Adam Fourney, Principal Researcher, Microsoft Research AI Frontiers

Adam Fourney discussed the effectiveness of using multiple agents, working together, to complete complex multi-step tasks. He showcased their capability to outperform previous single-agent solutions on benchmarks like GAIA, utilizing customizable arrangements of agents that collaborate, reason, and utilize tools to achieve complex outcomes.

“We’re starting to tackle increasingly more complex benchmarks and real-world scenarios with this configuration. And we’re really excited about opportunities to introduce new agents that, for example, learn and self-improve with experience; that understand images and screenshots a little better for maybe more effective web surfing or use of interfaces; and that are maybe a bit more systematic about exploring that solution space. So rather than just updating that ledger and then restarting when they get stuck, they can be a bit more pragmatic about the strategies that they’re employing.”

The post Microsoft Research Forum Episode 3: Globally inclusive and equitable AI, new use cases for AI, and more appeared first on Microsoft Research.

Microsoft at FAccT 2024: Advancing responsible AI research and practice

The integration of AI and other computational technologies is becoming increasingly common in high-stakes sectors such as finance, healthcare, and government, where their capacity to influence critical decisions is growing. While these systems offer numerous benefits, they also introduce risks, such as entrenching systemic biases and reducing accountability. The ACM Conference on Fairness, Accountability, and Transparency (ACM FaccT 2024) tackles these issues, bringing together experts from a wide range of disciplines who are committed to the responsible development of computational systems.

Microsoft is proud to return as a sponsor of ACM FAccT 2024, underscoring our commitment to supporting research on responsible AI. We’re pleased to share that members of our team have taken on key roles in organizing the event, contributing to the program committee and serving as a program co-chair. Additionally, seven papers by Microsoft researchers and their collaborators have been accepted to the program, with “Akal badi ya bias: An exploratory study of gender bias in Hindi language technology,” receiving an award for Best Paper.

Collectively, these research projects emphasize the need for AI technologies that reflect the Microsoft Responsible AI principles of accountability, inclusiveness, reliability and safety, fairness, transparency, and privacy and security. They underscore the importance of addressing potential risks and harms associated with deployment and usage. This post highlights these advances.

Microsoft Research Podcast

AI Frontiers: AI for health and the future of research with Peter Lee

Peter Lee, head of Microsoft Research, and Ashley Llorens, AI scientist and engineer, discuss the future of AI research and the potential for GPT-4 as a medical copilot.

Paper highlights

A framework for exploring the consequences of AI-mediated enterprise knowledge access and identifying risks to workers

Anna Gausen, Bhaskar Mitra, Siân Lindley

Recent AI developments, especially LLMs, are significantly impacting organizational knowledge access and reshaping workplaces. These AI systems pose risks due to their interaction with organizational power dynamics. This paper introduces the Consequence-Mechanism-Risk framework to help identify worker risks, categorizing them into issues related to value, power, and wellbeing. The framework aims to help practitioners mitigate these risks and apply it to other technologies, enabling better protection for workers.

A structured regression approach for evaluating model performance across intersectional subgroups

Christine Herlihy, Kimberly Truong, Alex Chouldechova, Miro Dudík

Disaggregated evaluation is a process used in AI fairness assessment that measures AI system performance across different subgroups. These subgroups are defined by a mix of demographic or other sensitive attributes. However, the sample size for intersectional subgroups is often very small, leading to their exclusion from analysis. This work introduces a structured regression approach for more reliable system performance estimates in these subgroups. Tested on two publicly available datasets and several variants of semi-synthetic data, this method not only yielded more accurate results but also helped to identify key factors driving performance differences.

Akal badi ya bias: An exploratory study of gender bias in Hindi language technology

Best Paper Award

Rishav Hada, Safiya Husain, Varun Gumma, Harshita Diddee, Aditya Yadavalli, Agrima Seth, Nidhi Kulkarni, Ujwal Gadiraju, Aditya Vashistha, Vivek Seshadri, Kalika Bali

Existing research on gender bias in language technologies primarily focuses on English, often overlooking non-English languages. This paper introduces the first comprehensive study on gender bias in Hindi, the third most spoken language globally. Employing diverse techniques and field studies, the authors expose the limitations in current methodologies and emphasize the need for more context-specific and community-centered research. The findings deepen the understanding of gender bias in language technologies in Hindi and lay the groundwork for expanded research into other Indic languages.

“I’m not sure, but…”: Examining the impact of large language models’ uncertainty expression on user reliance and trust

Sunnie S. Y. Kim, Q. Vera Liao, Mihaela Vorvoreanu, Stephanie Ballard, Jennifer Wortman Vaughan

LLMs can produce convincing yet incorrect responses, potentially misleading users who rely on them for accuracy. To mitigate this issue, there have been recommendations for LLMs to communicate uncertainty in their responses. In a large-scale study on how users perceive and act on LLMs’ expressions of uncertainty, participants were asked medical questions. The authors found that first-person uncertainty expressions (e.g., “I’m not sure, but…”) decreased participants’ confidence in the system and their tendency to agree with the system’s answers, while increasing the accuracy of their own answers. In contrast, more general uncertainty expressions (e.g., “It’s unclear, but…”) were less effective. The findings stress the importance of more thorough user testing before deploying LLMs.

Investigating and designing for trust in AI-powered code generation tools

Ruotong Wang, Ruijia Cheng, Denae Ford, Tom Zimmermann

As tools like GitHub Copilot gain popularity, understanding the trust software developers place in these applications becomes crucial for their adoption and responsible use. In a two-stage qualitative study, the authors interviewed 17 developers to understand the challenges they face in building trust in AI code-generation tools. Challenges identified include setting expectations, configuring tools, and validating suggestions. The authors also explore several design concepts to help developers establish appropriate trust and provide design recommendations for AI-powered code-generation tools.

Less discriminatory algorithms

Emily Black, Logan Koepke, Pauline Kim, Solon Barocas, Mingwei Hsu

In fields such as housing, employment, and credit, organizations using algorithmic systems should seek to use less discriminatory alternatives. Research in computer science has shown that for any prediction problem, multiple algorithms can deliver the same level of accuracy but differ in their impacts across demographic groups. This phenomenon, known as model multiplicity, suggests that developers might be able to find an equally performant yet potentially less discriminatory alternative.

Participation in the age of foundation models

Harini Suresh, Emily Tseng, Meg Young, Mary Gray, Emma Pierson, Karen Levy

The rise of foundation models in public services brings both potential benefits and risks, including reinforcing power imbalances and harming marginalized groups. This paper explores how participatory AI/ML methods, typically context-specific, can be adapted to these context-agnostic models to empower those most affected.

Conference organizers from Microsoft

Program Co-Chair

Program Committee

Steph Ballard

Solon Barocas

Su Lin Blodgett*

Kate Crawford

Shipi Dhanorkar

Amy Heger

Jake Hofman*

Emre Kiciman*

Vera Liao*

Daniela Massiceti

Bhaskar Mitra

Besmira Nushi*

Alexandra Olteanu

Amifa Raj

Emily Sheng

Jennifer Wortman Vaughan*

Mihaela Vorvoreanu*

Daricia Wilkinson

*Area Chairs

Career opportunities

Microsoft welcomes talented individuals across various roles at Microsoft Research, Azure Research, and other departments. We are always pushing the boundaries of computer systems to improve the scale, efficiency, and security of all our offerings. You can review our open research-related positions here.

The post Microsoft at FAccT 2024: Advancing responsible AI research and practice appeared first on Microsoft Research.

How Skyflow creates technical content in days using Amazon Bedrock

This guest post is co-written with Manny Silva, Head of Documentation at Skyflow, Inc.

Startups move quickly, and engineering is often prioritized over documentation. Unfortunately, this prioritization leads to release cycles that don’t match, where features release but documentation lags behind. This leads to increased support calls and unhappy customers.

Skyflow is a data privacy vault provider that makes it effortless to secure sensitive data and enforce privacy policies. Skyflow experienced this growth and documentation challenge in early 2023 as it expanded globally from 8 to 22 AWS Regions, including China and other areas of the world such as Saudi Arabia, Uzbekistan, and Kazakhstan. The documentation team, consisting of only two people, found itself overwhelmed as the engineering team, with over 60 people, updated the product to support the scale and rapid feature release cycles.

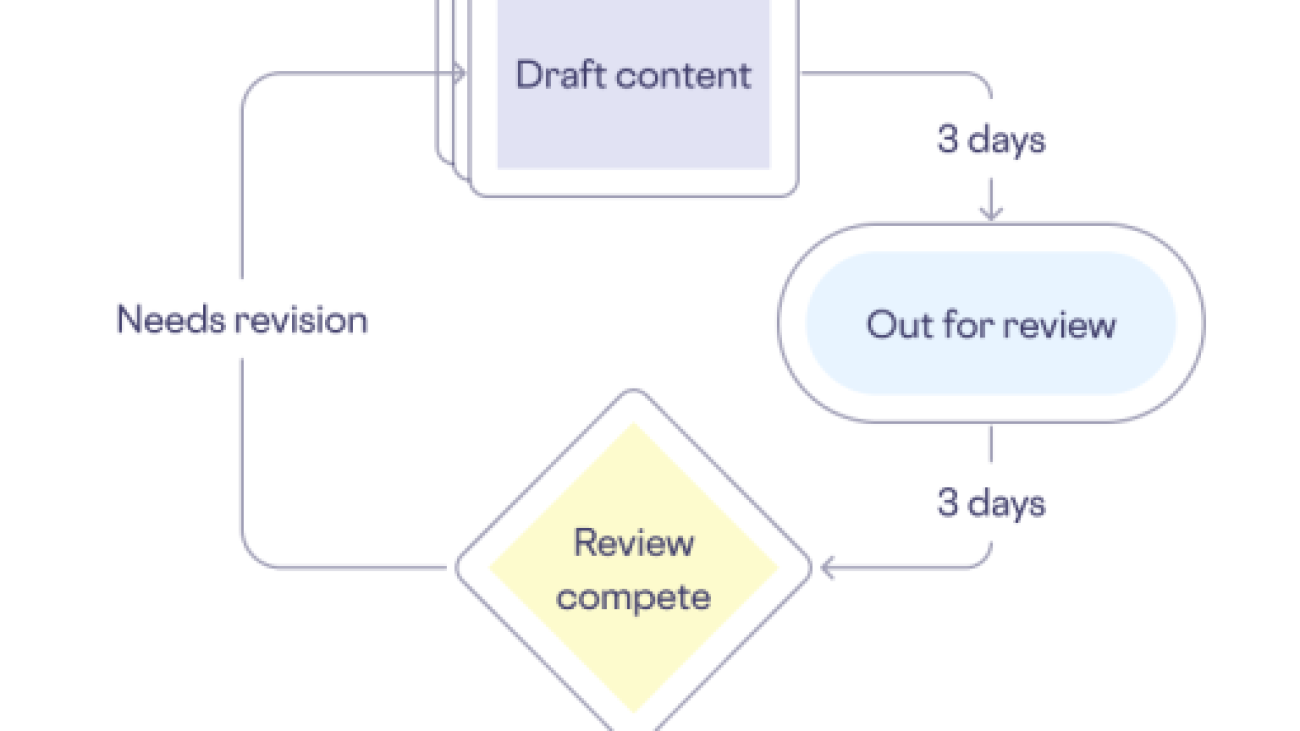

Given the critical nature of Skyflow’s role as a data privacy company, the stakes were particularly high. Customers entrust Skyflow with their data and expect Skyflow to manage it both securely and accurately. The accuracy of Skyflow’s technical content is paramount to earning and keeping customer trust. Although new features were released every other week, documentation for the features took an average of 3 weeks to complete, including drafting, review, and publication. The following diagram illustrates their content creation workflow.

Looking at our documentation workflows, we at Skyflow discovered areas where generative artificial intelligence (AI) could improve our efficiency. Specifically, creating the first draft—often referred to as overcoming the “blank page problem”—is typically the most time-consuming step. The review process could also be long depending on the number of inaccuracies found, leading to additional revisions, additional reviews, and additional delays. Both drafting and reviewing needed to be shorter to make doc target timelines match those of engineering.

To do this, Skyflow built VerbaGPT, a generative AI tool based on Amazon Bedrock. Amazon Bedrock is a fully managed service that makes foundation models (FMs) from leading AI startups and Amazon available through an API, so you can choose from a wide range of FMs to find the model that is best suited for your use case. With the Amazon Bedrock serverless experience, you can get started quickly, privately customize FMs with your own data, and integrate and deploy them into your applications using the AWS tools without having to manage any infrastructure. With Amazon Bedrock, VerbaGPT is able to prompt large language models (LLMs), regardless of model provider, and uses Retrieval Augmented Generation (RAG) to provide accurate first drafts that make for quick reviews.

In this post, we share how Skyflow improved their workflow to create documentation in days instead of weeks using Amazon Bedrock.

Solution overview

VerbaGPT uses Contextual Composition (CC), a technique that incorporates a base instruction, a template, relevant context to inform the execution of the instruction, and a working draft, as shown in the following figure. For the instruction, VerbaGPT tells the LLM to create content based on the specified template, evaluate the context to see if it’s applicable, and revise the draft accordingly. The template includes the structure of the desired output, expectations for what sort of information should exist in a section, and one or more examples of content for each section to guide the LLM on how to process context and draft content appropriately. With the instruction and template in place, VerbaGPT includes as much available context from RAG results as it can, then sends that off for inference. The LLM returns the revised working draft, which VerbaGPT then passes back into a new prompt that includes the same instruction, the same template, and as much context as it can fit, starting from where the previous iteration left off. This repeats until all context is considered and the LLM outputs a draft matching the included template.

The following figure illustrates how Skyflow deployed VerbaGPT on AWS. The application is used by the documentation team and internal users. The solution involves deploying containers on Amazon Elastic Kubernetes Service (Amazon EKS) that host a Streamlit user interface and a backend LLM gateway that is able to invoke Amazon Bedrock or local LLMs, as needed. Users upload documents and prompt VerbaGPT to generate new content. In the LLM gateway, prompts are processed in Python using LangChain and Amazon Bedrock.

When building this solution on AWS, Skyflow followed these steps:

- Choose an inference toolkit and LLMs.

- Build the RAG pipeline.

- Create a reusable, extensible prompt template.

- Create content templates for each content type.

- Build an LLM gateway abstraction layer.

- Build a frontend.

Let’s dive into each step, including the goals and requirements and how they were addressed.

Choose an inference toolkit and LLMs

The inference toolkit you choose, if any, dictates your interface with your LLMs and what other tooling is available to you. VerbaGPT uses LangChain instead of directly invoking LLMs. LangChain has broad adoption in the LLM community, so there was a present and likely future ability to take advantage of the latest advancements and community support.

When building a generative AI application, there are many factors to consider. For instance, Skyflow wanted the flexibility to interact with different LLMs depending on the use case. We also needed to keep context and prompt inputs private and secure, which meant not using LLM providers who would log that information or fine-tune their models on our data. We needed to have a variety of models with unique strengths at our disposal (such as long context windows or text labeling) and to have inference redundancy and fallback options in case of outages.

Skyflow chose Amazon Bedrock for its robust support of multiple FMs and its focus on privacy and security. With Amazon Bedrock, all traffic remains inside AWS. VerbaGPT’s primary foundation model is Anthropic Claude 3 Sonnet on Amazon Bedrock, chosen for its substantial context length, though it also uses Anthropic Claude Instant on Amazon Bedrock for chat-based interactions.

Build the RAG pipeline

To deliver accurate and grounded responses from LLMs without the need for fine-tuning, VerbaGPT uses RAG to fetch data related to the user’s prompt. By using RAG, VerbaGPT became familiar with the nuances of Skyflow’s features and procedures, enabling it to generate informed and complimentary content.

To build your own content creation solution, you collect your corpus into a knowledge base, vectorize it, and store it in a vector database. VerbaGPT includes all of Skyflow’s documentation, blog posts, and whitepapers in a vector database that it can query during inference. Skyflow uses a pipeline to embed content and store the embedding in a vector database. This embedding pipeline is a multi-step process, and everyone’s pipeline is going to look a little different. Skyflow’s pipeline starts by moving artifacts to a common data store, where they are de-identified. If your documents have personally identifiable information (PII), payment card information (PCI), personal health information (PHI), or other sensitive data, you might use a solution like Skyflow LLM Privacy Vault to make de-identifying your documentation straightforward. Next, the pipeline chunks the documents into pieces, then finally calculates vectors for the text chunks and stores them in FAISS, an open source vector store. VerbaGPT uses FAISS because it is fast and straightforward to use from Python and LangChain. AWS also has numerous vector stores to choose from for a more enterprise-level content creation solution, including Amazon Neptune, Amazon Relational Database Service (Amazon RDS) for PostgreSQL, Amazon Aurora PostgreSQL-Compatible Edition, Amazon Kendra, Amazon OpenSearch Service, and Amazon DocumentDB (with MongoDB compatibility). The following diagram illustrates the embedding generation pipeline.

When chunking your documents, keep in mind that LangChain’s default splitting strategy can be aggressive. This can result in chunks of content that are so small that they lack meaningful context and result in worse output, because the LLM has to make (largely inaccurate) assumptions about the context, producing hallucinations. This issue is particularly noticeable in Markdown files, where procedures were fragmented, code blocks were divided, and chunks were often only single sentences. Skyflow created its own Markdown splitter to work more accurately with VerbaGPT’s RAG output content.

Create a reusable, extensible prompt template

After you deploy your embedding pipeline and vector database, you can start intelligently prompting your LLM with a prompt template. VerbaGPT uses a system prompt that instructs the LLM how to behave and includes a directive to use content in the Context section to inform the LLM’s response.

The inference process queries the vector database with the user’s prompt, fetches the results above a certain similarity threshold, and includes the results in the system prompt. The solution then sends the system prompt and the user’s prompt to the LLM for inference.

The following is a sample prompt for drafting with Contextual Composition that includes all the necessary components, system prompt, template, context, a working draft, and additional instructions:

Create content templates

To round out the prompt template, you need to define content templates that match your desired output, such as a blog post, how-to guide, or press release. You can jumpstart this step by sourcing high-quality templates. Skyflow sourced documentation templates from The Good Docs Project. Then, we adapted the how-to and concept templates to align with internal styles and specific needs. We also adapted the templates for use in prompt templates by providing instructions and examples per section. By clearly and consistently defining the expected structure and intended content of each section, the LLM was able to output content in the formats needed, while being both informative and stylistically consistent with Skyflow’s brand.

LLM gateway abstraction layer

Amazon Bedrock provides a single API to invoke a variety of FMs. Skyflow also wanted to have inference redundancy and fallback options in case VerbaGPT experienced Amazon Bedrock service limit exceeded errors. To that end, VerbaGPT has an LLM gateway that acts as an abstraction layer that is invoked.

The main component of the gateway is the model catalog, which can return a LangChain llm model object for the specified model, updated to include any parameters. You can create this with a simple if/else statement like that shown in the following code:

By mapping standard input formats into the function and handling all custom LLM object construction within the function, the rest of the code stays clean by using LangChain’s llm object.

Build a frontend

The final step was to add a UI on top of the application to hide the inner workings of LLM calls and context. A simple UI is key for generative AI applications, so users can efficiently prompt the LLMs without worrying about the details unnecessary to their workflow. As shown in the solution architecture, VerbaGPT uses Streamlit to quickly build useful, interactive UIs that allow users to upload documents for additional context and draft new documents rapidly using Contextual Composition. Streamlit is Python based, which makes it straightforward for data scientists to be efficient at building UIs.

Results

By using the power of Amazon Bedrock for inferencing and Skyflow for data privacy and sensitive data de-identification, your organization can significantly speed up the production of accurate, secure technical documents, just like the solution shown in this post. Skyflow was able to use existing technical content and best-in-class templates to reliably produce drafts of different content types in minutes instead of days. For example, given a product requirements document (PRD) and an engineering design document, VerbaGPT can produce drafts for a how-to guide, conceptual overview, summary, release notes line item, press release, and blog post within 10 minutes. Normally, this would take multiple individuals from different departments multiple days each to produce.

The new content flow shown in the following figure moves generative AI to the front of all technical content Skyflow creates. During the “Create AI draft” step, VerbaGPT generates content in the approved style and format in just 5 minutes. Not only does this solve the blank page problem, first drafts are created with less interviewing or asking engineers to draft content, freeing them to add value through feature development instead.

The security measures Amazon Bedrock provides around prompts and inference aligned with Skyflow’s commitment to data privacy, and allowed Skyflow to use additional kinds of context, such as system logs, without the concern of compromising sensitive information in third-party systems.

As more people at Skyflow used the tool, they wanted additional content types available: VerbaGPT now has templates for internal reports from system logs, email templates from common conversation types, and more. Additionally, although Skyflow’s RAG context is clean, VerbaGPT is integrated with Skyflow LLM Privacy Vault to de-identify sensitive data in user inference inputs, maintaining Skyflow’s stringent standards of data privacy and security even while using the power of AI for content creation.

Skyflow’s journey in building VerbaGPT has drastically shifted content creation, and the toolkit wouldn’t be as robust, accurate, or flexible without Amazon Bedrock. The significant reduction in content creation time—from an average of around 3 weeks to as little as 5 days, and sometimes even a remarkable 3.5 days—marks a substantial leap in efficiency and productivity, and highlights the power of AI in enhancing technical content creation.

Conclusion

Don’t let your documentation lag behind your product development. Start creating your technical content in days instead of weeks, while maintaining the highest standards of data privacy and security. Learn more about Amazon Bedrock and discover how Skyflow can transform your approach to data privacy.

If you’re scaling globally and have privacy or data residency needs for your PII, PCI, PHI, or other sensitive data, reach out to your AWS representative to see if Skyflow is available in your region.

About the authors

Manny Silva is Head of Documentation at Skyflow and the creator of Doc Detective. Technical writer by day and engineer by night, he’s passionate about intuitive and scalable developer experiences and likes diving into the deep end as the 0th developer.

Manny Silva is Head of Documentation at Skyflow and the creator of Doc Detective. Technical writer by day and engineer by night, he’s passionate about intuitive and scalable developer experiences and likes diving into the deep end as the 0th developer.

Jason Westra is a Senior Solutions Architect for AWS AI/ML startups. He provides guidance and technical assistance that enables customers to build scalable, highly available, secure AI and ML workloads in AWS Cloud.

Jason Westra is a Senior Solutions Architect for AWS AI/ML startups. He provides guidance and technical assistance that enables customers to build scalable, highly available, secure AI and ML workloads in AWS Cloud.

Creativity Accelerated: New RTX-Powered AI Hardware and Software Announced at COMPUTEX

NVIDIA launched NVIDIA Studio at COMPUTEX in 2019. Five years and more than 500 NVIDIA RTX-accelerated apps and games later, it’s bringing AI to even more creators with an array of new RTX technology integrations announced this week at COMPUTEX 2024.

Newly announced NVIDIA GeForce RTX AI laptops — including the ASUS ProArt PX13 and P16 and MSI Stealth 16 AI+ laptops — will feature dedicated RTX Tensor Cores to accelerate AI performance and power-efficient systems-on-a-chip with Windows 11 AI PC features. They join over 200 laptops already accelerated with RTX AI technology.

NVIDIA RTX Video, a collection of technologies including RTX Video Super Resolution and RTX Video HDR that enhance video content streamed in browsers like Google Chrome, Microsoft Edge and Mozilla Firefox, is coming to the free VLC Media Player. And for the first time in June, creators can enjoy these AI-enhanced video effects in popular creative apps like DaVinci Resolve and Wondershare Filmora.

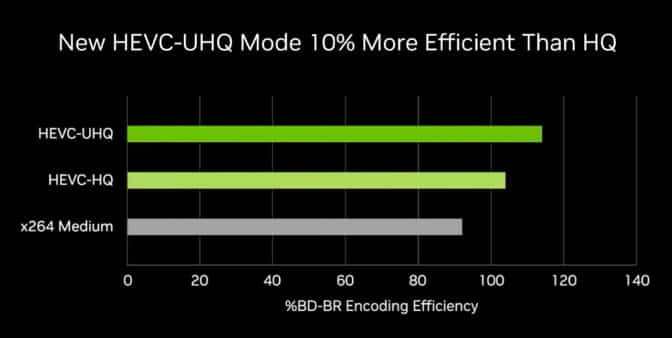

DaVinci Resolve and Cyberlink PowerDirector are adding NVIDIA’s new H.265 Ultra-High-Quality (UHQ) mode, which uses the NVIDIA NVENC to increase high-efficiency video coding (HEVC) and encoding efficiency by 10%.

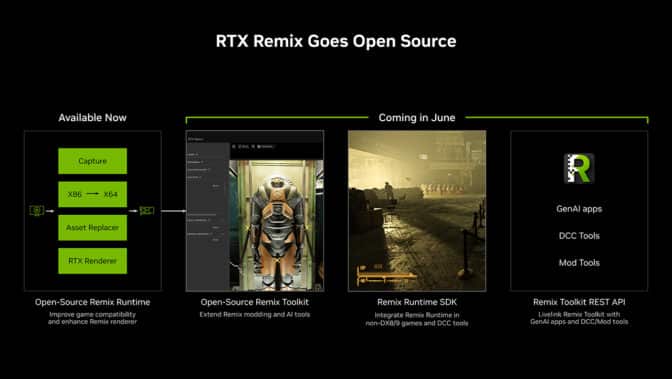

NVIDIA RTX Remix, a modding platform for remastering classic games with RTX, will soon be made open source, allowing more modders to streamline how assets are replaced and scenes are relit. RTX Remix will also be made accessible via a new REST application programming interface (API) to connect the platform to other modding tools like Blender and Hammer.

Creative apps are continuing to adopt AI-powered NVIDIA DLSS for higher-quality ray-traced visuals in the viewport, with 3D modeling platform Womp being the latest to integrate DLSS 3.5 with Ray Reconstruction.

NVIDIA unveiled Project G-Assist, an RTX-powered AI-assistant technology demo that provides context-aware help for PC games and apps.

The new NVIDIA app beta update adds 120 frames per second AV1 video capture and one-click performance-tuning.

And the latest Game Ready Driver and NVIDIA Studio Driver are available for installation today.

Video Gets the AI Treatment

RTX Video is a collection of real-time, AI-based video enhancements — powered by RTX GPUs equipped with AI Tensor Cores — to dramatically improve video quality.

It includes RTX Video Super Resolution — an upscaling technology that removes compression artifacts and generates additional pixels to improve video sharpness and clarity up to 4K — and RTX Video HDR, which transforms standard dynamic range videos into stunning high-dynamic range on HDR10 displays.

NVIDIA has released the RTX Video software development kit, which allows app developers to add RTX Video effects to creator workflows.

Blackmagic Design’s DaVinci Resolve, a powerful video editing app with color correction, visual effects, graphics and audio post-production capabilities, will be one of the first to integrate RTX Video. The integration is being demoed on the COMPUTEX show floor.

Wondershare Filmora, a video editing app with AI tools and pro-level social media video editing features, will support RTX Video HDR, coming soon.

VLC Media Player — an open-source, cross-platform media player, has added RTX Video HDR in its latest beta release, following its recently added support for Mozilla Firefox.

NVIDIA also released a new UHQ mode in NVENC, a dedicated hardware encoder on RTX GPUs, for the HEVC video compression standard (also known as H.265). The new mode increases compression by 10% without diminishing quality, making NVENC HEVC 34% more efficient than the typically used x264 Medium compression standard.

DaVinci Resolve and Cyberlink PowerDirector video editing software will be adding support for the new UHQ mode in their next updates. Stay tuned for official launch dates.

RTX Remix Open Sources Creator Toolkit

NVIDIA RTX Remix allows modders to easily capture game assets, automatically enhance materials with generative AI tools and create stunning RTX remasters with full ray tracing.

RTX Remix open beta recently added DLSS 3.5 support featuring Ray Reconstruction, an AI model that creates higher-quality images for intensive ray-traced games and apps.

Later this month, NVIDIA will make the RTX Remix Toolkit open source, allowing more modders to streamline how assets are replaced and scenes are relit. The company is also increasing the supported file formats for RTX Remix’s asset ingestor and bolstering RTX Remix’s AI Texture Tools with new models.

NVIDIA is also making the capabilities of RTX Remix accessible via a new powerful REST API, allowing modders to livelink RTX Remix to other DCC tools such as Blender and modding tools such as Hammer. NVIDIA is also providing an SDK for the RTX Remix runtime to allow modders to deploy RTX Remix’s renderer into other applications and games beyond DirectX 8 and 9 classics.

Catch Some Rays

NVIDIA DLSS 3.5 with Ray Reconstruction enhances ray-traced image quality on NVIDIA RTX and GeForce RTX GPUs by replacing hand-tuned denoisers with an NVIDIA supercomputer-trained AI network that generates higher-quality pixels in between sampled rays.

Previewing content in the viewport, even with high-end hardware, can sometimes offer less-than-ideal image quality, as traditional denoisers require hand-tuning for every scene. With DLSS 3.5, the AI neural network recognizes a wide variety of scenes, producing high-quality preview images and drastically reducing time spent rendering.

The free browser-based 3D modeling platform Womp has added DLSS 3.5 to enhance interactive, photorealistic modeling in the viewport.

Chaos Vantage and D5 Render, two popular professional-grade 3D apps that feature real-time preview modes with ray tracing, have also seen drastic performance increases with DLSS 3.5 — up to a 60% boost from Ray Reconstruction and 4x from all DLSS technologies.

Tools That Accelerate AI Apps

The vast ecosystem of open-source AI models currently available are usually pretrained for general purposes and run in data centers.

To create more effective app-specific AI tools that run on local PCs, NVIDIA has introduced the RTX AI Toolkit — an end-to-end workflow for the customization, optimization and deployment of AI models on RTX AI PCs.

Partners such as Adobe, Topaz and Blackmagic Design are integrating RTX AI Toolkit within their popular creative apps to accelerate AI performance on RTX PCs.

Developers can learn more on the NVIDIA Technical Blog.

Yotta CEO Sunil Gupta on Supercharging India’s Fast-Growing AI Market

India’s AI market is expected to be massive. Yotta Data Services is setting its sights on supercharging it. In this episode of NVIDIA’s AI Podcast, Sunil Gupta, cofounder, managing director and CEO of Yotta Data Services, speaks with host Noah Kravitz about the company’s Shakti Cloud offering, which provides scalable GPU services for enterprises of all sizes. Yotta is the first Indian cloud services provider in the NVIDIA Partner Network, and its Shakti Cloud is India’s fastest AI supercomputing infrastructure, with 16 exaflops of compute capacity supported by over 16,000 NVIDIA H100 Tensor Core GPUs. Tune in to hear Gupta’s insights on India’s potential as a major AI market and how to balance data center growth with sustainability and energy efficiency.

Stay tuned for more AI Podcast episodes recorded live from GTC.

Time Stamps

1:18: Background on Yotta

2:58: What is Shakti Cloud?

6:44: What does Shakti Cloud mean for India’s tech sector?

10:36: The self-service, scalable capabilities of Shakti Cloud

19:48: Balancing data center growth with sustainability

24:35: Yotta’s work with NVIDIA

27:48: What’s next for Yotta?

You Might Also Like…

Performance AI: Insights From Arthur’s Adam Wenchel – Ep. 221

In this episode of the NVIDIA AI Podcast, recorded live at the GTC 2024, host Noah Kravitz sits down with Adam Wenchel, co-founder and CEO of Arthur, about the company’s technology, which enhances the performance of AI systems across various metrics like accuracy, explainability and fairness.

How the Ohio Supercomputing Center Drives the Future of Computing – Ep. 213

NASCAR races are all about speed, but even the fastest cars need to factor in safety, especially as rules and tracks change. The Ohio Supercomputer Center is ready to help. In this episode of NVIDIA’s AI Podcast, host Noah Kravitz speaks with Alan Chalker, the director of strategic programs at the OSC, about all things supercomputing.

Replit CEO Amjad Masad on Empowering the Next Billion Software Creators – Ep. 201

Replit aims to empower the next billion software creators. In this week’s episode of NVIDIA’s AI Podcast, host Noah Kraviz dives into a conversation with Replit CEO Amjad Masad about bridging the gap between ideas and software, a task simplified by advances in generative AI.

Rendered.ai CEO Nathan Kundtz on Using AI to Build Better AI – Ep. 177

Data is the fuel that makes artificial intelligence run. Training machine learning and AI systems requires data, but compiling quality real-world data for AI and ML can be difficult and expensive. That’s where synthetic data comes in. In this episode of NVIDIA’s AI Podcast, Nathan Kundtz, founder and CEO of Rendered.ai, speaks with host Noah Kravtiz about a platform as a service for creating synthetic data to train AI models.

Subscribe to the AI Podcast

Get the AI Podcast through iTunes, Google Play, Amazon Music, Castbox, DoggCatcher, Overcast, PlayerFM, Pocket Casts, Podbay, PodBean, PodCruncher, PodKicker, Soundcloud, Spotify, Stitcher and TuneIn.

Make the AI Podcast better: Have a few minutes to spare? Fill out this listener survey.

Apply to our new program for startups using AI to improve U.S. infrastructure

Technology companies based in the U.S. can now apply for the new Google for Startups AI Academy: American Infrastructure program.Read More

Technology companies based in the U.S. can now apply for the new Google for Startups AI Academy: American Infrastructure program.Read More

Streamline custom model creation and deployment for Amazon Bedrock with Provisioned Throughput using Terraform

As customers seek to incorporate their corpus of knowledge into their generative artificial intelligence (AI) applications, or to build domain-specific models, their data science teams often want to conduct A/B testing and have repeatable experiments. In this post, we discuss a solution that uses infrastructure as code (IaC) to define the process of retrieving and formatting data for model customization and initiating the model customization. This enables you to version and iterate as needed.

With Amazon Bedrock, you can privately and securely customize foundation models (FMs) with your own data to build applications that are specific to your domain, organization, and use case. With custom models, you can create unique user experiences that reflect your company’s style, voice, and services.

Amazon Bedrock supports two methods of model customization:

- Fine-tuning allows you to increase model accuracy by providing your own task-specific labeled training dataset and further specialize your FMs.

- Continued pre-training allows you to train models using your own unlabeled data in a secure and managed environment and supports customer-managed keys. Continued pre-training helps models become more domain-specific by accumulating more robust knowledge and adaptability—beyond their original training.

In this post, we provide guidance on how to create an Amazon Bedrock custom model using HashiCorp Terraform that allows you to automate the process, including preparing datasets used for customization.

Terraform is an IaC tool that allows you to manage AWS resources, software as a service (SaaS) resources, datasets, and more, using declarative configuration. Terraform provides the benefits of automation, versioning, and repeatability.

Solution overview

We use Terraform to download a public dataset from the Hugging Face Hub, convert it to JSONL format, and upload it to an Amazon Simple Storage Service (Amazon S3) bucket with a versioned prefix. We then create an Amazon Bedrock custom model using fine-tuning, and create a second model using continued pre-training. Lastly, we configure Provisioned Throughput for our new models so we can test and deploy the custom models for wider usage.

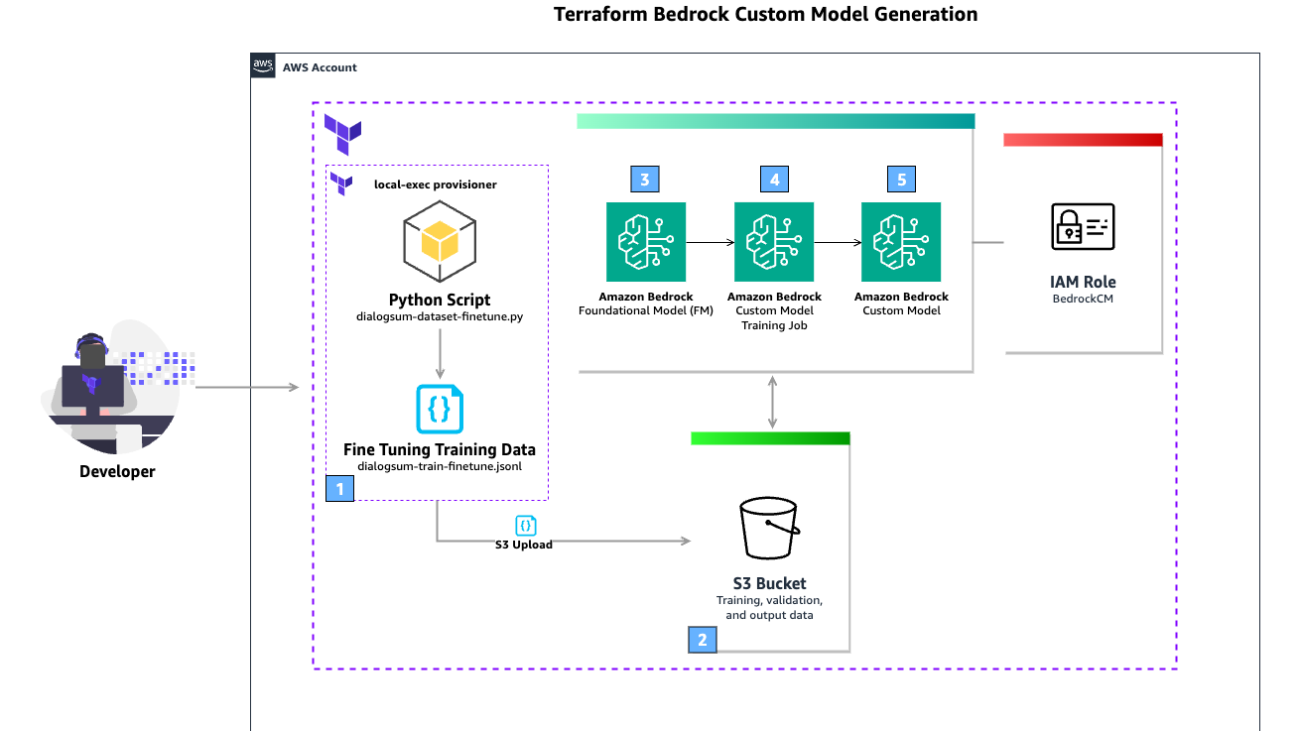

The following diagram illustrates the solution architecture.

The workflow includes the following steps:

- The user runs the

terraform applyThe Terraformlocal-execprovisioner is used to run a Python script that downloads the public dataset DialogSum from the Hugging Face Hub. This is then used to create a fine-tuning training JSONL file. - An S3 bucket stores training, validation, and output data. The generated JSONL file is uploaded to the S3 bucket.

- The FM defined in the Terraform configuration is used as the source for the custom model training job.

- The custom model training job uses the fine-tuning training data stored in the S3 bucket to enrich the FM. Amazon Bedrock is able to access the data in the S3 bucket (including output data) due to the AWS Identity and Access Management (IAM) role defined in the Terraform configuration, which grants access to the S3 bucket.

- When the custom model training job is complete, the new custom model is available for use.

The high-level steps to implement this solution are as follows:

- Create and initialize a Terraform project.

- Create data sources for context lookup.

- Create an S3 bucket to store training, validation, and output data.

- Create an IAM service role that allows Amazon Bedrock to run a model customization job, access your training and validation data, and write your output data to your S3 bucket.

- Configure your local Python virtual environment.

- Download the DialogSum public dataset and convert it to JSONL.

- Upload the converted dataset to Amazon S3.

- Create an Amazon Bedrock custom model using fine-tuning.

- Configure custom model Provisioned Throughput for your models.

Prerequisites

This solution requires the following prerequisites:

- An AWS account. If you don’t have an account, you can sign up for one.

- Access to an IAM user or role that has the required permissions for creating a custom service role, creating an S3 bucket, uploading objects to that S3 bucket, and creating Amazon Bedrock model customization jobs.

- Configured model access for Cohere Command-Light (if you plan to use that model).

- Terraform v1.0.0 or newer installed.

- python v3.8 or newer installed.

Create and initialize a Terraform project

Complete the following steps to create a new Terraform project and initialize it. You can work in a local folder of your choosing.

- In your preferred terminal, create a new folder named

bedrockcmand change to that folder:- If on Windows, use the following code:

- If on Mac or Linux, use the following code:

Now you can work in a text editor and enter in code.

- In your preferred text editor, add a new file with the following Terraform code:

- Save the file in the root of the

bedrockcmfolder and name itmain.tf. - In your terminal, run the following command to initialize the Terraform working directory:

The output will contain a successful message like the following:

“Terraform has been successfully initialized”

- In your terminal, validate the syntax for your Terraform files:

Create data sources for context lookup

The next step is to add configurations that define data sources that look up information about the context Terraform is currently operating in. These data sources are used when defining the IAM role and policies and when creating the S3 bucket. More information can be found in the Terraform documentation for aws_caller_identity, aws_partition, and aws_region.

- In your text editor, add the following Terraform code to your

main.tffile:

- Save the file.

Create an S3 bucket

In this step, you use Terraform to create an S3 bucket to use during model customization and associated outputs. S3 bucket names are globally unique, so you use the Terraform data source aws_caller_identity, which allows you to look up the current AWS account ID, and use string interpolation to include the account ID in the bucket name. Complete the following steps:

- Add the following Terraform code to your

main.tffile:

- Save the file.

Create an IAM service role for Amazon Bedrock

Now you create the service role that Amazon Bedrock will assume to operate the model customization jobs.

You first create a policy document, assume_role_policy, which defines the trust relationship for the IAM role. The policy allows the bedrock.amazonaws.com service to assume this role. You use global condition context keys for cross-service confused deputy prevention. There are also two conditions you specify: the source account must match the current account, and the source ARN must be an Amazon Bedrock model customization job operating from the current partition, AWS Region, and current account.

Complete the following steps:

- Add the following Terraform code to your

main.tffile:

The second policy document, bedrock_custom_policy, defines permissions for accessing the S3 bucket you created for model training, validation, and output. The policy allows the actions GetObject, PutObject, and ListBucket on the resources specified, which are the ARN of the model_training S3 bucket and all of the buckets contents. You will then create an aws_iam_policy resource, which creates the policy in AWS.

- Add the following Terraform code to your

main.tffile:

Finally, the aws_iam_role resource, bedrock_custom_role, creates an IAM role with a name prefix of BedrockCM- and a description. The role uses assume_role_policy as its trust policy and bedrock_custom_policy as a managed policy to allow the actions specified.

- Add the following Terraform code to your

main.tffile:

- Save the file.

Configure your local Python virtual environment

Python supports creating lightweight virtual environments, each with their own independent set of Python packages installed. You create and activate a virtual environment, and then install the datasets package.

- In your terminal, in the root of the

bedrockcmfolder, run the following command to create a virtual environment:

- Activate the virtual environment:

- If on Windows, use the following command:

- If on Mac or Linux, use the following command:

Now you install the datasets package via pip.

- In your terminal, run the following command to install the datasets package:

Download the public dataset

You now use Terraform’s local-exec provisioner to invoke a local Python script that will download the public dataset DialogSum from the Hugging Face Hub. The dataset is already divided into training, validation, and testing splits. This example uses just the training split.

You prepare the data for training by removing the id and topic columns, renaming the dialogue and summary columns, and truncating the dataset to 10,000 records. You then save the dataset in JSONL format. You could also use your own internal private datasets; we use a public dataset for example purposes.

You first create the local Python script named dialogsum-dataset-finetune.py, which is used to download the dataset and save it to disk.

- In your text editor, add a new file with the following Python code:

- Save the file in the root of the

bedrockcmfolder and name itdialogsum-dataset-finetune.py.

Next, you edit the main.tf file you have been working in and add the terraform_data resource type, uses a local provisioner to invoke your Python script.

- In your text editor, edit the

main.tffile and add the following Terraform code:

Upload the converted dataset to Amazon S3

Terraform provides the aws_s3_object resource type, which allows you to create and manage objects in S3 buckets. In this step, you reference the S3 bucket you created earlier and the terraform_data resource’s output attribute. This output attribute is how you instruct the Terraform resource graph that these resources need to be created with a dependency order.

- In your text editor, edit the

main.tffile and add the following Terraform code:

Create an Amazon Bedrock custom model using fine-tuning

Amazon Bedrock has multiple FMs that support customization with fine-tuning. To see a list of the models available, use the following AWS Command Line Interface (AWS CLI) command:

- In your terminal, run the following command to list the FMs that support customization by fine-tuning:

You use the Cohere Command-Light FM for this model customization. You add a Terraform data source to query the foundation model ARN using the model name. You then create the Terraform resource definition for aws_bedrock_custom_model, which creates a model customization job, and immediately returns.

The time it takes for model customization is non-deterministic, and is based on the input parameters, model used, and other factors.

- In your text editor, edit the

main.tffile and add the following Terraform code:

- Save the file.

Now you use Terraform to create the data sources and resources defined in your main.tf file, which will start a model customization job.

- In your terminal, run the following command to validate the syntax for your Terraform files:

- Run the following command to apply the configuration you created. Before creating the resources, Terraform will describe all the resources that will be created so you can verify your configuration:

Terraform will generate a plan and ask you to approve the actions, which will look similar to the following code:

- Enter

yesto approve the changes.

Terraform will now apply your configuration. This process runs for a few minutes. At this time, your custom model is not yet ready for use; it will be in a Training state. Wait for training to finish before continuing. You can review the status on the Amazon Bedrock console on the Custom models page.

When the process is complete, you receive a message like the following:

You can also view the status on the Amazon Bedrock console.

You have now created an Amazon Bedrock custom model using fine-tuning.

Configure custom model Provisioned Throughput

Amazon Bedrock allows you to run inference on custom models by purchasing Provisioned Throughput. This guarantees a consistent level of throughput in exchange for a term commitment. You specify the number of model units needed to meet your application’s performance needs. For evaluating custom models initially, you can purchase Provisioned Throughput hourly (on-demand) with no long-term commitment. With no commitment, a quota of one model unit is available per Provisioned Throughput.

You create a new resource for Provisioned Throughput, associate one of your custom models, and provide a name. You omit the commitment_duration attribute to use on-demand.

- In your text editor, edit the

main.tffile and add the following Terraform code:

- Save the file.

Now you use Terraform to create the resources defined in your main.tf file.

- In your terminal, run the following command to re-initialize the Terraform working directory:

The output will contain a successful message like the following:

- Validate the syntax for your Terraform files:

- Run the following command to apply the configuration you created:

Best practices and considerations

Note the following best practices when using this solution:

- Data and model versioning – You can version your datasets and models by using version identifiers in your S3 bucket prefixes. This allows you to compare model efficacy and outputs. You could even operate a new model in a shadow deployment so that your team can evaluate the output relative to your models being used in production.

- Data privacy and network security – With Amazon Bedrock, you are in control of your data, and all your inputs and customizations remain private to your AWS account. Your data, such as prompts, completions, custom models, and data used for fine-tuning or continued pre-training, is not used for service improvement and is never shared with third-party model providers. Your data remains in the Region where the API call is processed. All data is encrypted in transit and at rest. You can use AWS PrivateLink to create a private connection between your VPC and Amazon Bedrock.

- Billing – Amazon Bedrock charges for model customization, storage, and inference. Model customization is charged per tokens processed. This is the number of tokens in the training dataset multiplied by the number of training epochs. An epoch is one full pass through the training data during customization. Model storage is charged per month, per model. Inference is charged hourly per model unit using Provisioned Throughput. For detailed pricing information, see Amazon Bedrock Pricing.

- Custom models and Provisioned Throughput – Amazon Bedrock allows you to run inference on custom models by purchasing Provisioned Throughput. This guarantees a consistent level of throughput in exchange for a term commitment. You specify the number of model units needed to meet your application’s performance needs. For evaluating custom models initially, you can purchase Provisioned Throughput hourly with no long-term commitment. With no commitment, a quota of one model unit is available per Provisioned Throughput. You can create up to two Provisioned Throughputs per account.

- Availability – Fine-tuning support on Meta Llama 2, Cohere Command Light, and Amazon Titan Text FMs is available today in Regions US East (N. Virginia) and US West (Oregon). Continued pre-training is available today in public preview in Regions US East (N. Virginia) and US West (Oregon). To learn more, visit the Amazon Bedrock Developer Experience and check out Custom models.

Clean up

When you no longer need the resources created as part of this post, clean up those resources to save associated costs. You can clean up the AWS resources created in this post using Terraform with the terraform destroy command.

First, you need to modify the configuration of the S3 bucket in the main.tf file to enable force destroy so the contents of the bucket will be deleted, so the bucket itself can be deleted. This will remove all of the sample data contained in the S3 bucket as well as the bucket itself. Make sure there is no data you want to retain in the bucket before proceeding.

- Modify the declaration of your S3 bucket to set the

force_destroyattribute of the S3 bucket:

- Run the terraform apply command to update the S3 bucket with this new configuration:

- Run the terraform destroy command to delete all resources created as part of this post:

Conclusion

In this post, we demonstrated how to create Amazon Bedrock custom models using Terraform. We introduced GitOps to manage model configuration and data associated with your custom models.

We recommend testing the code and examples in your development environment, and making appropriate changes as required to use them in production. Consider your model consumption requirements when defining your Provisioned Throughput.

We welcome your feedback! If you have questions or suggestions, leave them in the comments section.

About the Authors

Josh Famestad is a Solutions Architect at AWS helping public sector customers accelerate growth, add agility, and reduce risk with cloud-based solutions.

Josh Famestad is a Solutions Architect at AWS helping public sector customers accelerate growth, add agility, and reduce risk with cloud-based solutions.

Kevon Mayers is a Solutions Architect at AWS. Kevon is a Core Contributor for Terraform and has led multiple Terraform initiatives within AWS. Prior to joining AWS, he was working as a DevOps engineer and developer, and before that was working with the GRAMMYs/The Recording Academy as a studio manager, music producer, and audio engineer.

Kevon Mayers is a Solutions Architect at AWS. Kevon is a Core Contributor for Terraform and has led multiple Terraform initiatives within AWS. Prior to joining AWS, he was working as a DevOps engineer and developer, and before that was working with the GRAMMYs/The Recording Academy as a studio manager, music producer, and audio engineer.

Tyler Lynch is a Principal Solution Architect at AWS. Tyler leads Terraform provider engineering at AWS and is a Core Contributor for Terraform.

Tyler Lynch is a Principal Solution Architect at AWS. Tyler leads Terraform provider engineering at AWS and is a Core Contributor for Terraform.

Boost productivity with video conferencing transcripts and summaries with the Amazon Chime SDK Meeting Summarizer solution

Businesses today heavily rely on video conferencing platforms for effective communication, collaboration, and decision-making. However, despite the convenience these platforms offer, there are persistent challenges in seamlessly integrating them into existing workflows. One of the major pain points is the lack of comprehensive tools to automate the process of joining meetings, recording discussions, and extracting actionable insights from them. This gap results in inefficiencies, missed opportunities, and limited productivity, hindering the seamless flow of information and decision-making processes within organizations.

To address this challenge, we’ve developed the Amazon Chime SDK Meeting Summarizer application deployed with the Amazon Cloud Development Kit (AWS CDK). This application uses an Amazon Chime SDK SIP media application, Amazon Transcribe, and Amazon Bedrock to seamlessly join meetings, record meeting audio, and process recordings for transcription and summarization. By integrating these services programmatically through the AWS CDK, we aim to streamline the meeting workflow, empower users with actionable insights, and drive better decision-making outcomes. Our solution currently integrates with popular platforms such as Amazon Chime, Zoom, Cisco Webex, Microsoft Teams, and Google Meet.

In addition to deploying the solution, we’ll also teach you the intricacies of prompt engineering in this post. We guide you through addressing parsing and information extraction challenges, including speaker diarization, call scheduling, summarization, and transcript cleaning. Through detailed instructions and structured approaches tailored to each use case, we illustrate the effectiveness of Amazon Bedrock, powered by Anthropic Claude models.

Solution overview

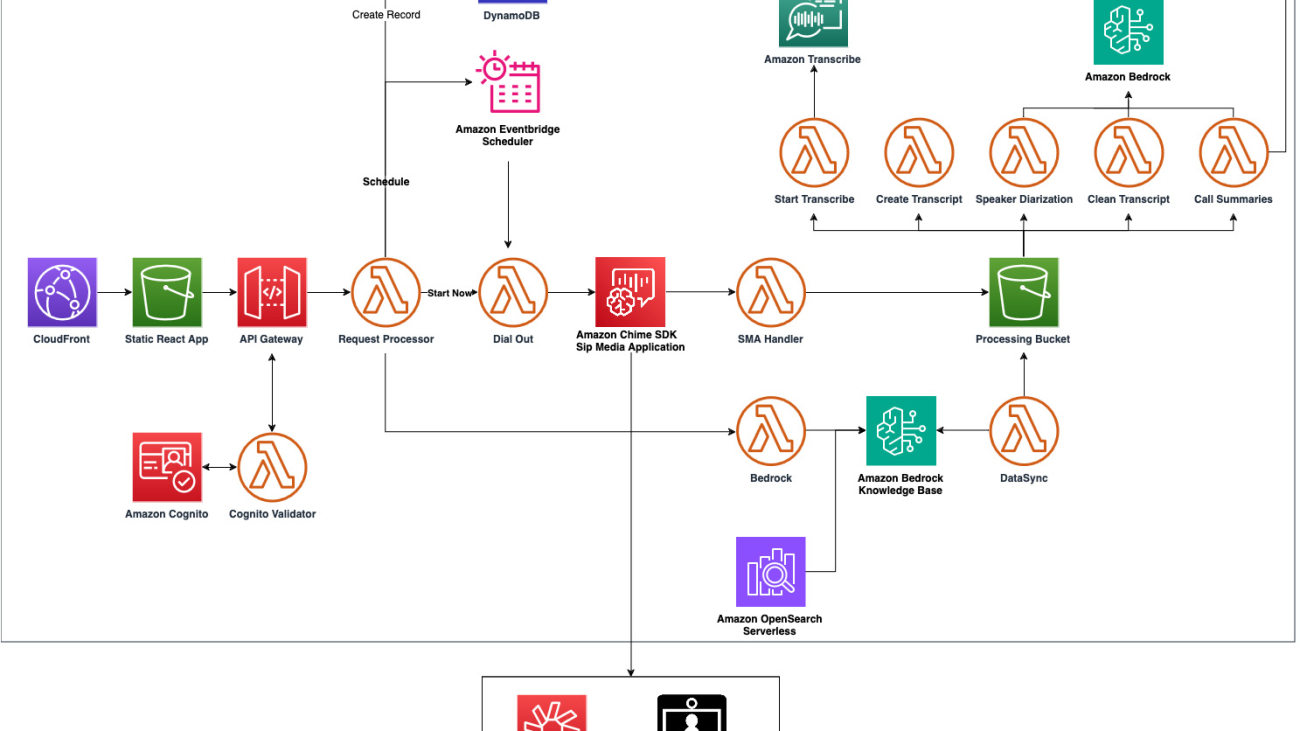

The following infrastructure diagram provides an overview of the AWS services that are used to create this meeting summarization bot. The core services used in this solution are:

- An Amazon Chime SDK SIP Media Application is used to dial into the meeting and record meeting audio

- Amazon Transcribe is used to perform speech-to-text processing of the recorded audio, including speaker diarization

- Anthropic Claude models in Amazon Bedrock are used to identify names, improve the quality of the transcript, and provide a detailed summary of the meeting

For a detailed explanation of the solution, refer to the Amazon Chime Meeting Summarizer documentation.

Prerequisites

Before diving into the project setup, make sure you have the following requirements in place:

- Yarn – Yarn must be installed on your machine.

- AWS account – You’ll need an active AWS account.

- Enable Claude Anthropic models – These models should be enabled in your AWS account. For more information, see Model access.

- Enable Amazon Titan – Amazon Titan should be activated in your AWS account. For more information, see Amazon Titan Models.

Refer to our GitHub repository for a step-by-step guide on deploying this solution.

Access the meeting with Amazon Chime SDK