With last month’s blog, I started a series of posts that highlight the key factors that are driving customers to choose Amazon Bedrock. I explored how Bedrock enables customers to build a secure, compliant foundation for generative AI applications. Now I’d like to turn to a slightly more technical, but equally important differentiator for Bedrock—the multiple techniques that you can use to customize models and meet your specific business needs.

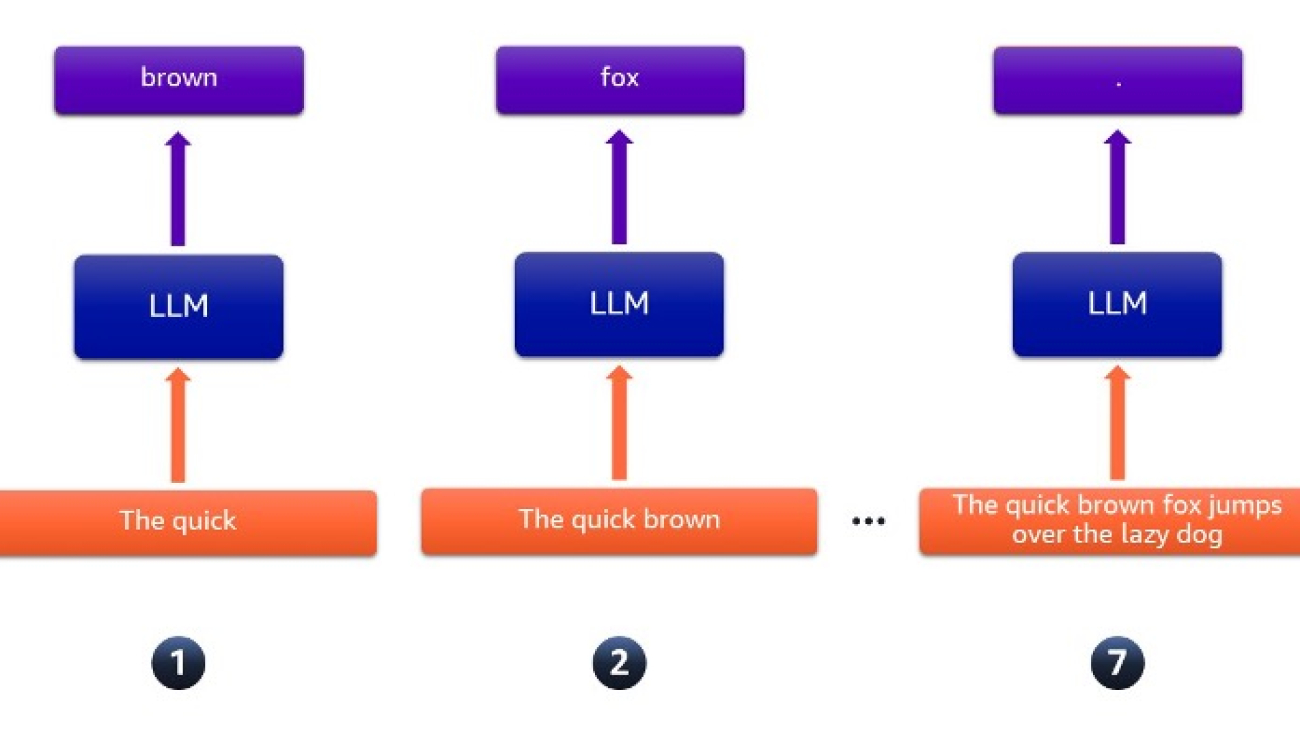

As we’ve all heard, large language models (LLMs) are transforming the way we leverage artificial intelligence (AI) and enabling businesses to rethink core processes. Trained on massive datasets, these models can rapidly comprehend data and generate relevant responses across diverse domains, from summarizing content to answering questions. The wide applicability of LLMs explains why customers across healthcare, financial services, and media and entertainment are moving quickly to adopt them. However, our customers tell us that while pre-trained LLMs excel at analyzing vast amounts of data, they often lack the specialized knowledge necessary to tackle specific business challenges.

Customization unlocks the transformative potential of large language models. Amazon Bedrock equips you with a powerful and comprehensive toolset to transform your generative AI from a one-size-fits-all solution into one that is finely tailored to your unique needs. Customization includes varied techniques such as Prompt Engineering, Retrieval Augmented Generation (RAG), and model customization through fine-tuning and continued pre-training. Prompt Engineering involves carefully crafting prompts to get a desired response from LLMs. RAG combines knowledge retrieved from external sources with language generation to provide more contextual and accurate responses. Model Customization techniques, including fine-tuning and continued pre-training, involve further training a pre-trained language model on specific tasks or domains for improved performance. These techniques can be used in combination with each other to tailor base models in Amazon Bedrock with your data and deliver contextual and accurate outputs. The following examples show how customers are using customization in Amazon Bedrock to deliver on their use cases.

Thomson Reuters, a global content and technology company, has seen positive results with Claude 3 Haiku, but anticipates even better results with customization. The company—which serves professionals in legal, tax, accounting, compliance, government, and media—expects that it will see even faster and more relevant AI results by fine-tuning Claude with their industry expertise.

“We’re excited to fine-tune Anthropic’s Claude 3 Haiku model in Amazon Bedrock to further enhance our Claude-powered solutions. Thomson Reuters aims to provide accurate, fast, and consistent user experiences. By optimizing Claude around our industry expertise and specific requirements, we anticipate measurable improvements that deliver high-quality results at even faster speeds. We’ve already seen positive results with Claude 3 Haiku, and fine-tuning will enable us to tailor our AI assistance more precisely.”

– Joel Hron, Chief Technology Officer at Thomson Reuters.

At Amazon, we see Buy with Prime using Amazon Bedrock’s cutting-edge RAG-based customization capabilities to drive greater efficiency. Orders placed on merchants’ sites are covered by Buy with Prime Assist, a 24/7 live chat customer service. The team recently launched a chatbot solution in beta that is capable of handling product support queries. The solution is powered by Amazon Bedrock and customized with merchant data to go beyond traditional email-based systems. My colleague Amit Nandy, Product Manager at Buy with Prime, says,

“By indexing merchant websites, including subdomains and PDF manuals, we constructed tailored knowledge bases that provided relevant and comprehensive support for each merchant’s unique offerings. Combined with Claude’s state-of-the-art foundation models and Guardrails for Amazon Bedrock, our chatbot solution delivers a highly capable, secure, and trustworthy customer experience. Shoppers can now receive accurate, timely, and personalized assistance for their queries, fostering increased satisfaction and strengthening the reputation of Buy with Prime and its participating merchants.”

Stories like these are the reason why we continue to double down on our customization capabilities for generative AI applications powered by Amazon Bedrock.

In this blog, we’ll explore the three major techniques for customizing LLMs in Amazon Bedrock and cover related announcements from the recent AWS New York Summit.

Prompt Engineering: Guiding your application toward desired answers

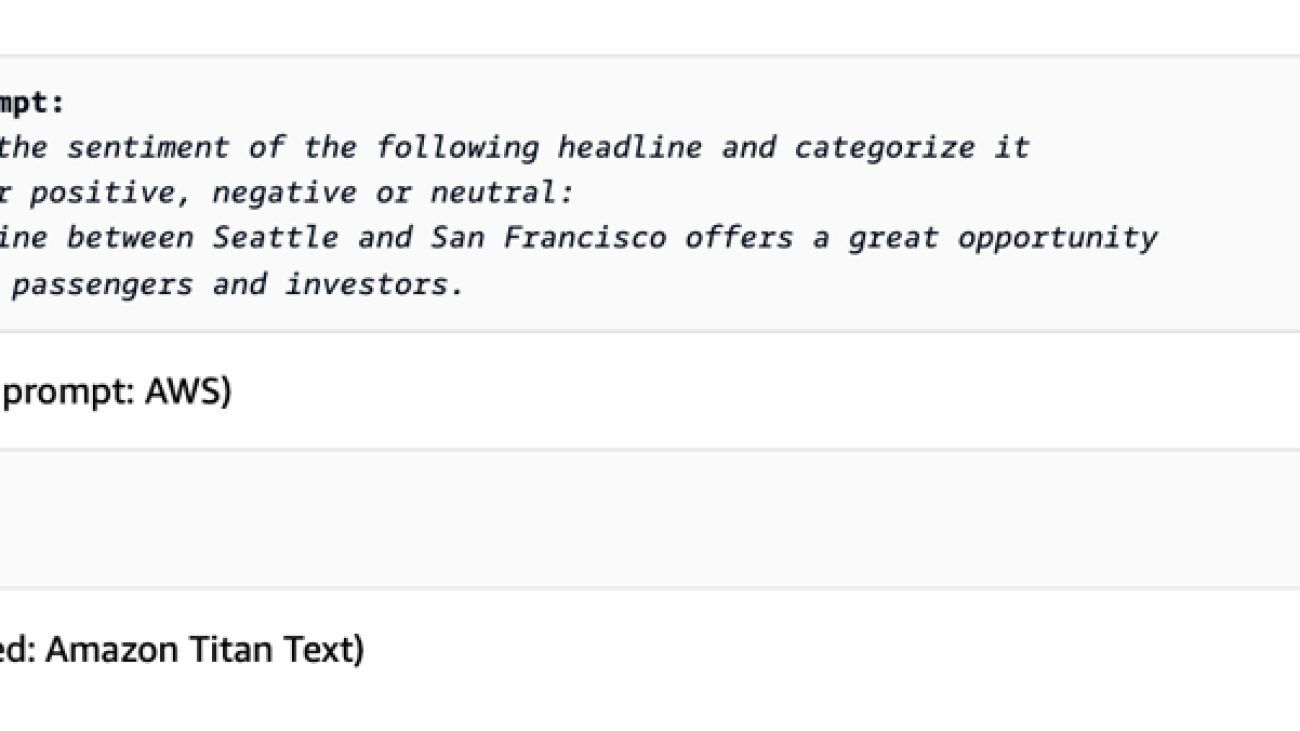

Prompts are the primary inputs that drive LLMs to generate answers. Prompt engineering is the practice of carefully crafting these prompts to guide LLMs effectively. Well-designed prompts can significantly boost a model’s performance by providing clear instructions, context, and examples tailored to the task at hand. Amazon Bedrock supports multiple prompt engineering techniques. For example, few-shot prompting provides examples with desired outputs to help models better understand tasks, such as sentiment analysis samples labeled “positive” or “negative.” Zero-shot prompting provides task descriptions without examples. And chain-of-thought prompting enhances multi-step reasoning by asking models to break down complex problems, which is useful for arithmetic, logic, and deductive tasks.

Our Prompt Engineering Guidelines outline various prompting strategies and best practices for optimizing LLM performance across applications. Leveraging these techniques can help practitioners achieve their desired outcomes more effectively. However, developing optimal prompts that elicit the best responses from foundation models is a challenging and iterative process, often requiring weeks of refinement by developers.
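To make the three prompting styles described above concrete, here is a minimal sketch in Python that builds each kind of prompt as a plain string. The function names and prompt wording are illustrative assumptions, not part of any Amazon Bedrock API; the resulting string is what you would pass as the user message to whichever model you invoke.

```python
from typing import List, Tuple


def zero_shot(task: str, text: str) -> str:
    """Zero-shot: a task description and the input, with no examples."""
    return f"{task}\n\nText: {text}\nSentiment:"


def few_shot(task: str, examples: List[Tuple[str, str]], text: str) -> str:
    """Few-shot: the same task, preceded by labeled examples."""
    lines = [task, ""]
    for sample, label in examples:
        lines.append(f"Text: {sample}\nSentiment: {label}\n")
    lines.append(f"Text: {text}\nSentiment:")
    return "\n".join(lines)


def chain_of_thought(question: str) -> str:
    """Chain-of-thought: ask the model to reason in steps before answering."""
    return (
        f"{question}\n\n"
        "Think through the problem step by step, "
        "then give the final answer on its own line."
    )


if __name__ == "__main__":
    task = "Classify the sentiment of the text as positive or negative."
    examples = [
        ("The checkout was quick and easy.", "positive"),
        ("My order arrived damaged.", "negative"),
    ]
    print(few_shot(task, examples, "Support resolved my issue in minutes."))
```

The few-shot variant differs from the zero-shot one only in the labeled examples it prepends, which makes it easy to compare both against the same inputs while iterating on a prompt.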

[Figure: example prompts for zero-shot prompting, few-shot prompting, and chain-of-thought prompting with Prompt Flows Visual Builder]

Retrieval-Augmented Generation: Augmenting results with retrieved data

LLMs generally lack specialized knowledge, jargon, context, or up-to-date information needed for specific tasks. For instance, legal professionals seeking reliable, current, and accurate information within their domain may find interactions with generalist LLMs inadequate. Retrieval-Augmented Generation (RAG) is the process of allowing a language model to consult an authoritative knowledge base outside of its training data sources before generating a response.

The RAG process involves three main steps:

- Retrieval: Given an input prompt, a retrieval system identifies and fetches relevant passages or documents from a knowledge base or corpus.

- Augmentation: The retrieved information is combined with the original prompt to create an augmented input.

- Generation: The LLM generates a response based on the augmented input, leveraging the retrieved information to produce more accurate and informed outputs.
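The three steps above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: retrieval uses simple word overlap rather than the vector search a managed service would use, and `llm` is a hypothetical callable standing in for a model invocation. None of the names below come from Amazon Bedrock.

```python
import re
from collections import Counter
from typing import Callable, List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query: str, corpus: List[str], top_k: int = 2) -> List[str]:
    """Retrieval: rank passages by word overlap with the query."""
    query_counts = Counter(tokenize(query))
    scored = []
    for passage in corpus:
        overlap = sum((query_counts & Counter(tokenize(passage))).values())
        scored.append((overlap, passage))
    # The sort is stable, so ties keep their original corpus order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [passage for score, passage in scored if score > 0][:top_k]


def augment(query: str, passages: List[str]) -> str:
    """Augmentation: combine retrieved passages with the original prompt."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Use only the context below to answer.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}\nAnswer:"
    )


def rag_answer(query: str, corpus: List[str],
               llm: Callable[[str], str], top_k: int = 2) -> str:
    """Generation: send the augmented prompt to the model."""
    return llm(augment(query, retrieve(query, corpus, top_k)))


if __name__ == "__main__":
    corpus = [
        "Returns are accepted within 30 days of delivery.",
        "The warranty covers manufacturing defects for one year.",
        "Our office is closed on public holidays.",
    ]
    # Echo the prompt in place of a real model call.
    print(rag_answer("How long is the warranty?", corpus, llm=lambda p: p, top_k=1))
```

Keeping the three steps as separate functions mirrors the list above and lets you swap the toy retriever for a real knowledge base without touching the augmentation or generation code.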

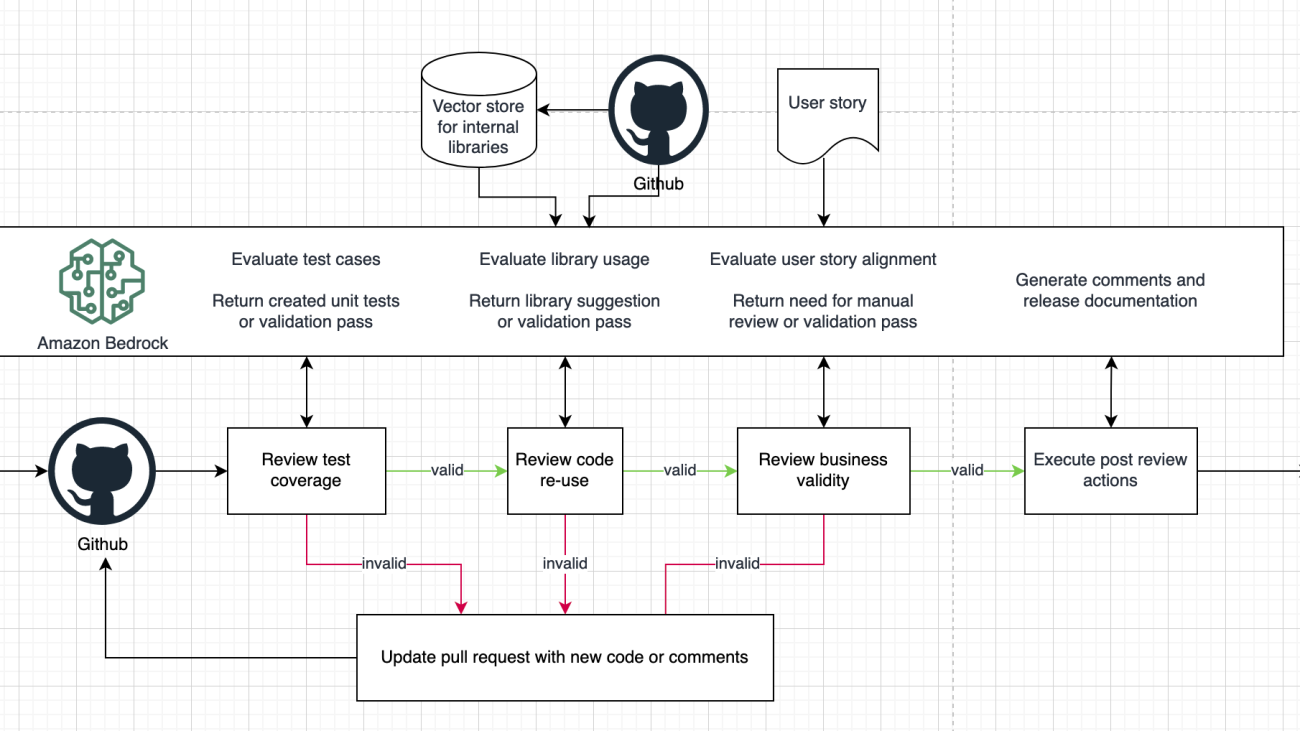

Amazon Bedrock’s Knowledge Bases is a fully managed RAG feature that allows you to connect LLMs to internal company data sources—delivering relevant, accurate, and customized responses. To offer greater flexibility and accuracy in building RAG-based applications, we announced multiple new capabilities at the AWS New York Summit. For example, now you can securely access data from new sources like the web (in preview), allowing you to index public web pages, or access enterprise data from Confluence, SharePoint, and Salesforce (all in preview). Advanced chunking options are another exciting new feature, enabling you to create custom chunking algorithms tailored to your specific needs, as well as leverage built-in semantic and hierarchical chunking options. You now have the capability to extract information with precision from complex data formats (e.g., complex tables within PDFs), thanks to advanced parsing techniques. Plus, the query reformulation feature allows you to deconstruct complex queries into simpler sub-queries, enhancing retrieval accuracy. All these new features help you reduce the time and cost associated with data access and construct highly accurate and relevant knowledge resources—all tailored to your specific enterprise use cases.
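To illustrate what the chunking options mentioned above are doing conceptually, here is a minimal Python sketch of fixed-size chunking with overlap and of a two-level hierarchical scheme, where small child chunks are used for matching and each one points back to a larger parent chunk that supplies context. This is a simplified illustration, not the implementation used by Knowledge Bases; the function names, word-based sizes, and record layout are all assumptions.

```python
from typing import Dict, List


def fixed_size_chunks(words: List[str], size: int, overlap: int = 0) -> List[List[str]]:
    """Split a word list into chunks of `size` words sharing `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("size must be positive and 0 <= overlap < size")
    if not words:
        return []
    step = size - overlap
    return [words[i:i + size] for i in range(0, max(len(words) - overlap, 1), step)]


def hierarchical_chunks(text: str, parent_size: int, child_size: int) -> List[Dict]:
    """Build child chunks that each reference the parent chunk they came from."""
    parents = fixed_size_chunks(text.split(), parent_size)
    records = []
    for parent_id, parent in enumerate(parents):
        for child in fixed_size_chunks(parent, child_size):
            records.append({
                "parent_id": parent_id,
                "parent": " ".join(parent),  # returned to the model for context
                "child": " ".join(child),    # indexed for precise matching
            })
    return records


if __name__ == "__main__":
    text = " ".join(f"w{i}" for i in range(10))
    for record in hierarchical_chunks(text, parent_size=6, child_size=3):
        print(record["parent_id"], "|", record["child"])
```

The trade-off this sketch exposes is the usual one: smaller chunks match queries more precisely, while larger chunks give the model more surrounding context, and a hierarchical layout tries to get both.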

Model Customization: Enhancing performance for specific tasks or domains

Model customization in Amazon Bedrock adapts pre-trained language models to specific tasks or domains. It involves taking a large, pre-trained model and further training it on a smaller, specialized dataset related to your use case. This approach leverages the knowledge acquired during the initial pre-training phase while adapting the model to your requirements, without losing the original capabilities. The fine-tuning process in Amazon Bedrock is designed to be efficient, scalable, and cost-effective, enabling you to tailor language models to your unique needs, without the need for extensive computational resources or data. In Amazon Bedrock, model fine-tuning can be combined with prompt engineering or the Retrieval-Augmented Generation (RAG) approach to further enhance the performance and capabilities of language models. Model customization can be implemented with both labeled and unlabeled data.
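As a rough sketch of how a customization job might be started programmatically, the Python below assembles the keyword arguments for the `create_model_customization_job` operation of the boto3 `bedrock` client. The helper function is hypothetical, and every value in the usage example (names, ARN, S3 URIs, model identifier, and the `epochCount` hyperparameter) is a placeholder assumption. Supported hyperparameters vary by base model, so check the documentation for the model you are customizing.

```python
from typing import Dict, Optional

ALLOWED_TYPES = {"FINE_TUNING", "CONTINUED_PRE_TRAINING"}


def build_customization_job_request(
    job_name: str,
    custom_model_name: str,
    role_arn: str,
    base_model_id: str,
    training_s3_uri: str,
    output_s3_uri: str,
    customization_type: str = "FINE_TUNING",
    hyperparameters: Optional[Dict[str, object]] = None,
) -> Dict[str, object]:
    """Assemble keyword arguments for a model customization job (hypothetical helper)."""
    if customization_type not in ALLOWED_TYPES:
        raise ValueError(f"customization_type must be one of {sorted(ALLOWED_TYPES)}")
    return {
        "jobName": job_name,
        "customModelName": custom_model_name,
        "roleArn": role_arn,
        "baseModelIdentifier": base_model_id,
        "customizationType": customization_type,
        "trainingDataConfig": {"s3Uri": training_s3_uri},
        "outputDataConfig": {"s3Uri": output_s3_uri},
        # The API expects hyperparameter values as strings.
        "hyperParameters": {k: str(v) for k, v in (hyperparameters or {}).items()},
    }


if __name__ == "__main__":
    request = build_customization_job_request(
        job_name="sentiment-ft-job",                                    # placeholder
        custom_model_name="sentiment-ft-model",                         # placeholder
        role_arn="arn:aws:iam::123456789012:role/ExampleBedrockRole",   # placeholder
        base_model_id="example-base-model-id",                          # placeholder
        training_s3_uri="s3://example-bucket/train.jsonl",              # placeholder
        output_s3_uri="s3://example-bucket/output/",                    # placeholder
        hyperparameters={"epochCount": 2},                              # assumed name
    )
    print(request)
    # With AWS credentials configured, the request could then be submitted:
    #   import boto3
    #   boto3.client("bedrock").create_model_customization_job(**request)
```

Building the request separately from submitting it keeps the sketch runnable without credentials and makes the same helper usable for both fine-tuning and continued pre-training jobs.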

Fine-Tuning with labeled data involves providing labeled training data to improve the model’s performance on specific tasks. The model learns to associate appropriate outputs with certain inputs, adjusting its parameters for better task accuracy. For instance, if you have a dataset of customer reviews labeled as positive or negative, you can fine-tune a pre-trained model within Bedrock on this data to create a sentiment analysis model tailored to your domain. At the AWS New York Summit, we announced Fine-tuning for Anthropic’s Claude 3 Haiku. By providing task-specific training datasets, users can fine-tune and customize Claude 3 Haiku, boosting its accuracy, quality, and consistency for their business applications.
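For the customer-review example above, labeled training data is typically supplied as a JSON Lines file with one example per line. The sketch below converts labeled reviews into such records. The `prompt` and `completion` field names and the instruction wording are assumptions for illustration; the required schema differs by base model (some models expect a conversational, messages-style format), so confirm the format for your chosen model before uploading data.

```python
import json
from typing import Dict, List, Tuple

INSTRUCTION = "Classify the sentiment of this review as positive or negative."


def to_finetune_records(labeled_reviews: List[Tuple[str, str]]) -> List[Dict[str, str]]:
    """Turn (review, label) pairs into prompt/completion training records."""
    records = []
    for review, label in labeled_reviews:
        if label not in ("positive", "negative"):
            raise ValueError(f"unexpected label: {label!r}")
        records.append({
            "prompt": f"{INSTRUCTION}\n\nReview: {review}\nSentiment:",
            "completion": f" {label}",
        })
    return records


def to_jsonl(records: List[Dict[str, str]]) -> str:
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(record, ensure_ascii=False) for record in records)


if __name__ == "__main__":
    reviews = [
        ("Setup took two minutes and it works perfectly.", "positive"),
        ("Stopped charging after a week.", "negative"),
    ]
    print(to_jsonl(to_finetune_records(reviews)))
```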

Continued Pre-training with unlabeled data, also known as domain adaptation, allows you to further train the LLMs on your company’s proprietary, unlabeled data. It exposes the model to your domain-specific knowledge and language patterns, enhancing its understanding and performance for specific tasks.
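Because continued pre-training uses unlabeled text, data preparation is mostly about cleaning and splitting raw documents. The following sketch normalizes whitespace, drops empty and duplicate documents, and splits long text into bounded records. The `input` field name and the character-based size limit are assumptions for illustration; verify the expected schema and size limits for your base model. Splitting on a fixed character count is deliberately crude and can cut words in half.

```python
import json
from typing import Dict, List


def to_pretraining_records(documents: List[str], max_chars: int = 2000) -> List[Dict[str, str]]:
    """Clean, de-duplicate, and split raw documents into unlabeled records."""
    if max_chars <= 0:
        raise ValueError("max_chars must be positive")
    records = []
    seen = set()
    for document in documents:
        text = " ".join(document.split())  # collapse runs of whitespace
        if not text or text in seen:
            continue  # skip empty documents and exact duplicates
        seen.add(text)
        for start in range(0, len(text), max_chars):
            records.append({"input": text[start:start + max_chars]})
    return records


if __name__ == "__main__":
    docs = [
        "  Policy A covers   flood damage. ",
        "Policy A covers flood damage.",
        "",
    ]
    for record in to_pretraining_records(docs, max_chars=40):
        print(json.dumps(record))
```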

Customization holds the key to unlocking the true power of generative AI

Large language models are revolutionizing AI applications across industries, but tailoring these general models with specialized knowledge is key to unlocking their full business impact. Amazon Bedrock empowers organizations to customize LLMs through Prompt Engineering, supported by tools such as Prompt Management and Prompt Flows that help craft effective prompts. Retrieval-Augmented Generation, powered by Amazon Bedrock’s Knowledge Bases, lets you integrate LLMs with proprietary data sources to generate accurate, domain-specific responses. And Model Customization techniques, including fine-tuning with labeled data and continued pre-training with unlabeled data, help optimize LLM behavior for your unique needs. After taking a close look at these three main customization methods, it’s clear that while they may take different approaches, they all share a common goal: to help you address your specific business problems.

Resources

For more information on customization with Amazon Bedrock, see the following resources:

- Learn more about Amazon Bedrock

- Learn more about Amazon Bedrock Knowledge Bases

- Read the announcement blog on additional data connectors in Knowledge Bases for Amazon Bedrock

- Read the blog on advanced chunking and parsing options in Knowledge Bases for Amazon Bedrock

- Learn more about Prompt Engineering

- Learn more about Prompt Engineering techniques and best practices

- Read the announcement blog on Prompt Management and Prompt Flows

- Learn more about fine-tuning and continued pre-training

- Read the announcement blog on fine-tuning Anthropic’s Claude 3 Haiku

About the author

Vasi Philomin is VP of Generative AI at AWS. He leads generative AI efforts, including Amazon Bedrock and Amazon Titan.

