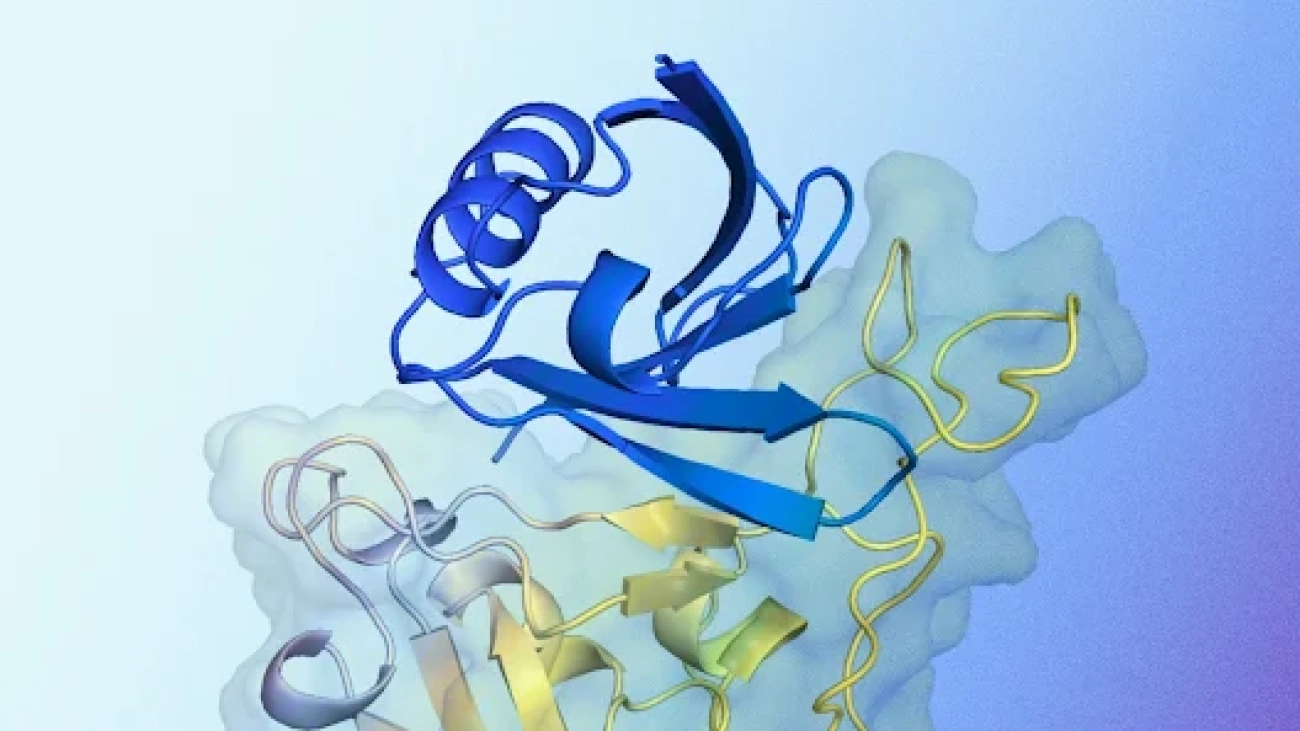

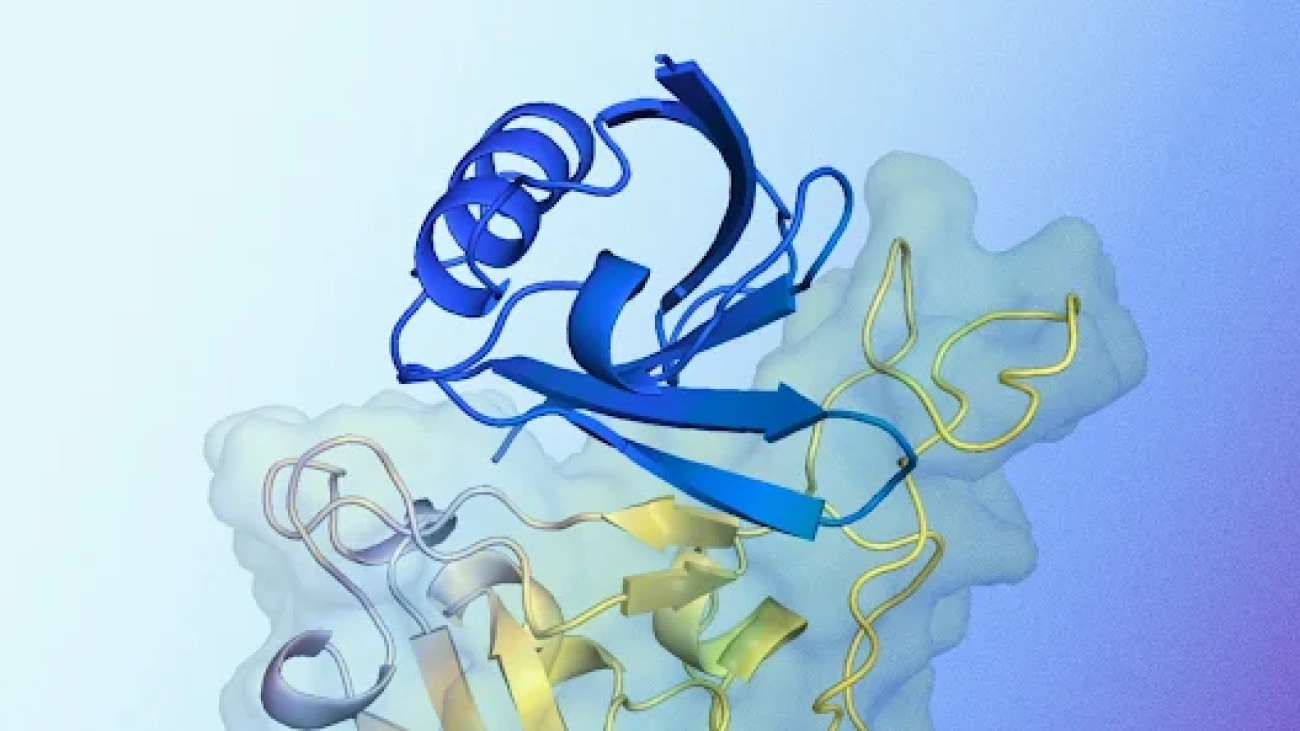

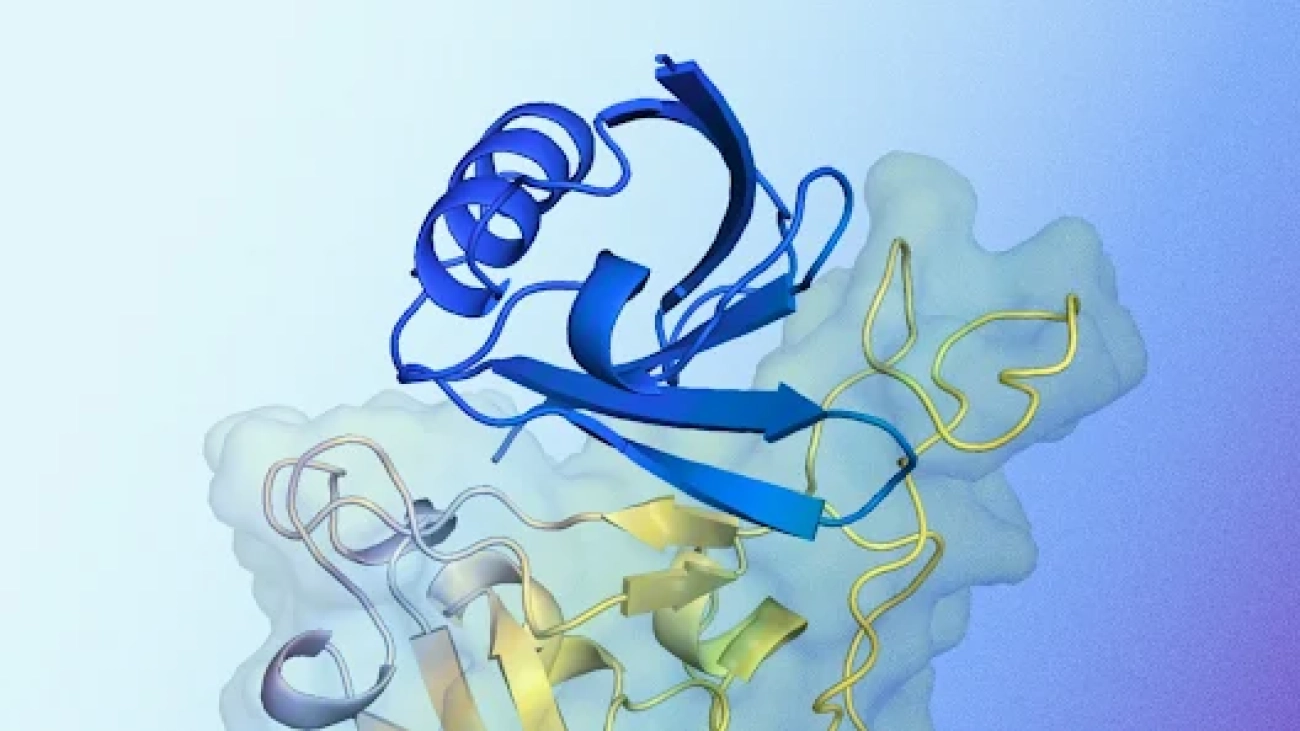

AlphaProteo generates novel proteins for biology and health research

New AI system designs proteins that successfully bind to target molecules, with potential for advancing drug design, disease understanding and more.

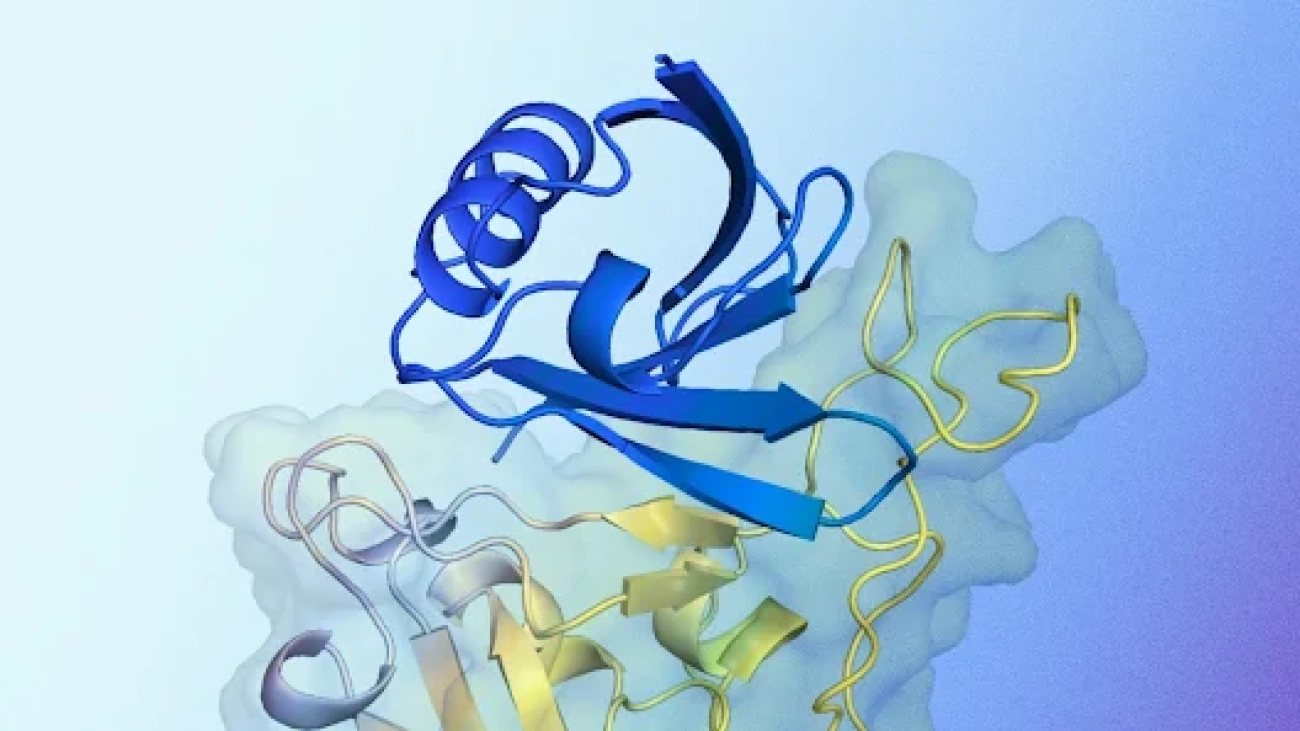

AlphaProteo generates novel proteins for biology and health research

New AI system designs proteins that successfully bind to target molecules, with potential for advancing drug design, disease understanding and more.Read More

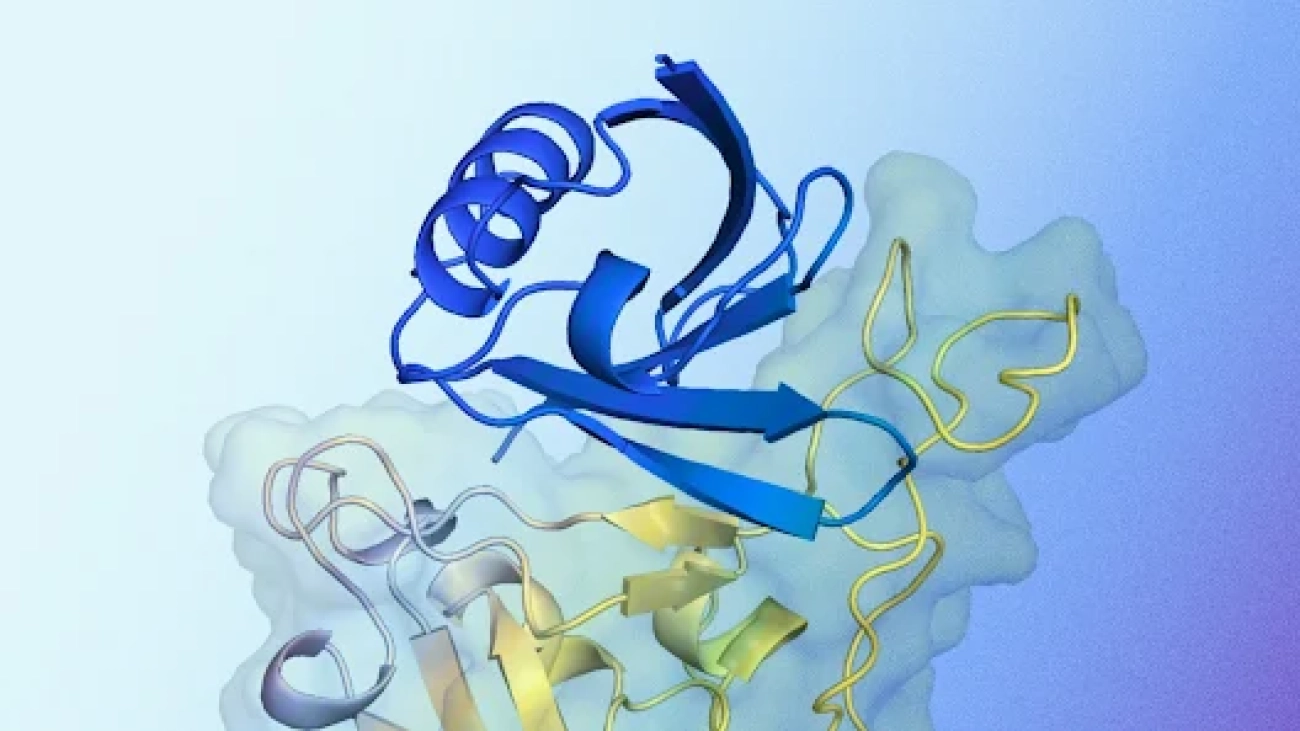

AlphaProteo generates novel proteins for biology and health research

New AI system designs proteins that successfully bind to target molecules, with potential for advancing drug design, disease understanding and more.Read More

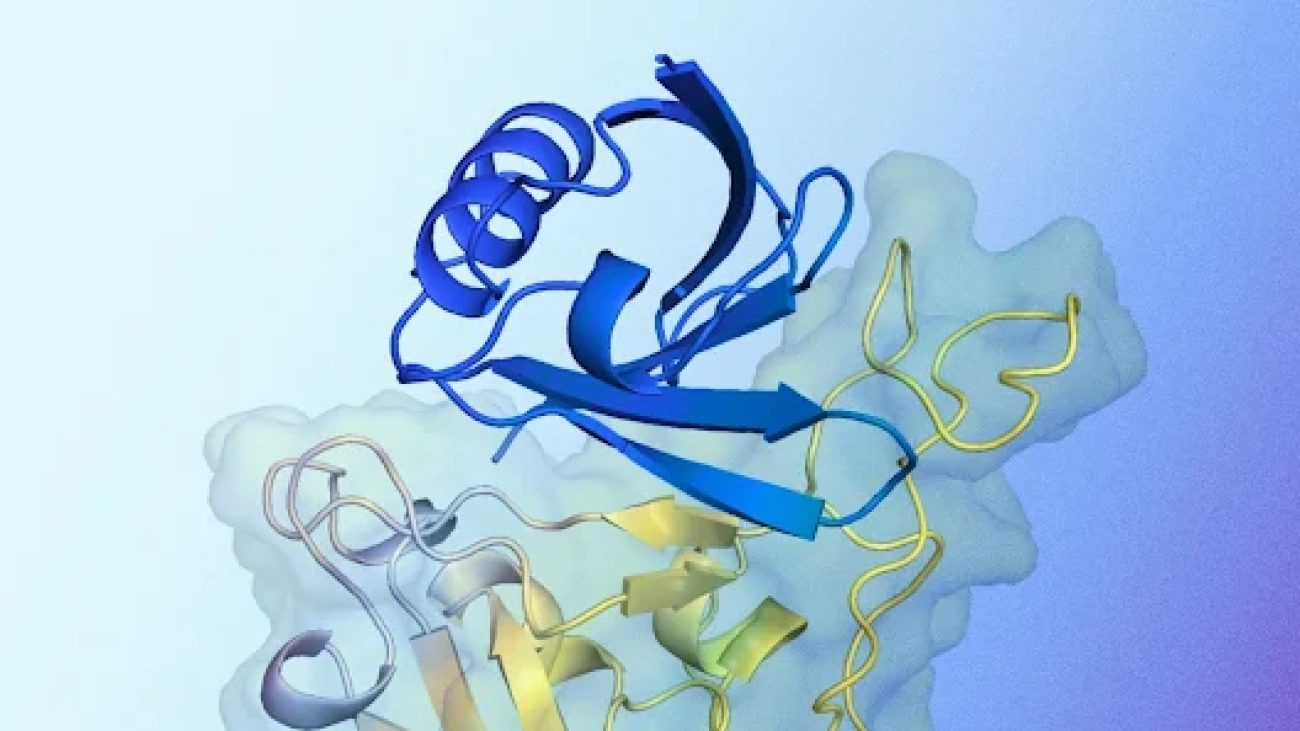

AlphaProteo generates novel proteins for biology and health research

New AI system designs proteins that successfully bind to target molecules, with potential for advancing drug design, disease understanding and more.Read More

AlphaProteo generates novel proteins for biology and health research

New AI system designs proteins that successfully bind to target molecules, with potential for advancing drug design, disease understanding and more.Read More

FermiNet: Quantum physics and chemistry from first principles

Using deep learning to solve fundamental problems in computational quantum chemistry and explore how matter interacts with light.