Posted by Cat Armato, Program Manager, University Relations

Google is a leader in machine learning (ML) research with groups innovating across virtually all aspects of the field, from theory to application. We build machine learning systems to solve deep scientific and engineering challenges in areas of language, music, visual processing, algorithm development, and more. Core to our approach is active engagement with the broader research community: open-sourcing datasets and models, publishing our discoveries, and participating in leading conferences.

Google is proud to be a Diamond Sponsor of the thirty-ninth International Conference on Machine Learning (ICML 2022), a premier annual conference, which is being held this week in Baltimore, Maryland. Google has a strong presence at this year’s conference with over 100 accepted publications and active involvement in a number of workshops and tutorials. We look forward to sharing some of our extensive ML research and expanding our partnership with the broader ML research community.

Registered for ICML 2022? We hope you’ll visit the Google booth to learn more about the exciting work, creativity, and fun that go into solving some of the field’s most interesting challenges. Take a look below to learn more about the Google research being presented at ICML 2022 (Google affiliations in bold).

Organizing Committee

Tutorial Chairs include: Hanie Sedghi

Emeritus Members include: Andrew McCallum

Board Members include: Hugo Larochelle, Csaba Szepesvari, Corinna Cortes

Publications

Individual Preference Stability for Clustering

Saba Ahmadi, Pranjal Awasthi, Samir Khuller, Matthäus Kleindessner, Jamie Morgenstern, Pattara Sukprasert, Ali Vakilian

Head2Toe: Utilizing Intermediate Representations for Better Transfer Learning

Utku Evci, Vincent Dumoulin, Hugo Larochelle, Michael Mozer

H-Consistency Bounds for Surrogate Loss Minimizers

Pranjal Awasthi, Anqi Mao, Mehryar Mohri, Yutao Zhong

Cooperative Online Learning in Stochastic and Adversarial MDPs

Tal Lancewicki, Aviv Rosenberg, Yishay Mansour

Do More Negative Samples Necessarily Hurt in Contrastive Learning?

Pranjal Awasthi, Nishanth Dikkala, Pritish Kamath

Deletion Robust Submodular Maximization Over Matroids

Paul Dütting, Federico Fusco*, Silvio Lattanzi, Ashkan Norouzi-Fard, Morteza Zadimoghaddam

Tight and Robust Private Mean Estimation with Few Users

Hossein Esfandiari, Vahab Mirrokni, Shyam Narayanan*

Generative Trees: Adversarial and Copycat

Richard Nock, Mathieu Guillame-Bert

Agnostic Learnability of Halfspaces via Logistic Loss

Ziwei Ji*, Kwangjun Ahn*, Pranjal Awasthi, Satyen Kale, Stefani Karp

Adversarially Trained Actor Critic for Offline Reinforcement Learning

Ching-An Cheng, Tengyang Xie, Nan Jiang, Alekh Agarwal

Unified Scaling Laws for Routed Language Models

Aidan Clark, Diego de Las Casas, Aurelia Guy, Arthur Mensch, Michela Paganini, Jordan Hoffmann, Bogdan Damoc, Blake Hechtman, Trevor Cai, Sebastian Borgeaud, George van den Driessche, Eliza Rutherford, Tom Hennigan, Matthew Johnson, Albin Cassirer, Chris Jones, Elena Buchatskaya, David Budden, Laurent Sifre, Simon Osindero, Oriol Vinyals, Marc’Aurelio Ranzato, Jack Rae, Erich Elsen, Koray Kavukcuoglu, Karen Simonyan

Large Batch Experience Replay

Thibault Lahire, Matthieu Geist, Emmanuel Rachelson

Robust Training of Neural Networks Using Scale Invariant Architectures

Zhiyuan Li*, Srinadh Bhojanapalli, Manzil Zaheer, Sashank J. Reddi, Sanjiv Kumar

The Poisson Binomial Mechanism for Unbiased Federated Learning with Secure Aggregation

Wei-Ning Chen, Ayfer Ozgur, Peter Kairouz

Global Optimization Networks

Sen Zhao, Erez Louidor, Maya Gupta

A Joint Exponential Mechanism for Differentially Private Top-k

Jennifer Gillenwater, Matthew Joseph, Andres Munoz Medina, Mónica Ribero

On the Practicality of Deterministic Epistemic Uncertainty

Janis Postels, Mattia Segu, Tao Sun, Luc Van Gool, Fisher Yu, Federico Tombari

Balancing Discriminability and Transferability for Source-Free Domain Adaptation

Jogendra Nath Kundu, Akshay Kulkarni, Suvaansh Bhambri, Deepesh Mehta, Shreyas Kulkarni, Varun Jampani, Venkatesh Babu Radhakrishnan

Transfer and Marginalize: Explaining Away Label Noise with Privileged Information

Mark Collier, Rodolphe Jenatton, Efi Kokiopoulou, Jesse Berent

In Defense of Dual-Encoders for Neural Ranking

Aditya Menon, Sadeep Jayasumana, Ankit Singh Rawat, Seungyeon Kim, Sashank Jakkam Reddi, Sanjiv Kumar

Surrogate Likelihoods for Variational Annealed Importance Sampling

Martin Jankowiak, Du Phan

Translatotron 2: High-Quality Direct Speech-to-Speech Translation with Voice Preservation (see blog post)

Ye Jia, Michelle Tadmor Ramanovich, Tal Remez, Roi Pomerantz

Differentially Private Approximate Quantiles

Haim Kaplan, Shachar Schnapp, Uri Stemmer

Continuous Control with Action Quantization from Demonstrations

Robert Dadashi, Léonard Hussenot, Damien Vincent, Sertan Girgin, Anton Raichuk, Matthieu Geist, Olivier Pietquin

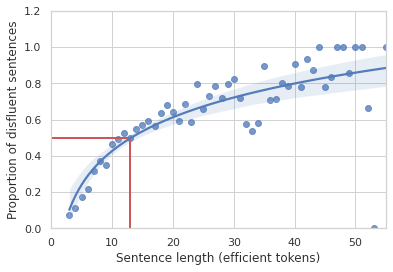

Data Scaling Laws in NMT: The Effect of Noise and Architecture

Yamini Bansal*, Behrooz Ghorbani, Ankush Garg, Biao Zhang, Maxim Krikun, Colin Cherry, Behnam Neyshabur, Orhan Firat

Debiaser Beware: Pitfalls of Centering Regularized Transport Maps

Aram-Alexandre Pooladian, Marco Cuturi, Jonathan Niles-Weed

A Context-Integrated Transformer-Based Neural Network for Auction Design

Zhijian Duan, Jingwu Tang, Yutong Yin, Zhe Feng, Xiang Yan, Manzil Zaheer, Xiaotie Deng

Algorithms for the Communication of Samples

Lucas Theis, Noureldin Yosri

Being Properly Improper

Tyler Sypherd, Richard Nock, Lalitha Sankar

Guarantees for Epsilon-Greedy Reinforcement Learning with Function Approximation

Chris Dann, Yishay Mansour, Mehryar Mohri, Ayush Sekhari, Karthik Sridharan

Why Should I Trust You, Bellman? The Bellman Error is a Poor Replacement for Value Error

Scott Fujimoto, David Meger, Doina Precup, Ofir Nachum, Shixiang Shane Gu

Public Data-Assisted Mirror Descent for Private Model Training

Ehsan Amid, Arun Ganesh*, Rajiv Mathews, Swaroop Ramaswamy, Shuang Song, Thomas Steinke, Vinith M. Suriyakumar*, Om Thakkar, Abhradeep Thakurta

Deep Hierarchy in Bandits

Joey Hong, Branislav Kveton, Sumeet Katariya, Manzil Zaheer, Mohammad Ghavamzadeh

Scalable Deep Reinforcement Learning Algorithms for Mean Field Games

Mathieu Lauriere, Sarah Perrin, Sertan Girgin, Paul Muller, Ayush Jain, Theophile Cabannes, Georgios Piliouras, Julien Perolat, Romuald Elie, Olivier Pietquin, Matthieu Geist

Faster Privacy Accounting via Evolving Discretization

Badih Ghazi, Pritish Kamath, Ravi Kumar, Pasin Manurangsi

HyperPrompt: Prompt-Based Task-Conditioning of Transformers

Yun He*, Huaixiu Steven Zheng, Yi Tay, Jai Gupta, Yu Du, Vamsi Aribandi, Zhe Zhao, YaGuang Li, Zhao Chen, Donald Metzler, Heng-Tze Cheng, Ed H. Chi

Blocks Assemble! Learning to Assemble with Large-Scale Structured Reinforcement Learning

Seyed Kamyar Seyed Ghasemipour, Daniel Freeman, Byron David, Shixiang Shane Gu, Satoshi Kataoka, Igor Mordatch

Latent Diffusion Energy-Based Model for Interpretable Text Modelling

Peiyu Yu, Sirui Xie, Xiaojian Ma, Baoxiong Jia, Bo Pang, Ruiqi Gao, Yixin Zhu, Song-Chun Zhu, Ying Nian Wu

On the Optimization Landscape of Neural Collapse Under MSE Loss: Global Optimality with Unconstrained Features

Jinxin Zhou, Xiao Li, Tianyu Ding, Chong You, Qing Qu, Zhihui Zhu

Efficient Reinforcement Learning in Block MDPs: A Model-Free Representation Learning Approach

Xuezhou Zhang, Yuda Song, Masatoshi Uehara, Mengdi Wang, Alekh Agarwal, Wen Sun

Robust Training Under Label Noise by Over-Parameterization

Sheng Liu, Zhihui Zhu, Qing Qu, Chong You

FriendlyCore: Practical Differentially Private Aggregation

Eliad Tsfadia, Edith Cohen, Haim Kaplan, Yishay Mansour, Uri Stemmer

Adaptive Data Analysis with Correlated Observations

Aryeh Kontorovich, Menachem Sadigurschi, Uri Stemmer

A Resilient Distributed Boosting Algorithm

Yuval Filmus, Idan Mehalel, Shay Moran

On Learning Mixture of Linear Regressions in the Non-Realizable Setting

Avishek Ghosh, Arya Mazumdar, Soumyabrata Pal, Rajat Sen

Online and Consistent Correlation Clustering

Vincent Cohen-Addad, Silvio Lattanzi, Andreas Maggiori, Nikos Parotsidis

From Block-Toeplitz Matrices to Differential Equations on Graphs: Towards a General Theory for Scalable Masked Transformers

Krzysztof Choromanski, Han Lin, Haoxian Chen, Tianyi Zhang, Arijit Sehanobish, Valerii Likhosherstov, Jack Parker-Holder, Tamas Sarlos, Adrian Weller, Thomas Weingarten

Parsimonious Learning-Augmented Caching

Sungjin Im, Ravi Kumar, Aditya Petety, Manish Purohit

General-Purpose, Long-Context Autoregressive Modeling with Perceiver AR

Curtis Hawthorne, Andrew Jaegle, Cătălina Cangea, Sebastian Borgeaud, Charlie Nash, Mateusz Malinowski, Sander Dieleman, Oriol Vinyals, Matthew Botvinick, Ian Simon, Hannah Sheahan, Neil Zeghidour, Jean-Baptiste Alayrac, Joao Carreira, Jesse Engel

Conformal Prediction Sets with Limited False Positives

Adam Fisch, Tal Schuster, Tommi Jaakkola, Regina Barzilay

Dialog Inpainting: Turning Documents into Dialogs

Zhuyun Dai, Arun Tejasvi Chaganty, Vincent Zhao, Aida Amini, Qazi Mamunur Rashid, Mike Green, Kelvin Guu

Benefits of Overparameterized Convolutional Residual Networks: Function Approximation Under Smoothness Constraint

Hao Liu, Minshuo Chen, Siawpeng Er, Wenjing Liao, Tong Zhang, Tuo Zhao

Congested Bandits: Optimal Routing via Short-Term Resets

Pranjal Awasthi, Kush Bhatia, Sreenivas Gollapudi, Kostas Kollias

Provable Stochastic Optimization for Global Contrastive Learning: Small Batch Does Not Harm Performance

Zhuoning Yuan, Yuexin Wu, Zihao Qiu, Xianzhi Du, Lijun Zhang, Denny Zhou, Tianbao Yang

Examining Scaling and Transfer of Language Model Architectures for Machine Translation

Biao Zhang*, Behrooz Ghorbani, Ankur Bapna, Yong Cheng, Xavier Garcia, Jonathan Shen, Orhan Firat

GLaM: Efficient Scaling of Language Models with Mixture-of-Experts (see blog post)

Nan Du, Yanping Huang, Andrew M. Dai, Simon Tong, Dmitry Lepikhin, Yuanzhong Xu, Maxim Krikun, Yanqi Zhou, Adams Wei Yu, Orhan Firat, Barret Zoph, Liam Fedus, Maarten Bosma, Zongwei Zhou, Tao Wang, Yu Emma Wang, Kellie Webster, Marie Pellat, Kevin Robinson, Kathy Meier-Hellstern, Toju Duke, Lucas Dixon, Kun Zhang, Quoc V Le, Yonghui Wu, Zhifeng Chen, Claire Cui

How to Leverage Unlabeled Data in Offline Reinforcement Learning?

Tianhe Yu, Aviral Kumar, Yevgen Chebotar, Karol Hausman, Chelsea Finn, Sergey Levine

Distributional Hamilton-Jacobi-Bellman Equations for Continuous-Time Reinforcement Learning

Harley Wiltzer, David Meger, Marc G. Bellemare

On the Robustness of CountSketch to Adaptive Inputs

Edith Cohen, Xin Lyu, Jelani Nelson, Tamás Sarlós, Moshe Shechner, Uri Stemmer

Model Selection in Batch Policy Optimization

Jonathan N. Lee, George Tucker, Ofir Nachum, Bo Dai

The Fundamental Price of Secure Aggregation in Differentially Private Federated Learning

Wei-Ning Chen, Christopher A. Choquette-Choo, Peter Kairouz, Ananda Theertha Suresh

Linear-Time Gromov Wasserstein Distances Using Low Rank Couplings and Costs

Meyer Scetbon, Gabriel Peyré, Marco Cuturi*

Active Sampling for Min-Max Fairness

Jacob Abernethy, Pranjal Awasthi, Matthäus Kleindessner, Jamie Morgenstern, Chris Russell, Jie Zhang

Making Linear MDPs Practical via Contrastive Representation Learning

Tianjun Zhang, Tongzheng Ren, Mengjiao Yang, Joseph E. Gonzalez, Dale Schuurmans, Bo Dai

Achieving Minimax Rates in Pool-Based Batch Active Learning

Claudio Gentile, Zhilei Wang, Tong Zhang

Private Adaptive Optimization with Side Information

Tian Li, Manzil Zaheer, Sashank J. Reddi, Virginia Smith

Self-Supervised Learning With Random-Projection Quantizer for Speech Recognition

Chung-Cheng Chiu, James Qin, Yu Zhang, Jiahui Yu, Yonghui Wu

Wide Bayesian Neural Networks Have a Simple Weight Posterior: Theory and Accelerated Sampling

Jiri Hron, Roman Novak, Jeffrey Pennington, Jascha Sohl-Dickstein

The State of Sparse Training in Deep Reinforcement Learning

Laura Graesser, Utku Evci, Erich Elsen, Pablo Samuel Castro

Constrained Discrete Black-Box Optimization Using Mixed-Integer Programming

Theodore P. Papalexopoulos, Christian Tjandraatmadja, Ross Anderson, Juan Pablo Vielma, David Belanger

Massively Parallel k-Means Clustering for Perturbation Resilient Instances

Vincent Cohen-Addad, Vahab Mirrokni, Peilin Zhong

What Language Model Architecture and Pre-training Objective Works Best for Zero-Shot Generalization?

Thomas Wang, Adam Roberts, Daniel Hesslow, Teven Le Scao, Hyung Won Chung, Iz Beltagy, Julien Launay, Colin Raffel

Model Soups: Averaging Weights of Multiple Fine-Tuned Models Improves Accuracy Without Increasing Inference Time

Mitchell Wortsman, Gabriel Ilharco, Samir Yitzhak Gadre, Rebecca Roelofs, Raphael Gontijo-Lopes, Ari S. Morcos, Hongseok Namkoong, Ali Farhadi, Yair Carmon, Simon Kornblith, Ludwig Schmidt

Synergy and Symmetry in Deep Learning: Interactions Between the Data, Model, and Inference Algorithm

Lechao Xiao, Jeffrey Pennington

Fast Finite Width Neural Tangent Kernel

Roman Novak, Jascha Sohl-Dickstein, Samuel S. Schoenholz

The Combinatorial Brain Surgeon: Pruning Weights that Cancel One Another in Neural Networks

Xin Yu, Thiago Serra, Srikumar Ramalingam, Shandian Zhe

Bayesian Imitation Learning for End-to-End Mobile Manipulation

Yuqing Du, Daniel Ho, Alexander A. Alemi, Eric Jang, Mohi Khansari

HyperTransformer: Model Generation for Supervised and Semi-Supervised Few-Shot Learning

Andrey Zhmoginov, Mark Sandler, Max Vladymyrov

Marginal Distribution Adaptation for Discrete Sets via Module-Oriented Divergence Minimization

Hanjun Dai, Mengjiao Yang, Yuan Xue, Dale Schuurmans, Bo Dai

Correlated Quantization for Distributed Mean Estimation and Optimization

Ananda Theertha Suresh, Ziteng Sun, Jae Hun Ro, Felix Yu

Language Models as Zero-Shot Planners: Extracting Actionable Knowledge for Embodied Agents

Wenlong Huang, Pieter Abbeel, Deepak Pathak, Igor Mordatch

Only Tails Matter: Average-Case Universality and Robustness in the Convex Regime

Leonardo Cunha, Gauthier Gidel, Fabian Pedregosa, Damien Scieur, Courtney Paquette

Learning Iterative Reasoning through Energy Minimization

Yilun Du, Shuang Li, Josh Tenenbaum, Igor Mordatch

Interactive Correlation Clustering with Existential Cluster Constraints

Rico Angell, Nicholas Monath, Nishant Yadav, Andrew McCallum

Building Robust Ensembles via Margin Boosting

Dinghuai Zhang, Hongyang Zhang, Aaron Courville, Yoshua Bengio, Pradeep Ravikumar, Arun Sai Suggala

Probabilistic Bilevel Coreset Selection

Xiao Zhou, Renjie Pi, Weizhong Zhang, Yong Lin, Tong Zhang

Model Agnostic Sample Reweighting for Out-of-Distribution Learning

Xiao Zhou, Yong Lin, Renjie Pi, Weizhong Zhang, Renzhe Xu, Peng Cui, Tong Zhang

Sparse Invariant Risk Minimization

Xiao Zhou, Yong Lin, Weizhong Zhang, Tong Zhang

RUMs from Head-to-Head Contests

Matteo Almanza, Flavio Chierichetti, Ravi Kumar, Alessandro Panconesi, Andrew Tomkins

A Parametric Class of Approximate Gradient Updates for Policy Optimization

Ramki Gummadi, Saurabh Kumar, Junfeng Wen, Dale Schuurmans

On Implicit Bias in Overparameterized Bilevel Optimization

Paul Vicol, Jonathan Lorraine, Fabian Pedregosa, David Duvenaud, Roger Grosse

Feature and Parameter Selection in Stochastic Linear Bandits

Ahmadreza Moradipari, Berkay Turan, Yasin Abbasi-Yadkori, Mahnoosh Alizadeh, Mohammad Ghavamzadeh

Neural Network Poisson Models for Behavioural and Neural Spike Train Data

Moein Khajehnejad, Forough Habibollahi, Richard Nock, Ehsan Arabzadeh, Peter Dayan, Amir Dezfouli

Deep Equilibrium Networks are Sensitive to Initialization Statistics

Atish Agarwala, Samuel Schoenholz

A Regret Minimization Approach to Multi-Agent Control

Udaya Ghai, Udari Madhushani, Naomi Leonard, Elad Hazan

Transformer Quality in Linear Time

Weizhe Hua, Zihang Dai, Hanxiao Liu, Quoc V. Le

Workshops

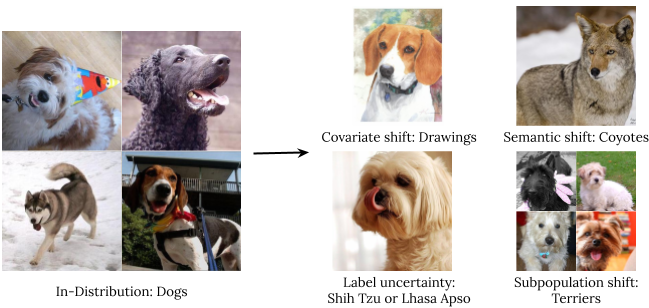

Shift Happens: Crowdsourcing Metrics and Test Datasets Beyond ImageNet

Organizing Committee includes: Roland S. Zimmermann

Invited Speakers include: Chelsea Finn, Lucas Beyer

Machine Learning for Audio Synthesis

Organizing Committee includes: Yu Zhang

Invited Speakers include: Chris Donahue

New Frontiers in Adversarial Machine Learning

Organizing Committee includes: Sanmi Koyejo

Spurious Correlations, Invariance, and Stability (SCIS)

Organizing Committee includes: Victor Veitch

DataPerf: Benchmarking Data for Data-Centric AI

Organizing Committee includes: Lora Aroyo, Peter Mattson, Praveen Paritosh

DataPerf Speakers include: Lora Aroyo, Peter Mattson, Praveen Paritosh

Invited Speakers include: Jordi Pont-Tuset

Machine Learning for Astrophysics

Invited Speakers include: Dustin Tran

Dynamic Neural Networks

Organizing Committee includes: Carlos Riquelme

Panel Chairs include: Neil Houlsby

Interpretable Machine Learning in Healthcare (IMLH)

Organizing Committee includes: Ramin Zabih

Invited Speakers include: Been Kim

Human-Machine Collaboration and Teaming

Invited Speakers include: Fernanda Viégas, Martin Wattenberg, Yuhuai (Tony) Wu

Pre-training: Perspectives, Pitfalls, and Paths Forward

Organizing Committee includes: Hugo Larochelle, Chelsea Finn

Invited Speakers include: Hanie Sedghi, Charles Sutton

Responsible Decision Making in Dynamic Environments

Invited Speakers include: Craig Boutilier

Principles of Distribution Shift (PODS)

Organizing Committee includes: Hossein Mobahi

Hardware-Aware Efficient Training (HAET)

Invited Speakers include: Tien-Ju Yang

Updatable Machine Learning

Invited Speakers include: Chelsea Finn, Nicolas Papernot

Organizing Committee includes: Ananda Theertha Suresh, Badih Ghazi, Chiyuan Zhang, Kate Donahue, Peter Kairouz, Ziteng Sun

Knowledge Retrieval and Language Models

Invited Speakers include: Fernando Diaz, Quoc Le, Kenton Lee, Ellie Pavlick

Organizing Committee includes: Urvashi Khandelwal, Chiyuan Zhang

Theory and Practice of Differential Privacy

Organizing Committee includes: Badih Ghazi, Matthew Joseph, Peter Kairouz, Om Thakkar, Thomas Steinke, Ziteng Sun

Beyond Bayes: Paths Towards Universal Reasoning Systems

Invited Speakers include: Charles Sutton

Spotlight Talk: Language Model Cascades | David Dohan, Winnie Xu, Jacob Austin, David Bieber, Raphael Gontijo Lopes, Yuhuai Wu, Henryk Michalewski, Rif A. Saurous, Jascha Sohl-Dickstein, Kevin Murphy, Charles Sutton

Safe Learning for Autonomous Driving (SL4AD)

Invited Speakers include: Chelsea Finn

*Work done while at Google.
