MADELEINE DAEPP: Welcome to Intern Insights, a Microsoft Research Podcast featuring the brilliant students who are contributing to the research and advances at Microsoft as part of the renowned internship program at Microsoft Research. I’m Dr. Madeleine Daepp, a Senior Researcher at Microsoft Research, and today I’m talking with two of my interns, Jennifer Scurrell and Alejandro Cuevas Villalba, about their work and their experience this summer at Microsoft Research. Welcome, Jennifer and Alejandro!

JENNIFER SCURRELL: Thanks.

ALEJANDRO CUEVAS: Hi. Glad to be here.

DAEPP: So, of course, I know both of you, but many of the listeners do not. So can you tell us a little bit about yourselves? Let’s start with you, Jennifer.

SCURRELL: Yeah, sure. So I’m from Switzerland. I live in Zurich. I’m a PhD candidate at the Center for Security Studies at ETH Zurich. And I’m actually a political scientist by training. So I did my bachelor’s and my master’s at the University of Zurich in political science, and now I’m actually entering my last year of PhD-ing at ETH. And what I look at in my dissertation is how AI-enhanced bots influence political opinion formation online.

DAEPP: Awesome. Well, it’s been so great to have you, especially with that background, and we’ll talk a little bit about how relevant that is to some of the work all of us are doing this summer. Alejandro, how about you?

CUEVAS: Hi. Thank you, Madeleine. On my end, my name is Alejandro or Alejandro for our Latino listeners! I come from an infamous area of the world called the Triple Border, specifically Ciudad del Este, Paraguay. I grew up there all my life and came to the States then to study. I’m currently at Carnegie Mellon University studying societal computing. I’m going into my fifth year, and I would say I’m an interdisciplinary researcher by force—or by chance! It’s something that I happened to end up after all the work that I did in undergrad and then subsequently in PhD.

DAEPP: Can you tell me a little bit about what societal computing is?

CUEVAS: Yeah. Broadly, the way that I would define it and the way that I approach it is essentially how computer science technologies shape or impact society. And that is very broad but indeed is a very broad field.

DAEPP: Right. And it’s extremely relevant right now as we’re seeing society being reshaped by some of these new technologies. So I think a sort of next question that I have for both of you is, you know, there are so many things you could have done this summer, and I’m really curious how you ended up at Microsoft Research. So maybe, Alejandro, why don’t you start this time?

CUEVAS: Yeah, broadly, the story that I’ve told a few times already to you all, but I’m happy to share over and over again, is that I interned here in 2016 as a software engineer, and I happened to attend a research talk by MSR (Microsoft Research) at the time, and I was a sophomore in an undergrad at the time, and I thought I didn’t really know much about research, honestly. I didn’t know what a PhD really entailed. And so it was my first time sort of seeing this whole nother line of work that I could be doing. And even though, you know, I liked coding in C# at the time, I think the … seeing how researchers in CS were working on mosquitos for whatever reason at Microsoft of all places was just eye opening. And so after that, I started doing research at school, and two of the mentors that I had in undergrad, they had met at MSR in an internship a little while ago, and they both spoke very highly of it. They both had a great time. And it always seemed like whenever I went to conferences or saw papers that I liked, I kept stumbling upon researchers that I admired and that, you know, were doing super impactful work, and it almost seemed like a rite of passage that all these people went through. And, you know, I said, I want that. I want to experience that, as well. So I waited a little bit for the pandemic to dwindle down and not have the remote work again because I knew I wanted to be here in person. And then once that happened, I tried my best to, to, to get here. And just yesterday, I shared with my colleague Eva Brown, who is another intern in our group, and I was telling Eva and Jennifer, who’s here in our talk, as well, that I had to cold … you know, I went ahead and cold emailed Glen Weyl, who’s the director of the Plural Technology Collaboratory, and I said, hey, I’m super interested in this job position. I really want to be there. I have these ideas, and I hope you will consider my application. I really wanted to be here this summer.

DAEPP: That’s so fascinating, and I can really empathize with that feeling of sort of learning about Microsoft Research and then starting to see it everywhere. Like you see on conferences. There’s often, you know, a coauthor from Microsoft Research. You look at people’s backstories. And you start to realize sort of how many people across computer science, but also computational social science, also other fields, really sort of have some time that they spend in this sort of magical place. So, Jennifer, what about you?

SCURRELL: Yes, so I can only second that. So because I’m just entering my last PhD year, I have to decide at some point where I want to go. Do I want to stay in academia, or do I want to go to industry? And I said to myself, OK, to really find out what would suit me best, I really … I need to do an internship in, in the industry and especially, as well, because what we just discussed, right? You look at papers and see all these impactful papers with a lot of MSR researchers as authors. And so one day, my PhD colleague in my lab actually sent me the link to the internship advertisement. I was like, OK, this is just perfect. First of all, it’s Microsoft Research, which is one of the leading research facilities in tech industry. And second of all, the description was just … it was just matching. And I tried my best, and, yeah, now I’m here and I’m so happy that it all worked out!

DAEPP: That’s so funny. So just to give you both a little bit of context about some of those internship postings, you know, on our end, we sort of had this set of projects and areas that we were so excited about in the fall of 2022, and then of course in the spring of 2023, the world starts to change here at Microsoft Research, right. Like I start using ChatGPT every day and telling everybody in my family about it. In February, we get our first glimpse of GPT-4, right, through Bing Chat. And then I was sort of walking around and every day somebody is finding some new result about GPT-4 and theory of mind, or GPT-4 and discovery of causal graphs, or, you know, even that it can code 3D video games. And so we started to think, oh, there’s really an opportunity here, and we need to think about what our research is, what’s sort of most impactful. And so especially for a computational social scientist like me, it was sort of like there’s a new microscope, right. There’s this brand-new tool. What can we do? And so, for Alejandro, I think you applied to this sort of like web3 cryptocurrency project with Glen Weyl, and then you came in and I told you I wanted you to work with large language models. Tell us a little bit about how you brought a growth mindset to that context.

CUEVAS: Yeah, it’s really … it’s really funny that you’re mentioning that. I remember going through the interviews at the time and I kept wondering, why are they asking me so much about large language models? Because from my conception, I still thought that I was coming to work on web3 and DAOs—decentralized autonomous organizations—and I had my whole pitch around web3. But then all the interview questions were around large language models, but luckily, I mean, I spend a good amount of time observing internet phenomena, and it was hard to miss the LLM sort of rise, and I was happy that I could answer all those questions. But indeed, I came here, and it was a big emphasis on figuring out a project that was quite different from what I had originally envisioned. But I didn’t really mind. I mean, I wanted to do this internship either way, and throughout my research career, I had the privilege of always being able to tackle different types of problems. I think, just in undergrad, I worked all the way from usable security on one side and all the way to systems security on the other side, and so jumping headfirst into a new problem was just an average PhD day. You know, it’s just another project that needs to be thought through and scoped out and carried out. And I don’t think it ever detracted me too much that it was something that I was not super familiar. Of course, it’s always difficult to venture into a domain that you have a lot to catch up. But it’s also a fun and rewarding experience. It’s a body of literature that I got the opportunity to explore around really talented individuals. And a lot of the literature search that I would have done on my own was facilitated by all these people that were already thinking about these things. So it was a very fast-paced opportunity to acquire a whole new skill set that I think would be relevant to the rest of my work. 
And so when you mention growth mindset, I think it was just really an opportunity to fully come in and absorb an area that I know it’s going to be impactful—I know it’s going to have benefits on other areas of my work—and, you know, let’s go at it!

DAEPP: Can I just jump in there? I think something that’s so interesting in what you just said was, you know, sometimes when you’re doing your PhD, you’re really sort of doing a literature review, right. You’re really sort of out there searching for article after article, reading textbook chapters, trying to get up to speed. Maybe you get to take a class. But here what I saw you do was you sort of went door knocking, right. You said, oh, you know, here’s the world’s expert in this area. Or, hey, I need to do something conversationally interactive—who do I go talk to? So can you talk a little bit more about sort of what it looked like to, to ask other researchers, ask other PhD students, ask other interns for help?

CUEVAS: Yeah. Absolutely. I think one of my favorite things, which I will definitely miss outside, is in meetings, there’s this culture of sharing links and references as the meeting is going on, and all of a sudden, you end the meeting and you have this body of work in your chat log that you can go and, you know, sift through. And people did that so much at the beginning and throughout the internship that we would be talking about some topic and there will be three references on the chat, you know, regarding that topic, three papers to read. And I think that was really, you know, it was eye opening in the sense that I didn’t have to, you know, go to Google Scholar and figure out where to find papers. I … there was a network of people around me already that had all this expertise. And so I think it played a phenomenal role from the get-go of facilitating those connections. I think within my first week or two, you had already introduced me to two researchers that were working on areas that I thought were interesting and also that were relevant to the work that I wanted to do. And so having sort of that, from the beginning, really made it easy to then feel comfortable and, you know, empowered to just reach out. You know, that’s something that, from the get-go, people would tell me, you know, just reach out. They’re around the corner. Just go and have a meeting with them. And so, I figured, OK, you know, let’s do it. Why not?

DAEPP: Jennifer, that’s something that you did a lot of as well. You reached out, you sort of asked people for coffee chats. Can you talk a little bit about that experience on your end?

SCURRELL: Exactly. I just wanted to say, can I jump on this? So it was amazing. The first week, we had our initial meeting, and you said, hey, this is … this summer is an opportunity—reach out to people. Network. I was like, OK, uh, sounds easier than it might be, question mark? But it turned out to be so easy! It was really, you know, you just drop an email, you drop a, a message in Teams: hey, I’m interested in your work. I want to learn more. Can we go for a coffee? And next day, I was actually really with this, you know, super-famous researcher drinking coffee in the Building 99 atrium! And I learned so much in 20 minutes I could not read, I don’t know, 10 papers about the issue I wanted to discuss with this person. It’s really amazing how especially also open people are to, yeah, just meet and chat. And that was a really great experience, and I really enjoyed it a lot.

DAEPP: Alejandro, you also had a researcher that you wanted to get coffee with. Tell us a little bit about seeing that researcher in the halls versus how you finally decided to ask them to meet?

CUEVAS: Oh, that was so nerve wracking. This was Cormac Herley, who was just a few doors down from my office, and I would see him every day almost at the kitchen when I would go get coffee and I would look at him; he would look at me. We would do, you know, like a passive nod at the beginning. And eventually—I think only eight weeks in—I finally sort of decided to say, “Hi, hello, my name is Alejandro,” and I completely butchered actually that introduction because I was so nervous. I think my Apple Watch, you know, gave me the “high heart rate” notification. And I think I even said, “Hi, I’m, I’m Cormac!” or something like that. And he was so confused! And I just said, you know, like, I really like this work, and this work, and this work. And he was like, why are you citing this work from 2009? And I was like, it’s just so good, you know? And we happened to sit down right then and there, and I think we chatted for about an hour. Again, just being able to see somebody that you, you admire their work and you, sort of, admire their ideas and then sit down with them, have coffee, interact, chat, and see sort of how they’re thinking about future research problems … I think that was a very exciting thing, and I think it’s one of the things that was in my bucket list, and then I definitely crossed off from the summer!

DAEPP: I think this really speaks to the power also of the in-person internship. This is my first year as an in-person mentor, and I’ve had wonderful experiences with remote intern groups, but this has been really special because I think we’ve been able to, you know, have these sort of in-person meetings and also a very intense bug bash day where we were all sort of in the same room trying to resolve a problem that was very time sensitive. And so one of the things that I think makes this group a little special—although I think it’s actually true of many, many intern groups—is how incredibly interdisciplinary you all are. Like I think of you, Alejandro, really as a computational social scientist, somebody with sort of deep computer science skills. Jennifer of course you have this training in political science. Our other intern, Eva Maxfield Brown, is in an informatics PhD program, and I am a PhD urban planner. So we have really different skill sets and perspectives that we’re bringing in. And so I was wondering maybe, Jennifer, for you, if you could talk a little bit about, sort of, that teamwork experience and how we started to build these collaborations across disciplines.

SCURRELL: Sure. So I’m a political scientist, and traditionally our dissertations look sometimes very different. So I do, for example, I write a monograph. So that means not really collaborating with other people on papers. I’m, with my dissertation, I’m kind of sometimes very alone. And so, one of the goals this summer was really to explore teamwork. And I will say it was so much fun, especially as you said, Madeleine, in this really, really interdisciplinary team. And maybe an anecdote … because interdisciplinary work sometimes could be difficult, right, because we’re coming from different disciplines, we do not talk the same languages. And so in the first few weeks, I had so many meetings with our colleague, fellow intern, Eva, just to discuss projects and to understand, while talking about these projects and ideas and so on, what we’re actually really meaning when we are talking about these issues. So she’s really coming from this computer-sciencey background. I’m coming from the social sciences. And we had many, many sessions before we actually started to understand each other, what we’re actually talking about!

DAEPP: Are there particular words that stand out to you as you were using them differently, like particular barriers to understanding?

SCURRELL: Maybe it was more way of thinking … yeah.

DAEPP: I think there’s definitely a strong, sort of, you know, I, I think some folks come from this very sort of prototyping-development orientation and some folks come from this very strong research orientation. And so that cross-pollination between all of you has been quite magical because I think we’re seeing the builders do some really beautifully designed research and the researchers really think about values in building.

SCURRELL: I think it’s, it’s exactly that; that maybe it’s more the approach like the more data science-y, inductive approach versus the theoretical approach like deductive approach, and, yeah, I really have to say it was so interesting because everybody always listened to each other. I really have to say that. That was one of the things I really appreciated so much. You had so many brainstorming sessions and everybody was listening to each other. And at some point, we also started to understand each other and that was just, yeah, amazing!

DAEPP: So I, I do want to just sort of take you up just a notch and ask you a little bit about the pace that you’ve been working at. Alejandro, you set your goal of building an entire large language model-based prototype, deploying it on Azure, getting it out as a user study with over 450 Microsoft employees, and then now you’re writing up, you know, analyzing and writing up the results. So that’s a lot in three months! And, Jennifer, you are, you know, doing interviews with really sort of elite political stakeholders about artificial intelligence and its impacts on political opinion formation. And so you are running from interview to interview. You know, again, you are doing an entire study about a major timely topic in the span of three months while also making sure that Alejandro has good survey skills and that Eva has a clear theoretical approach, and so I wanted to ask, I think maybe Alejandro first, how does this pace compare? How do you like this pace of work?

CUEVAS: I think in general, the way that I approach work at least is through sprints, and I think that this summer was definitely a moment to sprint. I thought, you know, based on everything that I’ve said so far, it was a summer that I came super-excited whatever the work was going to be and whatever skill sets I needed to bring to the table. I think it was a summer that I wanted to sprint. And it started off, you know, already sprinting. I had the first week of work, I actually had a deadline that I submitted a paper for just, just before we started. And … or …

DAEPP: So that was an external paper? That was not here …

CUEVAS: Right. It was a paper that I … it was for my PhD. It just happened to be the deadline right in the middle of like onboarding, you know, the first week, and I remember, you know, coming already off that and sort of hit the ground running. I was already in that, in that state. And I wanted to do that as a challenge for myself. I wanted to take this opportunity to grow and, you know, learn all these skills that, that were in front of me. And I think the pace was very fast. I think I played a big role dictating that pace. But at the same time, the people around me were able to also match that pace. And I think in particular, a lot of the work that we did together in July felt, you know, truly collaborative and truly exciting because we were able to not only keep each other accountable, but it’s the same sort of feeling that you have when you’re running, you know, next to somebody and that you don’t want to give up because the other person is also, you know, running really hard and you’re keeping each other sort of like motivated. And I think that’s something that I got from the team as a whole. You know, there were people around me that were really excited to do and accomplish, you know, great projects during the summer. You know, looking back, it was an ambitious undertaking, and I think it was … I’m glad that it worked out and that it went well and that we had the results that we had. But I want to also lift up the fact that, you know, through the process, you played a big role in sort of like encouraging that development. You know, a lot of things that you would tell me in July were, you know, you’re working hard; you’ll be judged according to your hard work, not your results. And I think having that peace of mind was also just like, you know, it was a, a good thing to have in the back of my mind. Not only that, the reassurance of it’s not results driven, but the fact itself that you had visibility on the hard work that I was putting. 
And that made it more encouraging because I knew that you acknowledged and you recognized that, and that played a big role in me wanting to keep putting that effort in.

DAEPP: I was a little worried about how hard you were working! I was like, please have boundaries, you know—go out, play in Seattle! [LAUGHS] All of you, especially, you know, the two of you and Eva Maxfield Brown, but also all of the interns that we get, are so talented and so ambitious and so driven that the summer can be just really exciting. It’s just so magical to be around that energy. Thank you for bringing that. Jennifer, what about you? What was your experience of pace?

SCURRELL: Yeah, OK. I really have to say … so I never worked in industry before, so this pace was really something different than I was [LAUGHS] used to because in my PhD, as I said, I do a monograph. I’m, I’m responsible for my own project and my own time, so I can really take time to, to think about things almost as long as I want, right. I mean, OK, my supervisor has some say there, but … and I was aware that coming here, doing this internship, there will be a different pace, but I never expected it to be so fast. But I must say I really liked it, and I was never scared or stressed or anything because it’s exactly what also you said, Alejandro. I knew people have my back, and it was always a team effort, and everybody was always so passionate and motivating and it really … I felt so at ease even though there were so many stressful situations, but it was always so much fun because we went through it together.

DAEPP: That’s so nice to hear. So, you know, you sort of compared it to your PhD, but Alejandro, you also have a startup. Can you tell us a little bit about that and also how that informs some of the insights you brought to us about pace and intensity and stress and emotional management from that world?

CUEVAS: So Redoux is a fragrance company, and it’s something a little bit different from what I do as a PhD—at first glance, I would say. I’ve been able to in this past four and five years to find a lot of overlap within the, the work that I do at Redoux as well as in my PhD work. We talk a lot about interdisciplinarity in the research that we do within our academic world. But at times, I like to extend interdisciplinarity as well to other aspects just that are beyond academia, as well. Those could include your lived experiences. Those inform the type of work that you do, as well. Those definitely inform mine, and the work that I do at Redoux informs the way that I think about problems, as well. It informs the way that I think about or approach creativity. It helps me learn about storytelling, as well. It helps me learn about what another side of the world cares about or a whole different segment of people or set of problems. What I derive from, from these experiences is that there’s a hard problem, there’s a project, there’s scope, and there is an effort to do your best in whatever realm that looks like. And when I say, you know, there’s that part of how to think about things, but even here at Microsoft, there was a talk about using machine learning to discover new scents and new fragrances, and that is work that is directly related to the work that I care about in the Redoux world. So I think at the end of the day, I keep finding these overlaps between these two worlds, and I take the opportunity to merge them and inform the way that I approach problems as much as I can. And, yeah, it’s been, it’s been rewarding. It’s been tough to handle, but it’s also been, I think, a very formative part of how, I think, I communicate and how I think about broadly creativity and, and my work.

DAEPP: Yeah. I do want to lift up … I love this sort of insight about how I think all, all of you as interns really bring sort of these multifaceted identities into, you know, partly into the work, partly into the team, but also into your social lives. So, Jennifer, for you, tell us a little bit about how you’ve been spending your weekends.

SCURRELL: So, yeah, funny story here. Like, I mean, we are like 10 weeks in the internship now, almost at the end, and only I think three weeks ago or so I was so proudly saying, “Hey, Madeleine, I spent last weekend … I discovered Seattle!” And you were like, wait, it’s already six weeks into your internship. What did you do all the other weekends? I was like, I went hiking! So most of the times … it was really, really amazing to grab, for example, the other interns and go drive two hours, for example, to Mount Rainier and have a wonderful hike there. Or I also went, for example, to Mount Saint Helens. There are these huge lava caves. And, yeah, I went caving and, yeah, hit my head, but everything went fine. [LAUGHS] I’m still here. And no, it’s really amazing what you can do here, especially in nature. But also on campus of course what I really appreciated always were the ice cream socials, for example. And I will really dearly miss them!

DAEPP: There were a lot of ice cream socials for the internship community this summer, and they were wonderful.

CUEVAS: Don’t forget about Puzzle Day, too, Jennifer.

SCURRELL: Oh! Oh yeah, Puzzle Day! I mean, we became fifth, right, out of 65, I think, groups? Yeah, that was really cool.

DAEPP: Wait, tell me about Puzzle Day. I wasn’t there.

CUEVAS: So Puzzle Day was this event organized by Microsoft where you put a team of very, very talented and very smart individuals together and threw really hard puzzles at them. And we managed to assemble a very good team from fellow MSR interns, and we bunkered down and went at really tough puzzles for about five hours. And as you mentioned, we came out fifth out of plenty of teams. I think we could have officially if … you know, we should have come out first or second.

SCURRELL: Yeah … [LAUGHTER]

CUEVAS: But that’s a story for another day! But that was one of the fun things, I think, at least Jennifer and I and a few other people got to do as a nice bonding experience. I think apart from that, something that I had the chance to do a lot during the summer was have friends that came and visited me and that we got to explore Seattle with people outside of town. And that was just fun in general, having somebody from, you know, another side of the country come here and discover together a new neighborhood or a new restaurant or a new store, a new museum. I think one of my favorite ones there was when Amelia, my partner, came out here, we discovered a hidden beach in the northwest side of Bellevue. It was a hidden beach access that is not marked. And I think Seattle has this little sort of mysteries or like little hidden spots around town that we got the chance to discover. And I think that was really one of my favorite things during the summer.

SCURRELL: And can I add one more thing? Not to forget about July 4th, our fellow intern Eva invited us to spend Fourth of July with her and her friends. So we also met some locals, and we had a barbecue and we saw the fireworks and that was really, really also a very nice experience.

DAEPP: I think there’s nothing that makes me happier than when I see all of you the next morning and you say, oh, we all had dinner with all of the interns last night. Because I know for me, with my graduate school sort of research experiences, those connections to other interns are the things that have lasted for, you know, that now I look for those people at conferences. I cite their papers. Those are such, such wonderful connections. And so it’s been really cool to see all of you starting to build that sort of relationship, and I’m hopeful that that will, you know, be sort of a real source of value going forward. So I am curious for both of you, based on your experience, would you recommend this internship to other folks considering Microsoft Research?

SCURRELL: I mean, yes, of course! No, definitely. It’s just so amazing with like who you can work with, what resources you have, the whole organization, all the events you can, you can attend. And it’s really … besides learning technical skills, but also I really learned a lot about myself. And I really also take a lot back to Switzerland not only for my PhD but also for my general life. I really learned a lot here.

DAEPP: Can you say what you mean by that?

SCURRELL: It’s just this open-mindedness. I really must say … so in the coffee talks, but also in … we have had so many brainstorming sessions with so many different groups and this, this respect for each other that you’re listening, you’re truly interested in discussing things with your colleagues, and you’re getting so much valuable input. We’re all in the boat together.

DAEPP: I’ve certainly also experienced this, a strong culture of respect for one another, like especially across disciplines.

SCURRELL: Yes.

DAEPP: But then it’s also … we had this session, this weekly session, called “Show Us Your New Clunky Prototypes” by the human-computer interaction team, where people would show very early-stage demos, and it wasn’t considered “demo-able” unless it broke, right. And, Alejandro, you had a, had a quite clunky prototype in exactly that sense. But that to me is exactly sort of this example of how, how much people gave actionable, useful feedback to very clunky, very early-stage ideas, really sort of thinking about what, what is this trying to be? What can this be? And emphasizing what was good about it. Is, is that your experience?

SCURRELL: Yeah. I also … I must second that it’s really this not having to worry to fail or putting something very rough out there. People still, you know, take you serious [LAUGHS] and want you to succeed. And first, I was really nervous at the beginning of my internship because, you know, all the very famous and like super-interesting and super-successful researchers you’re around, but I soon really relaxed because I understood that people really are interested in working together successfully and supporting each other. And that was really, really nice to experience.

DAEPP: Alejandro, what about you? You had looked forward to being at Microsoft Research really for years before you came here. Did it live up to your expectations?

CUEVAS: Yeah, I think I would recommend it to anybody who wants to work with a cohort of really, really talented individuals, anybody who wants to create some long-lasting friendships and professional connections, or anybody who wants to be part of a pretty solid network of alumni interns, which I think is pretty remarkable. Or anybody who wants to come and work in a fast-paced environment with a ton of really talented individuals across numerous, numerous disciplines. And if anything from that list, I think, appeals to anybody, I think Microsoft Research is a very good place to come and spend your summer at.

DAEPP: So last question for both of you. It’s a big question. What impact do you want your work to have going forward? I mean, you are both really exciting young scholars. I’m so grateful to be a part of your journey. Can you just think a little bit forward? What impact do you want to have with your work, and what are you hoping this next stage of your career looks like?

CUEVAS: Something that I’ve been thinking about a lot and wanting to venture into as I head into some of my last years of PhD is the dissemination of my work, but not necessarily in the traditional academic sense where we find other academics who find our work interesting. I want to work on problems that interest and appeal to the broader public, and on something that is communicated to and accessible to people who are outside of academia. I want to position the work that I’m doing particularly, you know, around cryptocurrencies and around what I like to call the back alleys of the internet … I want to have that work accessible and to create an appeal to a broader public. I think something that, when I was younger, I wish I knew was … or I wish I had more exposure to was what research was and what a PhD was, particularly coming from Paraguay, right, a place that, as I shared with you, had one patent a year when I was in high school. A place where I went to my first science fair when I was a senior in high school. And I want my work to be not only accessible from a place of interest but accessible in a way that it can tell a story that people find so compelling that they feel inspired to work on something similar or inspired to go out and investigate phenomena that they think are fascinating. And I want to be able to share this excitement and this joy, I guess, of working on something that interests you with the broader public, and hopefully appeal to and, again, inspire somebody who may never have thought that this is a career that they could consider or a path that is potentially open to them.

DAEPP: Yeah, I really hope that you are the first of many brilliant Paraguayan computer scientists who come here for the internship!

CUEVAS: I hope so, too.

DAEPP: Jennifer, what about you?

SCURRELL: So for me actually, because I’m a political scientist, a social scientist, working specifically on sociotechnical problems, it would be important that my research also really, you know, gives something back to society and that our work really has impact on a higher level in solving the big problems we have in this world. For example, with my work on how AI impacts political opinion formation, I hope that for the next elections I can give some input on how we could do better with AI, with technology, to solve these societal problems. And what I especially also want to say is that, as a first-generation academic, I hope to inspire people who are like me to, yeah, reach high!

DAEPP: Well, thank you so much to both of you. I could not be more grateful for such a wonderful team and collaboration. And I really do think you’re going to make major impacts on important sociotechnical problems, on showing new methods with large language models, and I’m excited to advocate for your career going forward.

[OUTRO MUSIC]

DAEPP: Thank you for a wonderful summer.

CUEVAS: Thank you so much.

SCURRELL: Thank you.

Hao Zhou is a Research Scientist with Amazon SageMaker. Before that, he worked on developing machine learning methods for fraud detection for Amazon Fraud Detector. He is passionate about applying machine learning, optimization, and generative AI techniques to various real-world problems. He holds a PhD in Electrical Engineering from Northwestern University.

Karthick Gopalswamy is an Applied Scientist with AWS. Before AWS, he worked as a scientist in Uber and Walmart Labs with a major focus on mixed integer optimization. At Uber, he focused on optimizing the public transit network with on-demand SaaS products and shared rides. At Walmart Labs, he worked on pricing and packing optimizations. Karthick has a PhD in Industrial and Systems Engineering with a minor in Operations Research from North Carolina State University. His research focuses on models and methodologies that combine operations research and machine learning. Xin Huang is a Senior Applied Scientist for Amazon SageMaker JumpStart and Amazon SageMaker built-in algorithms. He focuses on developing scalable machine learning algorithms. His research interests are in the area of natural language processing, explainable deep learning on tabular data, and robust analysis of non-parametric space-time clustering. He has published many papers in ACL, ICDM, KDD conferences, and Royal Statistical Society: Series A.

Youngsuk Park is a Sr. Applied Scientist at AWS Annapurna Labs, working on developing and training foundation models on AI accelerators. Prior to that, Dr. Park worked on R&D for Amazon Forecast in AWS AI Labs as a lead scientist. His research lies in the interplay between machine learning, foundational models, optimization, and reinforcement learning. He has published over 20 peer-reviewed papers in top venues, including ICLR, ICML, AISTATS, and KDD, with the service of organizing workshop and presenting tutorials in the area of time series and LLM training. Before joining AWS, he obtained a PhD in Electrical Engineering from Stanford University. Yida Wang is a principal scientist in the AWS AI team of Amazon. His research interest is in systems, high-performance computing, and big data analytics. He currently works on deep learning systems, with a focus on compiling and optimizing deep learning models for efficient training and inference, especially large-scale foundation models. The mission is to bridge the high-level models from various frameworks and low-level hardware platforms including CPUs, GPUs, and AI accelerators, so that different models can run in high performance on different devices.

Jun (Luke) Huan is a Principal Scientist at AWS AI Labs. Dr. Huan works on AI and Data Science. He has published more than 160 peer-reviewed papers in leading conferences and journals and has graduated 11 PhD students. He was a recipient of the NSF Faculty Early Career Development Award in 2009. Before joining AWS, he worked at Baidu Research as a distinguished scientist and the head of Baidu Big Data Laboratory. He founded StylingAI Inc., an AI start-up, and worked as the CEO and Chief Scientist in 2019–2021. Before joining the industry, he was the Charles E. and Mary Jane Spahr Professor in the EECS Department at the University of Kansas. From 2015–2018, he worked as a program director at the US NSF in charge of its big data program. Shruti Koparkar is a Senior Product Marketing Manager at AWS. She helps customers explore, evaluate, and adopt Amazon EC2 accelerated computing infrastructure for their machine learning needs.

Ramakant Joshi is an AWS Solutions Architect, specializing in the analytics and serverless domain. He has a background in software development and hybrid architectures, and is passionate about helping customers modernize their cloud architecture.

Jake Wen is a Solutions Architect at AWS, driven by a passion for Machine Learning, Natural Language Processing, and Deep Learning. He assists Enterprise customers in achieving modernization and scalable deployment in the Cloud. Beyond the tech world, Jake finds delight in skateboarding, hiking, and piloting air drones. Sonu Kumar Singh is an AWS Solutions Architect, with a specialization in analytics domain. He has been instrumental in catalyzing transformative shifts in organizations by enabling data-driven decision-making thereby fueling innovation and growth. He enjoys it when something he designed or created brings a positive impact. At AWS his intention is to help customers extract value out of AWS’s 200+ cloud services and empower them in their cloud journey.

Dariush Azimi is a Solution Architect at AWS, with specialization in Machine Learning, Natural Language Processing (NLP), and microservices architecture with Kubernetes. His mission is to empower organizations to harness the full potential of their data through comprehensive end-to-end solutions encompassing data storage, accessibility, analysis, and predictive capabilities.

John Kitaoka is a Solutions Architect at Amazon Web Services. John helps customers design and optimize AI/ML workloads on AWS to help them achieve their business goals.

Josh Famestad is a Solutions Architect at Amazon Web Services. Josh works with public sector customers to build and execute cloud based approaches to deliver on business priorities.

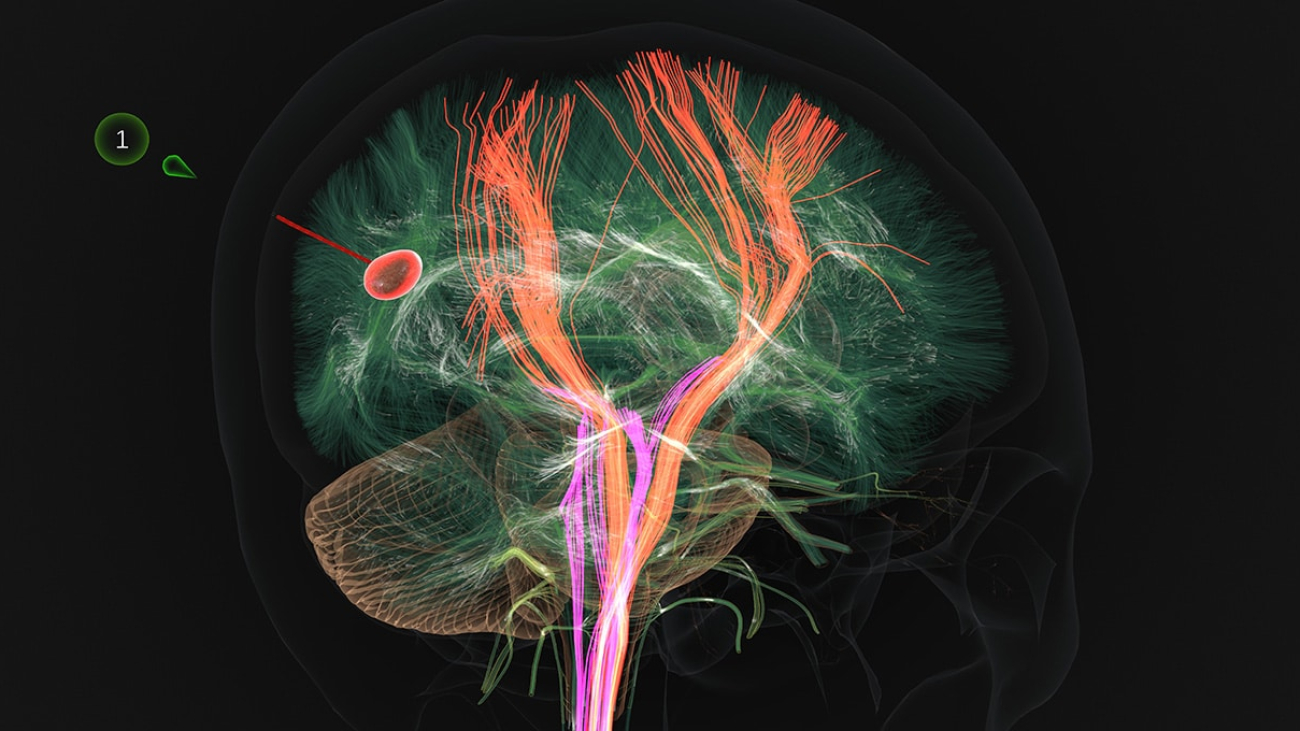

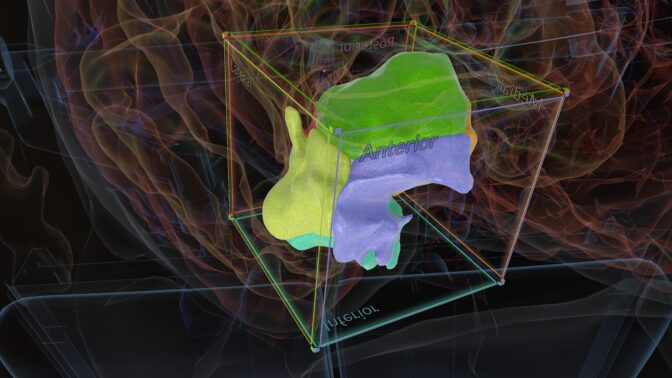

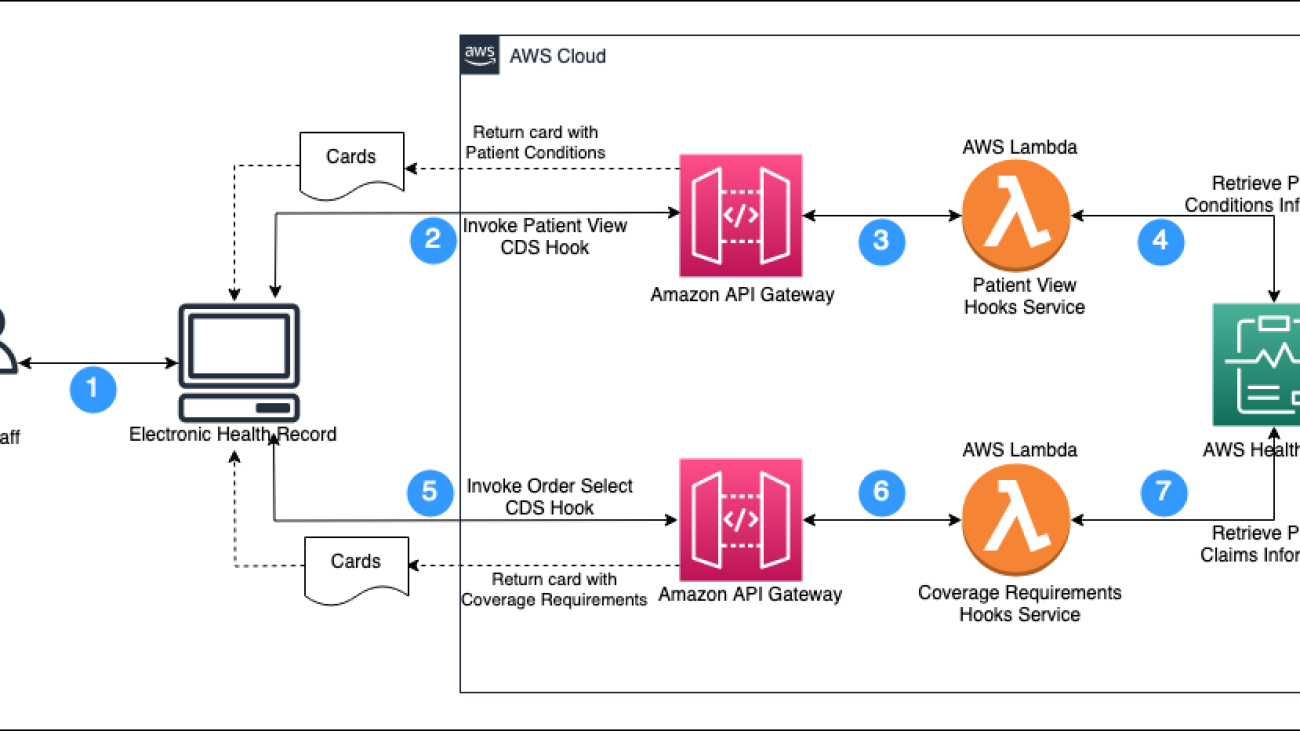

Manish Patel is a Global Partner Solutions Architect supporting Healthcare and Life Sciences at AWS. He has more than 20 years of experience building solutions for Medicare, Medicaid, Payers, Providers and Life Sciences customers. He drives go-to-market strategies along with partners to accelerate solution developments in areas such as Electronic Health Records, Medical Imaging, multi-model data solutions and Generative AI. He is passionate about using technology to transform the healthcare industry to drive better patient care outcomes.

Shravan Vurputoor is a Senior Solutions Architect at AWS. As a trusted customer advocate, he helps organizations understand best practices around advanced cloud-based architectures, and provides advice on strategies to help drive successful business outcomes across a broad set of enterprise customers through his passion for educating, training, designing, and building cloud solutions.