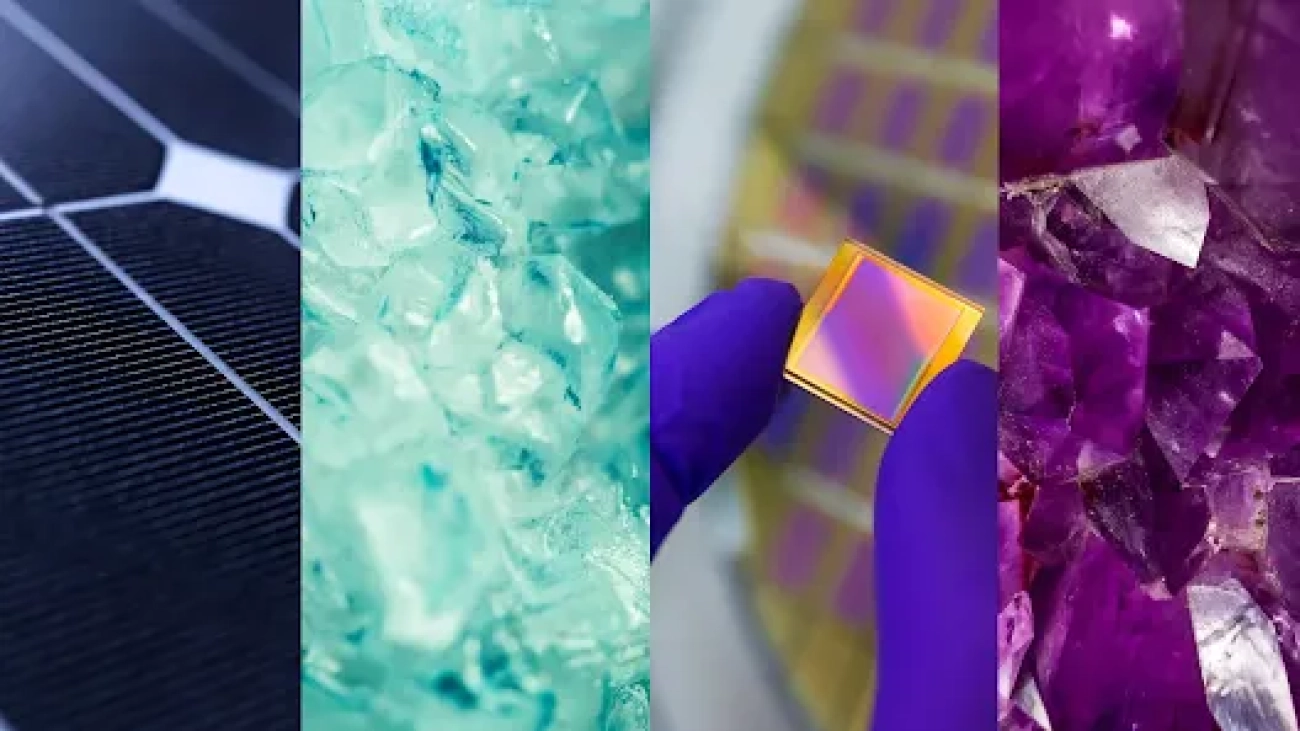

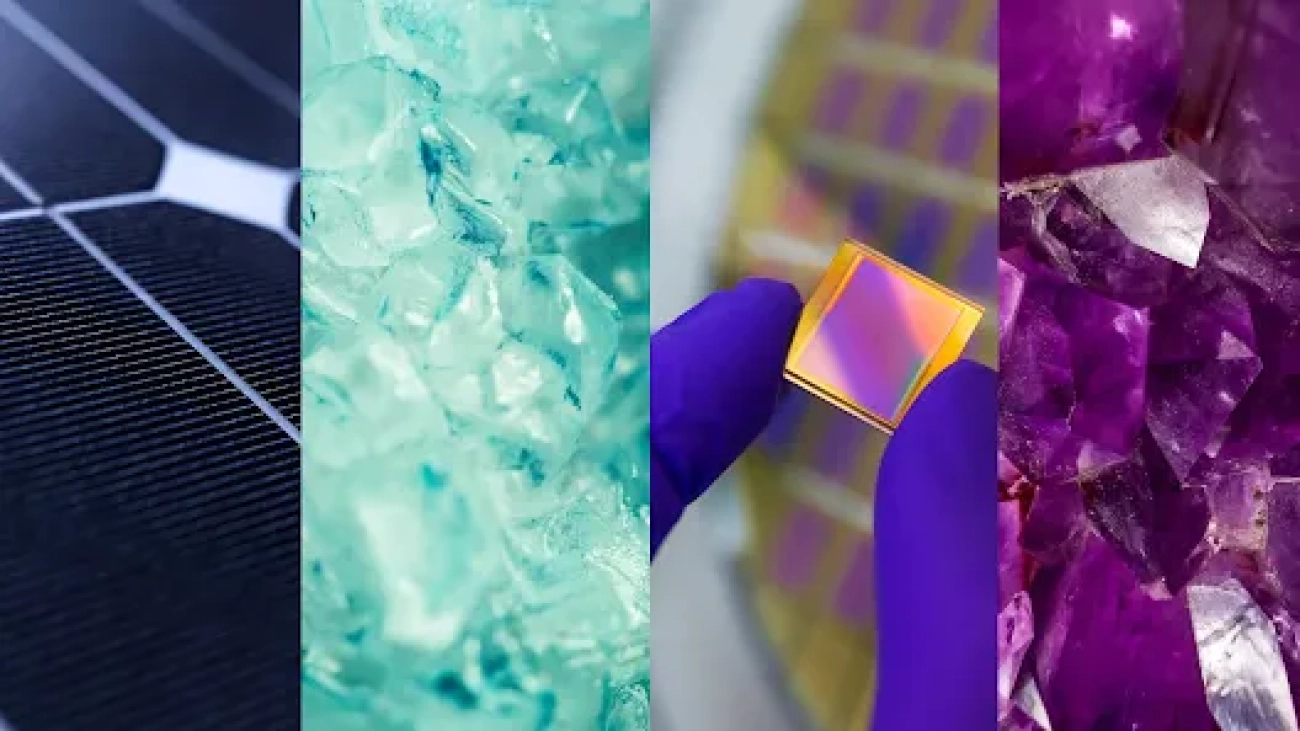

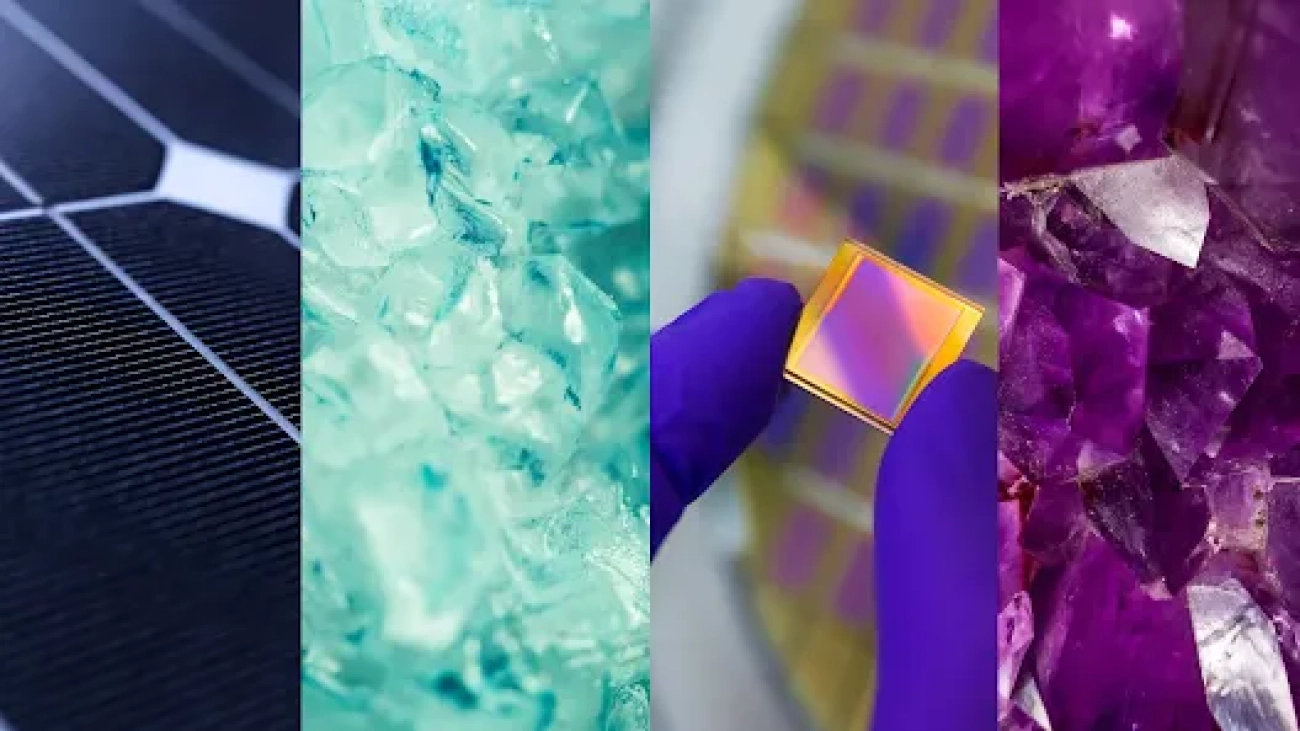

Millions of new materials discovered with deep learning

We share the discovery of 2.2 million new crystals – equivalent to nearly 800 years’ worth of knowledge. We introduce Graph Networks for Materials Exploration (GNoME), our new deep learning tool that dramatically increases the speed and efficiency of discovery by predicting the stability of new materials.


Sam Altman returns as CEO, OpenAI has a new initial board

Mira Murati as CTO, Greg Brockman returns as President. Read messages from CEO Sam Altman and board chair Bret Taylor. (OpenAI Blog)

PyTorch 2.1 Contains New Performance Features for AI Developers

We are excited to see the release of PyTorch 2.1. In this blog, we discuss the five features for which Intel made significant contributions to PyTorch 2.1:

- TorchInductor-CPU optimizations including Bfloat16 inference path for torch.compile

- CPU dynamic shape inference path for torch.compile

- C++ wrapper (prototype)

- Flash-attention-based scaled dot product algorithm for CPU

- PyTorch 2 export post-training quantization with an x86 back end through Inductor

At Intel, we are delighted to be part of the PyTorch community and appreciate the collaboration with and feedback from our colleagues at Meta* as we co-developed these features.

Let’s get started.

TorchInductor-CPU Optimizations

This feature optimizes bfloat16 inference performance for TorchInductor. The 3rd and 4th generation Intel® Xeon® Scalable processors have built-in hardware accelerators for speeding up dot-product computation with the bfloat16 data type. Figure 1 shows a code snippet of how to specify the BF16 inference path.

    import torch

    user_model = ...
    user_model.eval()

    # Autocast on CPU runs eligible operators in bfloat16
    with torch.no_grad(), torch.autocast("cpu"):
        compiled_model = torch.compile(user_model)
        y = compiled_model(x)

Figure 1. Code snippet showing the use of BF16 inference with TorchInductor

We measured the performance on three TorchInductor benchmark suites—TorchBench, Hugging Face, and TIMM—and the results are as follows in Table 1. Here we see that performance in graph mode (TorchInductor) outperforms eager mode by factors ranging from 1.25x to 2.35x.

Table 1. Bfloat16 performance geometric mean speedup in graph mode, compared with eager mode

| Bfloat16 Geometric Mean Speedup | Compiler | torchbench | huggingface | timm_models |
|---|---|---|---|---|
| Single-Socket Multithreads | inductor | 1.81x | 1.25x | 2.35x |
| Single-Core Single-Thread | inductor | 1.74x | 1.28x | 1.29x |
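The tables in this post report a geometric mean of per-model speedups. As a minimal sketch of how such a figure can be computed, the function below takes eager and compiled latencies and averages their ratios in log space. The latencies are hypothetical values chosen for illustration, not measurements from this article, and the function name is an assumption.

```python
import math


def geometric_mean_speedup(eager_latencies, compiled_latencies):
    """Geometric mean of per-model speedups (eager time / compiled time)."""
    if len(eager_latencies) != len(compiled_latencies) or not eager_latencies:
        raise ValueError("need two non-empty lists of equal length")
    # Averaging the logs is equivalent to taking the n-th root of the product
    log_sum = sum(
        math.log(eager / compiled)
        for eager, compiled in zip(eager_latencies, compiled_latencies)
    )
    return math.exp(log_sum / len(eager_latencies))


# Hypothetical per-model latencies in seconds (illustration only)
eager = [0.40, 0.90, 2.00]
compiled = [0.20, 0.90, 1.00]
speedup = geometric_mean_speedup(eager, compiled)
print(f"{speedup:.2f}x")
```

A geometric mean is commonly preferred over an arithmetic mean for ratios because a single very large speedup does not dominate the summary.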

Developers can deploy their models on 4th generation Intel Xeon processors to take advantage of Intel® Advanced Matrix Extensions (Intel® AMX) and get peak performance from torch.compile. Intel AMX has two primary components: tiles and tiled matrix multiplication (TMUL). The tiles store large amounts of data in eight two-dimensional registers, each one kilobyte in size. TMUL is an accelerator engine attached to the tiles that contains instructions to compute larger matrices in a single operation.
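To make the tile sizes concrete, here is a short arithmetic sketch. The tile count and the one-kilobyte size come from the paragraph above. The 16-row by 64-byte tile layout is an assumption based on Intel's published AMX architecture and is not stated in this article.

```python
NUM_TILES = 8        # from the text: eight tile registers
TILE_BYTES = 1024    # from the text: one kilobyte per tile
TILE_ROWS = 16       # assumption: a full tile is 16 rows of 64 bytes
BF16_BYTES = 2       # a bfloat16 value occupies two bytes

bytes_per_row = TILE_BYTES // TILE_ROWS          # bytes in one tile row
total_tile_bytes = NUM_TILES * TILE_BYTES        # capacity of all tiles
bf16_per_row = bytes_per_row // BF16_BYTES       # bfloat16 values per row
bf16_per_tile = TILE_BYTES // BF16_BYTES         # bfloat16 values per tile

print(total_tile_bytes, bf16_per_row, bf16_per_tile)
```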

CPU Dynamic Shapes Inference Path for torch.compile

Dynamic shapes is one of the key features in PyTorch 2.0. By default, PyTorch 2.0 assumes every size is static. When a recompile is triggered because a size changed, the compiler instead attempts to treat that size as dynamic, on the premise that a size that has changed once is likely to change again. Dynamic shapes support is required for popular models such as large language models (LLMs), and supporting it across a broad range of models helps users get more benefit from torch.compile. For dynamic shapes, we provide post-op fusion for conv/gemm operators and vectorized code generation for non-conv/gemm operators.

Dynamic shapes is supported by both the inductor Triton back end for CUDA* and the C++ back end for CPU. The scope covers improvements for both functionality (as measured by model passing rate) and performance (as measured by inference latency/throughput). Figure 2 shows a code snippet for the use of dynamic shape inference with TorchInductor.

    import torch

    user_model = ...

    # Training example
    compiled_model = torch.compile(user_model)
    y = compiled_model(x_size1)
    # The input size changed, so this call triggers a recompile
    y = compiled_model(x_size2)

    # Inference example
    user_model.eval()
    compiled_model = torch.compile(user_model)
    with torch.no_grad():
        y = compiled_model(x_size1)
        # The input size changed, so this call triggers a recompile
        y = compiled_model(x_size2)

Figure 2. Code snippet showing the use of dynamic shape inference with TorchInductor
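The "static first, dynamic after a size change" policy described in this section can be illustrated with a toy model. The class below is a hypothetical sketch written for this explanation. It does not use PyTorch and does not reflect TorchInductor internals. It only counts how many compilations such a policy would trigger for a sequence of input shapes.

```python
class ToyCompiler:
    """Toy model of the recompile policy (hypothetical, not PyTorch code)."""

    def __init__(self):
        self.compile_count = 0
        self.static_shape = None   # shape the current artifact was built for
        self.dynamic_dims = set()  # dimension indices treated as symbolic

    def _matches(self, shape):
        if self.static_shape is None or len(shape) != len(self.static_shape):
            return False
        return all(
            i in self.dynamic_dims or new == old
            for i, (new, old) in enumerate(zip(shape, self.static_shape))
        )

    def run(self, shape):
        """Simulate one call and return the total compilations so far."""
        if not self._matches(shape):
            if self.static_shape is not None and len(shape) == len(self.static_shape):
                # A size changed: treat the differing dimensions as dynamic
                self.dynamic_dims |= {
                    i
                    for i, (new, old) in enumerate(zip(shape, self.static_shape))
                    if new != old
                }
            else:
                self.dynamic_dims = set()
            self.static_shape = tuple(shape)
            self.compile_count += 1
        return self.compile_count


compiler = ToyCompiler()
counts = [
    compiler.run((8, 128)),   # first call: compile with static sizes
    compiler.run((8, 128)),   # same shape: reuse
    compiler.run((16, 128)),  # dim 0 changed: recompile, dim 0 now dynamic
    compiler.run((32, 128)),  # dim 0 is dynamic: reuse
]
print(counts)
```

In this toy model only the first size change costs a recompile. Later changes to the same dimension reuse the dynamic artifact, which is the behavior the section describes.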

We again measured the performance on the three TorchInductor benchmark suites—TorchBench, Hugging Face, and TIMM—and the results are in Table 2. Here we see that performance in graph mode outperforms eager mode by factors ranging from 1.15x to 1.79x.

Table 2. Dynamic shape geometric mean speedup compared with Eager mode

| Dynamic Shape Geometric Mean Speedup | Compiler | torchbench | huggingface | timm_models |
|---|---|---|---|---|
| Single-Socket Multithreads | inductor | 1.35x | 1.15x | 1.79x |
| Single-Core Single-Thread | inductor | 1.48x | 1.15x | 1.48x |

C++ Wrapper (Prototype)

The feature generates C++ code instead of Python* code to invoke the generated kernels and external kernels in TorchInductor to reduce Python overhead. It is also an intermediate step to support deployment in environments without Python.

To enable this feature, use the following configuration:

    import torch
    import torch._inductor.config as config

    config.cpp_wrapper = True

For light workloads where the overhead of the Python wrapper is more dominant, C++ wrapper demonstrates a higher performance boost ratio. We grouped the models in TorchBench, Hugging Face, and TIMM per the average inference time of one iteration and categorized them into small, medium, and large categories. Table 3 shows the geometric mean speedups achieved by the C++ wrapper in comparison to the default Python wrapper.
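A simple cost model shows why a fixed wrapper overhead matters more for light workloads. The sketch below assumes each iteration costs the kernel time plus a constant wrapper overhead. The overhead values are hypothetical and were chosen only to show the trend. They are not measurements from this article.

```python
def wrapper_speedup(kernel_time_s, python_overhead_s, cpp_overhead_s):
    """Speedup from replacing a Python wrapper with a C++ wrapper.

    Assumes the kernels themselves take the same time in both cases.
    """
    return (kernel_time_s + python_overhead_s) / (kernel_time_s + cpp_overhead_s)


# Hypothetical per-iteration wrapper overheads in seconds
PY_OVERHEAD_S = 0.002
CPP_OVERHEAD_S = 0.0005

small_model = wrapper_speedup(0.02, PY_OVERHEAD_S, CPP_OVERHEAD_S)
large_model = wrapper_speedup(2.0, PY_OVERHEAD_S, CPP_OVERHEAD_S)
print(f"small: {small_model:.3f}x, large: {large_model:.3f}x")
```

Under these assumptions the same absolute saving is a noticeable fraction of a 20 ms iteration and a negligible fraction of a 2 s iteration.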

Table 3. C++ wrapper geometric mean speedup compared with the default Python wrapper

| Data Type and Shape Mode | Configuration | Compiler | Small (t <= 0.04s) | Medium (0.04s < t <= 1.5s) | Large (t > 1.5s) |
|---|---|---|---|---|---|
| FP32 Static Shape | Single-Socket Multithreads | inductor | 1.06x | 1.01x | 1.00x |
| FP32 Static Shape | Single-Core Single-Thread | inductor | 1.13x | 1.02x | 1.01x |
| FP32 Dynamic Shape | Single-Socket Multithreads | inductor | 1.05x | 1.01x | 1.00x |
| FP32 Dynamic Shape | Single-Core Single-Thread | inductor | 1.14x | 1.02x | 1.01x |
| BF16 Static Shape | Single-Socket Multithreads | inductor | 1.09x | 1.03x | 1.04x |
| BF16 Static Shape | Single-Core Single-Thread | inductor | 1.17x | 1.04x | 1.03x |
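The small, medium, and large categories are defined by the average inference time of one iteration. A minimal sketch of that bucketing, using the thresholds from the table headers, might look like the following. The model names and timings are hypothetical placeholders.

```python
def size_category(avg_inference_time_s):
    """Bucket a model by average inference time per iteration (seconds)."""
    if avg_inference_time_s <= 0.04:
        return "small"
    if avg_inference_time_s <= 1.5:
        return "medium"
    return "large"


# Hypothetical models and timings (illustration only)
timings = {"model_a": 0.01, "model_b": 0.04, "model_c": 0.75, "model_d": 3.2}

groups = {"small": [], "medium": [], "large": []}
for name, seconds in timings.items():
    groups[size_category(seconds)].append(name)

print(groups)
```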

Flash-Attention-Based Scaled Dot Product Algorithm for CPU

Scaled dot product attention (SDPA) is one of the flagship features of PyTorch 2.0 that helps speed up transformer models. It is accelerated with optimized CUDA kernels but has lacked optimized CPU kernels. This flash-attention implementation targets both training and inference and supports both FP32 and bfloat16 data types. Users do not need to change any front-end code to benefit from this optimization: when SDPA is called, an implementation is chosen automatically, and this new implementation is among the candidates.
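The core idea behind flash attention is to process keys and values in blocks while maintaining a running maximum and a running softmax denominator, so the full score matrix is never materialized. The pure-Python sketch below illustrates that idea for a single query vector and checks it against a straightforward reference. It is an illustration of the general technique only, not the CPU kernel shipped in PyTorch, and the function names and inputs are assumptions.

```python
import math


def naive_attention(q, keys, values):
    """Reference SDPA for one query: softmax(q . K^T / sqrt(d)) V."""
    d = len(q)
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    width = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) / total for j in range(width)]


def blocked_attention(q, keys, values, block_size=2):
    """Same result, computed one key/value block at a time."""
    d = len(q)
    running_max = -math.inf
    running_sum = 0.0
    acc = [0.0] * len(values[0])
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in k_blk]
        new_max = max(running_max, max(scores))
        # Rescale earlier partial results to the new maximum
        correction = math.exp(running_max - new_max)
        weights = [math.exp(s - new_max) for s in scores]
        running_sum = running_sum * correction + sum(weights)
        acc = [
            a * correction + sum(w * v[j] for w, v in zip(weights, v_blk))
            for j, a in enumerate(acc)
        ]
        running_max = new_max
    return [a / running_sum for a in acc]


# Hypothetical inputs (illustration only)
query = [1.0, 0.5]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -1.0], [2.0, 0.3]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]

reference = naive_attention(query, keys, values)
blocked = blocked_attention(query, keys, values, block_size=2)
print(max(abs(a - b) for a, b in zip(reference, blocked)))
```

Because only one block of scores is live at a time, memory use depends on the block size rather than the sequence length, which is what makes the approach attractive for long sequences.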

We measured the SDPA-related models in Hugging Face, and the optimization proved effective when compared with unfused SDPA. Table 4 shows the geometric mean speedups for the SDPA optimization.

Table 4. SDPA optimization performance geometric mean speedup

| SDPA Geometric Mean Speedup | Compiler | Geometric Speedup FP32 | Geometric Speedup BF16 |
|---|---|---|---|
| Single-Socket Multithreads | inductor | 1.15x, 20/20 | 1.07x, 20/20 |
| Single-Core Single-Thread | inductor | 1.02x, 20/20 | 1.04x, 20/20 |

PyTorch 2 Export Post-Training Quantization with x86 Back End through Inductor

PyTorch provides a new quantization flow in the PyTorch 2.0 export. This feature uses TorchInductor with an x86 CPU device as the back end for post-training static quantization with this new quantization flow. An example code snippet is shown in Figure 3.

    import copy

    import torch
    import torch._dynamo as torchdynamo
    import torch.ao.quantization.quantizer.x86_inductor_quantizer as xiq
    from torch.ao.quantization.quantize_pt2e import convert_pt2e, prepare_pt2e

    model = ...
    example_inputs = ...  # tuple of example inputs; not defined in the original snippet
    model.eval()

    with torch.no_grad():
        # Step 1: Trace the model into an FX graph of flattened ATen operators
        exported_graph_module, guards = torchdynamo.export(
            model,
            *copy.deepcopy(example_inputs),
            aten_graph=True,
        )

        # Step 2: Insert observers or fake quantize modules
        quantizer = xiq.X86InductorQuantizer()
        operator_config = xiq.get_default_x86_inductor_quantization_config()
        quantizer.set_global(operator_config)
        prepared_graph_module = prepare_pt2e(exported_graph_module, quantizer)

        # Run calibration here by passing representative inputs through prepared_graph_module

        # Step 3: Quantize the model
        convert_graph_module = convert_pt2e(prepared_graph_module)

        # Step 4: Lower the quantized model into the back end
        compile_model = torch.compile(convert_graph_module)

Figure 3. Code snippet showing the use of Inductor as back end for PyTorch 2 export post-training quantization
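To show what the observe, calibrate, and quantize steps accomplish numerically, here is a pure-Python sketch of affine (scale and zero-point) quantization to unsigned 8-bit integers. It illustrates the general technique only. The exact observers and quantization settings used by X86InductorQuantizer are not described in this article, so the function names, range, and data below are assumptions.

```python
def calibrate(samples):
    """Observer step: record the value range seen during calibration."""
    return min(samples), max(samples)


def quantization_params(min_val, max_val, qmin=0, qmax=255):
    """Derive scale and zero point from an observed range."""
    # Extend the range to include zero so 0.0 is exactly representable
    min_val, max_val = min(min_val, 0.0), max(max_val, 0.0)
    scale = (max_val - min_val) / (qmax - qmin) or 1.0
    zero_point = int(round(qmin - min_val / scale))
    return scale, max(qmin, min(qmax, zero_point))


def quantize(x, scale, zero_point, qmin=0, qmax=255):
    """Map a float to an integer code, clamping to the valid range."""
    return max(qmin, min(qmax, int(round(x / scale)) + zero_point))


def dequantize(q, scale, zero_point):
    """Map an integer code back to an approximate float."""
    return (q - zero_point) * scale


# Hypothetical calibration data (illustration only)
calibration_data = [-1.0, 0.0, 0.5, 3.0]
scale, zero_point = quantization_params(*calibrate(calibration_data))
round_trip = [dequantize(quantize(x, scale, zero_point), scale, zero_point)
              for x in calibration_data]
print(scale, zero_point, round_trip)
```

Within the calibrated range, the round-trip error of each value is bounded by half the scale, which is why representative calibration data matters for accuracy.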

All convolutional neural network (CNN) models from the TorchBench test suite were measured, and the quantized path proved effective when compared with the Inductor FP32 inference path. Performance metrics are shown in Table 5.

| Compiler | Geometric Speedup | Geometric Related Accuracy Loss |
|---|---|---|
| inductor | 3.25x, 12/12 | 0.44%, 12/12 |

Next Steps

Get the Software

Try out PyTorch 2.1 and realize the performance benefits for yourself from these features contributed by Intel.

We encourage you to check out Intel’s other AI Tools and framework optimizations and learn about the open, standards-based oneAPI multiarchitecture, multivendor programming model that forms the foundation of Intel’s AI software portfolio.

For more details about the 4th generation Intel Xeon Scalable processor, visit the AI platform where you can learn how Intel is empowering developers to run high-performance, efficient end-to-end AI pipelines.

Product and Performance Information

1 Amazon EC2* m7i.16xlarge: 1-node, Intel Xeon Platinum 8488C processor with 256 GB memory (1 x 256 GB DDR5 4800 MT/s), microcode 0x2b000461, hyperthreading on, turbo on, Ubuntu* 22.04.3 LTS, kernel 6.2.0-1011-aws, GCC* 11.3.0, Amazon Elastic Block Store 200 GB, BIOS Amazon EC2 1.0 10/16/2017; Software: PyTorch 2.1.0_rc4, Intel® oneAPI Deep Neural Network Library (oneDNN) version 3.1.1, TorchBench, TorchVision, TorchText, TorchAudio, TorchData, TorchDynamo Benchmarks, tested by Intel on 9/12/2023.

2 Amazon EC2 c6i.16xlarge: 1-node, Intel Xeon Platinum 8375C processor with 128 GB memory (1 x 128 GB DDR4 3200 MT/s), microcode 0xd0003a5, hyperthreading on, turbo on, Ubuntu 22.04.2 LTS, kernel 6.2.0-1011-aws, GCC 11.3.0, Amazon Elastic Block Store 200 GB, BIOS Amazon EC2 1.0 10/16/2017; Software: PyTorch 2.1.0_rc4, oneDNN version 3.1.1, TorchBench, TorchVision, TorchText, TorchAudio, TorchData, TorchDynamo Benchmarks, TorchBench cpu userbenchmark, tested by Intel on 9/12/2023.

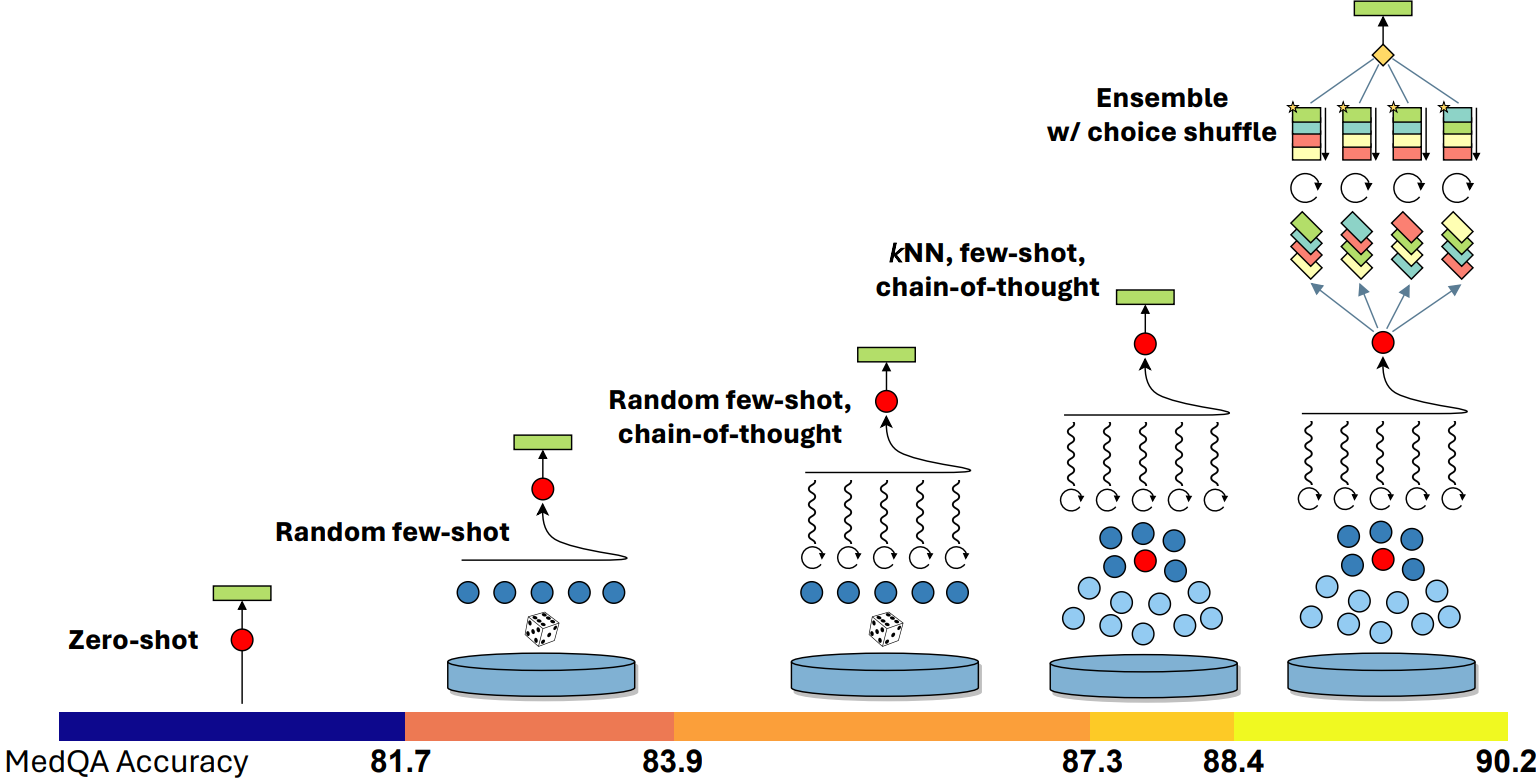

The Power of Prompting

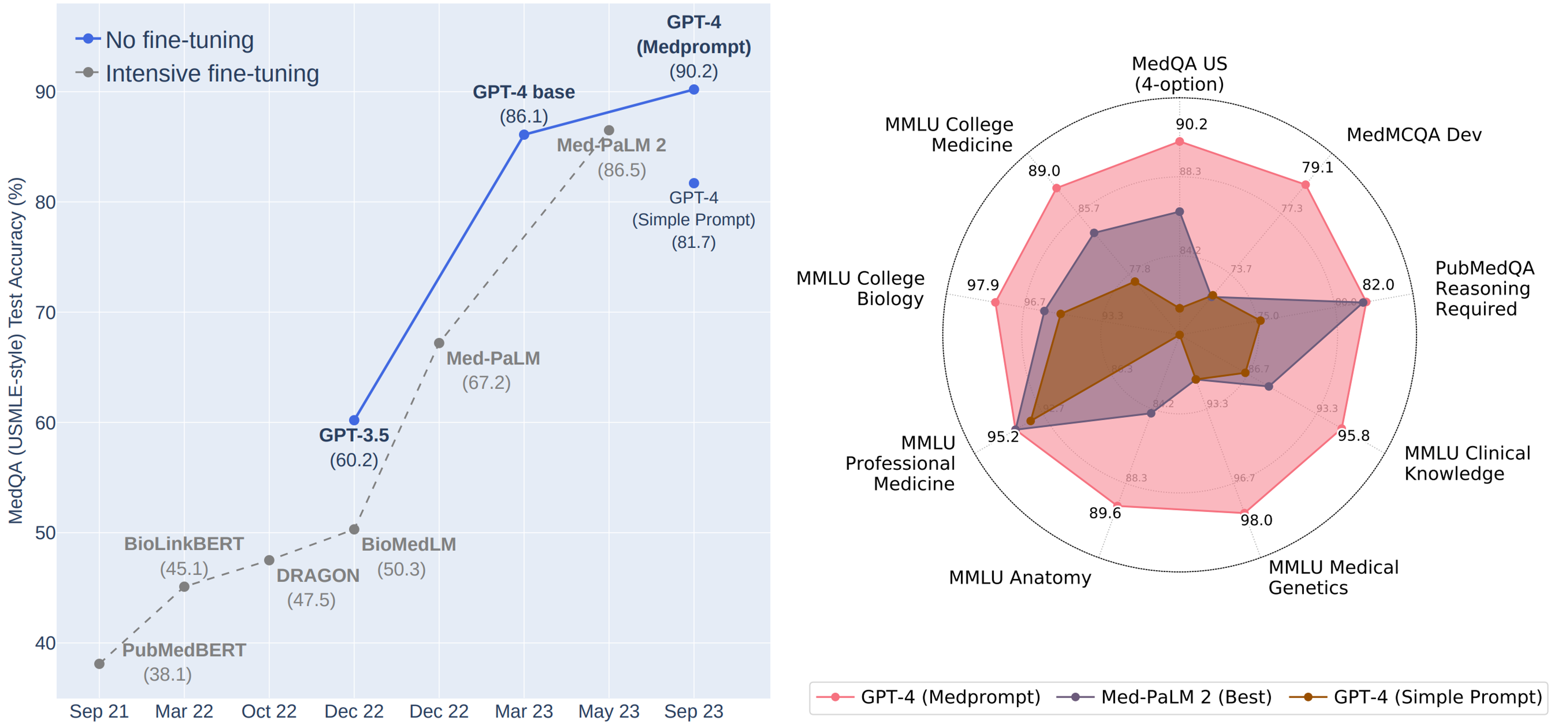

Today, we published an exploration of the power of prompting strategies that demonstrates how the generalist GPT-4 model can perform as a specialist on medical challenge problem benchmarks. The study shows GPT-4’s ability to outperform a leading model that was fine-tuned specifically for medical applications, on the same benchmarks and by a significant margin. These results join other recent studies that show how prompting strategies alone can be effective in evoking this kind of domain-specific expertise from generalist foundation models.

During early evaluations of the capabilities of GPT-4, we were excited to see glimmers of general problem-solving skills, with surprising polymathic capabilities of abstraction, generalization, and composition—including the ability to weave together concepts across disciplines. Beyond these general reasoning powers, we discovered that GPT-4 could be steered via prompting to serve as a domain-specific specialist in numerous areas. Previously, eliciting these capabilities required fine-tuning the language models with specially curated data to achieve top performance in specific domains. This poses the question of whether more extensive training of generalist foundation models might reduce the need for fine-tuning.

In a study shared in March, we demonstrated how very simple prompting strategies revealed GPT-4’s strengths in medical knowledge without special fine-tuning. The results showed how the “out-of-the-box” model could ace a battery of medical challenge problems with basic prompts. In our more recent study, we show how the composition of several prompting strategies into a method that we refer to as “Medprompt” can efficiently steer GPT-4 to achieve top performance. In particular, we find that GPT-4 with Medprompt:

- Surpasses 90% on MedQA dataset for the first time

- Achieves top reported results on all nine benchmark datasets in the MultiMedQA suite

- Reduces error rate on MedQA by 27% over that reported by MedPaLM 2
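A relative error-rate reduction such as the 27% figure above is computed from error rates rather than accuracies. The sketch below shows the arithmetic. The two accuracy values are assumptions chosen for illustration because this post does not state them, so the result should be read as an example of the calculation, not as a reproduction of the study's numbers.

```python
def relative_error_reduction(baseline_accuracy, new_accuracy):
    """Fraction by which the error rate shrinks relative to the baseline."""
    baseline_error = 1.0 - baseline_accuracy
    new_error = 1.0 - new_accuracy
    return (baseline_error - new_error) / baseline_error


# Assumed accuracies for illustration; not stated in this post
reduction = relative_error_reduction(baseline_accuracy=0.865, new_accuracy=0.902)
print(f"{reduction:.1%}")
```

Note that a gain of a few accuracy points near the top of the scale corresponds to a much larger relative drop in errors, because the remaining error budget is small.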

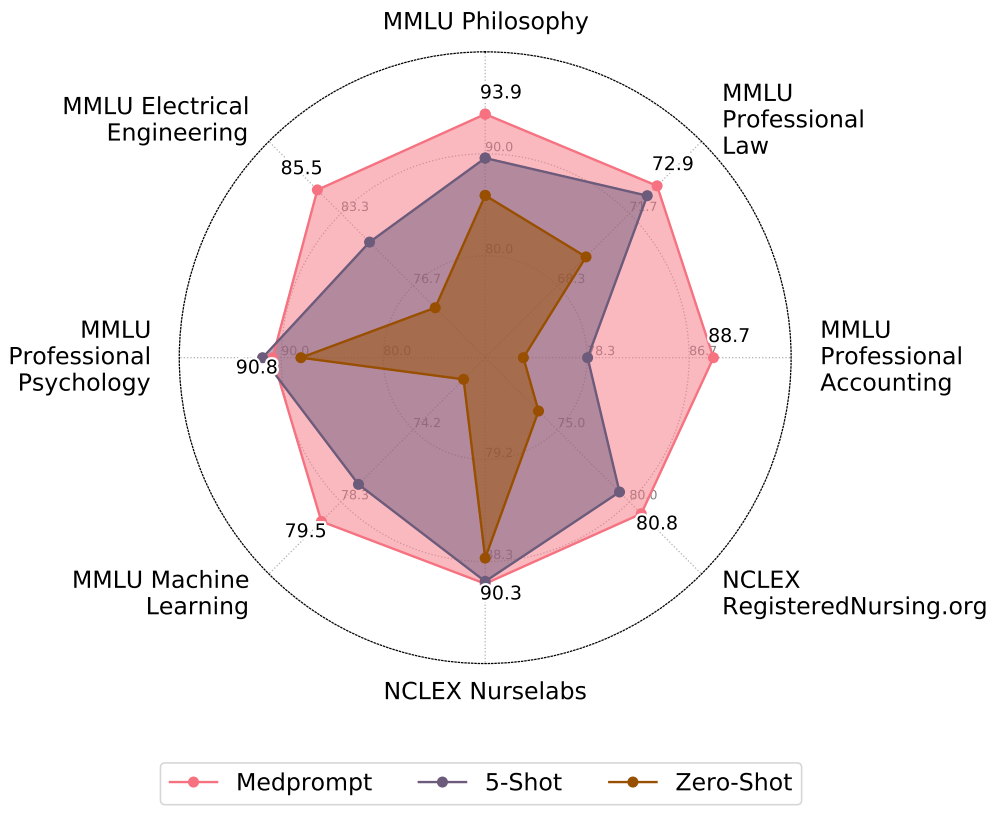

Many AI practitioners assume that specialty-centric fine-tuning is required to extend generalist foundation models to perform well on specific domains. While fine-tuning can boost performance, the process can be expensive. Fine-tuning often requires experts or professionally labeled datasets (e.g., via top clinicians in the MedPaLM project) and then computing model parameter updates. The process can be resource-intensive and cost-prohibitive, making the approach a difficult challenge for many small and medium-sized organizations. The Medprompt study shows the value of more deeply exploring prompting possibilities for transforming generalist models into specialists and extending the benefits of these models to new domains and applications. In an intriguing finding, the prompting methods we present appear to be valuable, without any domain-specific updates to the prompting strategy, across professional competency exams in a diversity of domains, including electrical engineering, machine learning, philosophy, accounting, law, and psychology.

At Microsoft, we’ve been working on the best ways to harness the latest advances in large language models across our products and services while keeping a careful focus on understanding and addressing potential issues with the reliability, safety, and usability of applications. It’s been inspirational to see all the creativity, and the careful integration and testing of prototypes, as we continue the journey to share new AI developments with our partners and customers.

The post The Power of Prompting appeared first on Microsoft Research.