Amazon Personalize now enables you to optimize personalized recommendations for a business metric of your choice, in addition to improving relevance of recommendations for your users. You can define a business metric such as revenue, profit margin, video watch time, or any other numerical attribute of your item catalog to optimize your recommendations. Amazon Personalize automatically learns what is relevant to your users, considers the business metric you’ve defined, and recommends the products or content to your users that benefit your overall business goals. Configuring an additional objective is easy. You select any numerical column in your catalog when creating a new solution in Amazon Personalize via the AWS Management Console or the API, and you’re ready to go.

Amazon Personalize enables you to easily add real-time personalized recommendations to your applications without requiring any ML expertise. With Amazon Personalize, you pay for what you use, with no minimum fees or upfront commitments. You can get started with a simple three-step process, which takes only a few clicks on the console or a few simple API calls. First, point Amazon Personalize to your user data, catalog data, and activity stream of views, clicks, purchases, and so on, in Amazon Simple Storage Service (Amazon S3) or upload using an API call. Second, either via the console or an API call, train a custom, private recommendation model for your data (CreateSolution). Third, retrieve personalized recommendations for any user by creating a campaign and using the GetRecommendations API.

The rest of this post walks you through the suggested best practices for generating recommendations for your business in greater detail.

Streaming movie service use case

In this post, we propose a fictitious streaming movie service, and as part of the service we provide movie recommendations using movie reviews from the MovieLens database. We assume the streaming service’s agreement with content providers requires royalties every time a movie is viewed. For our use case, we assume royalties range from $0.00 to $0.10 per title. All things being equal, the streaming service wants to provide recommendations for titles that the subscriber will enjoy, but minimize costs by recommending titles with lower royalty fees.

It’s important to understand that a trade-off is made when including a business objective in recommendations. Placing too much weight on the objective can lead to a loss of opportunities with customers as the recommendations presented become less relevant to user interests. If the objective weight doesn’t impart enough impact on recommendations, the recommendations will still be relevant but may not drive the business outcomes you aim to achieve. By testing the models in real-world environments, you can collect data on the impact the objective has on your results and balance the relevance of the recommendations with your business objective.

Movie dataset

The items dataset from MovieLens has a structure as follows.

| ITEM_ID | TITLE | ROYALTY | GENRE |

| 1 | Toy Story (1995) | 0.01 | ANIMATION|CHILDRENS|COMEDY |

| 2 | GoldenEye (1995) | 0.02 | ACTION|ADVENTURE|THRILLER |

| 3 | Four Rooms (1995) | 0.03 | THRILLER |

| 4 | Get Shorty (1995) | 0.04 | ACTION|COMEDY|DRAMA |

| 5 | Copycat (1995) | 0.05 | CRIME|DRAMA|THRILLER |

| … | … | … | … |

Amazon Personalize objective optimization requires a numerical field to be defined in the item metadata, which is used when considering your business objective. Because Amazon Personalize optimizes for the largest value in the business metric column, simply passing in the royalty amount results in the recommendations driving customers to those movies with the highest royalties. To minimize royalties, we multiply the royalty field by -1, so that the movies with the lowest royalties carry the largest values. The field then captures how much the streaming service spends in royalties to stream the movie, expressed as a negative number.

| ITEM_ID | TITLE | ROYALTY | GENRE |

| 1 | Toy Story (1995) | -0.01 | ANIMATION|CHILDRENS|COMEDY |

| 2 | GoldenEye (1995) | -0.02 | ACTION|ADVENTURE|THRILLER |

| 3 | Four Rooms (1995) | -0.03 | THRILLER |

| 4 | Get Shorty (1995) | -0.04 | ACTION|COMEDY|DRAMA |

| 5 | Copycat (1995) | -0.05 | CRIME|DRAMA|THRILLER |

| … | … | … | … |
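The sign flip shown in the two tables can be sketched in a few lines of Python. This is a minimal illustration, assuming the item rows are held as a list of dictionaries; the `negate_royalty` helper and the sample rows are hypothetical and are not taken from the source code for this post.

```python
def negate_royalty(items):
    """Return copies of the item rows with ROYALTY multiplied by -1.

    Amazon Personalize maximizes the objective column, so after negation
    the titles with the lowest royalties carry the largest values.
    """
    negated = []
    for row in items:
        updated = dict(row)  # copy so the input rows are left untouched
        # Subtract from 0.0 so that a zero royalty stays 0.0 rather than -0.0
        updated["ROYALTY"] = round(0.0 - row["ROYALTY"], 2)
        negated.append(updated)
    return negated


# Sample rows mirroring the first entries of the items table
items = [
    {"ITEM_ID": "1", "TITLE": "Toy Story (1995)", "ROYALTY": 0.01},
    {"ITEM_ID": "2", "TITLE": "GoldenEye (1995)", "ROYALTY": 0.02},
    {"ITEM_ID": "3", "TITLE": "Four Rooms (1995)", "ROYALTY": 0.03},
]

for row in negate_royalty(items):
    print(row["ITEM_ID"], row["TITLE"], row["ROYALTY"])
```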

In this example, the royalty value ranges from -0.10 to 0. The objective’s value can be an integer or a floating-point number. When creating a solution, the service internally adjusts the lowest value to 0, regardless of whether that value is positive or negative, and the highest value to 1. Other values are interpolated between 0 and 1, preserving the relative difference between all data points.
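The rescaling described here matches ordinary min-max normalization. The following sketch shows that calculation on a few illustrative values. It is an assumption about the shape of the computation, not the service's actual implementation, and the `min_max_scale` helper and its sample inputs are hypothetical.

```python
def min_max_scale(values):
    """Map the lowest value to 0 and the highest to 1, interpolating the rest."""
    lowest, highest = min(values), max(values)
    span = highest - lowest
    if span == 0:
        # Assumption: with a constant column there is nothing to rank by,
        # so this sketch returns zeros for every item.
        return [0.0 for _ in values]
    return [(value - lowest) / span for value in values]


# Illustrative negated royalties (not the full dataset)
negated_royalties = [-0.10, -0.05, -0.01, 0.0]

for original, scaled in zip(negated_royalties, min_max_scale(negated_royalties)):
    print(f"{original:+.2f} -> {scaled:.2f}")
```

Because the scaling is linear, the gap between any two titles keeps the same proportion after rescaling, which is what preserving the relative difference means in practice.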

For movie recommendations, we use the following schema for the items dataset:

    {
        "type": "record",
        "name": "Items",
        "namespace": "com.amazonaws.personalize.schema",
        "fields": [
            {
                "name": "ITEM_ID",
                "type": "string"
            },
            {
                "name": "ROYALTY",
                "type": "float"
            },
            {
                "name": "GENRE",
                "type": [
                    "null",
                    "string"
                ],
                "categorical": true
            }
        ],
        "version": "1.0"
    }

The items dataset includes the mandatory ITEM_ID field, the royalty field used as the objective, and the list of genres.
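As a rough sketch of how a schema like this might be registered, the following Python builds the schema as a dictionary and passes it to the `create_schema` operation as a JSON string. The `register_items_schema` helper and the schema name in the usage note are hypothetical, and the client is passed in as a parameter so the example does not assume any particular credentials or Region setup.

```python
import json

# The items schema from this post, expressed as a Python dictionary
ITEMS_SCHEMA = {
    "type": "record",
    "name": "Items",
    "namespace": "com.amazonaws.personalize.schema",
    "fields": [
        {"name": "ITEM_ID", "type": "string"},
        {"name": "ROYALTY", "type": "float"},
        {"name": "GENRE", "type": ["null", "string"], "categorical": True},
    ],
    "version": "1.0",
}


def register_items_schema(personalize_client, schema_name):
    """Create the schema and return its ARN.

    personalize_client is assumed to be a boto3 "personalize" client.
    """
    response = personalize_client.create_schema(
        name=schema_name,
        # The API expects the Avro schema as a JSON string; json.dumps
        # turns the Python True above into the JSON literal true.
        schema=json.dumps(ITEMS_SCHEMA),
    )
    return response["schemaArn"]
```

A call might look like `register_items_schema(boto3.client("personalize"), "movie-items-schema")`, where the schema name is only an example.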

Comparing three solutions

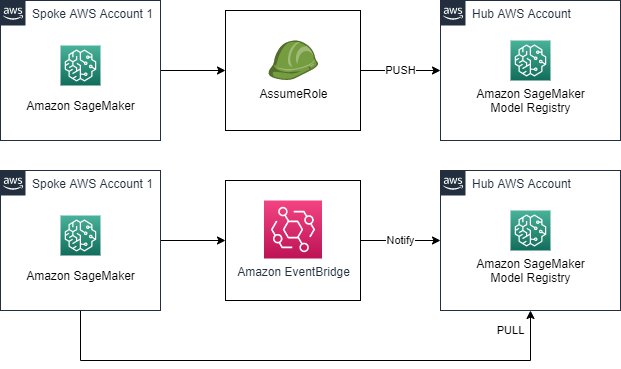

The preceding diagram illustrates the architecture we use to test the benefits of objective optimization. In this scenario, we use two buckets: Items contains movie data and Interactions contains positive movie reviews. The data from the buckets is loaded into the Amazon Personalize dataset group. Once loaded, three solutions are derived from the two datasets: one with objective sensitivity off, a second with objective sensitivity set to low, and a third with objective sensitivity set to high. Each of these solutions drives a corresponding campaign.

After the datasets are loaded in an Amazon Personalize dataset group, we create three solutions to demonstrate the impact of the varied objective optimizations on recommendations. The optimization objective is selected when creating an Amazon Personalize solution, and its sensitivity level can be set to one of four values: OFF, LOW, MEDIUM, or HIGH. This setting controls how much weight to give to the business objective, and in this post we show the impact that these settings can have on recommendation performance. While developing your own models, you should experiment with the sensitivity setting to evaluate what drives the best results for your recommendations. Because objective optimization maximizes the business metric, we select the negated ROYALTY field as the objective optimization column.

The following example Python code creates an Amazon Personalize solution:

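    import boto3

    # Client for the Amazon Personalize control-plane API
    personalize = boto3.client("personalize")

    # dataset_group_arn and recipe_arn are assumed to be defined earlier
    create_solution_response = personalize.create_solution(
        name="solution name",
        datasetGroupArn=dataset_group_arn,
        recipeArn=recipe_arn,
        solutionConfig={
            "optimizationObjective": {
                # Numerical column in the items dataset to maximize
                "itemAttribute": "ROYALTY",
                # One of OFF, LOW, MEDIUM, or HIGH
                "objectiveSensitivity": "HIGH",
            }
        },
    )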
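To create the three solutions compared in this post, the same call can be repeated with a different sensitivity each time. The following is a minimal sketch under that assumption; the `create_objective_solutions` helper and the generated solution names are hypothetical, and the client is passed in so that a boto3 `personalize` client can be supplied by the caller.

```python
def create_objective_solutions(personalize_client, dataset_group_arn, recipe_arn,
                               sensitivities=("OFF", "LOW", "HIGH")):
    """Create one solution per sensitivity level and return their ARNs."""
    solution_arns = {}
    for sensitivity in sensitivities:
        response = personalize_client.create_solution(
            # Hypothetical naming scheme, for example "movies-royalty-low"
            name=f"movies-royalty-{sensitivity.lower()}",
            datasetGroupArn=dataset_group_arn,
            recipeArn=recipe_arn,
            solutionConfig={
                "optimizationObjective": {
                    "itemAttribute": "ROYALTY",
                    "objectiveSensitivity": sensitivity,
                }
            },
        )
        solution_arns[sensitivity] = response["solutionArn"]
    return solution_arns
```

Keeping the dataset group and recipe identical across the three calls means the sensitivity setting is the only thing that differs between the resulting models.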

After the solution versions have been trained, you can compare the offline metrics by calling the GetSolutionMetrics API or visiting the Amazon Personalize console for each solution version.
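Once the metrics for each solution version are in hand, a small helper can make the comparison easier to read. The sketch below uses a subset of the values reported in the table in this section; the `best_solution_per_metric` helper and the dictionary layout are hypothetical, and the metric labels are the ones used in this post rather than the key names an API response would contain.

```python
def best_solution_per_metric(metrics_by_solution):
    """Return, for each metric, the solution with the highest value."""
    best = {}
    for solution, metrics in metrics_by_solution.items():
        for metric, value in metrics.items():
            current = best.get(metric)
            if current is None or value > metrics_by_solution[current][metric]:
                best[metric] = solution
    return best


# A subset of the offline metrics reported in this post
metrics_by_solution = {
    "no-optimization": {
        "Average rewards-at-k": 0.1491, "coverage": 0.1884,
        "MRR-25": 0.0769, "NDCG-10": 0.0937,
    },
    "low-optimization": {
        "Average rewards-at-k": 0.1412, "coverage": 0.1711,
        "MRR-25": 0.1116, "NDCG-10": 0.1,
    },
    "high-optimization": {
        "Average rewards-at-k": 0.1686, "coverage": 0.1295,
        "MRR-25": 0.0805, "NDCG-10": 0.0999,
    },
}

for metric, solution in best_solution_per_metric(metrics_by_solution).items():
    print(f"{metric}: {solution}")
```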

| Metric | no-optimization | low-optimization | high-optimization |

| Average rewards-at-k | 0.1491 | 0.1412 | 0.1686 |

| coverage | 0.1884 | 0.1711 | 0.1295 |

| MRR-25 | 0.0769 | 0.1116 | 0.0805 |

| NDCG-10 | 0.0937 | 0.1 | 0.0999 |

| NDCG-25 | 0.14 | 0.1599 | 0.1547 |

| NDCG-5 | 0.0774 | 0.0722 | 0.0698 |

| Precision-10 | 0.027 | 0.0292 | 0.0281 |

| Precision-25 | 0.0229 | 0.0256 | 0.0238 |

| Precision-5 | 0.0337 | 0.0315 | 0.027 |

In the preceding table, larger numbers are better. Coverage is the ratio of items that appear in recommendations to the total number of items in the dataset (that is, how much of your catalog the generated recommendations cover). To make sure Amazon Personalize recommends a larger portion of your movie catalog, use a model with a higher coverage score.

The average rewards-at-k metric indicates how the solution version performs in achieving your objective. Amazon Personalize calculates this metric by dividing the total rewards generated by interactions (for example, total revenue from clicks) by the total possible rewards from recommendations. The higher the score, the more gains on average per user you can expect from recommendations.

The mean reciprocal rank (MRR) metric is based on the position of the first relevant item in the list, and is important for situations where the user is very likely to select the first item recommended. Normalized discounted cumulative gain at k (NDCG-k) measures the relevance of the top k items, giving the most weight to items at the top of the list. NDCG is useful for measuring effectiveness when multiple recommendations are presented to users, but higher-ranked recommendations are more important than lower-ranked ones. The Precision-k metric measures the fraction of the top k recommendations that are relevant.
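To make these three metrics concrete, the following sketch implements their common textbook forms for a single user with binary relevance. The function names and sample data are hypothetical, and the exact computation inside Amazon Personalize may differ in its details.

```python
import math


def precision_at_k(recommended, relevant, k):
    """Fraction of the top k recommendations that are relevant."""
    hits = sum(1 for item in recommended[:k] if item in relevant)
    return hits / k


def reciprocal_rank_at_k(recommended, relevant, k):
    """1 / position of the first relevant item in the top k, or 0 if none.

    MRR is the mean of this value over all users.
    """
    for position, item in enumerate(recommended[:k], start=1):
        if item in relevant:
            return 1.0 / position
    return 0.0


def ndcg_at_k(recommended, relevant, k):
    """Discounted gain of the top k, normalized by the best possible ordering."""
    dcg = sum(
        1.0 / math.log2(position + 1)
        for position, item in enumerate(recommended[:k], start=1)
        if item in relevant
    )
    ideal_hits = min(k, len(relevant))
    idcg = sum(1.0 / math.log2(position + 1) for position in range(1, ideal_hits + 1))
    return dcg / idcg if idcg > 0 else 0.0


# Hypothetical example: five recommendations, two of which the user liked
recommended = ["a", "b", "c", "d", "e"]
relevant = {"b", "e"}

print(precision_at_k(recommended, relevant, 5))
print(reciprocal_rank_at_k(recommended, relevant, 5))
print(round(ndcg_at_k(recommended, relevant, 5), 4))
```

The logarithmic discount is what makes NDCG reward relevant items near the top of the list more than the same items lower down.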

As the solution weighs the objective more heavily, metrics tend to show lower relevance for users because the model selects recommendations based on both user behavior data and the business objective, rather than on behavior alone. Amazon Personalize provides the ability to control how much influence the objective imparts on recommendations. If the objective has too much influence, you can expect a poor customer experience because the recommendations stop being relevant to the user. By running an A/B test, you can collect the data needed to find the setting that best balances relevance and your business objective.

We can retrieve recommendations from the solution versions by creating an Amazon Personalize campaign for each one. A campaign is a deployed solution version (trained model) with provisioned dedicated capacity for creating real-time recommendations for your users. Because the three campaigns share the same item and interaction data, the only variable in the model is the objective optimization settings. When you compare the recommendations for a randomly selected user, you can see how recommendations can change with varied objective sensitivities.
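The comparison described here can be sketched as a loop over the three campaigns that requests recommendations for the same user from each. The `compare_campaigns` helper, the labels, and the placeholder ARNs are hypothetical; the runtime client is passed in and is assumed to be a boto3 `personalize-runtime` client.

```python
def compare_campaigns(runtime_client, campaign_arns, user_id, num_results=25):
    """Return the recommended item IDs per campaign for one user."""
    results = {}
    for label, campaign_arn in campaign_arns.items():
        response = runtime_client.get_recommendations(
            campaignArn=campaign_arn,
            userId=user_id,
            numResults=num_results,
        )
        # Items are returned in ranked order, most relevant first
        results[label] = [item["itemId"] for item in response["itemList"]]
    return results


# Placeholder ARNs; replace with the ARNs of your own campaigns
campaign_arns = {
    "OFF": "arn:placeholder:campaign/off",
    "LOW": "arn:placeholder:campaign/low",
    "HIGH": "arn:placeholder:campaign/high",
}
```

Joining the returned item IDs with the items dataset then gives the titles and royalty amounts needed for a side-by-side comparison.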

The following table shows the results of the three campaigns. The rank indicates the order of relevance that Amazon Personalize has generated for each title for the sample user. The title, year, and royalty amount are listed in each cell. Notice how “Kazaam (1996)”, which carries no royalty, moves from fourth position when objective optimization is turned off to the top of the list when it is set to low or high. Meanwhile, “The Machine (1994)” drops from first position to second position when objective optimization is set to low, and down to fourth position when objective optimization is set to high. A higher-royalty title such as “The Big Squeeze (1996)” falls from 11th position to 23rd position with the low setting and out of the top 25 with the high setting.

| Rank | OFF | LOW | HIGH |

| 1 | Machine, The (1994)(0.01) | Kazaam (1996)(0.00) | Kazaam (1996)(0.00) |

| 2 | Last Summer in the Hamptons (1995)(0.01) | Machine, The (1994)(0.01) | Last Summer in the Hamptons (1995)(0.01) |

| 3 | Wedding Bell Blues (1996)(0.02) | Last Summer in the Hamptons (1995)(0.01) | Big One, The (1997)(0.01) |

| 4 | Kazaam (1996)(0.00) | Wedding Bell Blues (1996)(0.02) | Machine, The (1994)(0.01) |

| 5 | Heaven & Earth (1993)(0.01) | Gordy (1995)(0.00) | Gordy (1995)(0.00) |

| 6 | Pushing Hands (1992)(0.03) | Venice/Venice (1992)(0.01) | Vermont Is For Lovers (1992)(0.00) |

| 7 | Big One, The (1997)(0.01) | Vermont Is For Lovers (1992)(0.00) | Robocop 3 (1993)(0.01) |

| 8 | King of New York (1990)(0.01) | Robocop 3 (1993)(0.01) | Venice/Venice (1992)(0.01) |

| 9 | Chairman of the Board (1998)(0.05) | Big One, The (1997)(0.01) | Etz Hadomim Tafus (Under the Domin Tree) (1994… |

| 10 | Bushwhacked (1995)(0.05) | Phat Beach (1996)(0.01) | Phat Beach (1996)(0.01) |

| 11 | Big Squeeze, The (1996)(0.05) | Etz Hadomim Tafus (Under the Domin Tree) (1994… | Wedding Bell Blues (1996)(0.02) |

| 12 | Big Bully (1996)(0.03) | Heaven & Earth (1993)(0.01) | Truth or Consequences, N.M. (1997)(0.01) |

| 13 | Gordy (1995)(0.00) | Pushing Hands (1992)(0.03) | Surviving the Game (1994)(0.01) |

| 14 | Truth or Consequences, N.M. (1997)(0.01) | Truth or Consequences, N.M. (1997)(0.01) | Niagara, Niagara (1997)(0.00) |

| 15 | Venice/Venice (1992)(0.01) | King of New York (1990)(0.01) | Trial by Jury (1994)(0.01) |

| 16 | Invitation, The (Zaproszenie) (1986)(0.10) | Big Bully (1996)(0.03) | King of New York (1990)(0.01) |

| 17 | August (1996)(0.03) | Niagara, Niagara (1997)(0.00) | Country Life (1994)(0.01) |

| 18 | All Things Fair (1996)(0.01) | All Things Fair (1996)(0.01) | Commandments (1997)(0.00) |

| 19 | Etz Hadomim Tafus (Under the Domin Tree) (1994… | Surviving the Game (1994)(0.01) | Target (1995)(0.01) |

| 20 | Target (1995)(0.01) | Chairman of the Board (1998)(0.05) | Heaven & Earth (1993)(0.01) |

| 21 | Careful (1992)(0.10) | Bushwhacked (1995)(0.05) | Beyond Bedlam (1993)(0.00) |

| 22 | Vermont Is For Lovers (1992)(0.00) | August (1996)(0.03) | Mirage (1995)(0.01) |

| 23 | Phat Beach (1996)(0.01) | Big Squeeze, The (1996)(0.05) | Pushing Hands (1992)(0.03) |

| 24 | Johnny 100 Pesos (1993)(0.03) | Bloody Child, The (1996)(0.02) | You So Crazy (1994)(0.01) |

| 25 | Surviving the Game (1994)(0.01) | Country Life (1994)(0.01) | All Things Fair (1996)(0.01) |

| Total royalties | 0.59 | 0.40 | 0.20 |

As you would expect, royalties trend lower as the objective optimization setting is increased from low to high. The sum of all the royalties for the 25 recommended titles also decreased from $0.59 with no objective optimization to $0.20 with objective optimization set to high.
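The same arithmetic can be checked on a smaller slice of the results. The following sketch sums the royalties of the top five titles in each column of the preceding table; the `total_royalty` helper is hypothetical, and the values are copied from ranks 1 through 5.

```python
def total_royalty(royalties):
    """Sum royalty amounts, rounded to cents to avoid float noise."""
    return round(sum(royalties), 2)


# Royalties for ranks 1-5 in each campaign, taken from the table above
top_five = {
    "OFF": [0.01, 0.01, 0.02, 0.00, 0.01],
    "LOW": [0.00, 0.01, 0.01, 0.02, 0.00],
    "HIGH": [0.00, 0.01, 0.01, 0.01, 0.00],
}

for setting, royalties in top_five.items():
    print(f"{setting}: ${total_royalty(royalties):.2f}")
# prints:
# OFF: $0.05
# LOW: $0.04
# HIGH: $0.03
```

Even within the first five positions, the total declines as the sensitivity increases, which mirrors the trend across all 25 titles.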

Conclusion

You can use Amazon Personalize to combine user interaction data with a business objective, thereby improving the business outcomes that recommendations deliver. As we’ve shown, objective optimization influenced the recommendations to lower the costs for the movies in our fictitious movie recommendation service. The trade-off between recommendation relevance and the objective is an important consideration, because optimizing for a business metric can make your recommendations less relevant for your users. Other possible objectives include steering users to premium content, promoted content, or items with the highest reviews. This additional objective lets your recommendations take into account factors you know are important to your business.

The source code for this post is available on GitHub.

To learn more about Amazon Personalize, visit the product page.

About the Authors

Mike Gillespie is a solutions architect at Amazon Web Services. He works with AWS customers to provide guidance and technical assistance, helping them improve the value of their solutions when using AWS. Mike specializes in helping customers with serverless, containerized, and machine learning applications. Outside of work, Mike enjoys being outdoors running and paddling, listening to podcasts, and photography.

Matt Chwastek is a Senior Product Manager for Amazon Personalize. He focuses on delivering products that make it easier to build and use machine learning solutions. In his spare time, he enjoys reading and photography.

Ge Liu is an Applied Scientist at AWS AI Labs working on developing the next-generation recommender system for Amazon Personalize. Her research interests include recommender systems, deep learning, and reinforcement learning.

Abhishek Mangal is a Software Engineer for Amazon Personalize and works on architecting software systems to serve customers at scale. In his spare time, he likes to watch anime and believes ‘One Piece’ is the greatest piece of story-telling in recent history.
