Video conferencing has allowed many to be productive from anywhere.

Now NVIDIA is boosting the productivity of developers who build video conferencing, call center and streaming applications, a $10 billion industry, by making it easy for them to integrate AI into their workflows.

The new release of the Maxine AI Developer Platform transforms the creation of state-of-the-art, real-time video conferencing applications with features enabling enhanced user flexibility, engagement and efficiency.

Available through the NVIDIA AI Enterprise software platform, Maxine allows developers to tap into the latest AI-driven features — such as enhanced video and audio quality and augmented reality effects — to turn users’ everyday video calls into engaging, collaborative experiences.

Expanding Video Conferencing With New Maxine Features

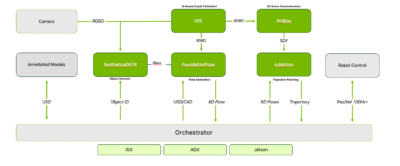

The Maxine AI Developer Platform enables developers to easily access and integrate real-time, AI-enhanced features that increase the quality of engagement for video conferencing users.

Features like noise reduction, video denoising and upscaling, and studio voice improve the quality of audio and video streams. With advanced capabilities like eye-gaze correction and live portrait, plus upcoming features such as video relighting and the Maxine 3D cloud microservice, developers can enhance engagement and personal connection in video conferences.

The platform extends the utility of its state-of-the-art AI models for audio, video and augmented reality effects by giving developers multiple ways to deliver Maxine features: software development kits, microservices and application programming interface (API) endpoints served from NVIDIA’s cloud infrastructure.

Maxine production feature updates available now include:

- Eye Contact: The improved eye contact model provides gaze redirection with natural eye movements for deeper meeting participant engagement.

- Voice Font: This new model matches the speaker’s voice to a target voice while keeping linguistic information and prosody (rhythm and tone) unchanged.

- Background Noise Reduction (BNR) 2.0: This updated model improves noise reduction for both human listening and language encoding, with a specific focus on reducing word error rates in encoding.
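For readers unfamiliar with the metric mentioned under BNR 2.0, word error rate (WER) is commonly defined as the number of word substitutions, deletions and insertions needed to turn a recognized transcript into the reference transcript, divided by the number of words in the reference. The Python sketch below illustrates that general definition only. It is not NVIDIA's evaluation method, and the function name `word_error_rate` and the sample transcripts are hypothetical.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return WER = (substitutions + deletions + insertions) / reference length.

    Illustrative helper (hypothetical name); uses word-level edit distance.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # turning i reference words into an empty hypothesis
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # Made-up transcripts, purely for illustration.
    reference = "join the meeting now"
    noisy = "join a meeting now please"   # one substitution, one insertion
    cleaned = "join the meeting now"      # perfect transcript
    print(word_error_rate(reference, noisy))    # 0.5
    print(word_error_rate(reference, cleaned))  # 0.0
```

In this framing, a noise reduction stage helps language encoding when transcripts of the cleaned audio score a lower WER than transcripts of the raw audio against the same reference.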

New features available for early access this spring include:

- Speech Live Portrait: This model allows a user to drive their portrait with direct speech or any audio source, allowing users to always look their best during a conference call.

- Studio Voice: This model enables ordinary headset, laptop and desktop microphones to deliver the sound of a high-end studio mic, allowing users to always sound their best during a conference call.

The Maxine early access program shares preproduction and prerelease builds of upcoming features to gather developer feedback on the utility and refinement of Maxine models. In this release, we are asking developers for feedback on features early in the development pipeline, including:

- Maxine 3D: Previously shown as a research demonstration at SIGGRAPH 2023, this cloud microservice offers a new level of engagement for video conferencing with real-time NeRF technology lifting 2D video to 3D.

- Video Relighting: This new model uses a high-dynamic-range image to light the user, enabling seamless matching of user lighting with various background images.

- API Endpoints: API Endpoints offer developers the flexibility of accessing Maxine features through NVIDIA cloud infrastructure, making Maxine integration even easier.

Jugo and Arsenal Football Club Score Major Goals

Sporting events are the ultimate human experience, uniting teams and fans beyond borders and language barriers. Jugo, using Maxine’s AI Green Screen feature, offers a digital platform for virtual events that enables companies to create immersive experiences with Unreal Engine that bring fans together from all over the world without the use of a full production studio.

Arsenal FC, a powerhouse franchise in England’s Premier League, is collaborating with Jugo to revolutionize the way the soccer club engages with its global fan base of 600 million. The collaboration offers new virtual sports entertainment experiences to boost engagement for global supporters. Jugo brings the power of real, human interaction into Arsenal events, creating realistic virtual connections between supporters and the club’s sports heroes.

“The Jugo Experience platform is transforming the market for brands in their pursuit of global awareness and engagement,” said Richard Stirk, CEO of Jugo Experience. “Arsenal F.C. is the perfect example of a global brand extension. The flexibility in creating an immersive brand experience is a key to Jugo’s offering and the Maxine AI Developer Platform is a basic building block of this flexibility.”

Setting a New Standard of AI-Enhanced Video Conferencing

Gemelo, Pexip, Spectacle and VideoRequest are among the first customers to tap into the newest features in the early access program, using them to create a professional audio-visual studio from commodity cameras and microphones.

“Gemelo has been involved in testing prerelease builds of Maxine models for a number of years now, and we value the chance to give early input on Maxine features as they’re developed,” said Paul Jaski, CEO of Gemelo. “The latest feature, Speech Live Portrait, will provide our customers with greater flexibility in creating customized video messaging, opening the doors to a new era of personalization.”

“Pexip welcomes the chance to test development versions of Maxine features and help guide the final product models,” said Ian Mortimer, chief technology officer at Pexip. “In testing the newest version of Maxine BNR, we are seeing significant improvements in intelligibility and speech quality and plan to continue refining our testing parameters to help optimize for accuracy in AI translation pipelines.”

“The NVIDIA Maxine Eye Contact API significantly simplified our path to providing engaging video processing capabilities to the users of our Spectacle app, eliminating the need to worry about infrastructure and resource-intensive integrations,” said Benjamin Portman, president of Spectacle. “With it, we were able to create a proof of concept within a matter of days, speeding up our production application deployment timeline.”

“Our early testing of Maxine Studio Voice enabled an impressive look into what is now possible with AI-enhanced production and video testimonials,” said Joe Tyler, chief technology officer at VideoRequest. “The new Maxine BNR and Eye Contact features will help elevate the quality of our customers’ videos by overcoming their challenging recording environments.”

Availability

Learn more about NVIDIA Maxine, which is available now on NVIDIA AI Enterprise.

See notice regarding software product information.