AWS DeepRacer is the fastest way to get started with machine learning (ML). You can train reinforcement learning (RL) models by using a 1/18th scale autonomous vehicle in a cloud-based virtual simulator and compete for prizes and glory in the global AWS DeepRacer League.

We’re excited to bring you two new features available on the AWS DeepRacer console: a new RL algorithm called Soft Actor Critic (SAC) and a new way of defining your action space called continuous action space. Understanding how SAC and continuous action space work will let you come up with new strategies to top the AWS DeepRacer League. This post walks you through the unique features of the SAC algorithm and how to use it with continuous action space. By the end, you will know how to use continuous action space and be ready to train your first SAC RL model on the AWS DeepRacer console.

Reviewing the fundamentals

Let’s first review some fundamental RL concepts that give us a foundation to dive deeper into SAC. The objective of RL models is to maximize total reward, which is done by exploring the environment. In the case of AWS DeepRacer, the environment is the track that you choose to train your model on. The agent, which for AWS DeepRacer is the car, explores the environment by following a policy. A policy determines the action the agent takes after observing the environment (for example, turning left, going straight, or turning right). AWS DeepRacer observes the environment by using image data or a combination of image and LIDAR data.

As the agent explores the environment, the agent learns a value function. We can think of the value function as a way to judge how good an action taken is, after observing the environment. The value function uses the reward function that you write in the AWS DeepRacer console to score the action. For example, if we choose the “follow the center line” sample reward function in the AWS DeepRacer console, a good action keeps the agent near the center of the track and is scored higher than a bad action, which moves the agent away from the center of the track.
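To make the “follow the center line” idea concrete, here is a minimal sketch of such a reward function. It assumes the `track_width` and `distance_from_center` input parameters that AWS DeepRacer passes to reward functions; the three band thresholds and the reward values are illustrative choices, not necessarily the ones in the console sample.

```python
def reward_function(params):
    """Reward the agent more the closer it stays to the center line."""
    track_width = params["track_width"]
    distance_from_center = params["distance_from_center"]

    # Illustrative bands, expressed as fractions of the track width.
    marker_1 = 0.1 * track_width
    marker_2 = 0.25 * track_width
    marker_3 = 0.5 * track_width

    if distance_from_center <= marker_1:
        reward = 1.0   # very close to the center line
    elif distance_from_center <= marker_2:
        reward = 0.5   # drifting away from the center
    elif distance_from_center <= marker_3:
        reward = 0.1   # near the edge of the track
    else:
        reward = 1e-3  # most likely off the track

    return float(reward)
```

An action that keeps the car in the innermost band scores ten times higher than one that leaves it near the edge, which is the signal the value function learns from.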

Over time, the value function helps us learn policies that increase the total reward. To learn the optimal or best policy, we balance the amount of time we spend exploring the environment versus the amount of time we spend exploiting what our policy has learned over time. For example, if we consider the “follow the center line” sample reward function, we first take random actions to explore the environment, meaning that our agent doesn’t do a very good job at staying in the center of the track. Over time, the agent learns which actions keep it near the center of the track, but if we keep taking random actions, it takes a long time to learn how to stay at the center of the track for the entire lap. So as the policy begins to learn the good actions, we begin to use those actions instead of taking random actions. However, if we always use or exploit the good actions, we never learn anything new because we fail to explore the environment. This trade-off is often referred to as the “exploration vs. exploitation” problem in RL.
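One textbook way to picture this trade-off is an epsilon-greedy rule with a decaying exploration rate. The sketch below is only an illustration of the idea: it is not how PPO or SAC explore on the AWS DeepRacer console, and the function names and decay constants are hypothetical.

```python
import random


def choose_action(action_values, epsilon, rng=random):
    """Explore with probability epsilon, otherwise exploit the best-known action."""
    if rng.random() < epsilon:
        # Explore: pick any action uniformly at random.
        return rng.randrange(len(action_values))
    # Exploit: pick the action with the highest estimated value.
    return max(range(len(action_values)), key=lambda i: action_values[i])


def decayed_epsilon(iteration, start=1.0, end=0.05, decay=0.9):
    """Shrink the exploration rate over training, but never below `end`."""
    return max(end, start * decay ** iteration)
```

Early in training epsilon is near 1, so almost every action is random. As it decays, the agent leans on what it has learned while keeping a small chance of trying something new.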

What’s new with SAC?

Now that we have the fundamental RL concepts down, let’s look at how SAC works and how it compares to the other algorithm available on the AWS DeepRacer console, Proximal Policy Optimization (PPO).

There are three main differences between PPO and SAC. The first is that the implementation of SAC on the AWS DeepRacer console only allows you to select continuous action space (covered later in this post).

The second and sharper contrast between PPO and SAC is in how they leverage the information learned by the policy while exploring the environment between training iterations. PPO uses on-policy learning, which means that we learn the value function from observations made by the current policy exploring the environment. SAC, on the other hand, uses off-policy learning, which means it can use observations made by previous policies exploring the environment.
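The difference can be sketched in terms of which experience is eligible for a training update. The following is a simplified illustration, assuming each transition is a dictionary tagged with a hypothetical `policy_version` field; it is not the console's implementation.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size store of transitions collected by any past policy."""

    def __init__(self, capacity):
        # When the buffer is full, the oldest transitions fall out first.
        self.storage = deque(maxlen=capacity)

    def add(self, transition):
        self.storage.append(transition)

    def sample(self, batch_size, rng=random):
        # Off-policy: draw uniformly from everything still stored,
        # regardless of which policy version produced it.
        pool = list(self.storage)
        return rng.sample(pool, min(batch_size, len(pool)))


def on_policy_batch(transitions, current_version):
    # On-policy: keep only what the current policy itself collected.
    return [t for t in transitions if t["policy_version"] == current_version]
```

Reusing older transitions is what makes the off-policy approach more data efficient; the on-policy filter discards them after each policy update.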

The trade-off between on-policy and off-policy learning tends to be stability vs. data efficiency. On-policy algorithms tend to be more stable but more data-hungry, whereas off-policy algorithms tend to be less stable but more data efficient. Stability in this context refers to how the model performs between training iterations. A stable model tends to have consistent performance between training iterations, meaning that if we’re training our model to follow the center of the track, we see it get better and better at staying in the center of the track with each training iteration. Because of the consistent performance, we tend to see the total reward consistently increase between training iterations.

Unstable models tend to have more random performance between training iterations, which means that our model may come closer to following the middle of the track in one training iteration and then be completely unable to stay on the track in the next. As a result, the total reward between training iterations looks noisier than it does with on-policy methods, particularly at the start of training.

The third and final difference is how PPO and SAC use entropy. In this case, entropy is a measure of the uncertainty in the policy, so it can be interpreted as a measure of how confident a policy is at choosing an action for a given observation. A policy with low entropy is very confident at choosing an action, whereas a policy with high entropy is unsure of which action to choose.
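For a policy over a discrete set of actions, this uncertainty can be computed with the standard Shannon entropy formula. The helper below is a small sketch (the function name is ours) that measures entropy in nats.

```python
import math


def entropy(probabilities):
    """Shannon entropy, in nats, of a discrete action distribution."""
    # Zero-probability actions contribute nothing to the sum.
    return -sum(p * math.log(p) for p in probabilities if p > 0)


confident_policy = [1.0, 0.0, 0.0]     # always picks the first action
unsure_policy = [1 / 3, 1 / 3, 1 / 3]  # no preference between actions
```

The confident policy has zero entropy, while the uniform policy reaches the maximum possible for three actions, `math.log(3)`.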

As mentioned earlier, exploration vs. exploitation is a key challenge in RL. To confront this issue, the PPO algorithm uses entropy regularization. Entropy regularization encourages the agent to explore by preventing it from settling on a specific policy.

Let’s once again use the “follow the center line” sample reward function. If we don’t have entropy regularization, after various training iterations we may end up with a policy that causes the agent to jitter around the center line. The jitter behavior occurs because the policy has a hard time deciding whether the best action is to go straight or turn slightly left or right after making an observation of the environment. This behavior keeps us close to the center line; we just jitter around it by slightly turning left and right as the agent moves around the track. As a result, this jitter policy has a high total reward. The entropy of this policy is also relatively high, because the policy is unsure of what the best action is for a given observation of the environment.

At this point, because the total reward is high and entropy isn’t used as a regularizer, the algorithm starts producing policies with the same jitter behavior on every training iteration, effectively meaning that the algorithm has converged. By adding entropy as a regularizer on each training iteration, the algorithm requires the total reward to be high and the entropy to be low. If we end up in a training iteration where the total reward and entropy are both high, the algorithm produces a new policy with new behavior as opposed to producing another “jitter” policy. Because entropy regularization causes a new policy to be produced, we say that it encourages exploration, because the new policy likely takes different actions than the previous “jitter” policy when observing the environment.

For SAC, instead of using entropy as a regularizer, we change the objective of the RL model to maximize not only total reward but also entropy. This entropy maximization makes SAC a unique RL algorithm. Entropy maximization has similar benefits to using the entropy as a regularizer, such as incentivizing wider exploration and avoiding convergence to a bad policy.

Entropy maximization has one unique advantage: the algorithm tends to give up on policies that choose unpromising behavior. This happens because the policies produced by SAC for each training iteration choose actions that maximize total reward and entropy when observing the environment. This means that SAC policies tend to explore the environment more, because high entropy means that we’re unsure which action to take. However, because we also maximize total reward, this exploration happens close to our desired behavior. SAC is an off-policy algorithm, which means we can use observations from policies produced in different training iterations. When we look at the observations of the previous policies, which have high entropy and therefore explore the environment more, the algorithm can pick out the promising behavior and give up on the unpromising behavior.

You can tune the amount of entropy to use in SAC with the hyperparameter SAC alpha, with a value between 0.0 and 1.0. The maximum value of the SAC alpha uses the whole entropy value of the policy and favors exploration. The minimum value of SAC alpha recovers the standard RL objective and there is no entropy bonus to incentivize the exploration. A good SAC alpha value to kick off your first model is 0.5. Then you can tune this hyperparameter accordingly as you iterate on your models.
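The role of SAC alpha is easiest to see in the maximum-entropy objective as it is commonly written for SAC. The notation here is introduced for illustration and does not come from the console: $\tau$ is a trajectory of states $s_t$ and actions $a_t$ generated by the policy $\pi$, $r$ is the reward, $\mathcal{H}$ is the entropy of the policy's action distribution, and $\alpha$ stands for the SAC alpha hyperparameter.

```latex
J(\pi) = \mathbb{E}_{\tau \sim \pi}\left[ \sum_{t} \Big( r(s_t, a_t) + \alpha \, \mathcal{H}\big(\pi(\cdot \mid s_t)\big) \Big) \right]
```

Setting $\alpha = 0$ removes the entropy term and leaves the standard expected-total-reward objective, while $\alpha = 1$ adds the full entropy of the policy to every step's reward.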

The ins and outs of action spaces

Now let’s look at how action spaces work on the AWS DeepRacer console and introduce the new continuous action space, which allows you to define a range of actions instead of a discrete set of actions. To begin, let’s review how discrete action spaces work in AWS DeepRacer.

The AWS DeepRacer console uses a neural network to model the policy learned by both PPO and SAC. The output of the policy is a discrete set of values. For discrete action spaces, which is what the PPO algorithm available on the AWS DeepRacer console has traditionally used, the discrete values returned from the neural network are interpreted as a probability distribution and are mapped to a set of actions. The set of actions is defined by the user by specifying the maximum steering angle, speed values, and their respective granularities to generate the corresponding combinations of speed and steering actions. Therefore, the policy returns a discrete distribution of actions.

For example, if we select a maximum steering angle of 15 degrees, a maximum speed of 1 m/s, and corresponding granularities of 3 and 1, our discrete action space has three values mapped to the following steering angle and speed pairs: (-15 degrees, 1 m/s), (0 degrees, 1 m/s), and (15 degrees, 1 m/s). A policy may return the following discrete distribution [0.50, 0.25, 0.25] for a given observation in the environment, which can loosely be interpreted as the policy being 50% certain that action 1, (-15 degrees, 1 m/s), is the action most likely to maximize total reward for a given observed state.
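The way maximum values and granularities expand into steering and speed pairs can be sketched as follows. The function name and the even-spacing rule are assumptions made for illustration; the console's exact rule may differ, although this sketch reproduces the three pairs in the example above.

```python
def build_discrete_action_space(max_steering, steering_granularity,
                                max_speed, speed_granularity):
    """Combine evenly spaced steering angles and speeds into action pairs."""
    if steering_granularity == 1:
        steering_angles = [0.0]  # a single steering option: straight ahead
    else:
        # Spread angles evenly from -max_steering to +max_steering.
        step = 2 * max_steering / (steering_granularity - 1)
        steering_angles = [-max_steering + i * step
                           for i in range(steering_granularity)]

    # Spread speeds evenly up to max_speed, ending exactly at max_speed.
    speeds = [max_speed * (i + 1) / speed_granularity
              for i in range(speed_granularity)]

    return [(angle, speed) for angle in steering_angles for speed in speeds]


actions = build_discrete_action_space(15, 3, 1, 1)
```

With these inputs, `actions` holds the pairs (-15, 1), (0, 1), and (15, 1), one for each output of the policy network.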

During training, we sample the action space distribution to encourage exploration, meaning that if we have this discrete distribution, we have a 50% chance of picking action 1, a 25% chance of picking action 2, and a 25% chance of picking action 3. This means that during training, until our policy is very sure about which action to take for a given observed state, we always have the chance to explore the benefits of a new action.
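A short sketch of this sampling step, using the example distribution from above. The names `ACTIONS`, `PROBABILITIES`, and `sample_action` are ours and purely illustrative.

```python
import random

# Steering angle (degrees) and speed (m/s) pairs from the example above.
ACTIONS = [(-15, 1.0), (0, 1.0), (15, 1.0)]
PROBABILITIES = [0.50, 0.25, 0.25]


def sample_action(probabilities, rng=random):
    """Return the index of an action drawn according to the policy's distribution."""
    indices = list(range(len(probabilities)))
    return rng.choices(indices, weights=probabilities, k=1)[0]


steering_angle, speed = ACTIONS[sample_action(PROBABILITIES)]
```

Over many draws, action 1 is chosen about half the time, but actions 2 and 3 still get picked often enough for the agent to learn whether they are worth more than the policy currently believes.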

For continuous action space, the policy only outputs two discrete values. These values are interpreted to be the mean and standard deviation of a continuous normal distribution. You define a range for speed and steering angle. The action for an observed state is chosen from this user-defined range of speed and steering by sampling the normal distribution, defined by the mean and standard deviation returned from the policy.
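As a rough sketch of that sampling step, the function below draws one action value from a normal distribution and keeps it inside the user-defined range. The function name is hypothetical, and clipping to the range is an assumption made for illustration; the console may map samples into the range differently.

```python
import random


def sample_continuous_action(mean, std, low, high, rng=random):
    """Draw one value from N(mean, std) and keep it inside [low, high]."""
    raw = rng.gauss(mean, std)
    # Assumption: out-of-range samples are clipped to the nearest bound.
    return min(high, max(low, raw))


# Example: a steering angle in the range [-20, 20] degrees.
steering_angle = sample_continuous_action(mean=5.0, std=3.0, low=-20.0, high=20.0)
```

A large standard deviation spreads samples across the range, which corresponds to more exploration; as the policy grows confident, the standard deviation shrinks and actions cluster around the mean.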

For example, we can define the following ranges for steering angle and speed: [-20 degrees, 20 degrees] and [0.75 m/s, 4 m/s]. This means that the policy can explore all combinations specified in this range, as opposed to the discrete action space case where it could only explore three combinations. Continuous action spaces tend to produce agents that exhibit less zig-zag motion when navigating the environment. This is because policies tend to learn smooth changes in steering angle and speed as opposed to discrete changes. The trade-off is that continuous action spaces are more sensitive to choices in reward function and steering angle and speed ranges. Depending on these choices, continuous action spaces may increase the amount of time it takes to train.

Although continuous action spaces are required for SAC, you can also use them for PPO. The AWS DeepRacer console now supports training PPO models that can use either continuous or discrete action spaces. Let’s look at how to set up a continuous action space on the AWS DeepRacer console.

Creating a new vehicle using continuous action space

In this section, we walk you through the steps to create a new vehicle in the My Garage section of the console with continuous action space. All you need to do is sign up for an AWS account (if you don’t already have one) and go to the AWS DeepRacer console:

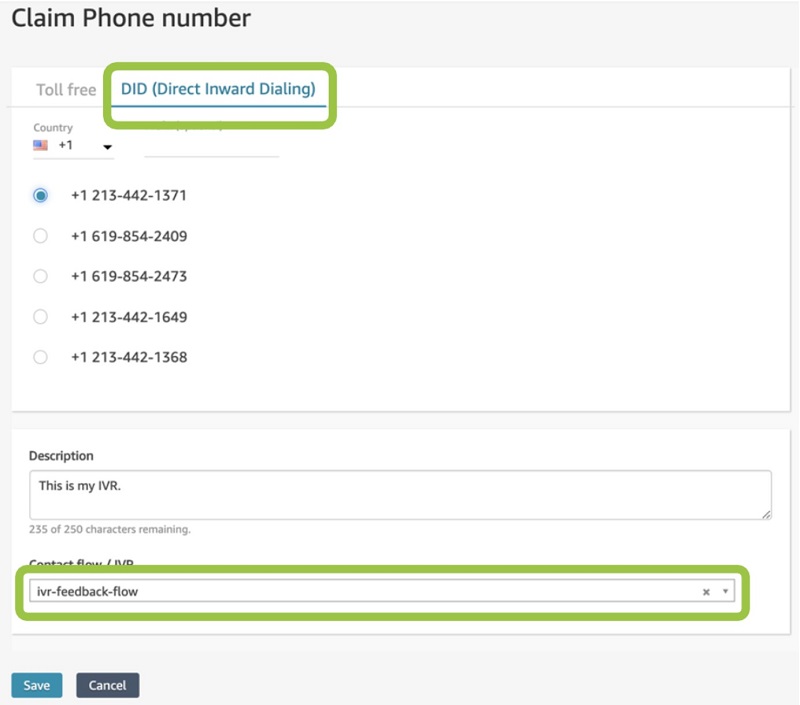

- On the AWS DeepRacer console, choose Your garage.

In the list of vehicles, you should see a new vehicle, The Original DeepRacer (continuous action space). This vehicle is provided by default to all users to train their models using continuous action space. It uses a single camera and has a speed range of [0.5 : 1] m/s and a steering angle range of [-30 : 30] degrees.

![The vehicle uses a single camera and has a speed range of [0.5 : 1] m/s and steering angle range of [-30 : 30 ] degrees.](https://d2908q01vomqb2.cloudfront.net/f1f836cb4ea6efb2a0b1b99f41ad8b103eff4b59/2021/02/03/ML-2373-1.jpg)

- Choose Build new vehicle to build your own vehicle with a new configuration.

In this example, we build a vehicle with stereo cameras.

- For Sensor modifications, select Stereo camera.

- Choose Next.

- For Choose your action space type, select Continuous.

For this post, we choose the action space range [0.5 : 2] m/s and [-30 : 30] degrees.

- For Right steering angle range, enter -30.

- For Left steering angle range, enter 30.

- For Minimum speed, enter 0.5.

- For Maximum speed, enter 2.

- Choose Next.

- Customize your vehicle appearance and name your vehicle.

- Choose Done.

The vehicle is now available to choose when creating a model.

Training a Soft Actor Critic model on the console

In this section, we walk you through how to create a new Soft Actor Critic model:

- On the AWS DeepRacer console, choose Your models.

- Choose Create model.

- For Model name, enter a name for your model.

- Optionally, for Training job description, enter a description.

- For Choose a track, select your track (for this post, we select European Seaside Circuit (Buildings)).

- Choose Next.

The next section allows you to customize the desired training environment, select an algorithm along with its hyperparameters, and choose the virtual car that contains your desired action spaces.

- For Race type, select the type (for this post, we select Time trial).

- For Training algorithm and hyperparameters, select SAC.

- Under Hyperparameters, configure your hyperparameters.

SAC Alpha is the hyperparameter that determines the relative importance of the entropy term against the reward.

- Lastly, choose your virtual car to use, which contains your desired action spaces. For this post, we chose My_DeepRacer_Continuous.

- Choose Next.

Lastly, you can write a reward function to guide the agent to your desired behavior and configure your desired time of training.

- In Code editor, write your reward function.

SAC is sensitive to the scaling of the reward signal, so it’s important to carefully tune the appropriate reward value. For small reward magnitudes, the policy may perform poorly because it’s likely to become uniform and fail to exploit the reward signal. For large reward magnitudes, the model learns quickly at first, but the policy quickly converges to poor local minima due to lack of exploration. So carefully tuning the right reward scaling is the key to training a successful SAC model.

- After writing your reward function, choose Validate to verify your reward function is compatible with AWS DeepRacer.

- Under Stop conditions, for Maximum time, set the desired duration of training time in minutes.

- Choose Create model to start training.

When the training starts, the model dashboard shows the progress of training along with the live streaming of the simulator.
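Because SAC is sensitive to reward scaling, as noted in the reward function step above, it can help to keep the scale in one place so it is easy to adjust between training runs. The sketch below assumes the `track_width` and `distance_from_center` input parameters; `REWARD_SCALE` and `base_reward` are hypothetical names, and the value 1.0 is a placeholder rather than a recommendation.

```python
# Hypothetical knob: multiply every reward by this factor when tuning for SAC.
REWARD_SCALE = 1.0


def base_reward(params):
    """Unscaled reward in (0, 1]: 1 at the center line, near 0 at the track edge."""
    half_width = 0.5 * params["track_width"]
    closeness = max(0.0, 1.0 - params["distance_from_center"] / half_width)
    return max(closeness, 1e-3)  # keep a small positive floor


def reward_function(params):
    # The console calls reward_function; scaling is applied in one place.
    return float(REWARD_SCALE * base_reward(params))
```

Changing only `REWARD_SCALE` between runs makes it easier to tell whether a difference in training behavior comes from the reward magnitude rather than from the shape of the reward.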

Conclusion

With AWS DeepRacer, you can now get hands-on experience with the Soft Actor Critic algorithm. Finding the right hyperparameter values, choosing appropriate action spaces, and writing your custom reward function are the keys to improving your SAC models.

You’re now ready to train your first SAC model. Sign in to the AWS DeepRacer console to get started.

About the Authors

Eddie Calleja is an SDM for AWS DeepRacer. He is the manager of the AWS DeepRacer simulation application and device software stacks. As a former physicist he spends his spare time thinking about applying AI techniques to modern day physics problems.

Kevin Wang is a Solutions Architect for AWS, and passionate about building new applications on the latest AWS services. With a background in investment finance, Kevin loves to blend financial analysis with new technologies to find innovative ways to help customers. An inquisitive and pragmatic developer at heart, he loves community-driven learning and sharing of technology.

Kim Wendt is a Solutions Architect at AWS, responsible for helping global media & entertainment companies on their journey to the cloud. Prior to AWS, she was a Software Developer for the US Navy, and uses her development skills to build solutions for customers. She has a passion for continuous learning and is currently pursuing a masters in Computer Science with a focus in Machine Learning.

Peyman Razaghi is a data scientist at AWS. He holds a PhD in information theory from the University of Toronto and was a post-doctoral research scientist at the University of Southern California (USC), Los Angeles. Before joining AWS, Peyman was a staff systems engineer at Qualcomm contributing to a number of notable international telecommunication standards. He has authored several scientific research articles peer-reviewed in statistics and systems-engineering area, and enjoys parenting and road cycling outside work.

Ross Claytor is a Sr Data Scientist on the ProServe Intelligence team at AWS. He works on the application of machine learning and orchestration to real world problems across industries including media and entertainment, life sciences, and financial services.

Michael Wallner is a Global Data Scientist with AWS Professional Services and is passionate about enabling customers on their AI/ML journey in the cloud to become AWSome. Besides having a deep interest in Amazon Connect he likes sports and enjoys cooking.

Chris Boomhower is a Machine Learning Engineer for AWS Professional Services. He loves helping enterprise customers around the world develop and automate impactful AI/ML solutions to their most challenging business problems. When he’s not tackling customers’ problems head-on, you’ll likely find him tending to his hobby farm with his family or barbecuing something delicious.