Artificial intelligence (AI) adoption is accelerating across industries and use cases. Recent scientific breakthroughs in deep learning (DL), large language models (LLMs), and generative AI are allowing customers to use advanced state-of-the-art solutions with almost human-like performance. These complex models often require hardware acceleration because it enables not only faster training but also faster inference when using deep neural networks in real-time applications. GPUs’ large number of parallel processing cores makes them well-suited for these DL tasks.

However, in addition to model invocation, these DL applications often entail preprocessing or postprocessing in an inference pipeline. For example, input images for an object detection use case might need to be resized or cropped before being served to a computer vision model, or text inputs might need to be tokenized before being used in an LLM. NVIDIA Triton is an open-source inference server that enables users to define such inference pipelines as an ensemble of models in the form of a Directed Acyclic Graph (DAG). It is designed to run models at scale on both CPU and GPU. Amazon SageMaker supports deploying Triton seamlessly, allowing you to use Triton’s features while also benefiting from SageMaker capabilities: a managed, secured environment with MLOps tools integration, automatic scaling of hosted models, and more.

AWS, in its dedication to helping customers achieve the highest savings, has continuously innovated not only in pricing options and proactive cost-optimization services, but also in launching cost-saving features like multi-model endpoints (MMEs). MMEs are a cost-effective solution for deploying a large number of models using the same fleet of resources and a shared serving container to host all of your models. Instead of using multiple single-model endpoints, you can reduce your hosting costs by deploying multiple models while paying only for a single inference environment. Additionally, MMEs reduce deployment overhead because SageMaker manages loading models in memory and scaling them based on the traffic patterns to your endpoint.

In this post, we show how to run multiple deep learning ensemble models on a GPU instance with a SageMaker MME. To follow along with this example, you can find the code on the public SageMaker examples repository.

How SageMaker MMEs with GPU work

With MMEs, a single container hosts multiple models. SageMaker controls the lifecycle of models hosted on the MME by loading and unloading them into the container’s memory. Instead of downloading all the models to the endpoint instance, SageMaker dynamically loads and caches the models as they are invoked.

When an invocation request for a particular model is made, SageMaker does the following:

- It first routes the request to the endpoint instance.

- If the model has not been loaded, it downloads the model artifact from Amazon Simple Storage Service (Amazon S3) to that instance’s Amazon Elastic Block Store (Amazon EBS) volume.

- It loads the model to the container’s memory on the GPU-accelerated compute instance. If the model is already loaded in the container’s memory, invocation is faster because no further steps are needed.

When an additional model needs to be loaded, and the instance’s memory utilization is high, SageMaker will unload unused models from that instance’s container to ensure that there is enough memory. These unloaded models will remain on the instance’s EBS volume so that they can be loaded into the container’s memory later, thereby removing the need to download them again from the S3 bucket. However, if the instance’s storage volume reaches its capacity, SageMaker will delete the unused models from the storage volume. In cases where the MME receives many invocation requests, and additional instances (or an auto-scaling policy) are in place, SageMaker routes some requests to other instances in the inference cluster to accommodate the high traffic.
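
The loading and eviction behavior described above can be illustrated with a toy simulation. This is a simplified sketch and not SageMaker's actual implementation: the class name `ToyMMEInstance`, the capacities (counted in models rather than bytes), and the eviction order (least recently used in memory, oldest download on disk) are all assumptions made for illustration.

```python
from collections import OrderedDict


class ToyMMEInstance:
    """Toy model of one MME instance: a memory cache backed by a disk cache.

    Hypothetical illustration only. Eviction order is an assumption; the
    source text only says that unused models are unloaded or deleted.
    """

    def __init__(self, memory_slots, disk_slots):
        if disk_slots <= memory_slots:
            raise ValueError("disk_slots must be larger than memory_slots")
        self.memory_slots = memory_slots
        self.disk_slots = disk_slots
        self.memory = OrderedDict()  # models loaded in container memory
        self.disk = OrderedDict()    # artifacts cached on the instance volume

    def invoke(self, model_name):
        """Return the list of steps needed to serve a request for model_name."""
        if model_name in self.memory:
            self.memory.move_to_end(model_name)  # mark as most recently used
            return ["serve from memory"]

        steps = []
        if model_name not in self.disk:
            if len(self.disk) >= self.disk_slots:
                # Volume is full: delete the oldest artifact that is not loaded
                victim = next(n for n in self.disk if n not in self.memory)
                del self.disk[victim]
                steps.append(f"delete {victim} from disk")
            self.disk[model_name] = True
            steps.append("download from S3")

        if len(self.memory) >= self.memory_slots:
            # Memory is full: unload the least recently used model
            victim, _ = self.memory.popitem(last=False)
            steps.append(f"unload {victim} from memory")
        self.memory[model_name] = True
        steps.append("load into memory")
        return steps


instance = ToyMMEInstance(memory_slots=2, disk_slots=3)
for name in ["a", "a", "b", "c", "a", "d"]:
    print(name, instance.invoke(name))
```

In this sketch, a model that was unloaded from memory but is still on disk (the second request for `a` after `c` arrives) skips the download step, which mirrors the caching benefit described above.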

This not only provides a cost saving mechanism, but also enables you to dynamically deploy new models and deprecate old ones. To add a new model, you upload it to the S3 bucket the MME is configured to use and invoke it. To delete a model, stop sending requests and delete it from the S3 bucket. Adding models or deleting them from an MME doesn’t require updating the endpoint itself!
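
As a minimal sketch of that workflow, the helpers below add and remove a model artifact under the S3 prefix that the MME reads from. The function names, bucket, and prefix are hypothetical; the client argument is expected to behave like a Boto3 S3 client (`upload_file` and `delete_object` are the only calls used).

```python
def add_model(s3_client, bucket, prefix, local_path, artifact_name):
    """Upload a packaged model so the MME can load it on first invocation."""
    key = f"{prefix.rstrip('/')}/{artifact_name}"
    s3_client.upload_file(local_path, bucket, key)
    return key


def remove_model(s3_client, bucket, prefix, artifact_name):
    """Delete a model artifact; stop sending requests for it before calling this."""
    key = f"{prefix.rstrip('/')}/{artifact_name}"
    s3_client.delete_object(Bucket=bucket, Key=key)
    return key
```

With a real client (for example `boto3.client("s3")`), the artifact name relative to the prefix is the value you would later pass as the target model when invoking the endpoint.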

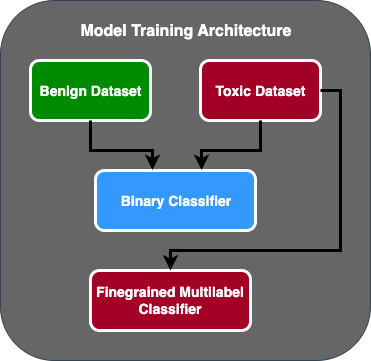

Triton ensembles

The Triton model ensemble represents a pipeline that consists of one model, preprocessing and postprocessing logic, and the connection of input and output tensors between them. A single inference request to an ensemble triggers the run of the entire pipeline as a series of steps using the ensemble scheduler. The scheduler collects the output tensors in each step and provides them as input tensors for other steps according to the specification. To clarify: the ensemble model is still viewed as a single model from an external view.
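
To make the tensor routing concrete, here is a small sketch of how a scheduler could pass named tensors between steps. It is not Triton's implementation: `run_ensemble` and the two toy step functions are hypothetical, and the sketch assumes the steps are listed in dependency order, whereas the real scheduler resolves the order from the data flow. The tensor names mirror the image ensemble configured later in this post.

```python
def run_ensemble(steps, request_inputs, requested_outputs):
    """Run each step in order, routing tensors through a shared pool by name."""
    pool = dict(request_inputs)  # ensemble tensor name -> value
    for step in steps:
        # input_map: model input name -> ensemble tensor name
        model_inputs = {
            model_in: pool[tensor] for model_in, tensor in step["input_map"].items()
        }
        model_outputs = step["model"](model_inputs)
        # output_map: model output name -> ensemble tensor name
        for model_out, tensor in step["output_map"].items():
            pool[tensor] = model_outputs[model_out]
    return {name: pool[name] for name in requested_outputs}


def toy_preprocess(inputs):
    # Stand-in for a preprocessing step: scale byte values to [0, 1]
    return {"DALI_OUTPUT_0": [v / 255 for v in inputs["DALI_INPUT_0"]]}


def toy_classifier(inputs):
    # Stand-in for a model step: normalize values so they sum to 1
    values = inputs["input"]
    total = sum(values)
    return {"InceptionV3/Predictions/Softmax": [v / total for v in values]}


example_steps = [
    {
        "model": toy_preprocess,
        "input_map": {"DALI_INPUT_0": "INPUT"},
        "output_map": {"DALI_OUTPUT_0": "preprocessed_image"},
    },
    {
        "model": toy_classifier,
        "input_map": {"input": "preprocessed_image"},
        "output_map": {"InceptionV3/Predictions/Softmax": "OUTPUT"},
    },
]

result = run_ensemble(example_steps, {"INPUT": [0, 51, 204]}, ["OUTPUT"])
print(result)
```

The caller only sees `INPUT` and `OUTPUT`; the intermediate `preprocessed_image` tensor stays inside the pipeline, which is why the ensemble looks like a single model from the outside.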

Triton server architecture includes a model repository: a file system-based repository of the models that Triton will make available for inferencing. Triton can access models from one or more locally accessible file paths or from remote locations like Amazon S3.

Each model in a model repository must include a model configuration that provides required and optional information about the model. Typically, this configuration is provided in a config.pbtxt file specified as ModelConfig protobuf. A minimal model configuration must specify the platform or backend (like PyTorch or TensorFlow), the max_batch_size property, and the input and output tensors of the model.

Triton on SageMaker

SageMaker enables model deployment using Triton server with custom code. This functionality is available through the SageMaker managed Triton Inference Server Containers. These containers support common machine learning (ML) frameworks (like TensorFlow, ONNX, and PyTorch, as well as custom model formats) and useful environment variables that let you optimize performance on SageMaker. Using SageMaker Deep Learning Containers (DLC) images is recommended because they’re maintained and regularly updated with security patches.

Solution walkthrough

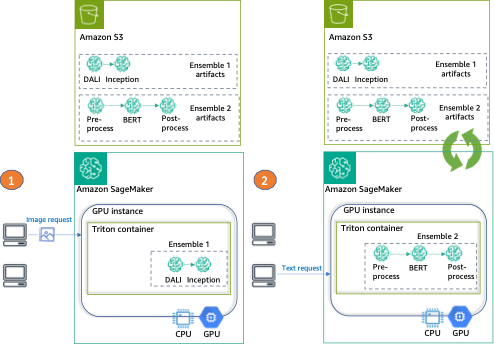

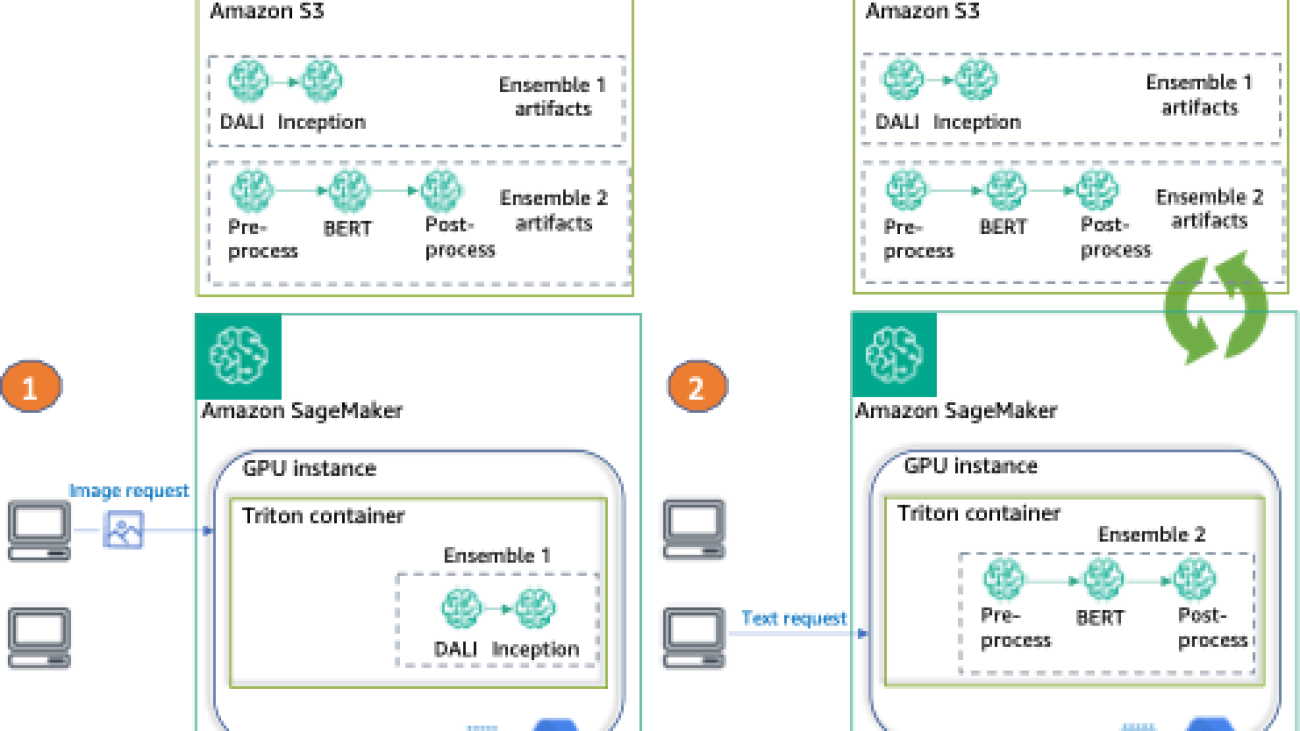

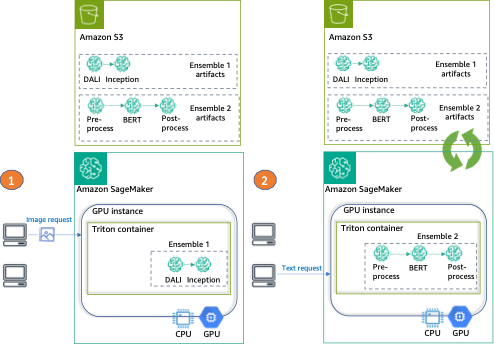

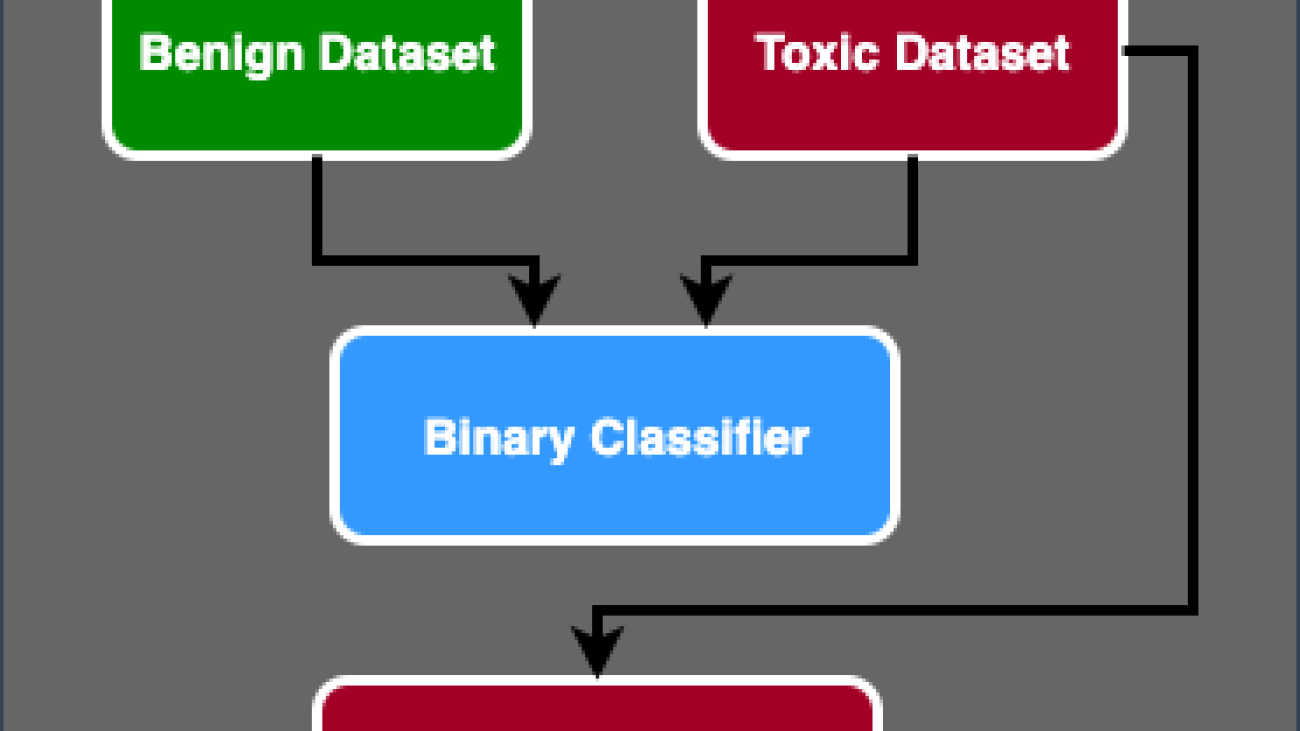

For this post, we deploy two different types of ensembles on a GPU instance, using Triton and a single SageMaker endpoint.

The first ensemble consists of two models: a DALI model for image preprocessing and a TensorFlow Inception v3 model for actual inference. The pipeline ensemble takes encoded images as an input, which will have to be decoded, resized to 299×299 resolution, and normalized. This preprocessing will be handled by the DALI model. DALI is an open-source library for common image and speech preprocessing tasks such as decoding and data augmentation. Inception v3 is an image recognition model that consists of symmetric and asymmetric convolutions, average and max pooling, and fully connected layers (and therefore is well suited for GPU usage).

The second ensemble transforms raw natural language sentences into embeddings and consists of three models. First, a preprocessing model (implemented in Python) tokenizes the input text. Then we use a pre-trained BERT (uncased) model from the Hugging Face Model Hub to extract token embeddings. BERT is an English language model that was trained using a masked language modeling (MLM) objective. Finally, we apply a postprocessing model where the raw token embeddings from the previous step are combined into sentence embeddings.
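
The text does not spell out how token embeddings are combined. One common choice is mean pooling over the non-padding tokens, sketched below in plain Python. Treat this as an assumption rather than the post's actual postprocessing code; `mean_pool` is a hypothetical helper name.

```python
def mean_pool(token_embeddings, attention_mask):
    """Average token vectors, ignoring padding positions (mask value 0)."""
    dim = len(token_embeddings[0])
    summed = [0.0] * dim
    count = 0
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            count += 1
            for i, value in enumerate(vector):
                summed[i] += value
    if count == 0:
        raise ValueError("attention_mask selects no tokens")
    return [total / count for total in summed]


# Three token vectors of dimension 2; the last one is padding and is ignored
sentence_embedding = mean_pool([[1.0, 2.0], [3.0, 4.0], [9.0, 9.0]], [1, 1, 0])
print(sentence_embedding)
```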

After we configure Triton to use these ensembles, we show how to configure and run the SageMaker MME.

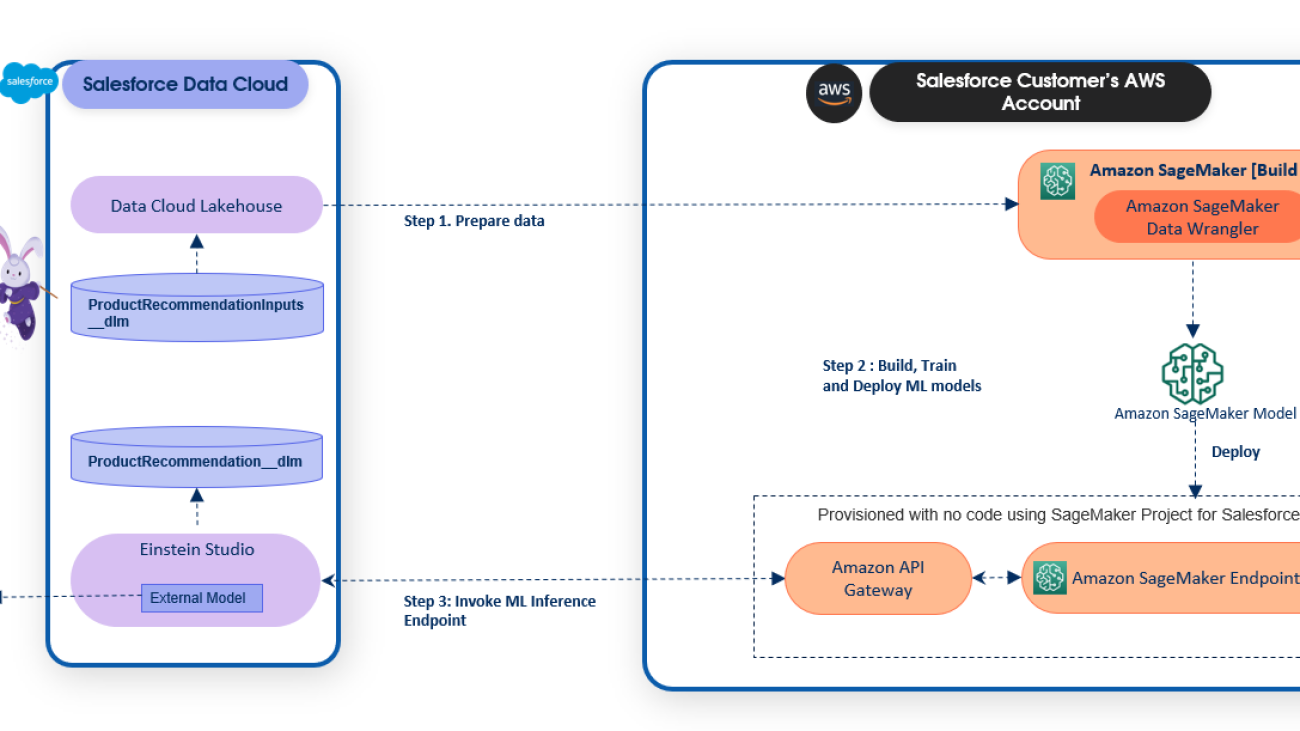

Finally, we provide an example of each ensemble invocation, as can be seen in the following diagram:

- Ensemble 1 – Invoke the endpoint with an image, specifying DALI-Inception as the target ensemble

- Ensemble 2 – Invoke the same endpoint, this time with text input and requesting the preprocess-BERT-postprocess ensemble

Set up the environment

First, we set up the needed environment. This includes updating AWS libraries (like Boto3 and the SageMaker SDK) and installing the dependencies required to package our ensembles and run inferences using Triton. We also use the SageMaker SDK default execution role, which enables SageMaker to access Amazon S3 (where our model artifacts are stored) and the container registry (from which the NVIDIA Triton image is pulled). See the following code:

import boto3, json, sagemaker, time
from sagemaker import get_execution_role
import nvidia.dali as dali
import nvidia.dali.types as types

# SageMaker variables
sm_client = boto3.client(service_name="sagemaker")
runtime_sm_client = boto3.client("sagemaker-runtime")
sagemaker_session = sagemaker.Session(boto_session=boto3.Session())
role = get_execution_role()

# Other variables
instance_type = "ml.g4dn.4xlarge"
sm_model_name = "triton-tf-dali-ensemble-" + time.strftime("%Y-%m-%d-%H-%M-%S", time.gmtime())
endpoint_config_name = "triton-tf-dali-ensemble-" + time.strftime("%Y-%m-%d-%H-%M-%S", time.gmtime())
endpoint_name = "triton-tf-dali-ensemble-" + time.strftime("%Y-%m-%d-%H-%M-%S", time.gmtime())

Prepare ensembles

In this next step, we prepare the two ensembles: the TensorFlow (TF) Inception with DALI preprocessing and BERT with Python preprocessing and postprocessing.

This entails downloading the pre-trained models, providing the Triton configuration files, and packaging the artifacts to be stored in Amazon S3 before deploying.

Prepare the TF and DALI ensemble

First, we prepare the directories for storing our models and configurations: for the TF Inception (inception_graphdef), for DALI preprocessing (dali), and for the ensemble (ensemble_dali_inception). Because Triton supports model versioning, we also add the model version to the directory path (denoted as 1 because we only have one version). To learn more about the Triton version policy, refer to Version Policy. Next, we download the Inception v3 model, extract it, and copy it to the inception_graphdef model directory.
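
The directory layout described above can be created with a few lines of Python. This is a minimal sketch: the helper name `create_model_directories` is hypothetical, the `model_repository` root matches the paths used in the configuration files in this post, and the model download step is intentionally left out.

```python
from pathlib import Path


def create_model_directories(root="model_repository", version="1"):
    """Create <root>/<model>/<version> for each model in the image ensemble."""
    created = []
    for model_name in ("inception_graphdef", "dali", "ensemble_dali_inception"):
        version_dir = Path(root) / model_name / version
        version_dir.mkdir(parents=True, exist_ok=True)  # safe to re-run
        created.append(version_dir)
    return created
```

Calling `create_model_directories()` from the notebook's working directory produces the three versioned model folders that the later steps write into.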

Now, we configure Triton to use our ensemble pipeline. In a config.pbtxt file, we specify the input and output tensor shapes and types, and the steps the Triton scheduler needs to take (DALI preprocessing and the Inception model for image classification):

%%writefile model_repository/ensemble_dali_inception/config.pbtxt
name: "ensemble_dali_inception"
platform: "ensemble"
max_batch_size: 256
input [
  {
    name: "INPUT"
    data_type: TYPE_UINT8
    dims: [ -1 ]
  }
]
output [
  {
    name: "OUTPUT"
    data_type: TYPE_FP32
    dims: [ 1001 ]
  }
]
ensemble_scheduling {
  step [
    {
      model_name: "dali"
      model_version: -1
      input_map {
        key: "DALI_INPUT_0"
        value: "INPUT"
      }
      output_map {
        key: "DALI_OUTPUT_0"
        value: "preprocessed_image"
      }
    },
    {
      model_name: "inception_graphdef"
      model_version: -1
      input_map {
        key: "input"
        value: "preprocessed_image"
      }
      output_map {
        key: "InceptionV3/Predictions/Softmax"
        value: "OUTPUT"
      }
    }
  ]
}

Next, we configure each of the models. First, the model config for DALI backend:

%%writefile model_repository/dali/config.pbtxt
name: "dali"
backend: "dali"
max_batch_size: 256
input [
  {
    name: "DALI_INPUT_0"
    data_type: TYPE_UINT8
    dims: [ -1 ]
  }
]
output [
  {
    name: "DALI_OUTPUT_0"
    data_type: TYPE_FP32
    dims: [ 299, 299, 3 ]
  }
]
parameters: [
  {
    key: "num_threads"
    value: { string_value: "12" }
  }
]

Next, the model configuration for TensorFlow Inception v3 we downloaded earlier:

%%writefile model_repository/inception_graphdef/config.pbtxt
name: "inception_graphdef"
platform: "tensorflow_graphdef"
max_batch_size: 256
input [
  {
    name: "input"
    data_type: TYPE_FP32
    format: FORMAT_NHWC
    dims: [ 299, 299, 3 ]
  }
]
output [
  {
    name: "InceptionV3/Predictions/Softmax"
    data_type: TYPE_FP32
    dims: [ 1001 ]
    label_filename: "inception_labels.txt"
  }
]
instance_group [
  {
    kind: KIND_GPU
  }
]

Because this is a classification model, we also need to copy the Inception model labels to the inception_graphdef directory in the model repository. These labels include 1,000 class labels from the ImageNet dataset.

!aws s3 cp s3://sagemaker-sample-files/datasets/labels/inception_labels.txt model_repository/inception_graphdef/inception_labels.txt

Next, we define the DALI pipeline that handles our preprocessing and serialize it to a file. The preprocessing includes reading the image (using CPU), decoding (accelerated using GPU), and resizing and normalizing the image.

@dali.pipeline_def(batch_size=3, num_threads=1, device_id=0)
def pipe():
    """Create a pipeline that decodes, resizes, and normalizes encoded images."""
    # Encoded image bytes arrive from Triton under the name DALI_INPUT_0
    images = dali.fn.external_source(device="cpu", name="DALI_INPUT_0")
    # "mixed" takes CPU input and performs the decoding on the GPU
    images = dali.fn.decoders.image(images, device="mixed", output_type=types.RGB)
    images = dali.fn.resize(images, resize_x=299, resize_y=299)  # resize image to the default 299x299 size
    images = dali.fn.crop_mirror_normalize(
        images,
        dtype=types.FLOAT,
        output_layout="HWC",
        crop=(299, 299),  # crop image to the default 299x299 size
        mean=[0.485 * 255, 0.456 * 255, 0.406 * 255],  # per-channel mean subtracted from each pixel
        std=[0.229 * 255, 0.224 * 255, 0.225 * 255],  # per-channel standard deviation each pixel is divided by
    )
    return images

pipe().serialize(filename="model_repository/dali/1/model.dali")
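
To make the normalization step concrete, the per-channel arithmetic is (pixel - mean) / std. The following plain-Python sketch reproduces only that arithmetic for a single RGB pixel, using the mean and standard deviation values from the pipeline above. The helper name `normalize_pixel` is hypothetical, and this is not how DALI implements the operation.

```python
# Per-channel statistics, scaled to the 0-255 pixel range as in the pipeline
MEAN = [0.485 * 255, 0.456 * 255, 0.406 * 255]
STD = [0.229 * 255, 0.224 * 255, 0.225 * 255]


def normalize_pixel(rgb):
    """Apply (value - mean) / std to each channel of one RGB pixel."""
    return [(value - mean) / std for value, mean, std in zip(rgb, MEAN, STD)]


# A pixel equal to the channel means maps to zero in every channel
print(normalize_pixel(MEAN))
# A pure white pixel maps to positive values in every channel
print(normalize_pixel([255, 255, 255]))
```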

Finally, we package the artifacts together and upload them as a single object to Amazon S3:

!tar -cvzf model_tf_dali.tar.gz -C model_repository .

model_uri = sagemaker_session.upload_data(
    path="model_tf_dali.tar.gz", key_prefix="triton-mme-gpu-ensemble"
)
print("S3 model uri: {}".format(model_uri))

Prepare the TensorRT and Python ensemble

For this example, we use a pre-trained model from the transformers library.

You can find all models (preprocess and postprocess, along with config.pbtxt files) in the folder ensemble_hf. Our file system structure will include four directories (three for the individual model steps and one for the ensemble) as well as their respective versions:

ensemble_hf
├── bert-trt
│   ├── model.pt
│   └── config.pbtxt
├── ensemble
│   ├── 1
│   └── config.pbtxt
├── postprocess
│   ├── 1
│   │   └── model.py
│   └── config.pbtxt
└── preprocess
    ├── 1
    │   └── model.py
    └── config.pbtxt

In the workspace folder, we provide two scripts: one to convert the model into ONNX format (onnx_exporter.py) and one to compile it with TensorRT (generate_model_trt.sh).

Triton natively supports the TensorRT runtime, which enables you to easily deploy a TensorRT engine, thereby optimizing for a selected GPU architecture.

To make sure we use the TensorRT version and dependencies that are compatible with the ones in our Triton container, we compile the model using the corresponding version of NVIDIA’s PyTorch container image:

model_id = "sentence-transformers/all-MiniLM-L6-v2"

! docker run --gpus=all --rm -it -v `pwd`/workspace:/workspace nvcr.io/nvidia/pytorch:22.10-py3 /bin/bash generate_model_trt.sh $model_id

We then copy the model artifacts to the directory we created earlier and add a version to the path:

! mkdir -p ensemble_hf/bert-trt/1 && mv workspace/model.plan ensemble_hf/bert-trt/1/model.plan && rm -rf workspace/model.onnx workspace/core*

We use conda-pack to generate a Conda environment that the Triton Python backend will use in preprocessing and postprocessing:

!bash conda_dependencies.sh
!cp processing_env.tar.gz ensemble_hf/postprocess/ && cp processing_env.tar.gz ensemble_hf/preprocess/
!rm processing_env.tar.gz

Finally, we upload the model artifacts to Amazon S3:

!tar -C ensemble_hf/ -czf model_trt_python.tar.gz .

model_uri = sagemaker_session.upload_data(
    path="model_trt_python.tar.gz", key_prefix="triton-mme-gpu-ensemble"
)
print("S3 model uri: {}".format(model_uri))

Run ensembles on a SageMaker MME GPU instance

Now that our ensemble artifacts are stored in Amazon S3, we can configure and launch the SageMaker MME.

We start by retrieving the container image URI for the Triton DLC image that matches the one in our Region’s container registry (and is used for TensorRT model compilation):

account_id_map = {
    "us-east-1": "785573368785",
    "us-east-2": "007439368137",
    "us-west-1": "710691900526",
    "us-west-2": "301217895009",
    "eu-west-1": "802834080501",
    "eu-west-2": "205493899709",
    "eu-west-3": "254080097072",
    "eu-north-1": "601324751636",
    "eu-south-1": "966458181534",
    "eu-central-1": "746233611703",
    "ap-east-1": "110948597952",
    "ap-south-1": "763008648453",
    "ap-northeast-1": "941853720454",
    "ap-northeast-2": "151534178276",
    "ap-southeast-1": "324986816169",
    "ap-southeast-2": "355873309152",
    "cn-northwest-1": "474822919863",
    "cn-north-1": "472730292857",
    "sa-east-1": "756306329178",
    "ca-central-1": "464438896020",
    "me-south-1": "836785723513",
    "af-south-1": "774647643957",
}

region = boto3.Session().region_name
if region not in account_id_map:
    # Raising a plain string is a TypeError in Python 3, so raise an exception class
    raise ValueError("UNSUPPORTED REGION")

base = "amazonaws.com.cn" if region.startswith("cn-") else "amazonaws.com"
triton_image_uri = "{account_id}.dkr.ecr.{region}.{base}/sagemaker-tritonserver:23.03-py3".format(
    account_id=account_id_map[region], region=region, base=base
)

Next, we create the model in SageMaker. In the create_model request, we describe the container to use and the location of model artifacts, and we use the Mode parameter to specify that this is a multi-model endpoint.

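# The original listing does not show where models_s3_location is set.
# Assumption: it is the S3 prefix that holds both uploaded archives,
# derived here from the URI returned by the last upload_data call.
models_s3_location = model_uri.rsplit("/", 1)[0] + "/"

container = {
    "Image": triton_image_uri,
    "ModelDataUrl": models_s3_location,
    "Mode": "MultiModel",
}

create_model_response = sm_client.create_model(
    ModelName=sm_model_name, ExecutionRoleArn=role, PrimaryContainer=container
)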

To host our ensembles, we create an endpoint configuration with the create_endpoint_config API call, and then create an endpoint with the create_endpoint API. SageMaker then deploys all the containers that you defined for the model in the hosting environment.

create_endpoint_config_response = sm_client.create_endpoint_config(
    EndpointConfigName=endpoint_config_name,
    ProductionVariants=[
        {
            "InstanceType": instance_type,
            "InitialVariantWeight": 1,
            "InitialInstanceCount": 1,
            "ModelName": sm_model_name,
            "VariantName": "AllTraffic",
        }
    ],
)

create_endpoint_response = sm_client.create_endpoint(
    EndpointName=endpoint_name, EndpointConfigName=endpoint_config_name
)
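
Endpoint creation is asynchronous, so it can help to wait until the endpoint is in service before invoking it. The following is a minimal polling sketch, not part of the original walkthrough: the helper name `wait_for_endpoint` and its default intervals are assumptions, and the client argument is expected to behave like the Boto3 SageMaker client (only `describe_endpoint` and its `EndpointStatus` field are used).

```python
import time


def wait_for_endpoint(sm_client, endpoint_name, poll_seconds=30, timeout_seconds=1800):
    """Poll describe_endpoint until the endpoint is InService, fails, or times out."""
    deadline = time.monotonic() + timeout_seconds
    while True:
        response = sm_client.describe_endpoint(EndpointName=endpoint_name)
        status = response["EndpointStatus"]
        if status == "InService":
            return status
        if status == "Failed":
            raise RuntimeError(f"Endpoint {endpoint_name} failed to deploy")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"Endpoint {endpoint_name} still in status {status}")
        time.sleep(poll_seconds)
```

In the notebook, this would be called as `wait_for_endpoint(sm_client, endpoint_name)`. Boto3 also offers a built-in waiter (`sm_client.get_waiter("endpoint_in_service")`) that serves the same purpose.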

Although in this example we are setting a single instance to host our model, SageMaker MMEs fully support setting an auto scaling policy. For more information on this feature, see Run multiple deep learning models on GPU with Amazon SageMaker multi-model endpoints.
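
As a rough sketch of what such a policy could look like, the helper below builds the request parameters for Application Auto Scaling. The helper name, the instance limits, and the target value are hypothetical, and the invocations-per-instance metric is only one possible choice; the referenced post may recommend a different metric for GPU workloads.

```python
def build_scaling_requests(
    endpoint_name,
    variant_name="AllTraffic",
    min_instances=1,
    max_instances=2,
    target_invocations=100.0,
):
    """Build kwargs for register_scalable_target and put_scaling_policy."""
    resource_id = f"endpoint/{endpoint_name}/variant/{variant_name}"
    dimension = "sagemaker:variant:DesiredInstanceCount"
    target = {
        "ServiceNamespace": "sagemaker",
        "ResourceId": resource_id,
        "ScalableDimension": dimension,
        "MinCapacity": min_instances,
        "MaxCapacity": max_instances,
    }
    policy = {
        "PolicyName": f"{endpoint_name}-invocations-target",
        "ServiceNamespace": "sagemaker",
        "ResourceId": resource_id,
        "ScalableDimension": dimension,
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            # Assumed target: average invocations per instance per minute
            "TargetValue": target_invocations,
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "SageMakerVariantInvocationsPerInstance"
            },
        },
    }
    return target, policy
```

With a Boto3 `application-autoscaling` client named `aas`, the two dictionaries would be passed as `aas.register_scalable_target(**target)` followed by `aas.put_scaling_policy(**policy)`.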

Create request payloads and invoke the MME for each model

After our real-time MME is deployed, it’s time to invoke our endpoint with each of the model ensembles we used.

First, we create a payload for the DALI-Inception ensemble. We use the shiba_inu_dog.jpg image from the SageMaker public dataset of pet images. We load the image as an encoded array of bytes to use in the DALI backend (to learn more, see Image Decoder examples).

import numpy as np

sample_img_fname = "shiba_inu_dog.jpg"

s3_client = boto3.client("s3")
s3_client.download_file(
    "sagemaker-sample-files", "datasets/image/pets/shiba_inu_dog.jpg", sample_img_fname
)


def load_image(img_path):
    """
    Loads image as an encoded array of bytes.
    This is a typical approach you want to use in DALI backend
    """
    with open(img_path, "rb") as f:
        img = f.read()
    return np.array(list(img)).astype(np.uint8)


rv = load_image(sample_img_fname)
print(f"Shape of image {rv.shape}")

rv2 = np.expand_dims(rv, 0)
print(f"Shape of expanded image array {rv2.shape}")

payload = {
    "inputs": [
        {
            "name": "INPUT",
            "shape": rv2.shape,
            "datatype": "UINT8",
            "data": rv2.tolist(),
        }
    ]
}

With our encoded image and payload ready, we invoke the endpoint.

Note that we specify our target ensemble to be the model_tf_dali.tar.gz artifact. The TargetModel parameter is what differentiates MMEs from single-model endpoints and enables us to direct the request to the right model.

response = runtime_sm_client.invoke_endpoint(
    EndpointName=endpoint_name,
    ContentType="application/octet-stream",
    Body=json.dumps(payload),
    TargetModel="model_tf_dali.tar.gz",
)

The response includes metadata about the invocation (such as model name and version) and the actual inference response in the data part of the output object. In this example, we get an array of 1,001 values, where each value is the probability of the class the image belongs to (1,000 classes and 1 extra for others).
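
A short sketch of how the class probabilities could be read from that response follows. It assumes the body is the JSON form of Triton's inference response, with an `outputs` list whose `data` field is a flat list of floats for the batch. The helper name `top_k_classes` is hypothetical.

```python
import json


def top_k_classes(response_body, k=3, output_name="OUTPUT"):
    """Return (class_index, probability) pairs for the k most likely classes."""
    result = json.loads(response_body)
    output = next(o for o in result["outputs"] if o["name"] == output_name)
    # Keep only the first item of the batch (shape is [batch, num_classes])
    scores = output["data"][: output["shape"][-1]]
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return [(index, scores[index]) for index in ranked[:k]]
```

In the notebook, this would be called as `top_k_classes(response["Body"].read())`; the returned indices can then be mapped to names using the inception_labels.txt file.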

Next, we invoke our MME again, but this time target the second ensemble. Here the data is just two simple text sentences:

text_inputs = ["Sentence 1", "Sentence 2"]

To simplify communication with Triton, the Triton project provides several client libraries. We use the Python HTTP client library to prepare the payload in our request:

import tritonclient.http as http_client

text_inputs = ["Sentence 1", "Sentence 2"]

inputs = []
inputs.append(http_client.InferInput("INPUT0", [len(text_inputs), 1], "BYTES"))

batch_request = [[text_inputs[i]] for i in range(len(text_inputs))]
input0_real = np.array(batch_request, dtype=np.object_)
inputs[0].set_data_from_numpy(input0_real, binary_data=True)

outputs = []
outputs.append(http_client.InferRequestedOutput("finaloutput"))

request_body, header_length = http_client.InferenceServerClient.generate_request_body(
    inputs, outputs=outputs
)

Now we are ready to invoke the endpoint—this time, the target model is the model_trt_python.tar.gz ensemble:

response = runtime_sm_client.invoke_endpoint(
    EndpointName=endpoint_name,
    ContentType="application/vnd.sagemaker-triton.binary+json;json-header-size={}".format(
        header_length
    ),
    Body=request_body,
    TargetModel="model_trt_python.tar.gz",
)

The response is the sentence embeddings that can be used in a variety of natural language processing (NLP) applications.
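
Because this request used the binary format, the response body is not plain JSON. As a sketch under that assumption, the response content type carries the same `json-header-size` field as the request: the first bytes of the body are a JSON header and the remainder is raw tensor data. The helper name `split_binary_response` is hypothetical.

```python
import json

CONTENT_TYPE_PREFIX = "application/vnd.sagemaker-triton.binary+json;json-header-size="


def split_binary_response(content_type, body):
    """Split a binary+json body into its JSON header and raw tensor bytes."""
    if not content_type.startswith(CONTENT_TYPE_PREFIX):
        raise ValueError(f"Unexpected content type: {content_type}")
    header_size = int(content_type[len(CONTENT_TYPE_PREFIX):])
    header = json.loads(body[:header_size])
    return header, body[header_size:]
```

In practice, the Triton client library can do the decoding for you: `http_client.InferenceServerClient.parse_response_body(body, header_length=header_size)` returns a result object whose `as_numpy("finaloutput")` method yields the embeddings as a NumPy array.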

Clean up

Lastly, we clean up and delete the endpoint, endpoint configuration, and model:

sm_client.delete_endpoint(EndpointName=endpoint_name)
sm_client.delete_endpoint_config(EndpointConfigName=endpoint_config_name)
sm_client.delete_model(ModelName=sm_model_name)

Conclusion

In this post, we showed how to configure, deploy, and invoke a SageMaker MME with Triton ensembles on a GPU-accelerated instance. We hosted two ensembles on a single real-time inference environment, which reduced our hosting cost by 50% compared with running each ensemble on its own endpoint (for a g4dn.4xlarge instance, this represents over $13,000 in yearly savings). Although this example used only two pipelines, SageMaker MMEs can support thousands of model ensembles, making it an extraordinary cost savings mechanism. Furthermore, you can use SageMaker MMEs’ dynamic ability to load (and unload) models to minimize the operational overhead of managing model deployments in production.

About the authors

Saurabh Trikande is a Senior Product Manager for Amazon SageMaker Inference. He is passionate about working with customers and is motivated by the goal of democratizing machine learning. He focuses on core challenges related to deploying complex ML applications, multi-tenant ML models, cost optimizations, and making deployment of deep learning models more accessible. In his spare time, Saurabh enjoys hiking, learning about innovative technologies, following TechCrunch, and spending time with his family.

Nikhil Kulkarni is a software developer with AWS Machine Learning, focusing on making machine learning workloads more performant on the cloud, and is a co-creator of AWS Deep Learning Containers for training and inference. He’s passionate about distributed Deep Learning Systems. Outside of work, he enjoys reading books, fiddling with the guitar, and making pizza.

Uri Rosenberg is the AI & ML Specialist Technical Manager for Europe, Middle East, and Africa. Based out of Israel, Uri works to empower enterprise customers to design, build, and operate ML workloads at scale. In his spare time, he enjoys cycling, backpacking, and backpropagating.

Eliuth Triana Isaza is a Developer Relations Manager on the NVIDIA-AWS team. He connects Amazon and AWS product leaders, developers, and scientists with NVIDIA technologists and product leaders to accelerate Amazon ML/DL workloads, EC2 products, and AWS AI services. In addition, Eliuth is a passionate mountain biker, skier, and poker player.

Read More

Giuseppe Angelo Porcelli is a Principal Machine Learning Specialist Solutions Architect for Amazon Web Services. With several years software engineering and an ML background, he works with customers of any size to understand their business and technical needs and design AI and ML solutions that make the best use of the AWS Cloud and the Amazon Machine Learning stack. He has worked on projects in different domains, including MLOps, computer vision, and NLP, involving a broad set of AWS services. In his free time, Giuseppe enjoys playing football.

Giuseppe Angelo Porcelli is a Principal Machine Learning Specialist Solutions Architect for Amazon Web Services. With several years software engineering and an ML background, he works with customers of any size to understand their business and technical needs and design AI and ML solutions that make the best use of the AWS Cloud and the Amazon Machine Learning stack. He has worked on projects in different domains, including MLOps, computer vision, and NLP, involving a broad set of AWS services. In his free time, Giuseppe enjoys playing football. Bruno Pistone is an AI/ML Specialist Solutions Architect for AWS based in Milan. He works with customers of any size, helping them understand their technical needs and design AI and ML solutions that make the best use of the AWS Cloud and the Amazon Machine Learning stack. His field of expertice includes machine learning end to end, machine learning endustrialization, and generative AI. He enjoys spending time with his friends and exploring new places, as well as traveling to new destinations.

Bruno Pistone is an AI/ML Specialist Solutions Architect for AWS based in Milan. He works with customers of any size, helping them understand their technical needs and design AI and ML solutions that make the best use of the AWS Cloud and the Amazon Machine Learning stack. His field of expertice includes machine learning end to end, machine learning endustrialization, and generative AI. He enjoys spending time with his friends and exploring new places, as well as traveling to new destinations.

Saurabh Trikande is a Senior Product Manager for Amazon SageMaker Inference. He is passionate about working with customers and is motivated by the goal of democratizing machine learning. He focuses on core challenges related to deploying complex ML applications, multi-tenant ML models, cost optimizations, and making deployment of deep learning models more accessible. In his spare time, Saurabh enjoys hiking, learning about innovative technologies, following TechCrunch, and spending time with his family.

Saurabh Trikande is a Senior Product Manager for Amazon SageMaker Inference. He is passionate about working with customers and is motivated by the goal of democratizing machine learning. He focuses on core challenges related to deploying complex ML applications, multi-tenant ML models, cost optimizations, and making deployment of deep learning models more accessible. In his spare time, Saurabh enjoys hiking, learning about innovative technologies, following TechCrunch, and spending time with his family. Nikhil Kulkarni is a software developer with AWS Machine Learning, focusing on making machine learning workloads more performant on the cloud, and is a co-creator of AWS Deep Learning Containers for training and inference. He’s passionate about distributed Deep Learning Systems. Outside of work, he enjoys reading books, fiddling with the guitar, and making pizza.

Nikhil Kulkarni is a software developer with AWS Machine Learning, focusing on making machine learning workloads more performant on the cloud, and is a co-creator of AWS Deep Learning Containers for training and inference. He’s passionate about distributed Deep Learning Systems. Outside of work, he enjoys reading books, fiddling with the guitar, and making pizza. Uri Rosenberg is the AI & ML Specialist Technical Manager for Europe, Middle East, and Africa. Based out of Israel, Uri works to empower enterprise customers to design, build, and operate ML workloads at scale. In his spare time, he enjoys cycling, backpacking, and backpropagating.

Uri Rosenberg is the AI & ML Specialist Technical Manager for Europe, Middle East, and Africa. Based out of Israel, Uri works to empower enterprise customers to design, build, and operate ML workloads at scale. In his spare time, he enjoys cycling, backpacking, and backpropagating. Eliuth Triana Isaza is a Developer Relations Manager on the NVIDIA-AWS team. He connects Amazon and AWS product leaders, developers, and scientists with NVIDIA technologists and product leaders to accelerate Amazon ML/DL workloads, EC2 products, and AWS AI services. In addition, Eliuth is a passionate mountain biker, skier, and poker player.

Eliuth Triana Isaza is a Developer Relations Manager on the NVIDIA-AWS team. He connects Amazon and AWS product leaders, developers, and scientists with NVIDIA technologists and product leaders to accelerate Amazon ML/DL workloads, EC2 products, and AWS AI services. In addition, Eliuth is a passionate mountain biker, skier, and poker player.

James Poquiz is a Data Scientist with AWS Professional Services based in Orange County, California. He has a BS in Computer Science from the University of California, Irvine and has several years of experience working in the data domain having played many different roles. Today he works on implementing and deploying scalable ML solutions to achieve business outcomes for AWS clients.

Han Man is a Senior Data Science & Machine Learning Manager with AWS Professional Services based in San Diego, CA. He has a PhD in Engineering from Northwestern University and has several years of experience as a management consultant advising clients in manufacturing, financial services, and energy. Today, he is passionately working with key customers from a variety of industry verticals to develop and implement ML and GenAI solutions on AWS.

Safa Tinaztepe is a full-stack data scientist with AWS Professional Services. He has a BS in computer science from Emory University and has interests in MLOps, distributed systems, and web3.

Munish Dabra is a Principal Solutions Architect at Amazon Web Services (AWS). His current areas of focus are AI/ML and Observability. He has a strong background in designing and building scalable distributed systems. He enjoys helping customers innovate and transform their business in AWS.

Patrick Lin is a Software Development Engineer with Amazon SageMaker Data Wrangler. He is committed to making Amazon SageMaker Data Wrangler the number one data preparation tool for productionized ML workflows. Outside of work, you can find him reading, listening to music, having conversations with friends, and serving at his church.

Arun Anand is a Senior Solutions Architect at Amazon Web Services based in the Houston area. He has 25+ years of experience in designing and developing enterprise applications. He works with partners in the Energy & Utilities segment, providing architectural and best practice recommendations for new and existing solutions.

Rajnish Shaw is a Senior Solutions Architect at Amazon Web Services, with a background as a Product Developer and Architect. Rajnish is passionate about helping customers build applications on the cloud. Outside of work Rajnish enjoys spending time with family and friends, and traveling.

Yuanhua Wang is a software engineer at AWS with more than 15 years of experience in the technology industry. His interests are software architecture and build tools on cloud computing.

Daryl Martis is the Director of Product for Einstein Studio at Salesforce Data Cloud. He has over 10 years of experience in planning, building, launching, and managing world-class solutions for enterprise customers including AI/ML and cloud solutions. He has previously worked in the financial services industry in New York City.

Rachna Chadha is a Principal Solutions Architect AI/ML in Strategic Accounts at AWS. Rachna is an optimist who believes that ethical and responsible use of AI can improve society in the future and bring economic and social prosperity. In her spare time, Rachna likes spending time with her family, hiking, and listening to music.

Ife Stewart is a Principal Solutions Architect in the Strategic ISV segment at AWS. She has been engaged with Salesforce Data Cloud over the last 2 years to help build integrated customer experiences across Salesforce and AWS. Ife has over 10 years of experience in technology. She is an advocate for diversity and inclusion in the technology field.

Dharmendra Kumar Rai (DK Rai) is a Sr. Data Architect, Data Lake & AI/ML, serving strategic customers. He works closely with customers to understand how AWS can help them solve problems, especially in the AI/ML and analytics space. DK has many years of experience in building data-intensive solutions across a range of industry verticals, including high-tech, FinTech, insurance, and consumer-facing applications.

Marc Karp is an ML Architect with the SageMaker Service team. He focuses on helping customers design, deploy, and manage ML workloads at scale. In his spare time, he enjoys traveling and exploring new places.