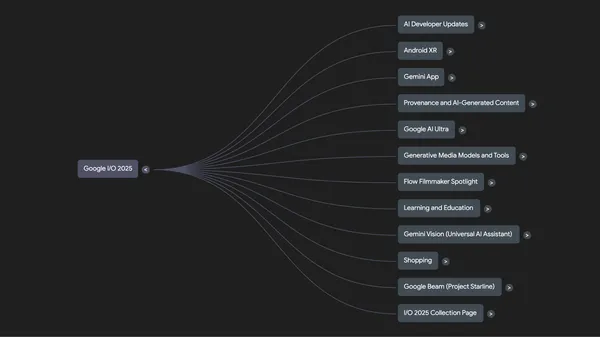

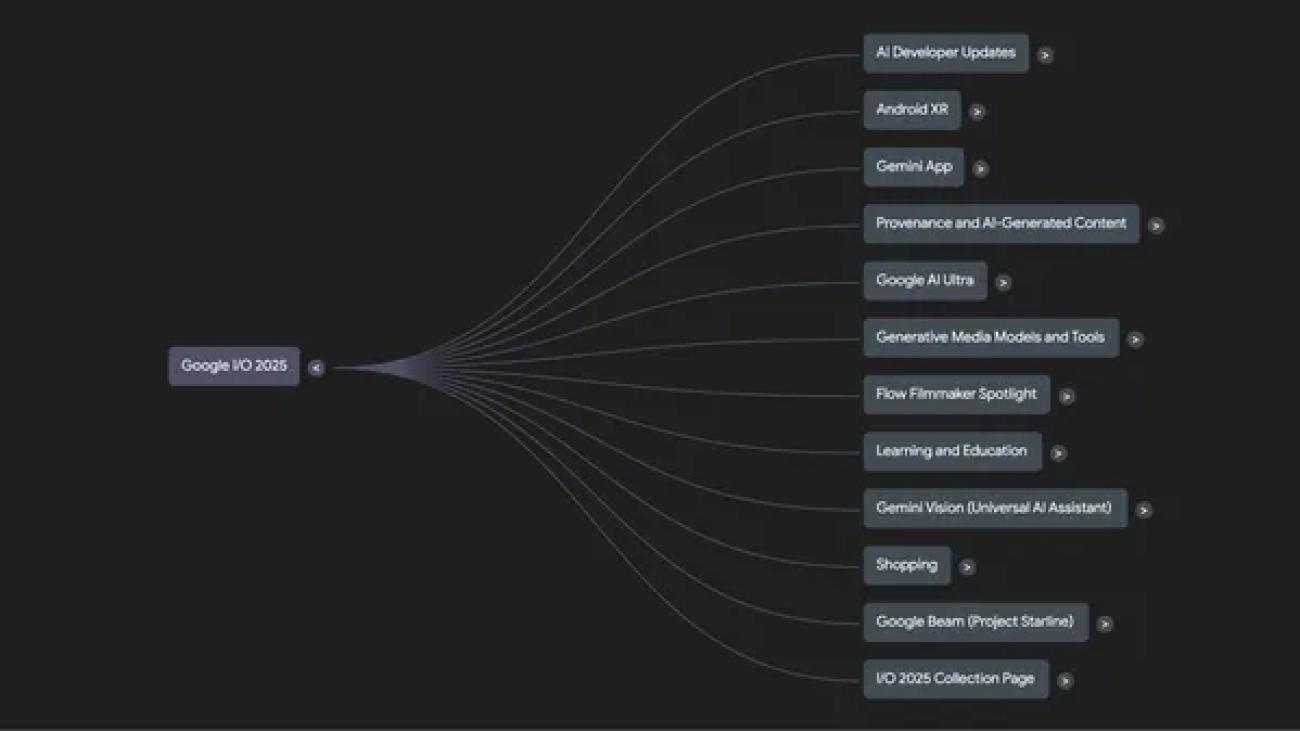

Learn more about the biggest announcements and launches from Google’s 2025 I/O developer conference.

Understand all the I/O news with NotebookLM.

Google I/O 2025 was full of tons of announcements, lots of launches and plenty of demos! And if you just can’t get enough of all things I/O, you can dive deeper into the…

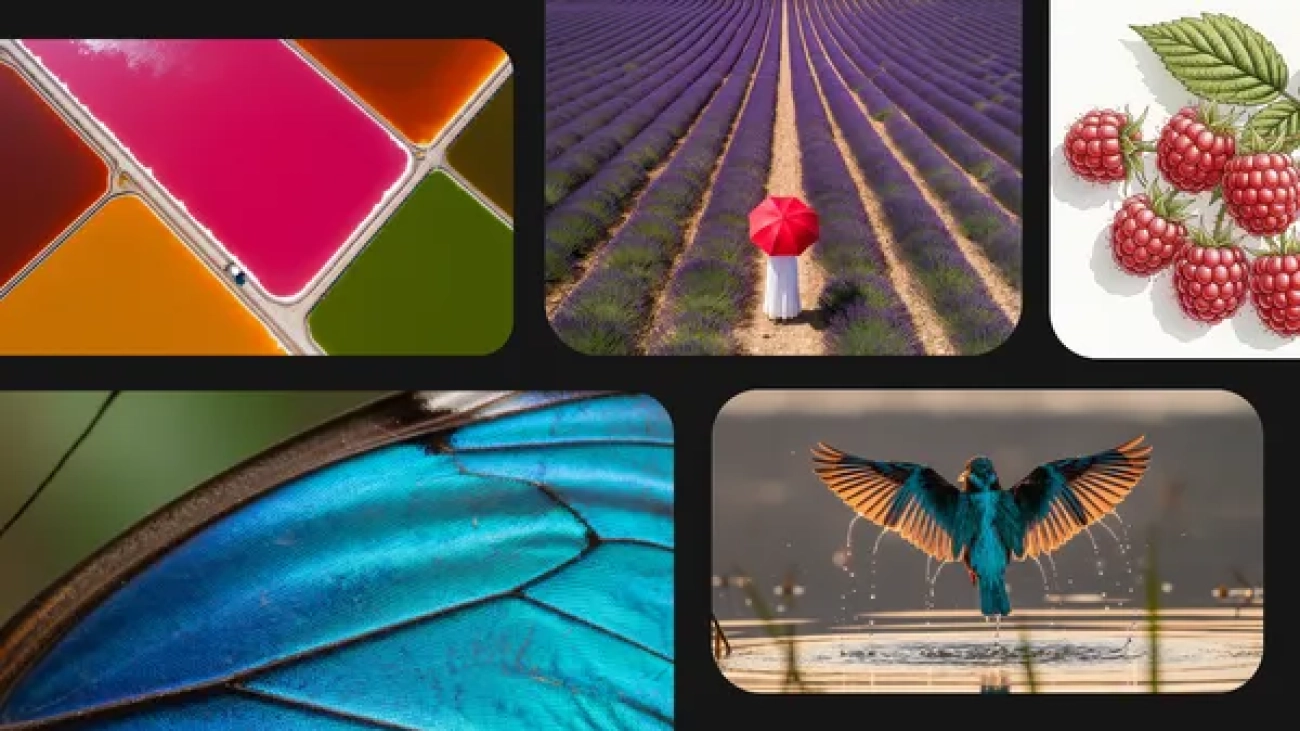

Fuel your creativity with new generative media models and tools

From Imagen 4 and Veo 3 to Flow, try these new generative media tools today.

Gemini 2.5: Our most intelligent models are getting even better

At I/O 2025, we shared updates to our Gemini 2.5 model series and Deep Think, an experimental enhanced reasoning mode for 2.5 Pro.

Gemini gets more personal, proactive and powerful

The Gemini app is getting major new updates, from Veo 3 and Imagen 4 to Deep Research and Canvas.

Darren Aronofsky’s Primordial Soup and Google DeepMind are partnering to explore AI’s role in storytelling.

Today, we’re announcing a partnership between Google DeepMind and Primordial Soup, a new venture dedicated to storytelling innovation founded by pioneering director Darr…

Our vision for building a universal AI assistant

At Google I/O, we discussed how we’re extending Gemini to become a world model.

Google I/O 2025: From research to reality

At our annual developer conference, we announced how we’re making AI even more helpful with Gemini.

AI in Search: Going beyond information to intelligence

Today at I/O, we showed how we’re enhancing Search with our latest Gemini models via AI Mode.

We’re doing cutting-edge research to build the most helpful AI that’s more intelligent, agentic and personalized.