[MUSIC FADES]

ASHLEY LLORENS: So, Chris, you have recently published a new textbook on deep learning, maybe the new definitive textbook on deep learning. Time will tell. So, of course, I want to get into that. But first, I’d like to dive right into a few philosophical questions. In the preface of the book, you make reference to the massive scale of state-of-the-art language models, generative models comprising on the order of a trillion learnable parameters. How well do you think we understand what a system at that scale is actually learning?

CHRIS BISHOP: That’s a super interesting question, Ashley. So in one sense, of course, we understand the systems extremely well because we designed them; we built them. But what’s very interesting about machine learning technology compared to most other technologies is that the, the functionality in large part is learned, is learned from data. And what we discover in particular with these very large language models is, kind of, emergent behavior. As we go up at each factor of 10 in scale, we see qualitatively new properties and capabilities emerging. And that’s super interesting. That, that was called the scaling hypothesis. And it’s proven to be remarkably successful.

LLORENS: Your new book lays out foundations in statistics and probability theory for modern machine learning. Central to those foundations is the concept of probability distributions, in particular learning distributions in the service of helping a machine perform a useful task. For example, if the task is object recognition, we may seek to learn the distribution of pixels you’d expect to see in images corresponding to objects of interest, like a teddy bear or a racecar. On smaller scales, we can at least conceive of the distributions that machines are learning. What does it mean to learn a distribution at the scale of a trillion learnable parameters?

BISHOP: Right. That’s really interesting. So, so first of all, the fundamentals are very solid. The fact that we have this, this, sort of, foundational rock of probability theory on which everything is built is extremely powerful. But then these emergent properties that we talked about are the result of extremely complex statistics. What’s really interesting about these neural networks, let’s say, in comparison with the human brain is that we can perform perfect diagnostics on them. We can understand exactly what each neuron is doing at each moment of time. And, and so we can almost treat the system in a, in a, sort of, somewhat experimental way. We can, we can probe the system. You can apply different inputs and see how different units respond. You can play games like looking at a unit that responds to a particular input and then perhaps amplifying the, amplifying that response, adjusting the input to make that response stronger, seeing what effect it has, and so on. So there’s an aspect of machine learning these days that’s somewhat like experimental neurobiology, except with the big advantage that we have sort of perfect diagnostics.
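
The kind of probing described here, picking a unit and adjusting the input to strengthen its response, can be sketched in a few lines. This is a toy illustration only: the single `tanh` unit, its `weights`, the starting input, and the step size are all hypothetical, and real interpretability work applies the same idea to units inside large trained networks.

```python
import math

# Hypothetical fixed weights of one unit; in practice these come from a trained network.
weights = [0.8, -0.5, 0.3]

def unit_response(x):
    """Response of a single tanh unit to input vector x."""
    return math.tanh(sum(w * xi for w, xi in zip(weights, x)))

def response_gradient(x):
    """Gradient of the unit's response with respect to the input x."""
    r = unit_response(x)
    return [(1.0 - r * r) * w for w in weights]

# Start from an arbitrary input and nudge it uphill on the unit's response.
x = [0.1, 0.1, 0.1]
start = unit_response(x)
for _ in range(50):
    g = response_gradient(x)
    x = [xi + 0.5 * gi for xi, gi in zip(x, g)]
end = unit_response(x)

print(f"response before: {start:.3f}, after: {end:.3f}")
```

The input that emerges shows what the unit is most sensitive to, which is the sense in which the network can be treated experimentally.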

LLORENS: Another concept that is key in machine learning is generalization. In more specialized systems, often smaller systems, we can actually conceive of what we might mean by generalizing. In the object recognition example I used earlier, we may want to train an AI model capable of recognizing any arbitrary image of a teddy bear. Because this is a specialized task, it is easy to grasp what we mean by generalization. But what does generalization mean in our current era of large-scale AI models and systems?

BISHOP: Right. Well, generalization is a fundamental property, of course. If we couldn’t generalize, there’d be no point in building these systems. And again, these, these foundational principles apply equally at a very large scale as they do at a, at a smaller scale. But the concept of generalization really has to do with modeling the distribution from which the data is generated. So if you think about a large language model, it’s trained by predicting the next word or predicting the next token. But really what we’re doing is, is creating a task for the model that forces it to learn the underlying distribution. Now, that distribution may be extremely complex, let’s say, in the case of natural language. It can convey a tremendous amount of meaning. So, really, the system is forced to … in order to get the best possible performance, in order to make the best prediction for the next word, if you like, it’s forced to effectively understand the meaning of the content of the data. In the case of language, the meaning of effectively what’s being said. And so from a mathematical point of view, there’s a very close relationship between learning this probability distribution and the problem of data compression, because it turns out if you want to compress data in a lossless way, the optimal way to do that is to learn the distribution that generates the data. So that’s, that’s … we show that in the book, in fact. And so, and the best way to … let’s take the example of images, for instance. If you’ve got a very, very large number of natural images and you had to compress them, the most efficient way to compress them would be to understand the mechanisms by which the images come about. There are objects. You could, you could pick a car or a bicycle or a house. There’s lighting from different angles, shadows, reflections, and so on. 
And learning about those mechanisms—understanding those mechanisms—will give you the best possible compression, but it’ll also give you the best possible generalization.
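
The link between learning a distribution and lossless compression can be illustrated with a small sketch. The symbol stream and the two candidate models below are invented for illustration. The only fact relied on is Shannon's result that an ideal code spends about -log2 q(x) bits on a symbol x when it is built from a model q.

```python
import math
from collections import Counter

def average_code_length(data, model):
    """Average bits per symbol when coding `data` with an ideal code built from `model`."""
    return sum(-math.log2(model[s]) for s in data) / len(data)

# Hypothetical data: a skewed stream of four symbols.
data = "a" * 70 + "b" * 15 + "c" * 10 + "d" * 5

# Model 1: assumes nothing, so every symbol is treated as equally likely.
uniform = {s: 0.25 for s in "abcd"}

# Model 2: "learned" from the data by counting symbol frequencies.
counts = Counter(data)
learned = {s: counts[s] / len(data) for s in counts}

uniform_bits = average_code_length(data, uniform)
learned_bits = average_code_length(data, learned)
print(f"uniform model: {uniform_bits:.3f} bits/symbol")
print(f"learned model: {learned_bits:.3f} bits/symbol")
```

The model that matches the data-generating distribution more closely needs fewer bits per symbol, which is the sense in which a better model of the distribution is also a better compressor.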

LLORENS: Let’s talk briefly about one last fundamental concept—inductive bias. Of course, as you mentioned, AI models are learned from data and experience, and my question for you is, to what extent do the neural architectures underlying those models represent an inductive bias that shapes the learning?

BISHOP: This is a really interesting question, as well, and it sort of reflects the journey that neural nets have been on in the last, you know, 30–35 years since we first started using gradient-based methods to train them. So, so the idea of inductive bias is that, actually, you can only learn from data in the presence of assumptions. There’s, actually, a theorem called the “no free lunch” theorem, which proves this mathematically. And so, to be able to generalize, you have to have data and some sort of assumption, some set of assumptions. Now, if you go back, you know, 30 years, 35 years, when I first got excited about neural nets, we had very simple one– and two–layered neural nets. We had to put a lot of assumptions in. We’d have to code a lot of human expert knowledge into feature extraction, and then the neural net would do a little bit of, the last little bit of work of just mapping that into a, sort of, a linear representation and then, then learning a classifier or whatever it was. And then over the years as we’ve learned to train bigger and richer neural nets, we can allow the data to have more influence and then we can back off a little bit on some of that prior knowledge. And today, when we have models like large-scale transformers with a trillion parameters learned on vast datasets, we’re letting the data do a lot of the heavy lifting. But there always has to be some kind of assumption. So in the case of transformers, there are inductive biases related to the idea of attention. So that’s a, that’s a specific structure that we bake into the transformer, and that turns out to be very, very successful. But there’s always inductive bias somewhere.
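
As a rough illustration of the attention structure mentioned here, the following is a minimal sketch of scaled dot-product attention in plain Python. It is not taken from the book or from any particular transformer implementation: the toy `tokens` are invented, and the learned query, key, and value projections that real transformers use are omitted for brevity.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to one."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention: each output is a weighted average of the values."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt of the dimension.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs

# Hypothetical three-token sequence with two-dimensional embeddings (self-attention).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mixed = attention(tokens, tokens, tokens)
for row in mixed:
    print([round(v, 3) for v in row])
```

The built-in assumption is that each position should be represented as a data-dependent mixture of the other positions, with the mixing weights set by similarity.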

LLORENS: Yeah, and I guess with these new, you know, generative pretrained models, there’s also some inductive bias you’re imposing in the inferencing stage, just with your, with the way you prompt the system.

BISHOP: And, again, this is really interesting. The whole field of deep learning has become incredibly rich in terms of pretraining, transfer learning, the idea of prompting, zero-shot learning. The field has exploded really in the last 10 years, the last five years, not just in terms of the number of people and the scale of investment, number of startups, and so on, but, sort of, the richness of ideas and, and, and techniques like, like automatic differentiation, for example, that mean we don’t have to code up all the gradient optimization steps. It allows us to explore a tremendous variety of different architectures very easily, very readily. So it’s become just an amazingly exciting field in the last decade.
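
The technique that removes the need to hand-code gradient steps is usually called automatic differentiation. The sketch below shows the idea with a tiny forward-mode implementation based on dual numbers. The `Dual` class and the toy `neuron` function are hypothetical names made up for this illustration, and deep learning frameworks generally use reverse-mode differentiation (backpropagation) rather than the forward mode shown here.

```python
import math

class Dual:
    """A number that carries its value together with its derivative."""
    def __init__(self, value, deriv=0.0):
        self.value = value
        self.deriv = deriv

    def __add__(self, other):
        other = other if isinstance(other, Dual) else Dual(other)
        return Dual(self.value + other.value, self.deriv + other.deriv)
    __radd__ = __add__

    def __mul__(self, other):
        other = other if isinstance(other, Dual) else Dual(other)
        # Product rule applied automatically.
        return Dual(self.value * other.value,
                    self.deriv * other.value + self.value * other.deriv)
    __rmul__ = __mul__

def tanh(x):
    """tanh for Dual numbers, propagating the derivative by the chain rule."""
    t = math.tanh(x.value)
    return Dual(t, (1.0 - t * t) * x.deriv)

def derivative(f, x):
    """Evaluate df/dx at x by seeding the input derivative with 1."""
    return f(Dual(x, 1.0)).deriv

def neuron(w):
    # Hypothetical one-weight "network": only the forward computation is written.
    return tanh(3.0 * w + 0.5)

grad = derivative(neuron, 0.2)
print(f"d(neuron)/dw at w=0.2: {grad:.6f}")
```

Only the forward computation is written by hand. The derivative falls out of the arithmetic rules, which is why trying a new architecture does not mean deriving new gradient formulas.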

LLORENS: And I guess we’ve, sort of, intellectually pondered here in the first few minutes the current state of the field. But what was it like for you when you first used, you know, a state-of-the-art foundation model? What was that moment like for you?

BISHOP: Oh, I could remember it clearly. I was very fortunate because I was given, as you were, I think, a very early access to GPT-4, when it was still very secret. And I, I’ve described it as being like the, kind of, the five stages of grief. It’s a, sort of, an emotional experience actually. Like first, for me, it was, like, a, sort of, first encounter with a primitive intelligence compared to human intelligence, but nevertheless, it was … it felt like this is the first time I’ve ever engaged with an intelligence that was sort of human-like and had those first sparks of, of human-level intelligence. And I found myself going through these various stages of, first of all, thinking, no, this is, sort of, a parlor trick. This isn’t real. And then, and then it would do something or say something that would be really quite shocking and profound in terms of its … clearly it was understanding aspects of what was being discussed. And I’d had several rounds of that. And then, then the next, I think, was that real? Did I, did I imagine that? And go back and try again and, no, there really is something here. So, so clearly, we have quite a way to go before we have systems that really match the incredible capabilities of the human brain. But nevertheless, I felt that, you know, after 35 years in the field, here I was encountering the first, the first sparks, the first hints, of real machine intelligence.

LLORENS: Now let’s get into your book. I believe this is your third textbook. You contributed a text called Neural Networks for Pattern Recognition in ’95 and a second book called Pattern Recognition and Machine Learning in 2006, the latter still being on my own bookshelf. So I think I can hazard a guess here, but what inspired you to start writing this third text?

BISHOP: Well, really, it began with … actually, the story really begins with the COVID pandemic and lockdown. It was 2020. The 2006 Pattern Recognition and Machine Learning book had been very successful, widely adopted, still very widely used even though it predates the, the deep learning revolution, which of course is one of the most exciting things to happen in the field of machine learning. And so it’s long been on my list of things to do, to update the book, to bring it up to date, to include deep learning. And when the, when the pandemic lockdown arose, 2020, I found myself sort of imprisoned, effectively, at home with my family, a very, very happy prison. But I needed a project. And I thought this would be a good time to start to update the book. And my son, Hugh, had just finished his degree in computer science at Durham and was embarking on a master’s degree at Cambridge in machine learning, and we decided to do this as a joint project during, during the lockdown. And we’re having a tremendous amount of fun together. We quickly realized, though, that the field of deep learning is so, so rich and obviously so important these days that what we really needed was a new book rather than merely, you know, a few extra chapters or an update to a previous book. And so we worked on that pretty hard for nearly a couple of years or so. And then, and then the story took another twist because Hugh got a job at Wayve Technologies in London building deep learning systems for autonomous vehicles. And I started a new team in Microsoft called AI4Science. We both found ourselves extremely busy, and the whole project, kind of, got put on the back burner. And then along came GPT and ChatGPT, and that, sort of, exploded into the world’s consciousness. And we realized that if ever there was a time to finish off a textbook on deep learning, this was the moment. 
And so the last year has really been absolutely flat out getting this ready, in fact, ready in time for launch at NeurIPS this year.

LLORENS: Yeah, you know, it’s not every day you get to do something like write a textbook with your son. What was that experience like for you?

BISHOP: It was absolutely fabulous. And, and I hope it was good fun for Hugh, as well. You know, one of the nice things was that it was a, kind of, a pure collaboration. There was no divergence of agendas or any sense of competition. It was just pure collaboration. The two of us working together to try to understand things, try to work out what’s the best way to explain this, and if we couldn’t figure something out, we’d go to the whiteboard together and sketch out some maths and try to understand it together. And it was just tremendous fun. Just a real, a real pleasure, a real honor, I would say.

LLORENS: One of the motivations that you articulate in the preface of your book is to make the field of deep learning more accessible for newcomers to the field. Which makes me wonder what your sense is of how accessible machine learning actually is today compared to how it was, say, 10 years ago. On the one hand, I personally think that the underlying concepts around transformers and foundation models are actually easier to grasp than the concepts from previous eras of machine learning. Today, we also see a proliferation of helpful packages and toolkits that people can pick up and use. And on the other hand, we’ve seen an explosion in terms of the scale of compute necessary to do research at the frontiers. So net, what’s your concept of how accessible machine learning is today?

BISHOP: I think you’ve hit on some good points there. I would say the field of machine learning has really been through these three eras. The first was the focus on neural networks. The second was when, sort of, neural networks went onto the back burner. As you, you hinted there, there was a proliferation of different ideas (Gaussian processes, graphical models, kernel machines, support vector machines, and so on) and the field became very broad. There are many different concepts to, to learn. Now, in a sense, it’s narrowed. The focus really is on deep neural networks. But within that field, there has been an explosion of different architectures and different … and not only in terms of the number of architectures. Just the sheer number of papers published has, has literally exploded. And, and so it can be very daunting, very intimidating, I think, especially for somebody coming into the field afresh. And so really the value proposition of this book is to distill out the, you know, 20 or so foundational ideas and concepts that you really need to understand in order to understand the field. And the hope is that if you’ve really understood the content of the book, you’d be in pretty good shape to pretty much read any, any paper that’s published. In terms of actually using the technology in practice, yes, on the one hand, we have these wonderful packages, and automatic differentiation especially, which I mentioned before, is really quite revolutionary. And now you can, you can put things together very, very quickly, a lot of open-source code that you can quickly bolt together and assemble lots of different, lots of different things, try things out very easily. It’s true, though, that if you want to operate at the very cutting edge of large-scale machine learning, that does require resources on a very large scale. So that’s obviously less accessible. But if your goal is to understand the field of machine learning, then, then I hope the book will serve a good purpose there. 
And in one sense, the fact that the packages are so accessible and so easy to use really hides some of the inner workings, I would say, of these, of these systems. And so I think in a way, it’s almost too easy just to train up a neural network on some data without really understanding what’s going on. So, so the book is really about, if you like, the minimum set of things that you need to know about in order to understand the field, not just to, sort of, turn the crank on a package but really understand what’s going on inside.

LLORENS: One of the things I think you did not set out to do, as you just mentioned, is to create an exhaustive survey of the most recent advancements, which might have been possible, you know, a decade or so ago. How do you personally keep up with the blistering pace of research these days?

BISHOP: Ah, yes, it’s a, it’s a challenge, of course. So, so my focus these days is on AI4Science, AI for natural science. But that’s also becoming a very large field. But, you know, one of the, one of the wonderful things about being at Microsoft Research is just having fantastic colleagues with tremendous expertise. And so, a lot of what I learn is from, is from colleagues. And we’re often swapping notes on, you know, you should take a look at this paper, did you hear about this idea, and so on, and brainstorming things together. So a lot of it is, you know, just taking time each day to read papers. That’s important. But also, just conversations with, with colleagues.

LLORENS: OK, you mentioned AI4Science. I do want to get into that. I know it’s an area that you’re passionate about and one that’s become a focus for your career in this moment. And, you know, I think of our work in AI4Science as creating foundation models that are fluent not in human language but in the language of nature. And earlier in this conversation, we talked about distribution. So I want to, kind of, bring you back there. Do you think we can really model all of nature as one wildly complex statistical distribution?

BISHOP: [LAUGHS] Well, that’s, that’s really interesting. I do think I could imagine a future, maybe not too many years down the road, where scientists will engage with the tools of scientific discovery through something like a natural language model. That model will also have understanding of concepts around the structures of molecules and the nature of data, will read scientific literature, and so on, and be able to assemble these ideas together. But it may need to draw upon other kinds of tools. So whether everything will be integrated into one, one overarching tool is less clear to me because there are some aspects of scientific discovery that are being, truly being revolutionized right now by deep learning. For example, our ability to simulate the fundamental equations of nature is being transformed through deep learning, and the nature of that transformation, on the one hand, it leverages, might leverage architectures like diffusion models and large language, large language models, large transformers, and the ability to train on large GPU clusters. But the fundamental goals there are to solve differential equations at a very large scale. And so the kinds of techniques we use there are a little bit different from the ones we’d use in processing natural language, for example. So you could imagine, maybe not too many years in the future, where a scientist will have a, kind of, “super copilot” that they can interact with directly in natural language. And that copilot or system of copilots can itself draw upon various tools. They may be tools that simulate the Schrödinger equation, solve the Schrödinger equation, to predict the properties of molecules. It might call upon large-scale deep learning emulators that can do a similar thing to the simulators but very, very much more efficiently. 
It might even call upon automated labs, wet labs, that can run experiments and gather data and can help the scientist marshal these resources and make optimal decisions as they go through that iterative scientific discovery process, whether inventing a new battery electrolyte or discovering a new drug, for example.

LLORENS: We talked earlier about the “no free lunch” theorem and the concept of inductive bias. What does that look like here in training science foundation models?

BISHOP: Well, it’s really interesting, and maybe I’m a little biased because my background is in physics. I did a PhD in quantum field theory many decades ago. For me, one of the reasons that this is such an exciting field is that, you know, my own career has come full circle. I now get to combine machine learning with physics and chemistry and biology. I think the inductive bias here is, is particularly interesting. If you think about large language models, we don’t have very many, sort of, fundamental rules of language. I mean, the rules of linguistics are really human observations about the structure of language. But neural nets are very good at extracting that, that kind of structure from data. Whereas when we look at physics, we have laws which we believe hold very accurately. For example, conservation of energy or rotational invariance. The energy of a molecule in a vacuum doesn’t depend on its rotation in space, for example. And that kind of inductive bias is very rigorous. We believe that it holds exactly. And so there is … and also, very often, we want to train on data that’s obtained from simulators. So the training data itself is obtained by solving some of those fundamental equations, and that process itself is computationally expensive. So the data can often be in relatively limited supply. So you’re in a regime that’s a little bit different from the large language models. It’s a little bit more like, in a way, machine learning was, you know, 10 to 20 years ago, as you were talking about, where data, data is limited. But now we have these powerful and strong inductive biases, and so there’s, it’s a very rich field of research for how to build in those inductive biases into the machine learning models but in a way that retains computational efficiency. So I personally, actually, find this one of the most exciting frontiers not only of the natural sciences but also of machine learning.
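
The rotational invariance mentioned here can be checked numerically with a toy example. The sketch below uses a hypothetical three-atom "molecule" and a Lennard-Jones-style pair energy in arbitrary units, chosen only because it depends on interatomic distances and nothing else. It is not a model from the AI4Science work.

```python
import math

def pair_energy(positions):
    """Toy energy that depends only on interatomic distances (arbitrary units)."""
    energy = 0.0
    for i in range(len(positions)):
        for j in range(i + 1, len(positions)):
            r = math.dist(positions[i], positions[j])
            energy += 4.0 * (r ** -12 - r ** -6)
    return energy

def rotate_z(positions, angle):
    """Rotate every atom about the z axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in positions]

# Hypothetical atom coordinates, made up for illustration.
molecule = [(0.0, 0.0, 0.0), (1.1, 0.0, 0.0), (0.0, 1.2, 0.3)]

e_before = pair_energy(molecule)
e_after = pair_energy(rotate_z(molecule, 0.7))
print(f"energy before rotation: {e_before:.6f}")
print(f"energy after rotation:  {e_after:.6f}")
```

A model built from rotation-invariant quantities such as distances respects this symmetry by construction, rather than having to learn it from limited simulation data.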

LLORENS: Yeah, you know, physics and our understanding of the natural world has come so far, you know, over the last, you know, centuries and decades. And yet our understanding of physics is evolving. It’s an evolving science. And so maybe I’ll ask you somewhat provocatively if baking our current understanding of physics into these models as inductive biases is limiting in some way, perhaps limiting their ability to learn new physics?

BISHOP: It’s a great question. I think for the kinds of things that we’re particularly interested in, in Microsoft Research, in the AI4Science team, we’re very interested in things that have real-world applicability, things to do with drug discovery, materials design. And there, first of all, we do have a very good understanding of the fundamental equations, essentially Schrödinger equation and fundamental equations of physics, and those inductive biases such as energy conservation. We really do believe they hold very accurately in the domains that we’re interested in. However, there’s a lot of scientific knowledge that is, that represents approximations to that, because you can only really solve these equations exactly for very small systems. And as you start to get to larger, more complex systems, there are, as it were, laws of physics that aren’t, aren’t quite as rigorous, that are somewhat more empirically derived, where there perhaps is scope for learning new kinds of physics. And, certainly, as you get to larger systems, you get, you get emergent properties. So, so conservation of energy doesn’t get violated, but nevertheless, you can have a very interesting new emergent physics. And so it’s, from the point of view of scientific discovery, I think the field is absolutely wide open. If you look at solid-state physics, for example, and device physics, there’s a tremendous amount of exciting new research to be done over the coming decades.

LLORENS: Yeah, you alluded to this. I think maybe it’s worth just double clicking on for a moment because there is this idea of compositionality and emergent properties as you scale up, and I wonder if you could just elaborate on that a little bit.

BISHOP: Yeah, that’s a good, that’s a good, sort of, picture to have this, sort of, hierarchy of different levels in the way they interact with each other. And at the very deepest level, the level of electrons, you might even more or less directly solve Schrödinger equation or do some very good approximation to that. That quickly becomes infeasible. And as you go up this hierarchy of, effectively, length scales, you have to make more and more approximations in order to be computationally efficient or computationally even practical. But in a sense, the previous levels of the hierarchy can provide you with training data and with validation verification of what you’re doing at the next level. And so the interplay between these different hierarchies is also very, very, very interesting. So at the level of electrons, they govern forces between atoms, which governs the dynamics of atoms. But once you look at larger molecules, you perhaps can’t simulate the behavior of every electron. You have to make some approximations. And then for larger molecules still, you can’t even track the behavior of every atom. You need some sort of coarse graining and so on. And so you have this, this hierarchy of different length scales. But every single one of those length scales is being transformed by deep learning, by our ability to learn from simulations, learn from those fundamental equations, in some cases, learn also from experimental data and build emulators, effectively, systems that can simulate that particular length scale and the physical and biological properties but do so in a way that’s computationally very efficient. So every layer of this hierarchy is currently being transformed, which is just amazingly exciting.

LLORENS: You alluded to some of the application domains that stand to get disrupted by advancements in AI4Science. What are a couple of the applications that you’re most excited about?

BISHOP: There are so many, it would be impossible to list them. But let me give you a couple of domains. I mean, the first one is, is healthcare and the ability to design new molecules, whether it’s small-molecule drugs or more protein-based therapies. That, that whole field is rapidly shifting to a much more computational domain, and that should accelerate our ability to develop new therapies, new drugs. The other class of domains has more to do with materials, and there are a lot of … the applications that we’re interested in relate to sustainability, things to do with capturing CO2 from the atmosphere, creating, let’s say, electricity from hydrogen, creating hydrogen from electricity. We need to do things both ways round. Just storing heat as a form of energy storage. Many, many applications relating to sustainability to do with, to do with protecting our water supply, to do with providing green energy, to do with storing and transporting energy. Many, many applications.

LLORENS: And at the core of all those advancements is deep learning as we’ve kind of started. And so maybe as we, as we close, we can, kind of, come back to your book on deep learning. I don’t have the physical book yet, but there’s a spot on my shelf next to your last book that’s waiting for it. But as we close here, maybe you can tell folks where to look for or how to get a copy of your new book.

BISHOP: Oh, sure. It’s dead easy. You go to bishopbook.com, and from there, you’ll see how to order a hardback copy if that’s what you’d like, or there’s a PDF based e-book version. There’ll be a Kindle version, I believe. But there’s also a free-to-use online version on bishopbook.com, and it’s available there. It’s, sort of, PDF style and fully hyperlinked, free to use, and I hope people will read it, and enjoy it, and learn from it.

LLORENS: Thanks for a fascinating discussion, Chris.

BISHOP: Thanks, Ashley.

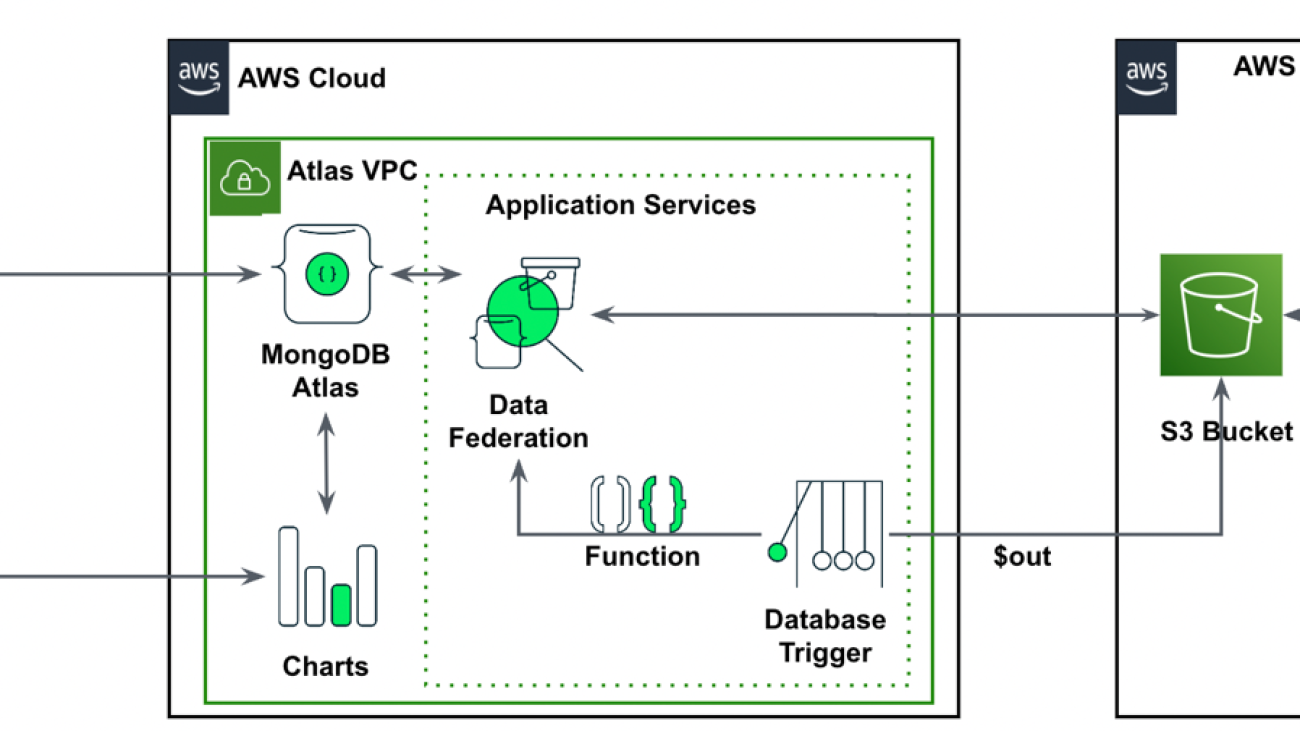

Igor Alekseev is a Senior Partner Solution Architect at AWS in the Data and Analytics domain. In his role, Igor works with strategic partners, helping them build complex, AWS-optimized architectures. Prior to joining AWS, as a Data/Solution Architect, he implemented many projects in the Big Data domain, including several data lakes in the Hadoop ecosystem. As a Data Engineer, he was involved in applying AI/ML to fraud detection and office automation.

Babu Srinivasan is a Senior Partner Solutions Architect at MongoDB. In his current role, he works with AWS to build the technical integrations and reference architectures for the AWS and MongoDB solutions. He has more than two decades of experience in database and cloud technologies. He is passionate about providing technical solutions to customers, working with multiple Global System Integrators (GSIs) across multiple geographies.